The Next Phase: Apple Lays Out Plans To Transition Macs from x86 to Apple SoCs

by Ryan Smith on June 22, 2020 7:00 PM EST

After many months of rumors and speculation, Apple confirmed this morning during their annual WWDC keynote that the company intends to transition away from using x86 processors at the heart of their Mac family of computers. Replacing the venerable ISA – and the exclusively-Intel chips that Apple has been using – will be Apple’s own Arm-based custom silicon, with the company taking their extensive experience in producing SoCs for iOS devices, and applying that to making SoCs for Macs. With the first consumer devices slated to ship by the end of this year, Apple expects to complete the transition in about two years.

Apple’s announcement that they are moving to their own SoCs for future Macs, the last (and certainly most anticipated) segment of the keynote, was very much a traditional Apple announcement. That is to say, it offered just enough information to whet developers’ (and consumers’) appetites without giving away too many details too early. So while Apple has answered some very important questions immediately, there’s also a whole lot more we don’t know at the moment, and likely won’t know until late this year when hardware finally starts shipping.

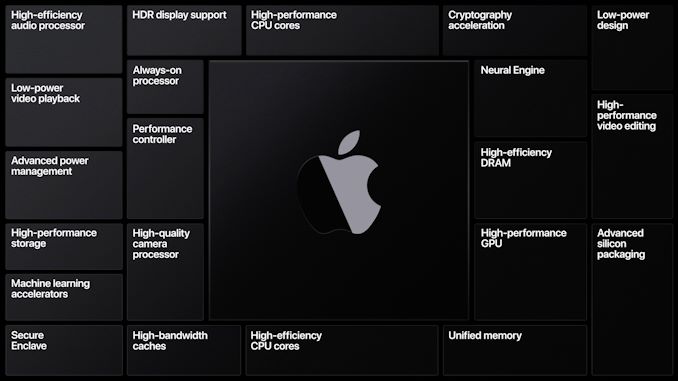

What we do know, for the moment, is that this is the ultimate power play for Apple, with the company intending to leverage the full benefits of vertical integration. This kind of top-to-bottom control over hardware and software has been a major factor in the success of the company’s iOS devices, both with regards to hard metrics like performance and soft metrics like the user experience. So given what it’s enabled Apple to do for iPhones, iPads, etc., it’s not at all surprising to see that they want to do the same thing for the Mac. Even though the OS itself isn’t changing (much), the ramifications of Apple building the underlying hardware down to the SoC means that they can have the OS make full use of any special features that Apple bakes into their A-series SoCs. Idle power, ISPs, video encode/decode blocks, and neural network inference are all subjects that are potentially on the table here.

Apple SoCs: Market Leading Performance & Efficiency

At the heart of this shift in the Mac ecosystem will be the transition to new SoCs built by Apple. Curiously, the company has carefully avoided using the word “Arm” anywhere in their announcement, but the latest macOS developer documentation makes it clear Apple is taking their future into their own hands with the Arm architecture. The company will be making a series of SoCs specifically for the Mac, and while I wouldn’t be too surprised if we see some iPad/Mac overlap, at the end of the day Apple will want SoCs more powerful than their current wares to replace the chips in their most powerful Mac desktops.

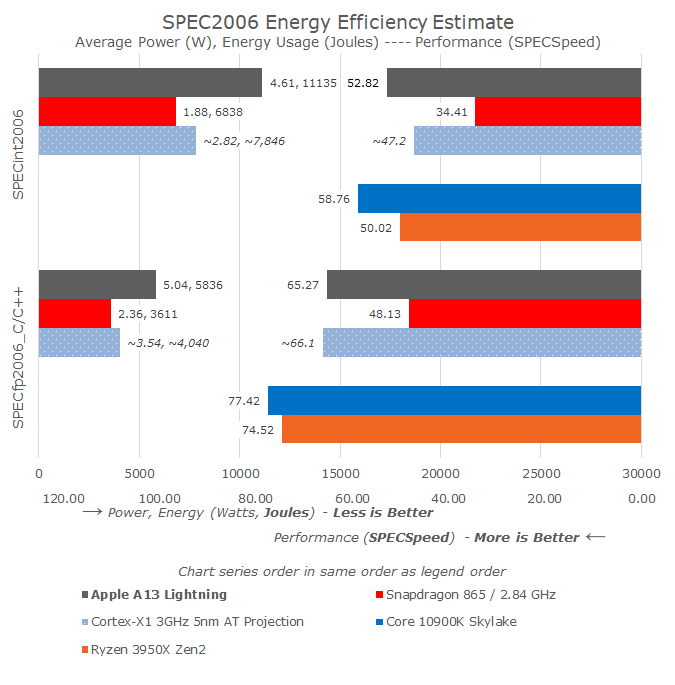

And it goes without saying that Apple’s pedigree in chip design is nothing less than top-tier at this point. The company has continued to iterate on its CPU core designs year after year, making significant progress at a time when x86 partner Intel has stalled, allowing the company’s latest Lightning cores to exceed the IPC of Intel’s architectures, while overall performance has closed in on Intel’s best desktop chips.

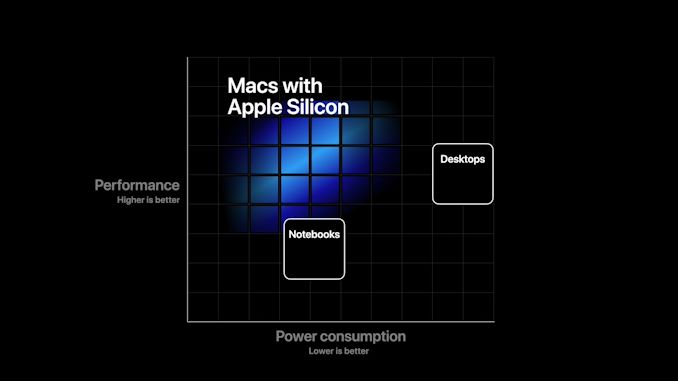

Apple’s ability to outdo Intel’s wares is by no means guaranteed, especially when it comes to replacing the likes of the massive Xeon chips in the Mac Pro, but the company is coming into this with a seasoned design team that has done some amazing things with low-power phone and tablet SoCs. Now we’re going to get a chance to see what they can do when the last of the chains come off, and they are allowed to scale up their designs to full desktop and workstation-class chips. Apple believes they can deliver better performance at lower power than the current x86 chips they use, and we’re all excited to see just what they can do.

Though from an architecture standpoint, the timing of the transition is a bit of an odd one. As noted by our own Arm guru, Andrei Frumusanu, Arm is on the precipice of announcing the Arm v9 ISA, which will bring several notable additions such as Scalable Vector Extension 2 (SVE2). So either Apple’s A14 SoCs will be among the first to implement the new ISA, or Apple will be setting the baseline for macOS-on-Arm at v8.2 and its NEON extensions fairly late in that ISA’s lifecycle. This will be something worth keeping an eye on.
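To illustrate what "scalable" means in SVE2, here is a Python simulation of a vector-length-agnostic loop. It is not real SVE code and says nothing about what Apple will ship. NEON vectors are fixed at 128 bits, whereas SVE hardware may implement any length from 128 to 2048 bits in 128-bit steps, so SVE code is written against whatever length the CPU reports. The `vl_lanes` parameter is our stand-in for that runtime value, and the per-lane predicate mimics an instruction like `WHILELT`, which switches off lanes past the end of the array.

```python
def vla_sum(values, vl_lanes):
    """Sum `values` the way a vector-length-agnostic (SVE-style) loop would.

    vl_lanes simulates the number of 32-bit lanes the CPU reports at runtime:
    4 for a 128-bit implementation, up to 64 for a 2048-bit one.
    """
    acc = [0.0] * vl_lanes            # one partial sum per vector lane
    n = len(values)
    i = 0
    while i < n:
        # Predicate: lanes that would run past the end of the array are
        # disabled, so no separate scalar tail loop is needed (cf. WHILELT).
        pred = [i + lane < n for lane in range(vl_lanes)]
        for lane in range(vl_lanes):
            if pred[lane]:
                acc[lane] += values[i + lane]
        i += vl_lanes                 # step by whatever the hardware width is
    return sum(acc)                   # horizontal reduction across lanes


# Same code, different simulated vector widths (128, 256, 512, 2048 bits).
data = [float(x) for x in range(1, 38)]
results = {vl: vla_sum(data, vl) for vl in (4, 8, 16, 64)}
```

Because the loop never hard-codes a width, it produces the same sum at 4 lanes or 64 lanes. A NEON version would bake in 4 lanes of 32 bits and typically handle leftover elements in a separate scalar loop.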

Selling x86 & Arm Side-by-Side: A Phased Transition

While for obvious reasons Apple’s messaging today is about where they want to be at the end of their two-year transition, their transition is just that: around two years long. As a result, Apple has confirmed that there will be an overlapping period where the company will be selling both x86 and Arm devices – and there will even be new x86 devices that the company has yet to launch.

In the near term, it will take Apple some time to build new devices around their in-house SoCs. So even if Apple doesn’t introduce any new device families or form factors over the next two years, the company will still need to refresh x86-based Macs with newer Intel processors to keep them current until their Arm-based successors are ready. And although Apple hasn’t offered any guidance on what devices will get replaced first, it’s as reasonable a bet as any that the earliest devices will be lower-end laptops and the like, while Apple’s pro gear such as the Mac Pro tower will be the last parts to transition, as those will require the most extensive silicon engineering.

This also means that Apple is still on the clock as far as x86 software support goes, and will continue to be so well after they complete their hardware transition. Partly as a practical measure to avoid Osborning themselves and their current x86-based systems, Apple has confirmed that they will continue supporting x86 Macs for years to come. Just how long that will be remains to be seen, of course, but as of late the company has been supporting Macs with newer OSes and OS updates for several years after their initial launch, so unless Apple accelerates the retirement of x86 Mac support, a similar window seems likely.

x86 Compatibility: Rosetta 2 & Virtualization

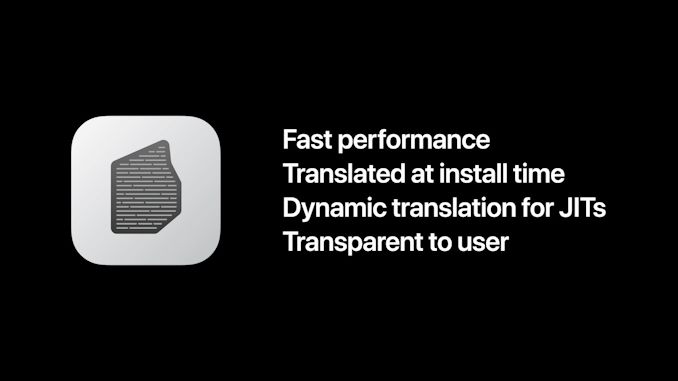

Meanwhile, in order to bridge the gap between Apple’s current software ecosystem and where they want to be in a couple of years, Apple will once again be investing in a significant software compatibility layer in order to run current x86 applications on future Arm Macs. To be sure, Apple wants developers to recompile their applications to be native – and they are investing even more into the Xcode infrastructure to do just that – but some degree of x86 compatibility is still a necessity for now.

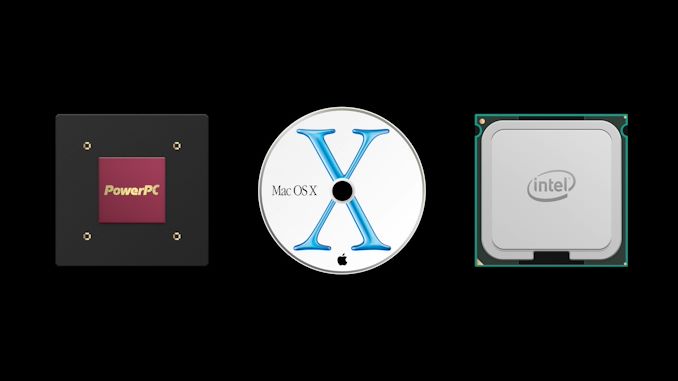

The cornerstone of this is the return of Rosetta, the PowerPC-to-x86 binary translation layer that Apple first used for the transition to x86 almost 15 years ago. Rosetta 2, as it’s called, is designed to do the same thing for x86-to-Arm, translating x86 macOS binaries so that they can run on Arm Macs.
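For developers who want to know when their code is being translated, Apple’s Rosetta documentation describes querying the `sysctl.proc_translated` key: it reads 1 for a translated process and 0 for a native one, and a lookup that fails with `ENOENT` is treated as native. The sketch below reaches that call from Python through `ctypes`. The `is_translated` name and the choice to return `None` on non-macOS systems are our own assumptions, not part of Apple’s API.

```python
import ctypes
import ctypes.util
import errno
import sys


def is_translated():
    """Return True under Rosetta 2, False if native, None if the query can't be made.

    Mirrors the sysctl.proc_translated check described in Apple's Rosetta docs.
    """
    if sys.platform != "darwin":
        return None  # the key only exists on macOS
    libc = ctypes.CDLL(ctypes.util.find_library("c"), use_errno=True)
    value = ctypes.c_int(0)
    size = ctypes.c_size_t(ctypes.sizeof(value))
    rc = libc.sysctlbyname(b"sysctl.proc_translated",
                           ctypes.byref(value), ctypes.byref(size),
                           None, ctypes.c_size_t(0))
    if rc != 0:
        # ENOENT means the key doesn't exist, which is treated as "native".
        return False if ctypes.get_errno() == errno.ENOENT else None
    return value.value == 1
```

Run inside an x86 build of Python on an Arm Mac, this should report `True`. The same script on an Intel Mac or a native Arm build should report `False`.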

Rosetta 2’s principal mode of operation will be to translate binaries at install time. I suspect that Apple is eyeing distributing pre-translated binaries via the App Store here (rather than making every Mac translate common binaries), but we’ll see what happens there. Meanwhile Rosetta 2 will also support dynamic translation, which is necessary for x86 applications that do their own Just-in-Time compiling, since the code they generate doesn’t exist until the program is already running.
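To make the two modes concrete, here is a deliberately toy model in Python. It is not a description of how Rosetta 2 works internally. "Blocks" are opaque byte strings, the `translate` callable is a hypothetical placeholder for real x86-to-Arm code generation, and the hash-keyed cache is our own assumption. Code shipped in the binary is translated once up front, while code a JIT emits later misses the cache and is translated on first use.

```python
import hashlib
from typing import Callable, Dict, Iterable


class ToyTranslator:
    """Hypothetical install-time + dynamic binary translation cache."""

    def __init__(self, translate: Callable[[bytes], str]):
        self._translate = translate          # placeholder code generator
        self._cache: Dict[str, str] = {}
        self.aot_count = 0                   # blocks translated at install time
        self.dynamic_count = 0               # blocks translated at runtime

    @staticmethod
    def _key(block: bytes) -> str:
        return hashlib.sha256(block).hexdigest()

    def install(self, blocks: Iterable[bytes]) -> None:
        # Install-time pass: all code shipped in the binary is known up front.
        for block in blocks:
            key = self._key(block)
            if key not in self._cache:
                self._cache[key] = self._translate(block)
                self.aot_count += 1

    def execute(self, block: bytes) -> str:
        # Runtime: JIT-generated code isn't in the cache yet, so translate it
        # on the spot and keep the result for the next time it runs.
        key = self._key(block)
        if key not in self._cache:
            self._cache[key] = self._translate(block)
            self.dynamic_count += 1
        return self._cache[key]


# Usage: two blocks ship in the binary; a third is "JIT-generated" at runtime.
t = ToyTranslator(lambda block: "arm:" + block.hex())  # hypothetical translator
t.install([b"\x90", b"\xc3"])
t.execute(b"\x90")              # cache hit: translated at install time
t.execute(b"\x48\x31\xc0")      # cache miss: translated dynamically
t.execute(b"\x48\x31\xc0")      # cache hit on the second run
```

The point of the model is the split in cost: install-time work is paid once, while dynamically translated code pays its translation cost while the user is waiting.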

Overall Apple is touting Rosetta 2 as offering “fast performance”, and while their brief Maya demo is certainly impressive, it remains to be seen just how well the binary translation tech works. x86 to Arm translation has been a bit of a mixed bag, judging from Qualcomm & Microsoft’s efforts, though past efforts haven’t involved the kind of high-performance chips Apple is aiming for. At the same time, however, even with the vast speed advantage of x86 chips over PPC chips, running PPC applications under the original Rosetta was functional, but not fast.

As a result, Rosetta 2 is probably best thought of as a backstop to ensure program compatibility while devs get an Arm build working, rather than an ideal means of running x86 applications in the future. This is especially true since Rosetta 2 doesn’t support high-performance x86 instructions like AVX, which means that applications with dense, performance-critical AVX code will need to fall back to slower code paths.
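The usual defense is runtime dispatch: check for AVX once, then pick either an AVX path or a portable fallback. Below is a minimal Python sketch of that pattern. It assumes the `hw.optional.avx1_0` sysctl key on macOS and the `flags` line of `/proc/cpuinfo` on Linux as feature sources. `dot_avx_style` only imitates the shape of an 8-lane AVX kernel and is not actually faster in Python; in a real application that path would be C with intrinsics.

```python
import subprocess
import sys


def has_avx() -> bool:
    """Best-effort check for AVX; returns False whenever it can't tell."""
    if sys.platform == "darwin":
        # Intel Macs report AVX support via the hw.optional.avx1_0 key.
        # Assumption: under Rosetta 2 it reads 0, and in a native Arm process
        # the key may be missing; both cases yield False here.
        try:
            result = subprocess.run(["sysctl", "-n", "hw.optional.avx1_0"],
                                    capture_output=True, text=True, check=False)
        except OSError:
            return False
        return result.stdout.strip() == "1"
    if sys.platform.startswith("linux"):
        # x86 Linux lists CPU features on the "flags" lines of /proc/cpuinfo.
        try:
            with open("/proc/cpuinfo") as f:
                for line in f:
                    if line.startswith("flags"):
                        _, _, rest = line.partition(":")
                        return "avx" in rest.split()
        except OSError:
            return False
    return False


def dot_avx_style(a, b):
    # Stand-in for a hand-written AVX kernel: accumulate 8 float32 lanes
    # (one 256-bit register) per step, then reduce across lanes.
    lanes = [0.0] * 8
    for i in range(0, len(a), 8):
        for j, (x, y) in enumerate(zip(a[i:i + 8], b[i:i + 8])):
            lanes[j] += x * y
    return sum(lanes)


def dot_scalar(a, b):
    # Portable fallback that runs anywhere, including under translation.
    return sum(x * y for x, y in zip(a, b))


# Choose once at startup; without AVX the program still works, just slower.
dot = dot_avx_style if has_avx() else dot_scalar
```

Software that checks for AVX like this simply lands on the slower path under translation. Software that assumes AVX without checking has no such fallback.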

On which note, right now it’s not clear how long Apple will offer Rosetta 2 for macOS. The original Rosetta was retired relatively quickly, as Apple has always pushed its developers to move quickly to keep up with the platform. And with a desire to have a unified architecture across all of its products, Rosetta 2 may face a similarly short lifecycle.

Meanwhile, macOS Big Sur (11.0), the launch OS for this new Mac ecosystem, will also be introducing a new binary format called Universal 2. Apple has ample experience here with fat binaries, and Universal 2 will extend that to cover Arm binaries. Truth be told, Apple already has the process down so well that I don’t expect this to be much more than packing an Arm slice into each binary alongside the existing x86 code.
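Under the hood, a universal binary is a single Mach-O file. It starts with a big-endian fat header (magic `0xCAFEBABE`, then a slice count), followed by one `fat_arch` record per architecture giving that slice’s CPU type, offset, and size. A Universal 2 binary carries an arm64 slice next to the x86_64 one. The Python sketch below reads that header from a synthetic example. It handles only the 32-bit `FAT_MAGIC` layout, not the `FAT_MAGIC_64` variant, and the function name `list_slices` is our own. On a real Mac, `lipo -info` reports the same information.

```python
import struct

FAT_MAGIC = 0xCAFEBABE                   # fat header magic, always big-endian
CPU_ARCH_ABI64 = 0x01000000
CPU_TYPE_X86_64 = 7 | CPU_ARCH_ABI64     # CPU_TYPE_X86 with the 64-bit flag
CPU_TYPE_ARM64 = 12 | CPU_ARCH_ABI64     # CPU_TYPE_ARM with the 64-bit flag
ARCH_NAMES = {CPU_TYPE_X86_64: "x86_64", CPU_TYPE_ARM64: "arm64"}


def list_slices(data: bytes):
    """Return (arch, offset, size) for each slice listed in a fat Mach-O header."""
    magic, nfat_arch = struct.unpack_from(">II", data, 0)
    if magic != FAT_MAGIC:
        raise ValueError("not a 32-bit fat Mach-O header")
    slices = []
    for i in range(nfat_arch):
        # struct fat_arch: cputype, cpusubtype, offset, size, align (20 bytes)
        cputype, _subtype, offset, size, _align = struct.unpack_from(
            ">iiIII", data, 8 + 20 * i)
        slices.append((ARCH_NAMES.get(cputype, hex(cputype)), offset, size))
    return slices


# Synthetic Universal 2 header: an x86_64 slice and an arm64 slice.
header = struct.pack(">II", FAT_MAGIC, 2)
header += struct.pack(">iiIII", CPU_TYPE_X86_64, 3, 0x4000, 1000, 12)
header += struct.pack(">iiIII", CPU_TYPE_ARM64, 0, 0x8000, 1200, 14)
slices = list_slices(header)
```

For the synthetic header, `list_slices` returns the x86_64 slice at offset 16384 and the arm64 slice at offset 32768. The loader picks the slice matching the running CPU, which is why one file can serve both kinds of Mac.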

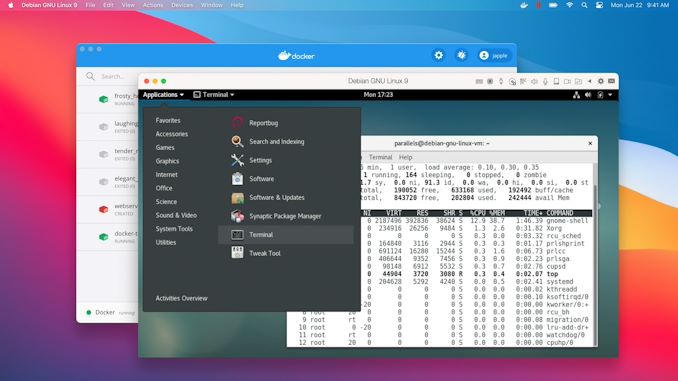

Finally, rounding out the compatibility package is an Apple-developed virtualization technology to handle things such as Linux Docker containers. Information on this feature is pretty light – the company briefly showed it off with Parallels running Linux in the keynote – so it remains to be seen just what the tech can do. At a minimum, and appropriately for a developers conference, having a solution in place for Linux and Docker is a good feature to show off, as these are critical tools for WWDC’s software developer crowd.

But it leaves unanswered some huge questions about Windows support, and whether this tech can be used to run Windows 10 the way Parallels and other virtualization software run Windows inside of macOS today. As well, Apple isn’t saying anything about Boot Camp support at this time, despite the fact that dual-booting macOS and Windows has long been a draw for Apple’s Mac machines.

Dev Kits: A12Z As A Taste of Things To Come

Finally, in order to prepare developers to launch native, Arm-compiled software later this year when the first Arm Macs ship, Apple has also put together a developer transition kit, which the company will be loaning out to registered developers. The DTK, as it’s called, was used in Apple’s keynote to demonstrate the features of macOS Big Sur. And while it’s essentially just an iPad in a Mac mini’s body, it’ll be an important step in getting developers ready with native applications by giving them actual hardware to test and optimize against.

Overall, the DTK is based on Apple’s A12Z processor, and includes 16GB of RAM as well as a 512GB SSD. I wouldn’t be the least bit surprised if the machine is clocked a bit higher than iPads, thanks to the device’s larger form factor, but in an interesting twist of fate it’s still likely to be slower than the iPhone 11 series of devices, which use the newer A13 SoC. The upside, at least, is that the A12Z sets a rather high floor for performance, while still being modest enough to encourage developers to write efficient applications. So if developers can get their applications running well on an A12Z device, then they should have no problems whatsoever running those apps on future A14-derived silicon.

And although the A12Z SoC inside the DTKs is a known quantity at this point, Apple will be keeping a tight lid on performance, as with their other beta programs. The DTK license agreement bans public benchmarking, and even though developers will pay $500 to take part in the program, the DTKs remain the property of Apple and must be returned. So while leaks will undoubtedly drip out over the coming months, it would seem that we’re not going to get the chance to do any kind of extensive, above-board performance testing of Mac-on-Arm hardware until the final consumer systems come out late this year.

Closing Thoughts

While the confluence of events that led to Apple’s decision may have removed any surprise from today’s announcement, there is no downplaying the significance of what Apple has decided to do. To go vertically integrated – developing their own chips and controlling virtually every aspect of Mac hardware and software – is a big move on its own. But the fact that Apple will be doing this while simultaneously transitioning macOS and the larger Mac software ecosystem to another Instruction Set Architecture makes it all the more monumental. A large number of things have to go right for the company to successfully make the transition, at both the hardware and the software level. Every group within the Mac division will be up to bat, as it were.

The good news is that there are few companies more experienced in these sorts of transitions than Apple, which has been a fixture of the personal computing industry since the very beginning. The move to x86 almost 15 years ago more or less created the playbook for Apple’s move to Arm over the next two years, as many of the software and hardware challenges are the same. By not tying themselves down with legacy software – and by forcing developers to keep up or perish – Apple has remained nimble enough to pull off these kinds of transitions, and to do so in a handful of years instead of a decade or longer.

Overall, I’m incredibly excited to see what Apple can do for the Mac ecosystem by supplying their own chips, as they have accomplished some amazing things with their A-series silicon thus far. However, it’s also an announcement that brings mixed feelings. Apple’s original move to x86 finally unified the desktop computing market behind a single ISA, letting a single system natively run macOS, Windows, and everything in between. But after 15 years of a software compatibility utopia, the PC market is about to become fractured once again.

241 Comments

Bp_968 - Tuesday, June 23, 2020

Even if they made a GPU that performed at 3090 levels for $100 and sipped 50 watts at full bore, they would only use it on their systems and never allow it to be used outside the Apple ecosystem. 30 years of watching these guys, they play by the old school monopoly rules. If they could own the entire supply chain and entire retail chain and entire data services chain (your phone provider) they would.

Zerrohero - Tuesday, June 23, 2020

Since you have been watching Apple for 30 years, when, in your opinion, did they become a monopoly, and in which product category or categories?

Apple is not obliged to sell or license their CPUs or GPUs to anyone. It’s not “playing by old school monopoly rules” when they don’t.

Do you really think that Apple should be obliged to supply their own chips to the likes of Huawei, presumably without profit even?

Owning the design of your core HW components and having your own OS etc. is not a crime either.

But of course you know all this.

(The bitterness towards Apple from people who have never owned, and never will own, a single Apple product just never ceases to amaze me. None of this affects your life in any way whatsoever.)

starcrusade - Tuesday, June 23, 2020

Definition of Monopoly: Exclusive possession or control of something.

So yes, they are a monopoly.

Monopolies are not inherently illegal (in the US). Abuse of a monopoly is. If, for example, Apple said that any apps sold in their App Store cannot be sold anywhere else (i.e. Google Play), that would be illegal abuse of a monopoly. Just ask Amazon, that's exactly what they did with their Kindle store and they lost. Amazon still has a virtual monopoly on the Ebook market.

liquid_c - Tuesday, June 23, 2020

That's not a monopoly, that's exclusivity, and while they might be similar in a few aspects, they're not the same thing. And to return to your example, it's up to the app developers to choose if they want to sell on Apple-only platforms or ditch Apple and sell their apps on other platforms. Considering that the developers still have the freedom of choice regarding their apps, this is not a monopoly.

RedGreenBlue - Wednesday, June 24, 2020

It’s called vertical integration. People calling Apple a monopoly don’t call Ford or GM monopolies for designing and making their own engines and car parts. Apple has a monopoly on doing well all the things needed for a phone or computer to work best, but anybody else in the industry has the opportunity to do the same. What are you gonna do, demand they stop making better products so someone can catch up?

You’re also neglecting the fact that they ditched Intel to do this. Intel's chips alone made up about 25% of the cost of a cheap MacBook, and sometimes probably as much as 40% of the bill of materials. Apple can make a competitive chip for 25-50 dollars, as opposed to over $250. Intel was the more monopolistic company in this relationship. Apple could drop Mac prices by $100 and still make $100 more profit.

They should have done this years ago. The benefits were incredibly obvious since 2016 at the latest.

lucam - Tuesday, June 23, 2020

Nope, likely to be Imagination...

psychobriggsy - Tuesday, June 23, 2020

Undoubtedly the end result will be that AMD GPUs are out of Apple.

But integrated graphics, even on 5nm, can only do so much. I presume the A14Z on 5nm will have double the GPU of the A12Z at least, so the silicon will be very powerful - but it won't be >5 TFLOPS powerful.

So I guess AMD GPUs in laptops are dead by next year, but AMD GPUs in iMac and Mac Pro might last a couple more years - it depends on whether or not Apple are going to create their own discrete GPU line using their IP or not.

Fulljack - Tuesday, June 23, 2020

Well, for the next year or so, I don't think Apple's iGPU will replace laptop dGPUs just yet. It'll be a beast in its range, no doubt, but there's only so much you can do with limited power draw. The MBP 16 refresh with an Apple Arm CPU will surely still have an AMD dGPU option.

caribbeanblue - Sunday, October 30, 2022

Welp, now that they've made a 10.4tflop integrated GPU, and their 13.7tflop laptop iGPU is coming this November, what do you think?

If the top-end Mac Pro chip ends up being 4x M2 Max chips as rumored, that'll have 54.7 fp32 tflops, about as much as the 4x Radeon Pro VII GPUs that the current Mac Pro has.

defferoo - Tuesday, June 23, 2020

Apple may even create a dedicated GPU die with a high bandwidth connection to their SoC so that they can create products with a powerful GPU in addition to products using their integrated GPU.

I'm not sure how much they would get out of doing that vs. just using AMD dedicated GPUs, so it's still an open question.