Arm's New Cortex-A78 and Cortex-X1 Microarchitectures: An Efficiency and Performance Divergence

by Andrei Frumusanu on May 26, 2020 9:00 AM EST - Posted in

- SoCs

- CPUs

- Arm

- Smartphones

- Mobile

- GPUs

- Cortex

- Cortex A78

- Cortex X1

- Mali G78

The Cortex-X1 Micro-architecture: Bigger, Fatter, More Performance

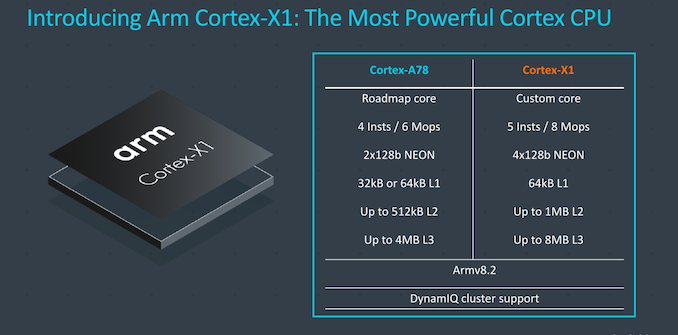

While the Cortex-A78 seems relatively tame in its performance goals, today’s biggest announcement is the far more aggressive Cortex-X1. As already noted, Cortex-X1 is a significant departure from Arm's usual "balanced" design philosophy, with Arm designing a core that favors absolute performance, even if it comes at the cost of energy efficiency and space efficiency.

At a high level, the design could be summed up as an ultra-charged A78: it maintains the same functional principles but significantly enlarges the core's structures in order to maximize performance.

Compared to an A78, it’s a wider core, going from a 4-wide to a 5-wide decoder and increasing the renaming bandwidth to up to 8 Mops/cycle. It also substantially reworks some of the pipelines and caches, doubling the NEON units and doubling the L2 and L3 caches.
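
For reference, the headline A78-to-X1 changes quoted in this article can be tabulated and turned into relative increases with a few lines of Python. This is only a bookkeeping sketch: the figures are the ones stated in the text, the "1.5K" and "3K" Mop-cache entries are assumed to mean 1536 and 3072, and `SPECS` and `pct_increase` are illustrative names.

```python
# Headline Cortex-A78 vs Cortex-X1 figures as quoted in the article.
# Assumption: "1.5K"/"3K" Mop-cache entries mean 1536/3072.
SPECS = {
    # metric: (Cortex-A78, Cortex-X1)
    "decode width (instr/cycle)": (4, 5),
    "rename width (Mops/cycle)": (6, 8),
    "Mop cache entries": (1536, 3072),
    "L0 BTB entries": (64, 96),
    "OoO window entries": (160, 224),
    "128b FP/NEON pipelines": (2, 4),
    "max L2 size (KB)": (512, 1024),
    "L3 size in DSU (MB)": (4, 8),
}


def pct_increase(old: float, new: float) -> float:
    """Relative growth of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


if __name__ == "__main__":
    for metric, (a78, x1) in SPECS.items():
        print(f"{metric:<28} {a78:>5} -> {x1:>5}  (+{pct_increase(a78, x1):.0f}%)")
```

The output makes the article's point visible at a glance: the front-end and rename widths grow by a quarter to a third, while the caches, Mop cache and SIMD resources double.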

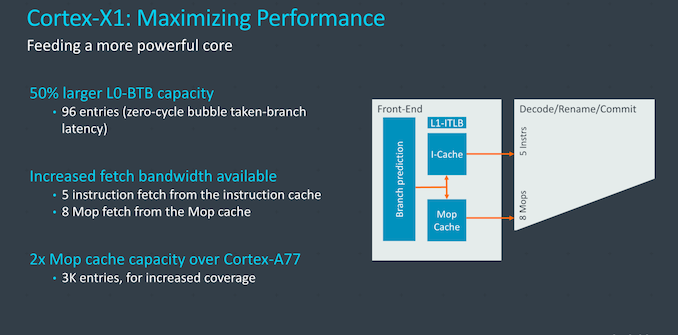

On the front-end (and this applies to the rest of the core as well), the Cortex-X1 adopts all the improvements that we’ve already covered on the Cortex-A78, including the new branch units. On top of the changes the A78 introduced, the X1 grows some of these blocks further. The L0 BTB has been upgraded from 64 entries on the Cortex-A77 and A78 to up to 96 entries on the X1, allowing for more zero-latency taken branches. The branch target buffers remain a two-tier hierarchy of L0 and L2 BTBs, which Arm in previous disclosures referred to as the nanoBTB and mainBTB. The microBTB (L1 BTB) was present in the A76 but was subsequently discontinued.
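
Why 32 extra L0 entries can matter is easiest to see with a toy model of the two-tier hierarchy. The sketch below assumes fully associative LRU tables (real BTBs are set-associative), and its mainBTB size and bubble counts are hypothetical placeholders; only the "L0 hit means zero-latency taken branch" behaviour comes from the article.

```python
from collections import OrderedDict
from typing import List, Optional


class LRUTable:
    """Fully associative table with LRU replacement (a simplification)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._entries: "OrderedDict[int, int]" = OrderedDict()

    def lookup(self, pc: int) -> Optional[int]:
        if pc not in self._entries:
            return None
        self._entries.move_to_end(pc)  # mark as most recently used
        return self._entries[pc]

    def insert(self, pc: int, target: int) -> None:
        self._entries[pc] = target
        self._entries.move_to_end(pc)
        if len(self._entries) > self.capacity:
            self._entries.popitem(last=False)  # evict least recently used


class TwoLevelBTB:
    """Toy L0 (nanoBTB) + L2 (mainBTB) hierarchy for taken branches."""

    L0_HIT_BUBBLES = 0  # article: L0 hits are zero-latency taken branches
    L2_HIT_BUBBLES = 1  # HYPOTHETICAL penalty, not disclosed by Arm
    MISS_BUBBLES = 3    # HYPOTHETICAL penalty, not disclosed by Arm

    def __init__(self, l0_entries: int, l2_entries: int = 8192) -> None:
        # l2_entries is a HYPOTHETICAL placeholder size.
        self.l0 = LRUTable(l0_entries)
        self.l2 = LRUTable(l2_entries)

    def predict(self, pc: int, target: int) -> int:
        """Return fetch bubbles for a taken branch at `pc`, then train the tables."""
        if self.l0.lookup(pc) is not None:
            return self.L0_HIT_BUBBLES
        if self.l2.lookup(pc) is not None:
            self.l0.insert(pc, target)  # promote into the L0
            return self.L2_HIT_BUBBLES
        self.l0.insert(pc, target)
        self.l2.insert(pc, target)
        return self.MISS_BUBBLES


def total_bubbles(btb: TwoLevelBTB, branch_pcs: List[int], iterations: int) -> int:
    """Run a loop body containing `branch_pcs` taken branches `iterations` times."""
    return sum(
        btb.predict(pc, pc + 0x40) for _ in range(iterations) for pc in branch_pcs
    )


if __name__ == "__main__":
    hot_loop = [0x1000 + 4 * i for i in range(80)]  # 80 distinct taken branches
    print("64-entry L0:", total_bubbles(TwoLevelBTB(64), hot_loop, 10))
    print("96-entry L0:", total_bubbles(TwoLevelBTB(96), hot_loop, 10))
```

With 80 hot taken branches, the 96-entry L0 serves every branch with zero bubbles after the first pass, while the 64-entry L0 thrashes under cyclic LRU access and falls back to the mainBTB every time. The absolute totals depend entirely on the made-up penalties.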

The macro-op cache has been outright doubled from 1.5K entries to 3K entries, making it one of the bigger structures of its kind among the publicly disclosed microarchitectures out there: bigger than even Sunny Cove’s 2.25K entries, but shy of Zen2’s 4K-entry structure. We do have to make the distinction that Arm talks about macro-op caches while Intel and AMD talk about micro-op caches, so the entry counts aren't strictly like for like.

The fetch bandwidth out of the L1I has been bumped up 25%, from 4 to 5 instructions per cycle, with a corresponding increase in decoder bandwidth, and the fetch and rename bandwidth out of the Mop cache has seen a 33% increase, from 6 to 8 instructions per cycle. In effect, the core can act as an 8-wide machine as long as it’s hitting the Mop cache.
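
How close the X1 gets to "8-wide" depends on how often it hits the Mop cache. The sketch below assumes an idealized front-end in which a fraction of instructions come from the Mop cache at the full Mop-cache width and the rest come through the decoders at the decode width, ignoring path-switch penalties, branch bubbles and back-end stalls; `effective_frontend_width` is an illustrative name.

```python
def effective_frontend_width(mop_hit_fraction: float,
                             mop_width: int = 8,
                             decode_width: int = 5) -> float:
    """Average instructions delivered per cycle when `mop_hit_fraction` of the
    instructions come from the Mop cache and the rest through the decoders.

    Idealized: ignores switch penalties, branch bubbles and back-end stalls.
    Defaults are the Cortex-X1 widths quoted in the article.
    """
    if not 0.0 <= mop_hit_fraction <= 1.0:
        raise ValueError("mop_hit_fraction must be in [0, 1]")
    # Cycles spent per instruction on each path, weighted by the share of
    # instructions taking that path; throughput is the reciprocal.
    cycles_per_instr = (mop_hit_fraction / mop_width
                        + (1.0 - mop_hit_fraction) / decode_width)
    return 1.0 / cycles_per_instr


if __name__ == "__main__":
    for hit in (0.0, 0.5, 0.9, 1.0):
        x1 = effective_frontend_width(hit)
        a78 = effective_frontend_width(hit, mop_width=6, decode_width=4)
        print(f"hit={hit:.1f}  A78={a78:.2f}  X1={x1:.2f} instr/cycle")
```

The hit fraction is weighted per instruction rather than per cycle, which is why the result is a harmonic rather than a linear blend of the two widths: at a 50% hit rate the X1 delivers about 6.15 instructions per cycle, not 6.5.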

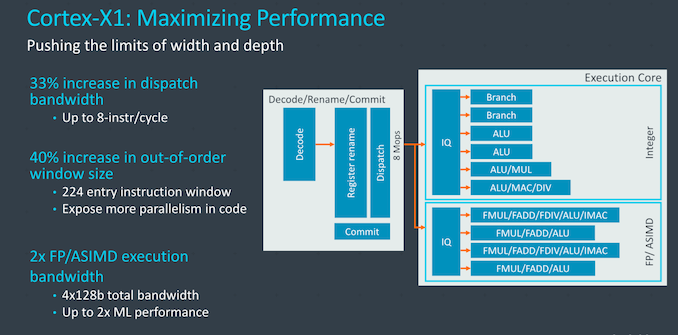

On the mid-core, Arm again talks about dispatch bandwidth in terms of Mops or instructions per cycle, which increases by 33%, from 6 to 8, when comparing the X1 to the A78. In µop terms, the core can dispatch up to 16 µops per cycle when Mops are fully cracked into smaller µops, a 60% increase compared to the 10 µops/cycle the A77 was able to achieve.

The out-of-order window size has been increased from 160 to 224 entries, improving the core's ability to extract ILP. Arm had always been hesitant to grow this structure, as the company had noted that performance doesn’t scale nearly linearly with structure size, while the bigger structure costs power and area. The X1 can make those compromises because it doesn’t have to target as wide a range of vendor implementations.
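
One way to reason about the bigger window is Little's law: sustained throughput equals average occupancy divided by average residency time. The sketch below is an idealization that assumes one window entry per dispatched op and no other stalls; the 40-cycle average residency in the usage note is a hypothetical figure, and the function names are illustrative.

```python
def full_rate_dispatch_cycles(window_entries: int, dispatch_width: int) -> float:
    """Cycles the core can keep dispatching at full width behind a stalled
    instruction at the head of the window before the window fills.

    Idealized: one window entry per dispatched op, no other stalls.
    """
    return window_entries / dispatch_width


def littles_law_ipc(window_entries: int, avg_cycles_in_window: float) -> float:
    """Little's law: sustained throughput = occupancy / average residency."""
    return window_entries / avg_cycles_in_window


if __name__ == "__main__":
    print("A78:", round(full_rate_dispatch_cycles(160, 6), 1), "cycles")
    print("X1: ", full_rate_dispatch_cycles(224, 8), "cycles")
    print("IPC bound at 40-cycle residency:",
          littles_law_ipc(160, 40), "vs", littles_law_ipc(224, 40))
```

Because dispatch width grew by a third alongside the window, the X1's window covers roughly the same number of full-rate dispatch cycles as the A78's (28 vs about 26.7). In this model the extra entries mostly serve to keep the wider machine fed: at the same hypothetical residency, the IPC ceiling rises from 4.0 to 5.6.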

On the execution side, the integer pipelines are unchanged compared to the A78, but the floating-point and NEON pipelines diverge significantly from past microarchitectures thanks to a doubling of the pipelines. Doubling here can be taken literally: the two existing pipelines of the A77 and A78 are essentially copy-pasted again, and the two pairs of units are identical in their capabilities. That’s a huge increase in execution resources.

In effect, the Cortex-X1 is now a 4x128b SIMD machine, essentially equal in vector execution width to some desktop cores such as Intel’s Sunny Cove or AMD’s Zen2. Unlike those designs, though, Arm's current ISA doesn't allow individual vectors to be larger than 128b, which is something to be addressed in a next-generation core.
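
The practical meaning of "4x128b" can be expressed as peak floating-point operations per cycle. This sketch assumes that every one of the 128-bit pipes can start a fused multiply-add each cycle and counts an FMA as two FLOPs. That per-pipe FMA capability is an assumption here; the article only states that the X1's pipes are identical copies of the A78's two.

```python
def peak_flops_per_cycle(pipes: int, vector_bits: int, element_bits: int,
                         fma: bool = True) -> int:
    """Peak floating-point operations per cycle for `pipes` identical SIMD pipes.

    Assumes every pipe can start one `vector_bits`-wide operation per cycle;
    a fused multiply-add counts as two FLOPs.
    """
    lanes = vector_bits // element_bits
    return pipes * lanes * (2 if fma else 1)


if __name__ == "__main__":
    for core, pipes in (("Cortex-A78", 2), ("Cortex-X1", 4)):
        print(core,
              "FP32:", peak_flops_per_cycle(pipes, 128, 32),
              "FP64:", peak_flops_per_cycle(pipes, 128, 64))
```

Under these assumptions the X1 reaches 32 FP32 (16 FP64) FLOPs per cycle versus 16 (8) on the A78. Multiplying by a clock frequency gives a peak GFLOPS figure, which real code will only approach with well-vectorized, cache-resident data.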

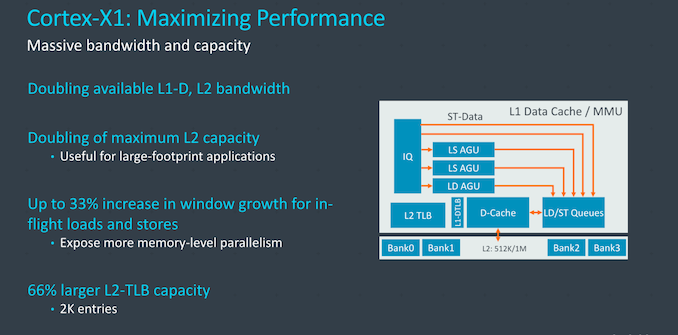

On the memory subsystem side, the Cortex-X1 also sees some significant changes – although the AGU setup is the same as that found on the Cortex-A78.

For the L1D and L2 caches, Arm has created new designs that differ in their access bandwidth. The interfaces to the caches aren’t wider; what has changed is the cache designs themselves, which now implement double the number of memory banks. This addresses possible bank conflicts when multiple concurrent accesses hit the caches. It’s something we may have observed as odd “zig-zag” patterns in our memory tests of the Cortex-A76 cores a few years back, and it is still present in some variations of that µarch.
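
A simple model shows why more banks reduce conflicts without widening the interface. In this sketch each bank serves one access per cycle, and the bank index is taken from the address bits just above a fixed bank width. Both the bank-selection function and the 16-byte bank width are hypothetical, since Arm has not disclosed the X1's banking scheme.

```python
from collections import Counter
from typing import Iterable


def extra_bank_cycles(addresses: Iterable[int], num_banks: int,
                      bank_width_bytes: int = 16) -> int:
    """Extra cycles needed to service a group of same-cycle accesses, assuming
    each bank handles one access per cycle and
    bank = (addr // bank_width_bytes) % num_banks.

    The bank-selection function and bank width are HYPOTHETICAL.
    """
    per_bank = Counter((addr // bank_width_bytes) % num_banks for addr in addresses)
    return max(per_bank.values(), default=1) - 1


if __name__ == "__main__":
    strided = [0, 64, 128, 192]   # four accesses, 64-byte stride
    sequential = [0, 16, 32, 48]  # four accesses, 16-byte stride
    for banks in (4, 8):
        print(banks, "banks: strided", extra_bank_cycles(strided, banks),
              "| sequential", extra_bank_cycles(sequential, banks))
```

With four banks, the 64-byte stride sends all four accesses to the same bank and costs three extra cycles; doubling to eight banks spreads them across two banks and cuts that to one. Sequential accesses hit distinct banks in both cases, which is why conflicts tend to show up only for particular strides.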

The L1I and L1D caches on the X1 are meant to be configured at 64KB. For the L2, because it’s a brand-new design, Arm also took the opportunity to increase the maximum cache size, which now doubles to 1MB. Again, this isn’t the same 1MB L2 cache design that we first saw on the Neoverse-N1, but a new implementation. Its access latency is 10 cycles regardless of the configured size, 1 cycle better than the 11-cycle variant of the N1.
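
The value of shaving one cycle off the L2 can be framed with the textbook average memory access time (AMAT) formula. In this sketch only the 10- and 11-cycle L2 latencies come from the article. The 4-cycle L1 latency, the local miss rates and the flat 40-cycle cost beyond the L2 are hypothetical values chosen for illustration.

```python
def amat(l1_latency: float, l1_miss_rate: float,
         l2_latency: float, l2_miss_rate: float,
         beyond_l2_latency: float) -> float:
    """Average memory access time in cycles: an L1, an L2, and a flat cost
    for everything beyond the L2. Miss rates are local (per level)."""
    return l1_latency + l1_miss_rate * (l2_latency + l2_miss_rate * beyond_l2_latency)


if __name__ == "__main__":
    # Hypothetical workload: 5% L1 misses, 20% of those also miss the L2.
    print("11-cycle L2:", amat(4, 0.05, 11, 0.2, 40))
    print("10-cycle L2:", amat(4, 0.05, 10, 0.2, 40))
```

Each cycle removed from the L2 saves `l1_miss_rate` cycles of AMAT, here 0.05 cycles per access (4.95 down to 4.9). The benefit grows with workloads that miss the L1 more often.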

The memory subsystem also increases its capability to handle in-flight loads and stores, growing this window by 33% and adding further to the MLP ability of the core. Note that this increase refers not merely to the load and store buffers, but to the whole system’s capabilities for tracking and servicing requests.
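
Little's law also explains why tracking more outstanding requests matters: when a core is latency-bound, sustained bandwidth equals the bytes in flight divided by the latency. Arm has not disclosed absolute request counts, so the 24 and 32 outstanding misses below are hypothetical values chosen only to show a 33% step. The 64-byte line size and 100 ns latency are assumptions too.

```python
def mlp_bandwidth_gb_s(outstanding_misses: int, line_bytes: int,
                       latency_ns: float) -> float:
    """Little's law for memory: sustained bandwidth = bytes in flight / latency.

    Bytes per nanosecond equals gigabytes per second (10**9 bytes/s).
    """
    return outstanding_misses * line_bytes / latency_ns


if __name__ == "__main__":
    before = mlp_bandwidth_gb_s(24, 64, 100.0)  # hypothetical baseline
    after = mlp_bandwidth_gb_s(32, 64, 100.0)   # 33% more requests in flight
    print(f"{before:.2f} GB/s -> {after:.2f} GB/s")
```

At a fixed latency, the achievable bandwidth scales linearly with the number of requests the core can track, so a 33% larger window translates directly into up to 33% more latency-bound bandwidth (15.36 to 20.48 GB/s in this example).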

Finally, the L2 TLB has also doubled in size compared to the A78 (a 66% increase vs the A77) to 2K entries, covering up to 8MB of memory with 4K pages, which makes it a good fit for the envisioned 8MB L3 cache of target X1 implementations.
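
The "8MB at 4K pages" figure is simply entries multiplied by page size. This sketch assumes each entry maps exactly one page and ignores larger pages and contiguous-hint entries. The A78's 1K entries are inferred from the text's "doubling", and the names are illustrative.

```python
KIB = 1024
MIB = 1024 * KIB


def tlb_reach_bytes(entries: int, page_bytes: int = 4 * KIB) -> int:
    """Memory covered when every TLB entry maps one page of `page_bytes`."""
    return entries * page_bytes


if __name__ == "__main__":
    print("A78, 1K entries @ 4K pages:", tlb_reach_bytes(1024) // MIB, "MB")
    print("X1,  2K entries @ 4K pages:", tlb_reach_bytes(2048) // MIB, "MB")
```

The X1's 2048 entries times 4KB pages give exactly 8MB, matching the largest L3 size, so a working set that fits in the L3 can in principle also be fully covered by the L2 TLB.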

The doubling of the L3 cache in the DSU doesn’t necessarily mean a slower implementation: the latency can stay the same, although depending on partner implementations it can mean a few extra cycles. What this most likely refers to is the option of banking the L3 with separate power management. To date, I haven’t heard of any vendors using this feature of the DSU, as most implementers such as Qualcomm have always kept the 4MB L3 fully powered on all the time. With an 8MB DSU, some vendors might look into managing its power better, for example keeping it only partially powered on while only the little cores are active.

Overall, what’s clear about the Cortex-X1 microarchitecture is that it largely consists of the same fundamental building blocks as the Cortex-A78, just with bigger and more numerous structures. It’s particularly in the front-end and the mid-core where the X1 really supersizes things compared to the A78, being a much wider microarchitecture at heart. The arguments about low return on investment for some structures simply don’t apply to the X1, and Arm went for the biggest configurations that were feasible and reasonable, even if that grows the size of the core and increases power consumption.

I think the only real design constraint the company set itself here is the frequency capability of the X1. It’s still a very short pipeline design, with a 10-cycle branch mispredict penalty and a 13-stage-deep pipeline. This remains the same between the A78 and X1, so the latter’s bigger structures and wider design don't handicap the core's peak frequencies.
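
The benefit of a short mispredict penalty for a wide core can be sketched with a simple additive CPI model: every mispredict costs the full penalty, with no overlap with other stalls. The 10-cycle penalty is from the article. The base CPI of 0.25 (an IPC of 4) and the rate of 5 mispredicts per thousand instructions are hypothetical, and the function names are illustrative.

```python
def mispredict_cpi(mispredicts_per_kilo_instr: float, penalty_cycles: int = 10) -> float:
    """Cycles per instruction lost to branch mispredictions (additive model)."""
    return mispredicts_per_kilo_instr * penalty_cycles / 1000.0


def ipc_with_mispredicts(base_cpi: float, mispredicts_per_kilo_instr: float,
                         penalty_cycles: int = 10) -> float:
    """IPC after adding the mispredict cost to a mispredict-free base CPI."""
    return 1.0 / (base_cpi + mispredict_cpi(mispredicts_per_kilo_instr, penalty_cycles))


if __name__ == "__main__":
    for penalty in (10, 20):  # 20 is a hypothetical deeper pipeline
        print(f"{penalty}-cycle penalty: IPC = {ipc_with_mispredicts(0.25, 5.0, penalty):.2f}")
```

With these inputs the 10-cycle penalty drops a 4-IPC core to about 3.33 IPC, while a hypothetical 20-cycle penalty would drop it to about 2.86. The wider the core, the larger the share of its CPI that each mispredict cycle represents.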

192 Comments


Kurosaki - Thursday, June 25, 2020

It's the future!

MarcGP - Tuesday, May 26, 2020

You don't make any sense. You say at the same time:

1) It's not a problem of the SOCs performance but of the poor emulation

2) The emulation software is not the issue but the weak SOCs

Make up your mind, it's one or the other, but it can't be both.

armchair_architect - Tuesday, May 26, 2020

This looks pretty exciting! What if vendors go for 2+4 configs (like Apple) with 2 X1 + 4 A78?

Apple has shown that this configuration is really good!

That would be a killer combination, as the littles are practically useless in real scenarios, and a slow implementation of an A78 could very well cover the low part of the cluster DVFS curve, for idle.

A55 is super old and doesn't offer any useful performance; I suspect they are only there for the low-power scenarios.

But again, Apple has shown that its out-of-order little cores can be super efficient when implemented at low frequency (I think they run at something like 1.7GHz peak frequency).

I didn't read much information on X1 power, but yes, it will for sure be less power efficient than A78 when both of them are running flat out at 3GHz. But I highly suspect that (like A78 vs A77), across the whole DVFS curve, X1 can be lower power than A78 in the performance regions in which they overlap.

It is simply a matter of being wider and slower, this makes you more efficient. That is the Apple way, wide and slow.

Take the example of an iso-performance metric: X1 will need much lower frequencies and voltages to reach a middle-of-the-road performance point (something like ~35 SpecInt, given that the projection is 47 SpecInt flat-out). This could easily offset the intrinsic iso-frequency power deficit that the X1 brings.

spaceship9876 - Tuesday, May 26, 2020

I'm surprised they are still using Arm v8.2, which was released in Jan 2016.

Kamen Rider Blade - Tuesday, May 26, 2020

I concur. In September 2019, ARMv8.6-A was introduced.

https://en.wikipedia.org/wiki/ARM_architecture#ARM...

There's also these new instructions to add in the future:

In May 2019, ARM announced their upcoming Scalable Vector Extension 2 (SVE2) and Transactional Memory Extension (TME)

eastcoast_pete - Tuesday, May 26, 2020

I might be mistaken, but aren't those wide SVEs a co-development with Fujitsu? ARM might simply not have a blanket license to use that jointly developed tech. I am rooting for wider availability of those for my own reasons (video encoding runs much faster if it can use wide extensions). And there is no such thing as too much oomph for working with videos.

SarahKerrigan - Tuesday, May 26, 2020

It's just taking a while for SVE to get in. Future ARM Ltd cores are likely to have it; future server chips from Hisilicon are roadmapped to as well (although at this point, all bets are off due to the ongoing difficulties between the American state and Huawei).

GC2:CS - Tuesday, May 26, 2020

So Apple is finally defeated now? A11 was 25%, A12 15%, and A13 just 20% faster than last gen. So A10 is still quite competitive today.

This is a higher gain than Apple's for the third year in a row.

The question is, how much of that is won by going to the 5nm process ? I heard it is quite advanced compared to 7nm.

tipoo - Tuesday, May 26, 2020

30% faster than the A77 will bring X1 closer to, but probably still under, the A13. And A14 will be out by then. Wouldn't call Apple defeated yet; it's easier to make larger gains when you were at half their per-core performance a few years ago...

MarcGP - Wednesday, May 27, 2020

30% faster in IPC gains alone (iso-process and frequency). The X1 SOCs will be much faster than that 30% over the current A77 SOCs, much closer to 50% than 30%.