The Intel Comet Lake Core i9-10900K, i7-10700K, i5-10600K CPU Review: Skylake We Go Again

by Dr. Ian Cutress on May 20, 2020 9:00 AM EST - Posted in: CPUs, Intel, Skylake, 14nm, Z490, 10th Gen Core, Comet Lake

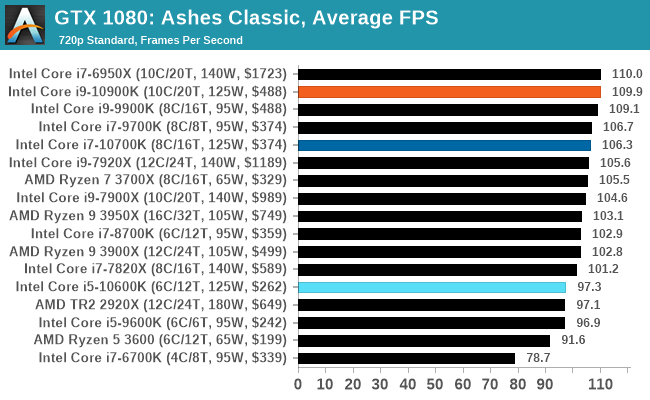

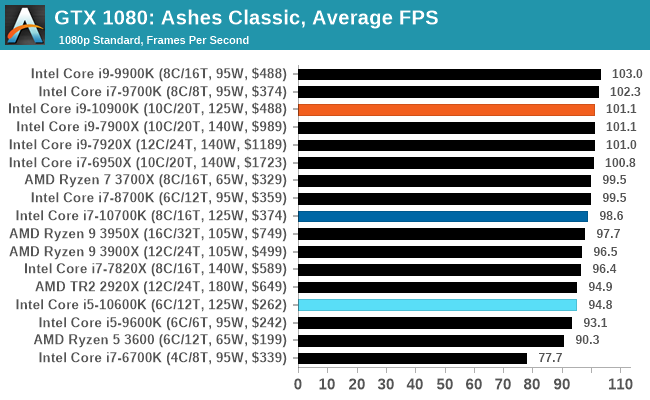

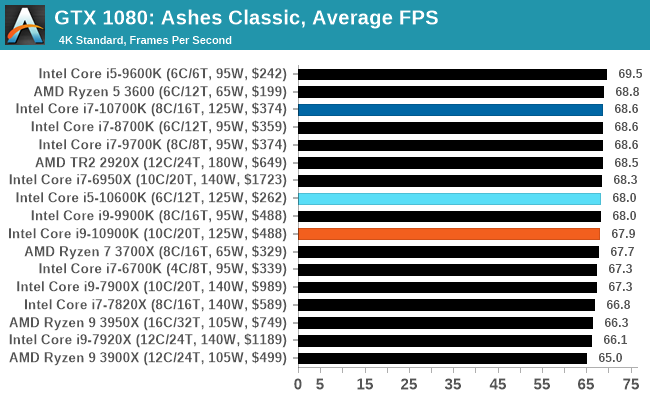

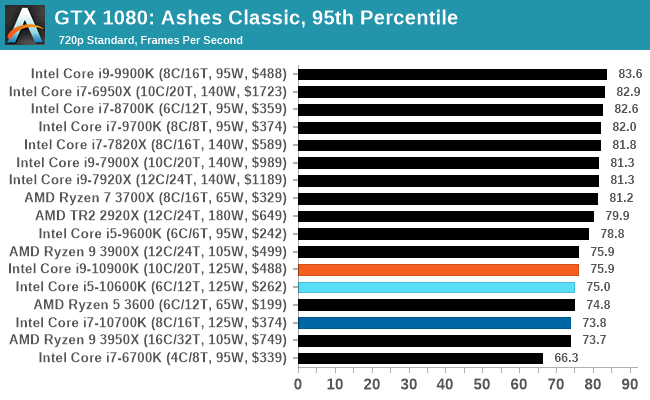

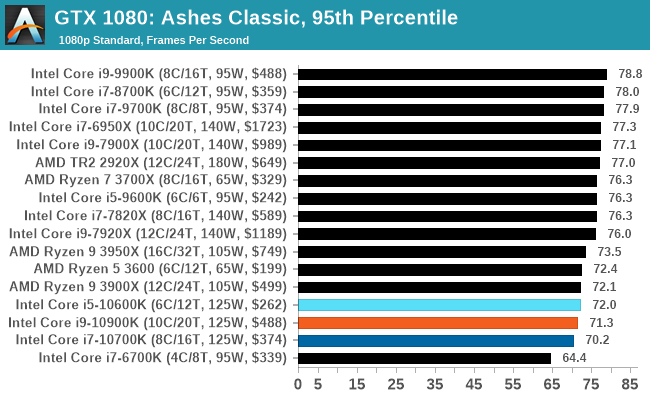

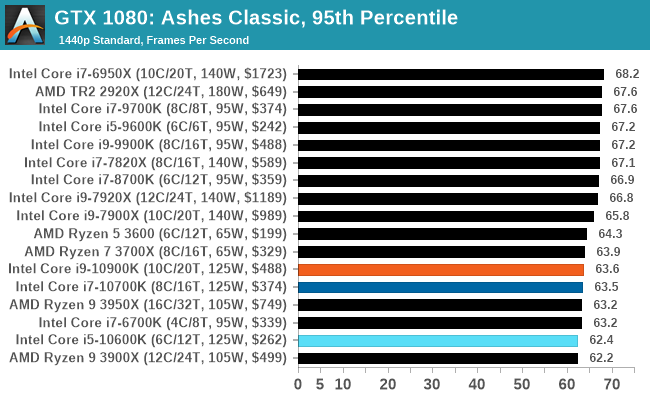

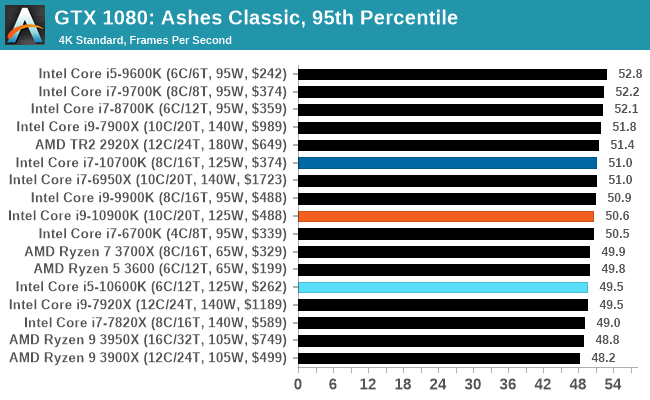

Gaming: Ashes Classic (DX12)

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many DirectX12 features as it possibly could. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to work with substantial unit depth and effects. Other RTS titles had to rely on combined draw calls to achieve this, which made some combined unit structures very rigid.

Stardock clearly understands the importance of an in-game benchmark, and ensured that such a tool was available and capable from day one. This was especially important for the developer given all the additional DX12 features in use and the need to characterize how they affected the title. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run Ashes Classic: an older version of the game from before the Escalation update. This version is easier to automate, as it has no splash screen, but still has strong visual fidelity to test.

Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at the above settings, and take the frame-time output for our average and percentile numbers.
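As a rough illustration of how average and percentile numbers can be derived from frame-time output, here is a minimal Python sketch. It is not the script used for this review: the function name `summarize_frame_times`, the millisecond input format, and the nearest-rank percentile method are all assumptions made for the example.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, p95_fps) from per-frame render times.

    Assumptions (not taken from the review): times are in milliseconds,
    and the percentile uses the nearest-rank method.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: number of frames divided by total elapsed seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # 95th percentile frame time: 95% of frames were rendered at least
    # this fast. Integer arithmetic computes ceil(0.95 * n) without
    # floating-point rounding surprises.
    ordered = sorted(frame_times_ms)
    rank = max(1, (95 * len(ordered) + 99) // 100)
    p95_ms = ordered[rank - 1]

    # Express the percentile as an FPS figure for easier comparison.
    return average_fps, 1000.0 / p95_ms


if __name__ == "__main__":
    # Hypothetical sample: mostly 10 ms frames with two 20 ms spikes.
    sample = [10.0] * 18 + [20.0] * 2
    avg, p95 = summarize_frame_times(sample)
    print(f"average: {avg:.1f} FPS, 95th percentile: {p95:.1f} FPS")
```

Reporting the 95th percentile alongside the average shows how slow the slower frames are, which an average alone can hide.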

All of our benchmark results can also be found in our benchmark engine, Bench.

| AnandTech | IGP | Low | Medium | High |
|---|---|---|---|---|
| Average FPS | | | | |
| 95th Percentile | | | | |

220 Comments


Gastec - Friday, May 22, 2020 - link

"pairing a high-end GPU with a mid-range CPU" should already be a meme, so many times I've seen it copy-pasted.

dotjaz - Thursday, May 21, 2020 - link

What funny stuff are you smoking? In all actual configurations, AMD doesn't lose by any meaningful margin at a much better value.

Anandtech is running CPU test where you set the quality low and get 150+fps or even 400+fps, nobody actually does that.

deepblue08 - Thursday, May 21, 2020 - link

Intel may not be a great value chip all around. But a 10 FPS lead in 1440p is a lead nevertheless: https://hexus.net/tech/reviews/cpu/141577-intel-co...

DrKlahn - Thursday, May 21, 2020 - link

If that's worth the more expensive motherboard, beefier (and more costly) cooling, and increased heat then go for it. If you put 120fps next to 130fps without a counter up how many people could tell?

Personally I don't see it as worth it at all. Nor do I consider it a dominating lead. But I'm sure there are people out there that will buy Intel for a negligible lead.

Spunjji - Friday, May 22, 2020 - link

An entirely unnoticeable lead that you get by sacrificing any sort of power consumption / cooling sanity and spending measurably larger amounts of cash on the hardware to achieve the boost clocks required to get that lead.

The difference was meaningful back when AMD had lower minimum framerates, less consistency and -30fps or so off the average. Now it's just silly.

babadivad - Thursday, May 21, 2020 - link

Do you need a new motherboard with these? If so they make even less sense than they already did.

MDD1963 - Friday, May 22, 2020 - link

As for Intel owners, I don't think too many 8700K, 9600K or above owners would seriously feel they are CPU limited and in a dire/imminent need of a CPU upgrade as they sit now, anyway. Users of prior generations (I'm still on 7700K) will make their choices at a time of their own choosing, of course, and not simply because 'a new generation is out'. (I mean, look at 8700K vs. 10600K results...; looks almost like a rebadging operation)

khanikun - Wednesday, May 27, 2020 - link

I was on a 7700k and didn't feel CPU limited at all, but decided to get an 8086k for the 2 more cores and just cause it was an 8086. For my normal workloads or gaming, I don't notice a difference. I do reencode videos maybe a couple times a year. The only times I'll see the difference.

I'll probably just be sitting on this 8086k for the next few years, unless something on my machine breaks or Intel does something crazy ridiculous, like making some 8 core i7 on 10nm at 5 ghz all core, in a new socket, then making dual socket consumer boards for it for relatively decent price. I'd upgrade for that, just cause I'd like to try making a dual processor system that isn't some expensive workstation/server system.

Spunjji - Friday, May 22, 2020 - link

Yes, you do. So no, they don't make sense xD

Gastec - Friday, May 22, 2020 - link

Games...framerate is pointless in video games, all that matters now are the "surprise mechanics".