Rebranded Ethernet Technology Consortium Unveils 800 Gigabit Ethernet

by Gavin Bonshor on April 9, 2020 11:00 AM EST - Posted in

- Networking

- Cisco

- Ethernet

- 800 GbE

- 400 GbE

- 800GBase-T

With demand for networking speed and throughput growing within datacenters and high-performance computing clusters, the newly rebranded Ethernet Technology Consortium has announced a new 800 Gigabit Ethernet technology. Building on many of the technologies that power contemporary 400 Gigabit Ethernet, the 800GBASE-R standard aims to double performance once again to feed ever-hungrier datacenters.

The recently finalized standard comes from the Ethernet Technology Consortium (ETC), the non-IEEE, tech-industry-backed consortium formerly known as the 25 Gigabit Ethernet Consortium. The group was originally created to develop 25, 50, and 100 Gigabit Ethernet technology, and while IEEE Ethernet standards have since surpassed what the consortium achieved, the group has stayed together to push for even faster networking speeds, changing its name to keep up with the times. Some of the biggest contributors to and supporters of the ETC include Broadcom, Cisco, Google, and Microsoft, with more than 40 companies listed as integrators of its work.

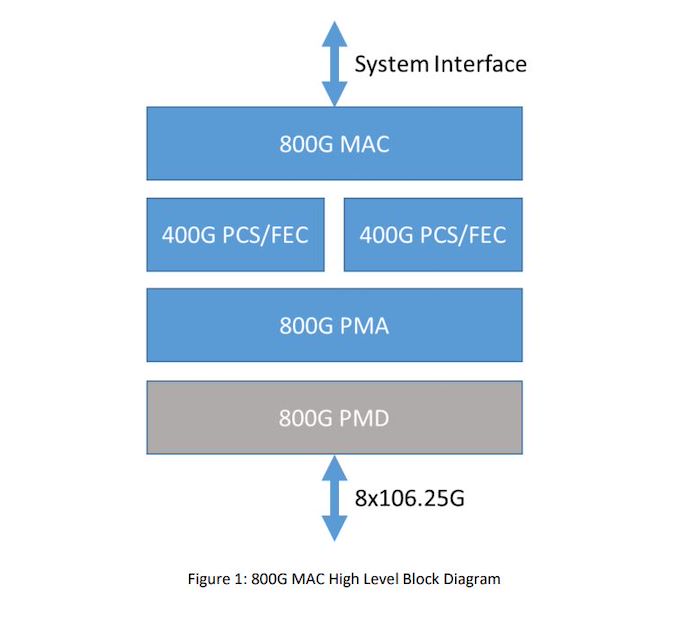

800 Gigabit Ethernet Block Diagram

As for the new 800 Gigabit Ethernet standard itself, at a high level 800GbE can be thought of as essentially a wider version of 400GbE. The standard is primarily built around the existing 106.25G lanes pioneered for 400GbE, but doubles the total number of lanes from 4 to 8. While this is a conceptually simple change, bonding additional lanes together in this fashion involves a significant amount of work, and sorting that out is the main job of the new 800GbE standard.
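The 106.25G lane rate looks odd at first glance. Assuming 800GBASE-R keeps the coding overheads of the 400GBASE-R PCS it reuses (256b/257b transcoding plus RS(544,514) forward error correction, which are IEEE 802.3 400GbE details rather than figures from the ETC announcement), the arithmetic works out so that each lane carries 100 Gb/s of MAC data:

$$
R_{\text{lane}} = 100\,\text{Gb/s} \times \frac{257}{256} \times \frac{544}{514} = 100\,\text{Gb/s} \times \frac{544}{512} = 106.25\,\text{Gb/s}
$$

The simplification uses $514 = 2 \times 257$. Doubling from four lanes to eight then gives

$$
8 \times 106.25\,\text{Gb/s} = 850\,\text{Gb/s on the lanes}, \qquad 8 \times 100\,\text{Gb/s} = 800\,\text{Gb/s at the MAC}.
$$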

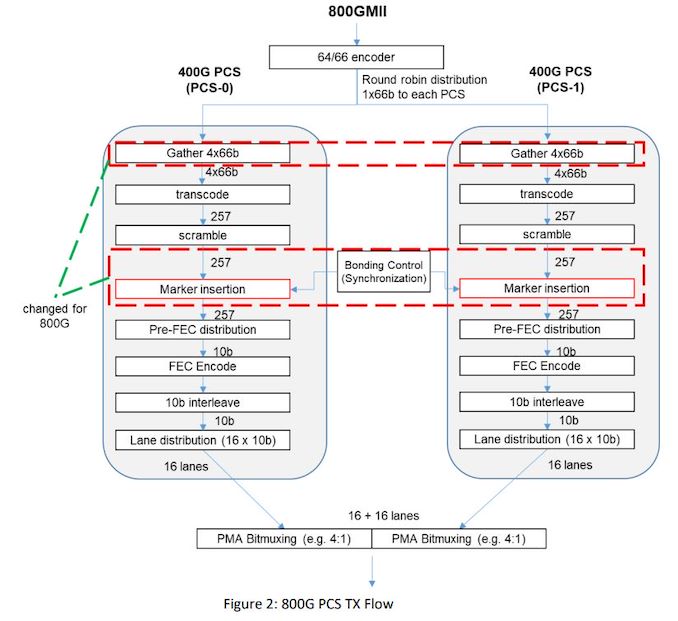

Diving in, the new 800GBASE-R specification defines a new Media Access Control (MAC) layer and Physical Coding Sublayer (PCS). The PCS is in turn built on top of two existing 400 GbE PCSs, which together feed a single MAC operating at a combined 800 Gb/s. Each 400 GbE PCS uses four 106.25G lanes, so doubling up brings the total to the eight lanes used by the new 800 GbE standard. And while the focus is on 106.25G lanes, they are not a hard requirement; the ETC states that this architecture could also allow for larger groupings of slower lanes, such as 16x53.125G, if manufacturers decided to pursue the matter.
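To make the lane arithmetic concrete, here is a small Python sketch that splits either lane layout between the two bonded 400 GbE PCS instances and totals the signaling rate. The contiguous-halves mapping (lanes 0 to 3 on one PCS, 4 to 7 on the other) and the helper names `lanes_per_pcs` and `signaling_rate` are hypothetical; the actual lane assignment is defined in the ETC specification.

```python
from fractions import Fraction

# Lane layouts mentioned by the ETC: (lane count, per-lane signaling rate in Gb/s).
LANE_LAYOUTS = {
    "8x106.25G": (8, Fraction("106.25")),
    "16x53.125G": (16, Fraction("53.125")),
}
PCS_INSTANCES = 2  # two 400 GbE PCSs bonded under one 800G MAC


def lanes_per_pcs(total_lanes: int, instances: int = PCS_INSTANCES) -> list[list[int]]:
    """Hypothetical mapping: give each PCS a contiguous block of the physical lanes."""
    if total_lanes % instances:
        raise ValueError("lanes must divide evenly between PCS instances")
    per_pcs = total_lanes // instances
    return [list(range(i * per_pcs, (i + 1) * per_pcs)) for i in range(instances)]


def signaling_rate(lane_count: int, lane_rate: Fraction) -> Fraction:
    """Aggregate signaling rate in Gb/s for a group of identical lanes."""
    return lane_count * lane_rate


for name, (lanes, rate) in LANE_LAYOUTS.items():
    groups = lanes_per_pcs(lanes)
    per_pcs = signaling_rate(len(groups[0]), rate)
    total = signaling_rate(lanes, rate)
    print(f"{name}: lanes per PCS {groups}, {float(per_pcs)} Gb/s per PCS, {float(total)} Gb/s total")
```

Both layouts come out at 425.0 Gb/s per PCS and 850.0 Gb/s in total, which is why the same two-PCS architecture can, in principle, accommodate either one. Assuming the 400GbE coding overheads carry over, the 50 Gb/s difference between 850 and 800 Gb/s is transcoding and FEC overhead. `Fraction` keeps the rates exact, so the comparisons are not subject to floating-point rounding.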

Focusing on the MAC itself, the ETC says that 800 Gb Ethernet will inherit all of the attributes of the 400 GbE standard, including full-duplex operation between two terminals and a minimum interpacket gap of 8 bit times. The diagram above depicts each 400 GbE PCS with 16 x 10b lanes. Each 400 GbE data stream transcodes and scrambles its packet data separately, while a bonding control block synchronizes and multiplexes the two PCSs together.
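The bonding step can be modeled in a few lines. The Python sketch below is a toy under explicit assumptions: 257-bit blocks (mirroring 256b/257b transcoding) are dealt round-robin to the two PCS instances, and each stream gets its own self-synchronizing scrambler using the 1 + x^39 + x^58 polynomial familiar from IEEE 802.3 64b/66b PCSs. Neither the distribution rule nor the polynomial is taken from the ETC announcement, and the class and function names are hypothetical. The point it illustrates is that scrambling each stream separately is harmless: each receive-side descrambler needs only its own stream, and the bonding logic restores block order afterward.

```python
import random

# Toy model: block size, round-robin distribution, and scrambler polynomial are assumptions.
MASK58 = (1 << 58) - 1  # 58-bit shift register for a degree-58 polynomial


class SelfSyncScrambler:
    """Self-synchronizing scrambler, 1 + x^39 + x^58 (assumed, as in IEEE 802.3 64b/66b PCSs)."""

    def __init__(self) -> None:
        self.state = 0  # bit i holds the scrambled bit from i + 1 steps ago

    def _taps(self) -> int:
        # XOR of the scrambled bits from 39 and 58 steps ago
        return ((self.state >> 38) ^ (self.state >> 57)) & 1

    def scramble(self, bits: list[int]) -> list[int]:
        out = []
        for b in bits:
            s = b ^ self._taps()
            self.state = ((self.state << 1) | s) & MASK58  # shift in the scrambled bit
            out.append(s)
        return out

    def descramble(self, bits: list[int]) -> list[int]:
        out = []
        for b in bits:
            out.append(b ^ self._taps())
            self.state = ((self.state << 1) | b) & MASK58  # shift in the received bit
        return out


def bond_tx(blocks: list[list[int]], scramblers: list[SelfSyncScrambler]) -> list[list[list[int]]]:
    """Deal blocks round-robin to the PCS streams; each stream keeps its own scrambler state."""
    streams: list[list[list[int]]] = [[] for _ in scramblers]
    for i, block in enumerate(blocks):
        k = i % len(scramblers)
        streams[k].append(scramblers[k].scramble(block))
    return streams


def bond_rx(streams: list[list[list[int]]], descramblers: list[SelfSyncScrambler]) -> list[list[int]]:
    """Descramble each stream on its own, then re-interleave blocks into the original order."""
    plain = [[d.descramble(b) for b in s] for s, d in zip(streams, descramblers)]
    blocks = []
    for i in range(max(len(s) for s in plain)):
        for s in plain:
            if i < len(s):
                blocks.append(s[i])
    return blocks


# Round trip: 10 random 257-bit blocks through two bonded PCS streams.
rng = random.Random(0)
tx_blocks = [[rng.randint(0, 1) for _ in range(257)] for _ in range(10)]
lanes = bond_tx(tx_blocks, [SelfSyncScrambler(), SelfSyncScrambler()])
rx_blocks = bond_rx(lanes, [SelfSyncScrambler(), SelfSyncScrambler()])
print("round trip ok:", rx_blocks == tx_blocks)
```

Because the descrambler feeds back received bits rather than its own output, it would also resynchronize on its own after 58 bits even if it started from a different state than the transmitter. That property is one reason self-synchronizing scramblers are common in Ethernet PCS designs.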

All told, the 800GbE standard is the latest step for an industry that, as a whole, is moving toward Terabit (and faster) Ethernet. And while those future standards will ultimately require faster SerDes to drive the necessary per-lane speeds, for now 800GBASE-R can deliver 800GbE on current-generation hardware. All of which should be a boon for the standard's intended customers, hyperscalers and HPC operators, who are eager to get more bandwidth between systems.

The Ethernet Technology Consortium outlines the full 800 GbE specification in a PDF on its website. There's no word yet on when we might see 800GbE in products, but as it's largely based on existing technology, it should be a relatively short wait by datacenter networking standards. Datacenter operators will probably have to pay for the privilege, though: with even a 16-port Cisco Nexus 400 GbE switch costing upwards of $11,000, we don't expect 800GbE to come cheap.

Source: Ethernet Technology Consortium

QSFP-DD Image Courtesy Optomind

75 Comments

willis936 - Thursday, April 9, 2020

Pro-tip: all optical signals start and end as electrical signals. You still need to make an 8x100G link if you want to modulate 8 channels on a single piece of fiber. What's really astonishing here is the 100G link. If this doesn't impress you, then you simply have no clue. When I stepped out of the industry about a year ago, 50 GBaud PAM-4 plugfests were still relatively new things. I would hear higher-ups in big companies pooh-pooh the idea of making links that fast because demand was not high enough to warrant it. Still, it is where all of the R&D money is spent, because lower speeds are already done. It's difficult to overstate just how much effort went into making something like an 8-lane 800G link possible. Just go to the meetings.

Brane2 - Thursday, April 9, 2020

It's not about impressing me. It's about making real advances. And those advances are about as real as Wi-Fi speed advances, where marketing bulls**t paints a parallel universe.

This thing is pushing copper past practical limits. Basically they are playing with overclocking the copper SerDes. OK, so what?

You get higher speeds, but at what cost?

voicequal - Thursday, April 9, 2020

Datacenter is mostly about $/bps. If this delivers 2x bps at 1.5x $$, then it delivers value and will be adopted.

PeachNCream - Thursday, April 9, 2020

And here I am with an ISP-issued DSL router that is lavishly equipped with four 100 Mbit Ethernet ports, and yes, I got it about 11 months ago when my last one died.

Adonisds - Thursday, April 9, 2020

Does it use fiber optics and a different connector than the one in the picture?

Railgun - Thursday, April 9, 2020

Runs fine on Cat3

willis936 - Friday, April 10, 2020

The PMD isn’t mentioned in this article or the press release (afaict). You need to be a member (anyone got 5 grand?) to see the spec. This is one reason why IEEE specs are better: at least third parties can easily evaluate them.

A5 - Friday, April 10, 2020

Technically, the Ethernet spec isn’t tied to any physical media. Practically, anything past 10GbE is on fiber. Cat 8 can carry 40G for about 25 m.

willis936 - Saturday, April 11, 2020

It does depend on the specific clause. Clauses 92 and 93 are 100GBASE over 4 lanes of cable and backplane, respectively. Clause 83D (CAUI-4) is 100GBASE over 4 lanes of unspecified PMD.

Deicidium369 - Wednesday, April 15, 2020

Purely optical - even the 10Gb/s SFP+ over copper was pushing it.