Rebranded Ethernet Technology Consortium Unveils 800 Gigabit Ethernet

by Gavin Bonshor on April 9, 2020 11:00 AM EST

Posted in: Networking, Cisco, Ethernet, 800 GbE, 400 GbE, 800GBase-T

With an increasing demand for networking speed and throughput performance within the datacenter and high performance computing clusters, the newly rebranded Ethernet Technology Consortium has announced a new 800 Gigabit Ethernet technology. Based upon many of the existing technologies that power contemporary 400 Gigabit Ethernet, the 800GBASE-R standard is looking to double performance once again, to feed ever-hungrier datacenters.

The recently finalized standard comes from the Ethernet Technology Consortium, the non-IEEE, tech industry-backed consortium formerly known as the 25 Gigabit Ethernet Consortium. The group was originally created to develop 25, 50, and 100 Gigabit Ethernet technology, and while IEEE Ethernet standards have since surpassed what the consortium achieved, the consortium has stayed together to push for even faster networking speeds, changing its name to keep up with the times. Some of the biggest contributors and supporters of the ETC include Broadcom, Cisco, Google, and Microsoft, with more than 40 companies listed as integrators of its work.

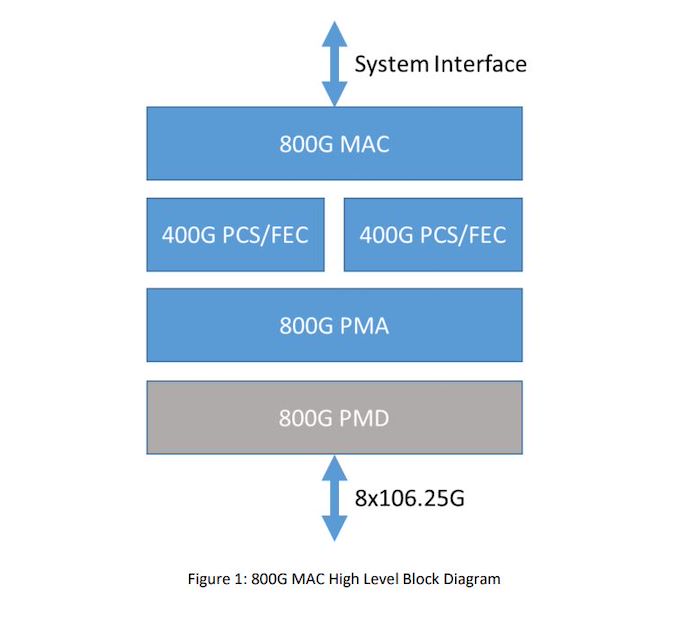

800 Gigabit Ethernet Block Diagram

As for the new 800 Gigabit Ethernet standard, at a high level 800GbE can be thought of as essentially a wider version of 400GbE. The standard is primarily based on the existing 106.25G lanes that were pioneered for 400GbE, but doubles the total number of lanes from 4 to 8. And while this is a conceptually simple change, there is a significant amount of work involved in bonding together additional lanes in this fashion, which is what the new 800GbE standard has to sort out.

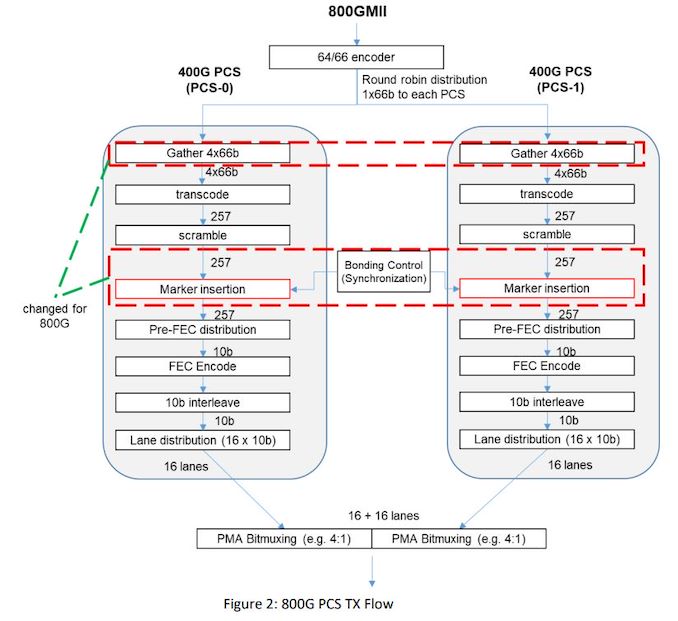

Diving in, the new 800GBASE-R specification defines a new Media Access Control (MAC) and a Physical Coding Sublayer (PCS). The PCS is in turn built on top of two 400 GbE PCSs (the PCS defined in IEEE 802.3 Clause 119), which together serve a single MAC operating at a combined 800 Gb/s. Each 400 GbE PCS uses 4 x 106.25G lanes, so using two of them brings the total to eight lanes for the new 800 GbE standard. And while the focus is on 106.25G lanes, it's not a hard requirement; the ETC states that this architecture could also allow for larger groupings of slower lanes, such as 16x53.125G, if manufacturers decided to pursue the matter.
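To see where the 106.25G and 53.125G figures come from, the lane arithmetic can be sketched in a few lines of Python. This is a rough illustration rather than anything taken from the ETC document: it assumes the 256b/257b transcoding and RS(544,514) FEC overheads used by the IEEE 400GbE PCS, which the article does not spell out, and the function names are made up for the example.

```python
from fractions import Fraction

# Assumed encoding overheads of the IEEE 400GbE PCS (not stated in the article):
TRANSCODE = Fraction(257, 256)  # 256b/257b transcoding
RS_FEC = Fraction(544, 514)     # RS(544,514) forward error correction


def line_rate_gbps(mac_rate_gbps):
    """Total encoded rate on the wire for a given MAC data rate, in Gb/s."""
    return Fraction(mac_rate_gbps) * TRANSCODE * RS_FEC


def per_lane_gbps(mac_rate_gbps, lanes):
    """Encoded rate carried by each lane when the total is split evenly."""
    return line_rate_gbps(mac_rate_gbps) / lanes


# 400GbE over 4 lanes, then the two 800GbE groupings mentioned in the article.
for mac, lanes in [(400, 4), (800, 8), (800, 16)]:
    rate = float(per_lane_gbps(mac, lanes))
    print(f"{mac}G over {lanes} lanes: {rate} Gb/s per lane")
```

Under those assumptions the combined overhead works out to exactly 1.0625, so an 800 Gb/s MAC needs 850 Gb/s on the wire, which splits into either eight 106.25G lanes or sixteen 53.125G lanes.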

Focusing on the MAC itself, the ETC claims that 800 Gb Ethernet will inherit all of the previous attributes of the 400 GbE standard, with full-duplex support between two terminals and a minimum interpacket gap of 8 bit times. The above diagram depicts each 400 GbE PCS with 16 x 10 b lanes; each 400 GbE data stream transcodes and scrambles packet data separately, and a bonding control synchronizes and muxes the two PCSs together.
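The general idea of splitting one stream across two PCS instances and muxing it back together can be illustrated with a toy model. The sketch below assumes simple round-robin dealing of blocks; the article does not say how the bonding control actually divides traffic, and the real specification also has to handle alignment and skew between lanes, which this ignores. The `distribute` and `merge` helpers are hypothetical names for the example.

```python
from itertools import zip_longest


def distribute(blocks, n_pcs=2):
    """Deal blocks round-robin across n_pcs streams (hypothetical helper)."""
    return [blocks[i::n_pcs] for i in range(n_pcs)]


def merge(streams):
    """Re-interleave the streams back into the original block order."""
    missing = object()  # sentinel for streams that run out one block early
    merged = []
    for group in zip_longest(*streams, fillvalue=missing):
        merged.extend(block for block in group if block is not missing)
    return merged


# Seven stand-in blocks, so the two streams end up with unequal lengths.
blocks = [f"blk{i}" for i in range(7)]
pcs0, pcs1 = distribute(blocks)
restored = merge([pcs0, pcs1])
print(pcs0, pcs1, restored == blocks)
```

The point of the toy is only that the receiver can recover the original order as long as both sides agree on the dealing pattern and the two streams stay in step, which is the job the article attributes to the bonding control.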

All told, the 800GbE standard is the latest step for an industry as a whole that is moving to Terabit (and beyond) Ethernet. And while those future standards will ultimately require faster SerDes to drive the required individual lane speeds, for now 800GBASE-R can deliver 800GbE on current generation hardware. All of which should be a boon for the standard's intended hyperscaler and HPC operator customers, who are eager to get more bandwidth between systems.

The Ethernet Technology Consortium outlines the full specifications of 800 GbE on its website in a PDF. There's no information on when we might see 800GbE in products, but as it's largely based on existing technology, it should be a relatively short wait by datacenter networking standards. Datacenter operators will probably have to pay for the luxury, though; with even a Cisco Nexus 400 GbE 16-port switch costing upwards of $11,000, we don't expect 800GbE to come cheap.

Source: Ethernet Technology Consortium

QSFP-DD Image Courtesy Optomind

75 Comments

CaedenV - Thursday, April 9, 2020 - link

Big problem now is that most high end wifi can easily go over 1Gbps, while the port that ties them to the wire is only at 1Gbps... It is time for even basic wired equipment to do 2Gbps at least, if not making the full jump to 10

TheinsanegamerN - Friday, April 10, 2020 - link

And the internet they are connected to can barely saturate fast ethernet. The 1GbE ethernet is not the bottleneck there, not yet anyway.

Guspaz - Friday, April 10, 2020 - link

Millions of Canadians served by Bell Canada's FTTH network have access to service that tops out at 1.5 gigabits per second. The cost is CDN$95 per month, or roughly US$68 per month. Right now, they market it for maintaining high speeds when you have lots of wired and wireless devices in your home, but wouldn't it be nice if the ethernet side of that was faster than one gigabit?

Brane2 - Thursday, April 9, 2020 - link

That's basically nothing. All they did was use 2x bigger connectors and pack those lanes in.

Not that much better than the existing 400G solution.

I can understand that someone might want it, but it's not a newsworthy step ahead...

bcronce - Thursday, April 9, 2020 - link

All they did was pack a bunch of explosives and aim it towards the moon. Most breakthroughs don't result in sudden commercially viable 10x increases. 800Gb isn't that amazing anyway. We have 40Tb/s fiber. But it is a big step to make it an industry standard.

Brane2 - Thursday, April 9, 2020 - link

This will never be an industry standard.

WRT to Saturn V, yes, they employed Nazis and their tech as a foundation (Von Braun etc.) and indebted taxpayers to their eyeballs to achieve - what?

Saturn V certainly didn't end as "industry standard"...

TheinsanegamerN - Friday, April 10, 2020 - link

Aside from your bone to pick with Nazis, which, who cares? Those Nazis put us on the moon, something no other country has achieved.

Why does it matter? Well, the information we gathered from the Apollo missions was later used for advancements in control systems, modern rocketry used to launch the satellites that allow for instantaneous communication around the globe, unlocking the secrets of the universe, and of course that information led to the creation of semi-permanent space stations.

But I guess you're right, why should we ever try to do something unless it is blatantly obvious we will all benefit? That behavior works so well for the developing world, doesn't it?

Lord of the Bored - Saturday, April 11, 2020 - link

Apollo did not "indebt taxpayers to their eyeballs". At their peak, they took five percent of the national budget. Which is big enough to not just be filed under "other expenses", but not breaking the bank by any means.

NASA has a proud tradition of doing amazing things on a shoestring budget, then getting raked over the coals for how ridiculously expensive they are because people simply ASSUME they have to be taking a huge swath of the federal budget without looking. Apollo was no exception.

Also, the list of technologies created for Apollo that found widespread usage is massive and includes cordless power tools (because they needed cordless power tools), the modern tennis shoe (based on techniques invented to manufacture moonwalk boots), and kidney dialysis machines (based on the spacecrafts' water-recycling systems).

More topical to the present audience: While not an invention of NASA, their unusually heavy reliance on integrated circuits (they were buying half of all ICs sold at the time) IS credited with advancing the state of the art by about twenty years. They were buying these parts because their market was one of the few where a computer was needed, but size and weight were important considerations.

What did your computer and cellular phone look like back in Y2K?

Deicidium369 - Wednesday, April 15, 2020 - link

And von Braun used the work of Goddard, an American.

jfmonty2 - Thursday, April 9, 2020 - link

You can squeeze 40Tb/s over a single fiber, yes, but you don't exactly have a 40Tb/s link between two devices. As far as I'm aware, that kind of bandwidth is only achievable using extremely dense wavelength multiplexing to combine a whole bunch of links. So you can have maybe 128 machines on one end talking to 128 on the other end at a few hundred Gb/s each.