GeForce 6200 TurboCache: PCI Express Made Useful

by Derek Wilson on December 15, 2004 9:00 AM EST - Posted in GPUs

Introduction

Imagine if getting support for current generation graphics technology didn't require spending more than $79. Sure, performance wouldn't be good at all, and resolution would be limited to the lower end. But the latest games would all run with the latest features. All the excellent water effects in Half-Life 2 would be there. Far Cry would run in all its SM 3.0 glory. Any game coming out until a good year into the DirectX 10 timeframe would run (albeit slowly) feature-complete on your impressively cheap card.

A solution like this isn't targeted at the hardcore gamer, but at the general purpose user. This is the solution that keeps people from buying hardware that's obsolete before they get it home. The idea is that being cheap doesn't need to translate to being "behind the times" in technology. This gives casual consumers the ability to see what having a "real" graphics card is like. Games will look much better running on a full DX9 SM 3.0 part that "supports" 128MB of RAM (we'll talk about that later) than on an Intel integrated solution. Shipping higher volume with cheaper cards and getting more people into gaming translates to raising the bar on the minimum requirements for game developers. The sooner NVIDIA and ATI can get current generation parts into the game-buying world's hands, the sooner all game developers can write games for DX9 hardware at a base level rather than as an extra.

In the past, we've seen parts like the GeForce 4 MX, which was just a repackaged GeForce 2. Even today, we have the X300 and X600, which are based on the R3xx architecture, but share the naming convention of the R4xx. It really is refreshing to see NVIDIA take a stand and create a product lineup that can run games the same way from the top of the line to the cheapest card out there (the only difference being speed and the performance hit of applying filtering). We hope (if this part ends up doing well and finding a good price point for its level of performance) that NVIDIA will continue to maintain this level of continuity through future chip generations. We hope that ATI will follow suit with their lineup next time around. Relying on previous generation higher end parts to fulfill current lower end needs is not something that we want to see over the long term.

We've actually already taken a look at the part that NVIDIA will be bringing out in two new flavors. The 3 vertex/4 pixel/2 ROP GeForce 6200 that came out only a couple months ago is being augmented by two lower performance versions, both bearing the moniker GeForce 6200 with TurboCache.

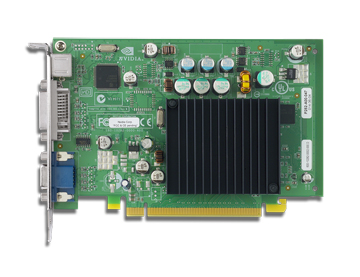

It's passively cooled, as we can see. The single memory module of this board is peeking out from beneath the heatsink on the upper right. NVIDIA has indicated that a higher performance version of the 6200 with TurboCache will follow to replace the current shipping 6200 models. Though better than non-existent parts such as the X700 XT, we would rather not see short-lived products hit the market. In the end, such anomalies only serve to waste the time of NVIDIA's partners and confuse customers.

For now, the two parts that we can expect to see will be differentiated by their memory bandwidth. The part priced at "under $129" will be a "13.6 GB/s" setup, while the "under $99" card will sport "10.8 GB/s" of bandwidth. Both will have core and memory clocks at 350/350. The interesting part is the bandwidth figure. On both counts, 8 GB/s of that bandwidth comes from the PCI Express bus. For the 10.8 GB/s part, the extra 2.8 GB/s comes from 16MB of local memory connected on a single 32-bit channel running at a 700MHz data rate. The 13.6 GB/s version of the 6200 with TurboCache just gets an extra 32-bit channel with another 16MB of RAM. We've seen pictures of boards with 64MB of onboard RAM, pushing bandwidth way up. We don't know when we'll see a 64MB product ship, or what the pricing would look like.
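The marketed bandwidth figures can be reproduced with a little arithmetic. The following is a minimal Python sketch, assuming decimal gigabytes (1 GB = 10^9 bytes) and taking the 8 GB/s PCI Express number at face value as the combined up-plus-down peak. The function and constant names are ours, chosen for illustration, and are not NVIDIA terminology.

```python
# Quoted peak for the PCI Express x16 link (up + down combined), in GB/s.
PCIE_X16_GBPS = 8.0


def local_bandwidth_gbps(channels, bus_bits=32, data_rate_mhz=700):
    """Peak local memory bandwidth in GB/s for a number of 32-bit channels."""
    bytes_per_transfer = channels * bus_bits / 8
    return bytes_per_transfer * data_rate_mhz * 1e6 / 1e9


def marketed_bandwidth_gbps(channels):
    """Local memory bandwidth plus the quoted PCI Express figure."""
    return PCIE_X16_GBPS + local_bandwidth_gbps(channels)


if __name__ == "__main__":
    for channels in (1, 2):
        local = local_bandwidth_gbps(channels)
        total = marketed_bandwidth_gbps(channels)
        print(f"{channels} channel(s): local {local:.1f} GB/s, marketed {total:.1f} GB/s")
```

One channel works out to 2.8 GB/s of local bandwidth and two channels to 5.6 GB/s, which is where the 10.8 GB/s and 13.6 GB/s totals come from once the 8 GB/s bus figure is added.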

So, to put it all together, either 112 or 96 MB of framebuffer is stored in system RAM and accessed via the PCI Express bus. Local graphics RAM holds the front buffer (what's currently on screen) and other high priority (low latency) data. If more than local graphics memory is needed, it is allocated dynamically from system RAM. The local graphics memory that is not set aside for high priority tasks is then used as a sort of software managed cache. And thus, the name of the product is born.

The new technology here is allowing writes directly from the GPU to system RAM. We've been able to perform reads from system RAM for quite some time, though technologies like AGP texturing were slow and never delivered on their promises. With a few exceptions, the GPU is able to see system RAM as a normal framebuffer, which is very impressive for PCI Express and current memory technology.

But it's never that simple. There are some very interesting problems to deal with when using system RAM as a framebuffer; this is not simply a driver-based software solution. The foremost and ever pressing issue is latency. Going from the GPU, across the PCI Express bus, through the memory controller, into the system RAM, and all the way back is a very long round trip. Considering the fact that graphics cards are used to having instant access to data, something is going to have to give. And sure, the PCI Express bus may be 8 GB/s (4 up and 4 down, but it's less if you talk about actual utilization), but we are only going to be getting 6.4 GB/s out of the RAM. And that's if we are talking zero CPU utilization of memory and nothing else going on in the system, only what we're doing with the graphics card.
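To make the bottleneck argument concrete, here is a rough Python sketch of the ceiling on traffic between the GPU and system RAM. It uses only the peak numbers quoted above (4 GB/s each way on the bus, 6.4 GB/s from memory). The `cpu_share` parameter is a hypothetical knob we introduce to model the CPU taking a fraction of memory bandwidth; real utilization will be lower still because of protocol overhead and latency, which this sketch ignores.

```python
def system_ram_ceiling_gbps(pcie_up=4.0, pcie_down=4.0, system_ram=6.4, cpu_share=0.0):
    """Upper bound on GPU <-> system RAM traffic in GB/s.

    The link can carry pcie_up + pcie_down in total, but system RAM has to
    serve all of it, so the slower of the two sets the ceiling.
    cpu_share (0.0 to 1.0) is a hypothetical fraction of memory bandwidth
    consumed by the CPU and everything else in the system.
    """
    if not 0.0 <= cpu_share <= 1.0:
        raise ValueError("cpu_share must be between 0.0 and 1.0")
    ram_available = system_ram * (1.0 - cpu_share)
    return min(pcie_up + pcie_down, ram_available)


if __name__ == "__main__":
    for share in (0.0, 0.25, 0.5):
        ceiling = system_ram_ceiling_gbps(cpu_share=share)
        print(f"CPU using {share:.0%} of memory bandwidth: ceiling {ceiling:.1f} GB/s")
```

Even in the best case, the ceiling is set by the 6.4 GB/s memory figure rather than the 8 GB/s bus figure, and it drops further as soon as anything else in the system touches memory.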

Let's take a closer look at why anyone would want to use system RAM as a framebuffer, and how NVIDIA has tried to solve the problems that lie within.

UPDATE: We got an email in our inbox from NVIDIA updating us on a change they have made to the naming of their TurboCache products. It seems they have listened to us and are including physical memory sizes on marketing/packaging. Here's what product names will look like:

GeForce 6200 w/ TurboCache supporting 128MB, including 16MB of local TurboCache: $79

GeForce 6200 w/ TurboCache supporting 128MB, including 32MB of local TurboCache: $99

GeForce 6200 w/ TurboCache supporting 256MB, including 64MB of local TurboCache: $129

We were off on pricing a little bit, as the $129 figure we heard was actually for the 64MB/256MB part, and the 64-bit version we tested (which supports only 128MB) actually hits the price point we are looking for.

43 Comments

sphinx - Wednesday, December 15, 2004 - link

I think this is a good offering from NVIDIA. Passively cooled is a VERY good solution in my line of work. One less thing I have to worry about silencing. As I use my PC to make money, not for playing games. Although I like to play an occasional game from time to time don't get me wrong. I use my XBOX for gaming. When this card comes out I'll get one.

DerekWilson - Wednesday, December 15, 2004 - link

#9, It'll only use 128MB if a full 128 is needed at the same time -- which isn't usually the case, but we haven't done an in-depth study on this yet. Also, keep in mind that we still tested at the absolute highest quality settings with no AA/AF (except Doom 3, which even used 8x AF as well). We were not seeing slide show framerates. The FX5200 doesn't even support all the features of the FX5900, let alone the 6200TC. Nor does the FX5200 perform as well at equivalent settings.

IGP is something I talked to NVIDIA about. This solution really could be an Intel Extreme Graphics killer (in the integrated market). In fact, with the developments in the marketplace, Intel may finally get up and start moving to create a graphics solution that actually works. There are other markets to look for TurboCache solutions to show up as well.

#11 ... The packaging issue is touchy. We'll see how vendors pull it off when it happens. The cards do run as if they have a full 128MB of RAM, so that's very important to get across. We do feel that talking about the physical layout of the card and the method of support is important as well.

#8, 1600x1200x32 only requires that 7.5MB be stored locally. As was mentioned in the article, only the FRONT buffer needs to be local to the graphics card. This means that the depth buffer, back buffer and other render surfaces can all be in system memory. I know it's kind of hard to believe, but this card can actually draw everything directly into system RAM from the pixel pipes and ROPs. When the buffers are swapped to display the back buffer, what's in system memory is copied into graphics memory.

It really is very cool for a low performance budget part.

And we might see higher performance versions of TurboCache in the future ... though NVIDIA isn't talking about them yet. It might be nice to have the possibility of an expanded framebuffer with more system RAM if the user wanted to enable that feature.

TurboCache is actually a performance enhancing feature. It's just that it's enhancing the performance of a card with either 16MB or 32MB of onboard RAM and either a 32 or 64 bit memory bus ... :-)

DAPUNISHER - Wednesday, December 15, 2004 - link

"NVIDIA has defined a strict set of packaging standards around which the GeForce 6200 with TurboCache supporting 128MB will be marketed. The boxes must have text, which indicates that a minimum of 512MB of system RAM is necessary for the full 128MB of graphics RAM support. It doesn't seem to require that a disclosure of the actual amount of onboard RAM be displayed, which is not something that we support. It is understandable that board vendors are nervous about how this marketing will go over, no matter what wording or information is included on the package."

More bullsh!t deceptive advertising to bilk uninformed consumers out of their money.

MAValpha - Wednesday, December 15, 2004 - link

#7, I was thinking the same thing. This concept seems absolutely perfect for nForce5 IGP, should NVidia decide to go that route. And, once again, NVidia's approach to budget seems superior to ATI's, at least from an initial glance. A heavily-castrated 6200TC running off SHARED RAM STILL manages to outperform a full X300? Come on, ATI, get with it!

I gotta wonder, though: this solution seems unbelievably dependent on "proper implementation of the PCIe architecture." This means that the card can never be coupled with HSI for older systems, and transitional boards will have trouble running the card (Gigabyte's PT880 with converted PEG, for example; the PT880 natively supports AGP). Does this mean that a budget card on a budget motherboard will suffer significantly?

mindless1 - Wednesday, December 15, 2004 - link

IMO, even (as low as) $79 is too expensive. Taking 128MB of system memory away on a system budgeted to include one of these would typically leave 384MB, robbing the system of memory to pay nVidia et al. for a part without (much) memory.

I tend to disagree with the slant of the article too; it's not necessarily a good thing to try pushing modern gaming eyecandy at the expense of performance. What looks good isn't a crisp and anti-aliased slideshow, but a playable game. Even someone just beginning at gaming can discern a lag when fragging it out.

We're only looking at current games now, the bar for performance needs will be raised but the cards are memory bandwidth limited due to the architecture. These might look like a good alternative for someone who went and paid $90 for an FX5200 from BestBuy last year but in a budget system it's going to be tough to justify ~ $80-100 when a few bucks more won't rob one of system memory or as much performance.

Even so, historically we've seen that initial price-points do fall, better to see modern support than a rehash of a FX5xxx.

PrinceGaz - Wednesday, December 15, 2004 - link

nVidia's marketing department must be really pleased with coming up with the name "TurboCache". It makes it sound like it's faster than a normal card without TurboCache, whereas in reality the opposite is true. Uninformed customers would probably choose a TurboCache version over a normal version, even if they were priced the same!

----

Derek- does the 16MB 6200 have limitations on what resolutions can be used in games? I know you wouldn't want to run it at 1600x1200x32 in Far Cry for instance, but in older games like Quake 3 it should be fast enough.

Thing is that the frame-buffer at 1600x1200x32 requires 7.3MB, so with double-buffering you're using up a total of 14.65MB leaving just 1.35MB for the Z-buffer and anything else it needs to keep in local memory, which might not be enough. I'm assuming the frame the card is currently displaying must be held in local memory, as well as the frame being worked on.

The situation is even worse with anti-aliasing as the frame-buffer size of the frame being worked on is multiplied in size by the level of AA. At 1280x960x32 with 4xAA, the single frame-buffer alone is 18.75MB meaning it won't fit in the 16MB 6200. It might not even manage 1024x768 with 4xAA as the two frame buffers would total 15MB (12MB for the one being worked on, 3MB for the one being displayed).

It will be interesting to know what the resolution limits for the 16MB (and 32MB) cards are, with and without anti-aliasing.
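The buffer sizes in the comment above can be checked with a short Python sketch. It follows the commenter's own assumption that both the displayed frame and the (possibly multisampled) working frame sit in local memory, and it counts sizes in binary megabytes (1 MB = 1024 x 1024 bytes), which is what matches the quoted figures. The helper names are ours, for illustration only, and the Z-buffer is left out.

```python
MIB = 1024 * 1024  # the comment's figures line up with binary megabytes


def buffer_mib(width, height, bits_per_pixel=32, aa_samples=1):
    """Size of one color buffer in MiB, scaled by the anti-aliasing sample count."""
    return width * height * (bits_per_pixel // 8) * aa_samples / MIB


def double_buffered_mib(width, height, aa_samples=1):
    """Displayed frame (no AA) plus the working frame (with AA), in MiB."""
    front = buffer_mib(width, height)
    back = buffer_mib(width, height, aa_samples=aa_samples)
    return front + back


if __name__ == "__main__":
    local_mib = 16  # the 16MB card
    for width, height, aa in ((1600, 1200, 1), (1280, 960, 4), (1024, 768, 4)):
        total = double_buffered_mib(width, height, aa_samples=aa)
        verdict = "fits" if total <= local_mib else "does not fit"
        print(f"{width}x{height} {aa}xAA: {total:.2f} MiB, {verdict} in {local_mib} MiB")
```

Under these assumptions the numbers in the comment hold up: about 14.65 MiB for double-buffered 1600x1200, 18.75 MiB for a single 4xAA buffer at 1280x960, and 15 MiB in total for 1024x768 with 4xAA. Note that the author's reply in this thread states that only the front buffer has to live in local memory, which relaxes the constraint considerably.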

Spacecomber - Wednesday, December 15, 2004 - link

I may be way off base with this question, but would this sort of GPU lend itself well to some sort of integrated, onboard graphics solution? Even if it isn't integrated directly into the main chipset (or chip for Nvidia), could it simply be soldered to the motherboard somewhere?

Somehow this seems to make more sense to me for what to do with this technology than use it on a dedicated video card, especially if the price point is not that much less than a regular 6200.

bamacre - Wednesday, December 15, 2004 - link

Great review.

Wow, almost 50 fps on HL2 at 10x7, that is pretty good for a budget card.

I'd like to see MS, ATI, and Nvidia get more people into PC gaming, that would make for better and cheaper games for those of us who are already loving it.

DerekWilson - Wednesday, December 15, 2004 - link

Actually, nForce 4 + AMD systems are looking better than Intel non-925xe based systems for TurboCache parts. We haven't looked at the 925xe yet though ... that could be interesting. But overhead hurts utilization a lot on a serial bus, and having more than 6.4GB/s from memory might not be that useful.

The efficiency of getting bandwidth across the PCI Express bus will still be the main bottleneck in systems though. Chipsets need to implement PCI Express properly and well. That's really the important part. The 915 chipset is an example of what not to do.

jenand - Wednesday, December 15, 2004 - link

TurboCache and HyperMemory cards should do better on Intel-based systems, as they do not need to go via the HTT to get to the memory. So I agree with #3: show us some i925X(E) tests. I'm not expecting higher scores on the Intel systems however. Just a larger gain from this type of technology.