GeForce 6200 TurboCache: PCI Express Made Useful

by Derek Wilson on December 15, 2004 9:00 AM EST- Posted in

- GPUs

Architecting for Latency Hiding

RAM is cheap. But it's not free. Making and selling extremely inexpensive video cards means as few components as possible. It also means as simple a board as possible. Fewer memory channels, fewer connections, and less chance for failure. High volume parts need to work, and they need to be very cheap to produce. The fact that TurboCache parts can get by with either 1 or 2 32-bit RAM chips is key in its cost effectiveness. This probably puts it on a board with a few fewer layers than higher end parts, which can have 256-bit connections to RAM.But the only motivation isn't saving costs. NVIDIA could just stick 16MB on a board and call it a day. The problem that NVIDIA sees with this is the limitations that would be placed on the types of applications end users would be able to run. Modern games require framebuffers of about 128MB in order to run at 1024x768 and hold all the extra data that modern games require. This includes things like texture maps, normal maps, shadow maps, stencil buffers, render targets, and everything else that can be read from or draw into by a GPU. There are some games that just won't run unless they can allocate enough space in the framebuffer. And not running is not an acceptable solution. This brings us to the logical combination of cost savings and compatibility: expand the framebuffer by extending it over the PCI Express bus. But as we've already mentioned, main memory appears much "further" away than local memory, so it takes much longer to either get something from or write something to system RAM.

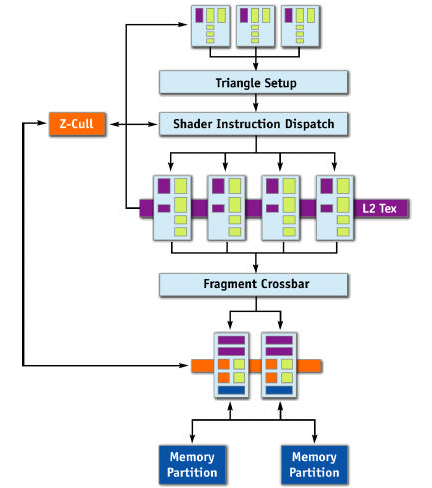

Let's take a look at the NV4x architecture without TurboCache first, and then talk about what needs to change.

The most obvious thing that will need to be added in order to extend the framebuffer to system memory is a connection from the ROPs to system memory. This will allow the TurboCache based chip to draw straight from the ROPs into things like back or stencil buffers in system RAM. We would also want to connect the pixel pipelines to system RAM directly. Not only do we need to read textures in normally, but we may also want to write out textures for dynamic effects.

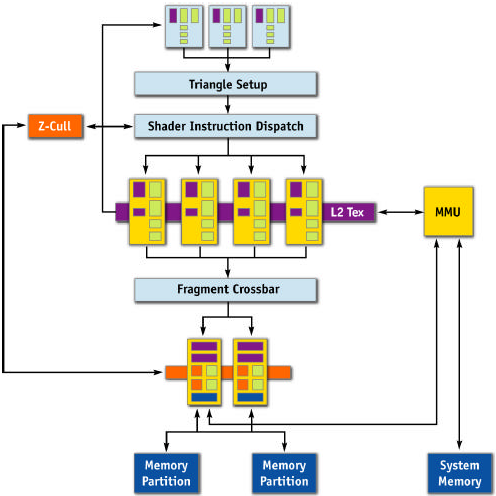

Here's what NVIDIA has offered for us today:

The parts in yellow have been rearchitected to accommodate the added latency of working with system memory. The only part that we haven't talked about yet is the Memory Management Unit. This block handles the requests from the pixel pipelines and ROPs and acts as the interface to system memory from the GPU. In NVIDIA's words, it "allows the GPU to seamlessly allocate and de-allocate surfaces in system memory, as well as read and write to that memory efficiently." This unity works in tandem with part of the Forceware driver called the TurboCache Manager, which allocates and balances system and local graphics memory. The MMU provides the system level functionality and hardware interface from GPU to system and back while the TurboCache Manager handles all the "intelligence" and "efficiency" behind the operations.

As far as memory management goes, the only thing that is always required to be on local memory is the front buffer. Other data often ends up being stored locally, but only the physical display itself is required to have known, reliable latency. The rest of local memory is treated as a something like the framebuffer and a cache to system RAM at the same time. It's not clear as to the architecture of this cache - whether it's write-back, write-through, has a copy of the most recently used 16MBx or 32MBs, or is something else altogether. The fact that system RAM can be dynamically allocated and deallocated down to nothing indicates that the functionality of local graphics memory as a cache is very adaptive.

NVIDIA hasn't gone into the details of how their pixel pipes and ROPs have been rearchitected, but we have a few guesses.

It's all about pipelining and bandwidth. A GPU needs to draw hundreds of thousands of independent pixels every frame. This is completely different from a CPU, which is mostly built around dependant operations where one instruction waits on data from another. NVIDIA has touted the NV4x as a "superscalar" GPU, though they haven't quite gone into the details of how many pipeline stages it has in total. The trick to hiding latency is to make proper use of bandwidth by keeping a high number of pixels "in flight" and not waiting for the data to come back to start work on the next pixel.

If our pipeline is long enough, our local caches are large enough, and our memory manager is smart enough, we get cycle of processing pixels and reading/writing data over the bus such that we are always using bandwidth and never waiting. The only down time should be the time that it takes to fill the pipeline for the first frame, which wouldn't be too bad in the grand scheme of things.

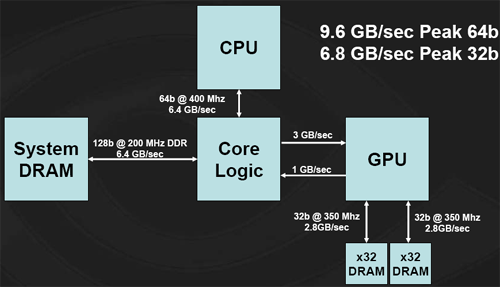

The big uncertainty is the chipset. There are no guarantees on when the system will get data back from system memory to the GPU. Worst case latency can be horrible. It's also not going to be easy to design around worst case latencies, as NVIDIA is a competitor to other chipset makers who aren't going to want to share that kind of information. The bigger the guess at the latency that they need to cover, the more pixels NVIDIA needs to keep in flight. Basically, covering more latency is equivalent to increasing die size. There is some point of diminishing returns for NVIDIA. But the larger presence that they have on the chipset side, the better off they'll be with their TurboCache parts as well. Even on the 915 chipset from Intel, bandwith is limited across the PCI Express bus. Rather than a full 4GB/s up and 4GB/s down, Intel offers only 3GB/s up and 1GB/s down, leaving the TurboCache architecture with this:

Graphics performance is going to be dependant on memory bandwidth. The majority of the bandwidth that the graphics card has available comes across the PCI Express bus, and with a limited setup such as this, the 6200 TuboCache will see a performance hit. NVIDIA has reported seeing something along the lines of a 20% performance improvement by moving from a 3-down, 1-up architecture to a 4-down, 4-up system. We have yet to verify these numbers, but it is not unreasonable to imagine this kind of impact.

The final thing to mention is system performance. NVIDIA only maps free idle pages from RAM using "an approved method for Windows XP." It doesn't lock anything down, and everything is allocated and freed on they fly. In order to support 128MB of framebuffer, 512MB of system RAM must be installed. With more system RAM, the card can map more framebuffer. NVIDIA chose 512MB as a minimum because PCI Express OEM systems are shipping with no less. Under normal 2D operation, as there is no need to store any more data than can fit in local memory, no extra system resources will be used. Thus, there should be no adverse system level performance impact from TurboCache.

43 Comments

View All Comments

Cybercat - Wednesday, December 15, 2004 - link

Basically, this is saying that this generation $90 part is no better than last generation $90 part. That's sad. I was hoping the performance leap of this generation would be felt through all segments of the market.mczak - Wednesday, December 15, 2004 - link

#12, IGP would indeed be interesting. In fact, TurboCache seems quite similar to ATI's Hypermemory/Sideport in their IGP.Cygni - Wednesday, December 15, 2004 - link

In other news, Nforce4 (2 months ago) and Xpress 200 (1 month ago) STLL arent on the market. Good lord. Talk about paper launches from ATI and Nvidia...ViRGE - Wednesday, December 15, 2004 - link

Ok, I have to admit I'm a bit confused here. Which cards did you exactly test, the 6200/16MB(32bit) and the 6200/32MB(64bit), or what? And what about the 6200/64MB, will it be a 64bit card, or a whole 128bit card?Cybercat - Wednesday, December 15, 2004 - link

What does 2 ROP stand for? :P *blush*PrinceGaz - Wednesday, December 15, 2004 - link

#15- I've got a Ti4200 but I'd never call it nVidia's best card. It is still the best card you can get in the bargain-bin price-range it is now sold at (other cards at a similar price are the FX5200 and Radeon 9200), though supplies of new Ti4200's are very limited these days.#12- Thanks Derek for answering my question about higher resolutions. As only the front-buffer needs to be in the onboard memory (because it's absolutely critical the memory accessed to send the signal to the display must always be available without any unpredictable delay), that means even the 16MB 6200 can run at any resolution, even 2560x1600 in theory though performance would probably be terrible as everything else would need to be in system memory.

housecat - Wednesday, December 15, 2004 - link

Another Nvidia innovation done right.MAValpha - Wednesday, December 15, 2004 - link

I would expect the 6200 to blow the Ti4200 out of the water, because the FX5700/Ultra is considered comparable to the GF4Ti. By comparison, many places are pitting the 6200 against the higher-end FX5900, and it holds its own.Even with the slower TurboCache, it should still be on par with a 4600, if not a little bit faster. Notice how the more powerful version beats an X300 across the board, a card derived from the 9600 series?

DigitalDivine - Wednesday, December 15, 2004 - link

how about raw perfomance numbers pitting the 6200 with nvidia's best graphics card imo, the ti4200.plk21 - Wednesday, December 15, 2004 - link

I like seing such an inexpensive part playing newer games, but I'd hardly call it real-world to pair a $75 video card with an Athlon64 4000+, which Newegg lists at $719 right now.It'd be interesting to see how these cards fare with a more realistic system for them to be paired with, i.e. a Sempron 2800+