IBM & Partners to Fight COVID-19 with Supercomputers, Forms COVID-19 HPC Consortium

by Anton Shilov on March 24, 2020 3:00 PM EST - Posted in

- Supercomputers

- HPC

- IBM

- Coronavirus

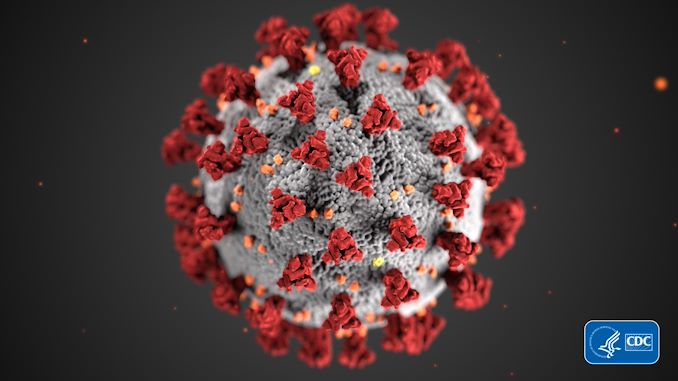

The SARS-CoV-2 coronavirus and the resulting COVID-19 pandemic have disrupted multiple business events and high-tech product launches in recent months, and they are likely to disrupt the world’s economy quite drastically. So in a bid to better understand the disease and develop treatments as well as potential cures, IBM this week established the COVID-19 High Performance Computing Consortium, which will enlist the United States' various public and private supercomputers and compute clusters to run research projects related to the disease.

Formed by IBM together with the White House Office of Science and Technology Policy, the U.S. Department of Energy, and others, the COVID-19 High Performance Computing Consortium pools 16 supercomputers that together offer over 330 PetaFLOPS of compute power from a combination of 775,000 CPU cores and 34,000 GPUs. The systems will be used to run research simulations in epidemiology, bioinformatics, and molecular modeling. All of these virtual experiments are meant to greatly speed up research into COVID-19 as well as possible treatments. Eventually, the knowledge obtained during this work could make it possible to develop vaccines and other treatments against the SARS-CoV-2 coronavirus itself. Meanwhile, the COVID-19 HPC Consortium will first prioritize projects that can have ‘the most immediate impact’. Researchers are advised to submit their proposals to the consortium via a special online portal.

So far, IBM’s Summit supercomputer at the Oak Ridge National Laboratory has enabled researchers from ORNL and the University of Tennessee to screen 8,000 compounds to find those most likely to bind to the main “spike” protein of the coronavirus, and thus prevent it from infecting host cells. To date, the scientists have recommended 77 promising small-molecule compounds that can now be evaluated experimentally.

The pool of supercomputers participating in IBM’s COVID-19 HPC Consortium currently includes machines operated by IBM, Lawrence Livermore National Lab (LLNL), Argonne National Lab (ANL), Oak Ridge National Laboratory (ORNL), Sandia National Laboratories (SNL), Los Alamos National Laboratory (LANL), the National Science Foundation (NSF), NASA, the Massachusetts Institute of Technology (MIT), Rensselaer Polytechnic Institute (RPI), and other technology companies (including Amazon, Google Cloud, and Microsoft).

Related Reading:

- Help Fight COVID-19 and Tom's Hardware: Join The Great Folding@Home Coronavirus Race

- Computex 2020 Moved to September In Response to Coronavirus Pandemic

- Apple’s WWDC Confirmed For June; Becomes Latest Conference To Go Digital-Only Due to Coronavirus

- Mobile World Congress 2020 Cancelled

Sources: IBM, COVID-19 High Performance Computing Consortium

18 Comments


sharath.naik - Wednesday, March 25, 2020 - link

The virus has a protein spike that it uses to get into the cells, disable it either by binding it to something else or breaking it ends the viruses' ability to attack a living human cell. They need supercomputers to brute force iterate through all known molecules that could bind with this spike. In short, what was done in the lab once as trial and error is now being done by computers once they identify all the molecules that can bind, the next step is to narrow down the least harmful ones for testing. This reduces the time to bring an antiviral drug much quicker to the market.

Santoval - Wednesday, March 25, 2020 - link

"The virus has a protein spike that it uses to get into the cells, disable it either by binding it to something else or breaking it ends the viruses' ability to attack a living human cell."

Unless (or, god forbid, until) the virus mutates and rearranges that "protein spike". It wouldn't even need to switch to a different protein, which would be extremely difficult due to very high complexity. Rearranging the structure of the protein spikes to a slightly different shape, so that the antiviral no longer binds to them, would do the trick. Which is why an antiviral is only a temporary solution.

catavalon21 - Wednesday, March 25, 2020 - link

There are numerous ways; more info is probably available on compute-intensive approaches to understanding this disease on Stanford University's Folding@Home site, where this is one of their priorities right now.

sharathc - Tuesday, March 24, 2020 - link

Waste of computing power 🤦🏻♂️

surt - Tuesday, March 24, 2020 - link

Care to elaborate on why you think so?

mandirabl - Wednesday, March 25, 2020 - link

He could use it for 360 Hz gaming for instance. /s

Guspaz - Wednesday, March 25, 2020 - link

While 775,000 CPU cores and 34,000 GPUs is a decently sized supercomputer, folding@home has seen a massive surge in popularity since they started working on COVID-19, and currently represents 4,630,510 CPU cores and 435,563 GPUs.

creed3020 - Wednesday, March 25, 2020 - link

My workstation is currently crunching on some units :)