The 64 Core Threadripper 3990X CPU Review: In The Midst Of Chaos, AMD Seeks Opportunity

by Dr. Ian Cutress & Gavin Bonshor on February 7, 2020 9:00 AM EST

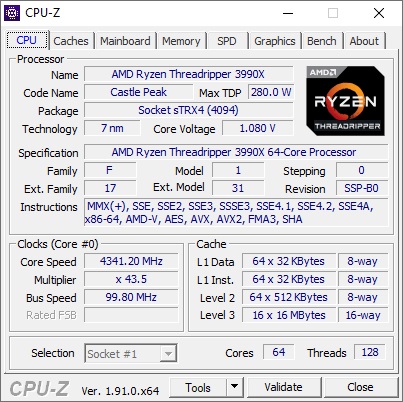

The recent renaissance of AMD as the performance choice in the high-end x86 market has been great for consumers, enabling a second offering at the top-end of the market. Where Intel offers 28 cores, AMD offers 24 and 32 core parts for the high-end desktop, and to rub salt into the wound, there is now a 64 core offering. This CPU isn’t cheap: the Ryzen Threadripper 3990X costs $3990 at retail, more than any other high-end desktop processor in history, but with it AMD aims to provide the best single socket consumer processor money can buy. We put it through its paces, and while it does obliterate the competition, there are a few issues with having this many cores in a single system.

I Want Performance, What Are My Options

The new AMD Ryzen Threadripper 3990X is a 64 core, 128 thread processor designed for the high-end desktop market. The CPU is a variant of AMD’s enterprise EPYC processor line, offering more frequency and a higher power budget, but fewer memory channels, fewer PCIe lanes, and a lower maximum memory capacity. The 3990X sits at the cusp between consumer and enterprise based on its features and cost, and it’s ultimately going to compete against both. On paper, users who don’t necessarily need all of the 64 core EPYC features might turn to the 3990X, whereas consumers who need more than 32 cores are going to look here as well. We’re going to test against both.

The TR3990X is part of the Threadripper 3000 family, and will partner its 32 core and 24 core brethren in being paired with new TRX40 motherboards. Despite the same socket as the previous generation Threadrippers, AMD broke motherboard compatibility this time around in order to support PCIe 4.0 from the CPU to the chipset, allowing for higher bandwidth configurations for extra controllers. We’ve covered all 12 of the TRX40 motherboards on the market in our motherboard and chipset overview, with a lot of models focusing on 3x PCIe 4.0 x16 support, multi-gigabit Ethernet onboard, Wi-Fi 6, and one even adding in Thunderbolt 3.

ASUS ROG Zenith II Alpha Motherboard, Built for 3990X

All the Threadripper 3000 family CPUs support a total of 64 PCIe 4.0 lanes from the CPU, and another 24 from the chipset (although the CPU and the chipset each use eight of their lanes to communicate with each other). There are four memory channels, supporting up to DDR4-3200 memory, and each CPU has a rated TDP of 280 W. We tested the 3970X and 3960X when those CPUs launched; you can read the review here.
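As a quick worked example of that lane budget, the sketch below subtracts the CPU-to-chipset link from both sides. It assumes our reading of the figures above, namely that the eight link lanes come out of the 64 CPU lanes and out of the 24 chipset lanes; the function and constant names are purely illustrative.

```python
# Lane counts quoted in the text above.
CPU_LANES = 64
CHIPSET_LANES = 24
# Assumption: each side spends eight of its own lanes on the CPU<->chipset link.
LINK_LANES_PER_SIDE = 8


def usable_lanes(cpu=CPU_LANES, chipset=CHIPSET_LANES, link=LINK_LANES_PER_SIDE):
    """Return (cpu_usable, chipset_usable, total) after removing the link lanes."""
    cpu_usable = cpu - link
    chipset_usable = chipset - link
    return cpu_usable, chipset_usable, cpu_usable + chipset_usable


if __name__ == "__main__":
    cpu_free, chipset_free, total = usable_lanes()
    print(f"CPU: {cpu_free}, chipset: {chipset_free}, total usable: {total}")
```

Under that assumption, 56 lanes remain on the CPU and 16 on the chipset for slots and controllers, 72 in total out of the 88 listed.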

AMD Zen 2 Socketed CPUs

| AnandTech | Cores/Threads | Base/Turbo (GHz) | L3 | DRAM 1DPC | PCIe 4.0 Lanes | TDP | SRP |
|---|---|---|---|---|---|---|---|
| Third Generation Threadripper | | | | | | | |
| TR 3990X | 64 / 128 | 2.9 / 4.3 | 256 MB | 4x3200 | 64 | 280 W | $3990 |
| TR 3970X | 32 / 64 | 3.7 / 4.5 | 128 MB | 4x3200 | 64 | 280 W | $1999 |
| TR 3960X | 24 / 48 | 3.8 / 4.5 | 128 MB | 4x3200 | 64 | 280 W | $1399 |
| Ryzen 3000 | | | | | | | |
| Ryzen 9 3950X | 16 / 32 | 3.5 / 4.7 | 64 MB | 2x3200 | 24 | 105 W | $749 |

The new CPU, the 3990X, comes at the hefty price of $1 per 'X' (because it's called the 3990X and costs $3990, get it?). With 64 cores it has a rated base frequency of 2.9 GHz, and a turbo of 4.3 GHz. In our testing, we saw the single core frequency go as high as 4.35 GHz, above the rated turbo, and the all-core turbo around 3.45 GHz.

Who is This CPU Aimed At?

Not everyone needs 64 cores, and AMD has been very clear about this in its messaging. Even though the 3990X is part of AMD’s high-end desktop line, its core count and price break new ground, so it goes beyond the usual high-end desktop and reaches into prosumer and server territory. The intended buyers are users (and companies) that can amortize and justify the cost of the hardware because it enables them to complete projects (and therefore contracts) faster. For a user that needs to create something, doing 100 prototypes a week rather than 25 makes for a far more productive workflow, and it’s this sort of user AMD is going after.

Render farms that run on CPUs are going to be a key example. AMD has already promoted the fact that several animation and VFX studios that produce effects for blockbuster films have been running engineering samples of the 64-core Threadripper processors for titles already in the market. Then there are video game production houses and architects who want to rapidly prototype demo models and shorten the time to create each prototype, something that might not be possible on a GPU (and isn’t AVX-512 accelerated).

The 3990X with 64 cores is $3990, double the cost of the 3970X with its 32 cores at $1999. Doubling the cores is an obvious step up, however there isn’t an increase in memory bandwidth or PCIe lanes, so users need to be sure that the CPU is the bottleneck of their workload.

AMD TR3

| TR3 3990X | AnandTech | TR3 3970X |
|---|---|---|
| $3990 | SEP | $1999 |
| 64 / 128 | Cores/Threads | 32 / 64 |
| 2.9 GHz | Base Frequency | 3.7 GHz |
| 3.45 GHz | All-Core Freq (As Tested) | 3.81 GHz |
| 4.3 GHz | Single-Core Frequency | 4.5 GHz |
| 64 | PCIe 4.0 Lanes | 64 |
| 8 x DDR4-3200 | DDR4 Support | 8 x DDR4-3200 |
| 256 GB / 512 GB | Max DDR4 Capacity | 256 GB / 512 GB |
| 280 W | TDP | 280 W |
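To put rough numbers on the bottleneck question, here is a minimal sketch using the figures from the table above. The `Cpu` class and its method names are our own illustrative choices. The bandwidth figure is a theoretical peak that assumes four channels of DDR4-3200 at 8 bytes per transfer, and cores multiplied by all-core frequency is only a crude proxy for throughput, not a benchmark result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cpu:
    name: str
    price_usd: int
    cores: int
    all_core_ghz: float  # as-tested all-core frequency from the table
    mem_channels: int
    mem_mts: int         # DDR4 transfer rate in MT/s

    def peak_mem_gbs(self) -> float:
        # Assumption: 64-bit channel, so 8 bytes per transfer; MT/s * 8 / 1000 = GB/s.
        return self.mem_channels * self.mem_mts * 8 / 1000

    def mem_gbs_per_core(self) -> float:
        return self.peak_mem_gbs() / self.cores

    def aggregate_ghz(self) -> float:
        # Crude throughput proxy: cores times all-core clock.
        return self.cores * self.all_core_ghz


TR_3990X = Cpu("TR 3990X", 3990, 64, 3.45, 4, 3200)
TR_3970X = Cpu("TR 3970X", 1999, 32, 3.81, 4, 3200)

if __name__ == "__main__":
    for cpu in (TR_3990X, TR_3970X):
        print(
            f"{cpu.name}: {cpu.aggregate_ghz():.1f} core-GHz, "
            f"{cpu.peak_mem_gbs():.1f} GB/s peak, "
            f"{cpu.mem_gbs_per_core():.2f} GB/s per core"
        )
    print(f"price ratio: {TR_3990X.price_usd / TR_3970X.price_usd:.2f}x")
    print(f"core-GHz ratio: {TR_3990X.aggregate_ghz() / TR_3970X.aggregate_ghz():.2f}x")
```

Both chips share the same theoretical 102.4 GB/s of memory bandwidth, so the per-core share halves from 3.2 GB/s on the 3970X to 1.6 GB/s on the 3990X, which is why bandwidth-bound workloads may not see the full benefit of the extra cores.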

If we put the 3990X against the EPYC 7702P, the 64-core single socket offering on the enterprise side, then the 3990X has a higher thermal window (280W vs 200W) to enable higher frequencies (2.9/4.3 vs 2.0/3.35) and is cheaper ($3990 vs $4425), but it only has half the memory channels (only 4 compared to 8), half the PCIe lanes (only 64 compared to 128), and no registered memory support. The question here is whether the workload the user is looking at requires more memory/PCIe for the EPYC, or more raw CPU performance for the Threadripper.

Then there’s the competition against the Intel processors. In the high-end desktop market, Intel has nothing that competes, with its top product at 18 cores. It does offer a 28-core workstation part, the W-3175X, which is unlocked, with a TDP of 255W, six memory channels, and 44 PCIe 3.0 lanes, at a high cost of $2999. Then there are the server CPUs: if we want parity with the 64 cores of the 3990X, we either need to use a single Xeon Platinum 9282 with 56 cores, which isn’t available without a big contract and has an unknown price ($25k+?), or dual Xeon Platinum 8280s, with two lots of 28 cores, at a tray price of $20018.

64-core Battle

| 1 x TR3 3990X | AnandTech | 2 x Xeon 8280 |
|---|---|---|
| $3990 | Price | $20018 |
| 64 / 128 | Cores/Threads | 56 / 112 |
| 2.9 GHz | Base Frequency | 2.7 GHz |
| 3.45 GHz | All-Core Freq | 3.30 GHz |
| 4.3 GHz | Single-Core Freq | 4.0 GHz |
| 4.0 x64 | PCIe Lanes | 3.0 x96 |
| 8 x DDR4-3200 | DDR4 Support | 12 x DDR4-2933 |
| 256 GB / 512 GB | Max DDR4 Capacity | 1536 GB |
| 280 W | TDP | 410 W |
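For a sense of scale, the prices quoted above can be reduced to a simple price-per-core figure. The sketch below is only arithmetic on the list and tray prices mentioned in this article; the `SYSTEMS` list and helper names are illustrative, and price per core says nothing about per-core performance, memory, or platform cost.

```python
# (label, total price in USD, total cores), as quoted in the text and table above.
SYSTEMS = [
    ("1 x TR 3990X", 3990, 64),
    ("1 x EPYC 7702P", 4425, 64),
    ("1 x Xeon W-3175X", 2999, 28),
    ("2 x Xeon 8280", 20018, 56),
]


def price_per_core(price_usd, cores):
    """Dollars per physical core."""
    return price_usd / cores


def ranked(systems=SYSTEMS):
    """Return (label, dollars_per_core) pairs, cheapest per core first."""
    rows = [(label, price_per_core(price, cores)) for label, price, cores in systems]
    return sorted(rows, key=lambda row: row[1])


if __name__ == "__main__":
    for label, dollars in ranked():
        print(f"{label:18s} ${dollars:7.2f} per core")
```

On these numbers the 3990X works out to roughly $62 per core, against roughly $357 per core for the dual Xeon 8280 configuration.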

We’re testing against the dual 8280s and the W-3175X as well. Please note our 2x8280 results are from an older review, so they haven’t been run on some of our newer benchmarks.

This Review

In this review, we want to cover the Threadripper 3990X in terms of frequency, temperature, power, and performance. There’s a big caveat we have to discuss in terms of operating system choice, which we’ll go into in the next few pages. But our main comparison points are dependent on whether you are a consumer looking at a faster desktop, or an enterprise user looking at an alternative server replacement. We’ll cover both angles here.

279 Comments


GreenReaper - Saturday, February 8, 2020

64 sockets, 64 cores, 64 threads per CPU - x64 was never intended to surmount these limits. Heck, affinity groups were only introduced in Windows XP and Server 2003.

Unfortunately they hardcoded the 64-CPU limit in by using a DWORD and had to add Processor Groups as a hack added in Win7/2008 R2 for the sake of a stable kernel API.

Linux's sched_setaffinity() had the foresight to use a length parameter and a pointer: https://www.linuxjournal.com/article/6799

I compile my kernels to support a specific number of CPUs, as there are costs to supporting more, albeit relatively small ones (it assumes that you might hot-add them).

Gonemad - Friday, February 7, 2020

Seeing a $4k processor clubbing a $20k processor to death and take its lunch (in more than one metric) is priceless.

If you know what you need, you can save 15 to 16 grand building an AMD machine, and that's incredible.

It shows how greedy and lazy Intel has become.

It may not be the best chip for, say, a gaming machine, but it can beat a 20-grand intel setup, and that ensures a spot for the chip, not being useless.

Khenglish - Friday, February 7, 2020

I doubt that really anyone would practically want to do this, but in Windows 10 if you disable the GPU driver, games and benchmarks will be fully CPU software rendered. I'm curious how this 64 core beast performs as a GPU!

Hulk - Friday, February 7, 2020

Not very well. Modern GPU's have thousands of specialized processors.

Kevin G - Friday, February 7, 2020

The shaders themselves are remarkably programmable. The only thing really missing from them and more traditional CPU's in terms of capability is how they handle interrupts for IO. Otherwise they'd be functionally complete. Granted the per-thread performance would be abysmal compared to modern CPUs which are fully pipelined, OoO monsters. One other difference is that since GPU tasks are embarrassingly parallel by nature, these shaders have hardware thread management to quickly switch between them and partition resources to achieve some fairly high utilization rates.

The real specialization is in the fixed function units for their TMUs and ROPs.

willis936 - Friday, February 7, 2020

Will they really? I don’t think graphics APIs fall back on software rendering for most essential features.

hansmuff - Friday, February 7, 2020

That is incorrect. Software rendering is never done by Windows just because you don't have rendering hardware. Games no longer come with software renderers like they used to many, many moons ago.

Khenglish - Friday, February 7, 2020

I love how everyone had to jump in and said I was wrong without spending 30 seconds to disable their GPU driver and try it themselves and finding they are wrong.

There's a lot of issues with the Win10 software renderer (full screen mode mostly broken, only DX11 seems supported), but it does work. My Ivy Bridge gets fully loaded at 70W+ just to pull off 7 fps at 640x480 in Unigine Heaven, but this is something you can do.

extide - Friday, February 7, 2020

No -- the Windows UI will drop back to software mode but games have not included software renderers for ~two decades.

FunBunny2 - Friday, February 7, 2020

" games have not included software renderers for ~two decades."

which is a deja vu experience: in the beginning DOS was a nice, benign, control program. then Lotus discovered that the only way to run 1-2-3 faster than molasses uphill in winter was to fiddle the hardware directly, which DOS was happy to let it do. it didn't take long for the evil folks to discover that they could too, and virus was born. one has to wonder how much exposure these latest GPU hardware present?