NVIDIA SLI Performance Preview with MSI's nForce4 SLI Motherboard

by Anand Lal Shimpi on October 29, 2004 5:06 AM EST - Posted in GPUs

Setting up SLI

NVIDIA's nForce4 SLI reference design calls for a slot on the motherboard that holds a small selector card, which determines how many PCI Express lanes go to the second x16 slot. Remember that although there are two x16 slots on the motherboard, at most 16 lanes in total are allocated to them, meaning that in SLI mode each slot is electrically only a x8 behind a physical x16 connector. While a x8 connection gives each slot less bandwidth than a full x16 implementation, the real-world performance impact is absolutely nothing. In fact, gaming performance doesn't really change even down to a x4 configuration, and the performance impact of even a x1 configuration is negligible.
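To put numbers on the bandwidth difference, here is a minimal sketch of the peak link bandwidth at each width. It assumes first-generation PCI Express signaling (2.5 GT/s per lane with 8b/10b encoding), which the article does not spell out; the function names are ours.

```python
# Assumption: first-generation PCI Express, 2.5 GT/s per lane.
# 8b/10b encoding means 8 of every 10 bits on the wire carry payload.
TRANSFERS_PER_SEC = 2_500_000_000


def lane_bandwidth_mb_s() -> float:
    """Peak payload bandwidth of one lane in one direction, in MB/s."""
    payload_bits_per_sec = TRANSFERS_PER_SEC * 8 // 10
    return payload_bits_per_sec / 8 / 1e6


def link_bandwidth_mb_s(lanes: int) -> float:
    """Peak payload bandwidth of a link with the given lane count."""
    return lanes * lane_bandwidth_mb_s()


for lanes in (16, 8, 4, 1):
    print(f"x{lanes}: {link_bandwidth_mb_s(lanes):.0f} MB/s per direction")
```

Under these assumptions a x16 link peaks at 4000 MB/s per direction and a x8 link at 2000 MB/s. These are theoretical ceilings, not measured throughput.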

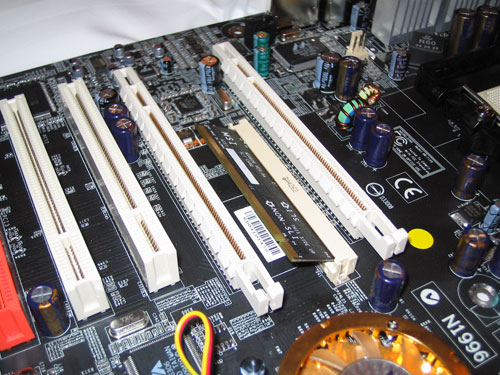

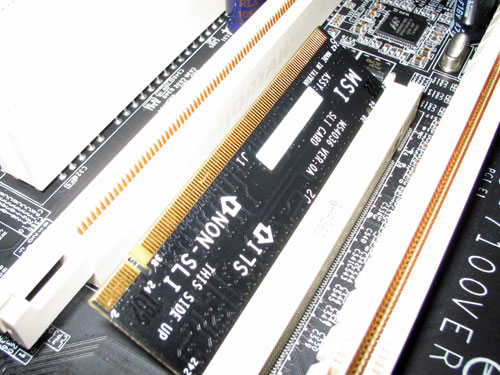

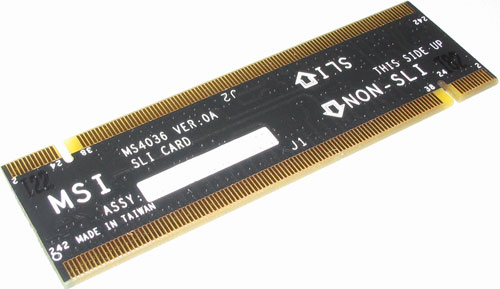

The SLI card slot looks much like a SO-DIMM connector:

The card itself has two ways of being inserted; if installed in one direction the card will configure the PCI Express lanes so that only one of the slots is a x16. In the other direction, the 16 PCI Express lanes are split evenly between the two x16 slots. You can run a single graphics card in either mode, but in order to run a pair of cards in SLI mode you need to enable the latter configuration. There are ways around NVIDIA's card-based design to reconfigure the PCI Express lanes, but none of them to date are as elegant, as they require a long row of jumpers.
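The two orientations of the selector card can be summarized as a small model. This is only an illustrative sketch: the mode names and helper functions are hypothetical, and it models only what the text states (the lane count of the primary slot and whether SLI can be enabled).

```python
from enum import Enum

TOTAL_GRAPHICS_LANES = 16  # lanes shared by the two x16 slots


class SelectorMode(Enum):
    """Hypothetical names for the two orientations of the selector card."""
    SINGLE_X16 = "single"  # all 16 lanes go to one slot
    DUAL_X8 = "dual"       # lanes split evenly between both slots


def primary_slot_lanes(mode: SelectorMode) -> int:
    """Electrical lane count of the primary graphics slot in each mode."""
    if mode is SelectorMode.SINGLE_X16:
        return TOTAL_GRAPHICS_LANES
    return TOTAL_GRAPHICS_LANES // 2


def can_enable_sli(mode: SelectorMode, cards_installed: int) -> bool:
    """SLI needs two cards and the evenly split lane configuration."""
    return mode is SelectorMode.DUAL_X8 and cards_installed == 2
```

A single card works in either mode, so only the SLI check depends on the orientation.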

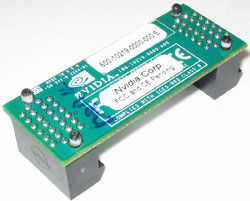

With two cards installed, a bridge PCB is used to connect the golden fingers atop both of the cards. Only GeForce 6600GT and higher cards will feature the SLI enabling golden fingers, although we hypothesize that nothing has been done to disable it on the lower-end GPUs other than a non-accommodating PCB layout. With a little bit of engineering effort we believe that the video card manufacturers could come up with a board design to enable SLI on both 6200 and 6600 non-GT cards. Although we've talked to manufacturers about doing this, we have to wait and see what the results are from their experiments.

As far as board requirements go, the main thing to make sure of is that both of your GPUs are identical. While clock speeds don't have to be the same, NVIDIA's driver will set the clocks on both boards to the lowest common denominator. It is not recommended that you combine different GPU types (e.g. a 6600GT and a 6800GT); doing so may still be allowed, but it can produce some rather strange results in certain cases.
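The "lowest common denominator" rule amounts to taking the minimum of each clock across the two boards. The sketch below assumes core and memory clocks are matched independently, which the text does not confirm; the `Clocks` type and the example frequencies are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clocks:
    core_mhz: int
    memory_mhz: int


def sli_clocks(a: Clocks, b: Clocks) -> Clocks:
    """Clocks both boards would run at: the slower value in each domain.

    Assumption: core and memory are matched independently.
    """
    return Clocks(
        core_mhz=min(a.core_mhz, b.core_mhz),
        memory_mhz=min(a.memory_mhz, b.memory_mhz),
    )


# Illustrative values: a stock board paired with a factory-overclocked one.
stock = Clocks(core_mhz=500, memory_mhz=1000)
overclocked = Clocks(core_mhz=525, memory_mhz=1050)
print(sli_clocks(stock, overclocked))
```

In this example the pair runs at the stock board's clocks, so the extra headroom on the faster board goes unused.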

You only need to connect a monitor to the first PCI Express card; despite the fact that you have two graphics cards, only the video outputs on the first card will work, so anyone wanting to have a quad-display setup and SLI is somewhat out of luck. I say somewhat because if you toggle off SLI mode (a driver option), the two cards work independently and you could have a 4-head display configuration. But with SLI mode enabled, the outputs on the second card go blank. While that's not too inconvenient, you currently need to reboot between SLI mode changes in software, which could get annoying for those who only want to enable SLI while in games and use four display outputs while not gaming.

We used a beta version of NVIDIA's 66.75 drivers with SLI support enabled for our benchmarks. The 66.75 driver includes a configuration panel for Multi-GPU as you can see below:

Clicking the check box requires a restart to enable (or disable) SLI, but after you've rebooted everything is good to go.

We mentioned before that the driver is very important to SLI performance. The reason is that NVIDIA has implemented several SLI algorithms in its driver to determine how to split up the rendering between the graphics cards depending on the application and load. For example, in some games it may make sense for one card to handle a certain percentage of the screen and the other card to handle the remaining percentage, while in others it may make sense for each card to render a separate frame. The driver will alternate between these algorithms, or even disable SLI altogether, depending on the game. The other important thing to remember is that the driver is also responsible for the rendering split between the GPUs; each GPU rendering 50% of the scene doesn't always work out to an evenly split workload, so the driver has to estimate what rendering ratio would put an equal load on both GPUs.
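The two approaches described above can be sketched in a few lines. This is not NVIDIA's algorithm, whose details are not public in this article; it is a hypothetical heuristic that assigns whole frames round-robin in one mode and, in the other, nudges the screen split toward the ratio that would have equalized the two GPUs' render times on the previous frame. All names and the `gain` parameter are our own.

```python
def afr_gpu_for_frame(frame_index: int, gpu_count: int = 2) -> int:
    """Alternate-frame style: each GPU renders whole frames in turn."""
    return frame_index % gpu_count


def rebalance_split(split: float, time_gpu0: float, time_gpu1: float,
                    gain: float = 0.5) -> float:
    """Split-frame style: return the new fraction of the screen for GPU 0.

    split      -- fraction of the screen GPU 0 rendered last frame (0..1)
    time_gpu0  -- time GPU 0 took for its part of the last frame
    time_gpu1  -- time GPU 1 took for the remainder
    gain       -- how far to move toward the estimated balanced split
    """
    # Estimate the cost per unit of screen area in each region.
    cost0 = time_gpu0 / split
    cost1 = time_gpu1 / (1.0 - split)
    if cost0 + cost1 == 0:
        return split  # nothing measured, keep the current split

    # The split s that equalizes s * cost0 and (1 - s) * cost1.
    target = cost1 / (cost0 + cost1)
    new_split = split + gain * (target - split)

    # Clamp so neither GPU is ever given an empty region.
    return min(max(new_split, 0.05), 0.95)


# GPU 0's half of the screen was cheap, so it should take a larger share.
print(rebalance_split(0.5, time_gpu0=6.0, time_gpu1=12.0))
```

The damping factor is there because scene complexity shifts from frame to frame; jumping straight to the estimated ratio could oscillate when the expensive part of the scene moves across the split line.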

84 Comments


Sokaku - Friday, October 29, 2004 - link

#12 I'm with you on that one, however we did see how it went with 3dfx's SLI solution.

I had a voodoo2 and when it came to the point where I wanted more power, I could have bought another voodoo2, however the graphics cards available at that point outperformed a dual voodoo2 configuration considerably, so as an upgrade path, it was never feasible to do so.

I'm afraid that if I should buy a 6800Ultra, when the time comes, I would not buy another one, because at that point, we'll have one 8600Ultra way outperforming dual 6800ultras...

#13 by lebe0024: I assumed that you've been banned 23 times from this forum and I sure hope you'll be banned for the 24th time.

lebe0024 - Friday, October 29, 2004 - link

Shut your pie hole #11

xsilver - Friday, October 29, 2004 - link

#11 .... how bout this -- "if they could they would"

nvidia is here to make money after all..... a single card solution should certainly be cheaper to produce than a dual one, but I don't think that's feasible right now so that's why it's not made

Sokaku - Friday, October 29, 2004 - link

I find it horrible that NVIDIA is taking up SLI again. "Why?" you probably wonder... Well, in order to be able to gain anything from SLI, NVIDIA has to overpower the GPU considerably when it comes to geometry handling. In fact, the geometry engine must be able to outperform the pixel renderer by a factor of 2, should the customer happen to use this card in an SLI configuration. This means that people who do NOT want an SLI configuration will have to pay for a geometry engine that is way more powerful than needed.

Think of the additional cost of making an SLI configuration, you need a motherboard prepared for it, you need dual graphics cards, gpu and all.. And what problem does this solve?

Well, basically all it does is give you twice the memory bandwidth and pixel render capacity.

This could be solved way more cost-effectively by doubling the data bus width and keeping the solution on one card. Also, this way the geometry engine would be dimensioned to exactly match the capacity of the renderer.

This is a step back in innovation and not a step forward.

smn198 - Friday, October 29, 2004 - link

...meaning different RAM manufacturers/speeds on the graphics cards.

smn198 - Friday, October 29, 2004 - link

Anyone heard if you can use differing versions of the same board, e.g. one 6600GT from Asus and the other from MSI? Anyone heard of any tests with this and different RAM?

(I think the dual 6600GT upgrade path makes up for the lack of SoundStorm. Hope they hurry up and make their add-in SS boards tho!)

TimTheEnchanter25 - Friday, October 29, 2004 - link

Any word on when PCI-E versions of the 6800 GT will start showing up in stores? I'm not waiting for SLI boards, but I'm getting worried that the 6800GTs won't be out by the time nForce4 boards are in stores.

ballero - Friday, October 29, 2004 - link

How about testing the last patch by crytek with hdr enabled?

ballero - Friday, October 29, 2004 - link

Pete84 - Friday, October 29, 2004 - link

#4 Why would you want SLI for the 6200? It is the lowest end card - think 9200. No real use, other than Civ III and Firefox.