NVIDIA SLI Performance Preview with MSI's nForce4 SLI Motherboard

by Anand Lal Shimpi on October 29, 2004 5:06 AM EST - Posted in GPUs

Setting up SLI

NVIDIA's nForce4 SLI reference design calls for a slot to be placed on the motherboard that determines how many PCI Express lanes go to the second x16 slot. Remember that despite the fact that there are two x16 slots on the motherboard, there are still only 16 total lanes allocated to them at most - meaning that with both slots in use, each slot is electrically still only a x8, but with a physical x16 connector. While having a x8 bus connection means that the slots have less bandwidth than a full x16 implementation, the real world performance impact is absolutely nothing. In fact, gaming performance doesn't really change down to even a x4 configuration; even the performance impact of a x1 configuration is negligible.
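To put the x16 versus x8 difference in numbers, here is a minimal sketch of the theoretical link bandwidth. It assumes first-generation PCI Express signaling at 250 MB/s per lane in each direction, a figure that is not stated in this article; the function name `link_bandwidth_mb` is ours and purely illustrative.

```python
# Theoretical one-way bandwidth of a PCI Express link.
# Assumption (not from the article): first-generation PCI Express,
# 250 MB/s per lane per direction.
MB_PER_LANE = 250

def link_bandwidth_mb(lanes: int) -> int:
    """Return the theoretical one-way bandwidth in MB/s for a link with `lanes` lanes."""
    if lanes not in (1, 2, 4, 8, 16, 32):
        raise ValueError(f"unsupported link width: x{lanes}")
    return lanes * MB_PER_LANE

# Compare the link widths discussed above.
for lanes in (16, 8, 4, 1):
    print(f"x{lanes}: {link_bandwidth_mb(lanes)} MB/s per direction")
```

These are peak figures only; they say nothing about how much of that bandwidth a game actually uses, which is why halving the link width can leave frame rates unchanged.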

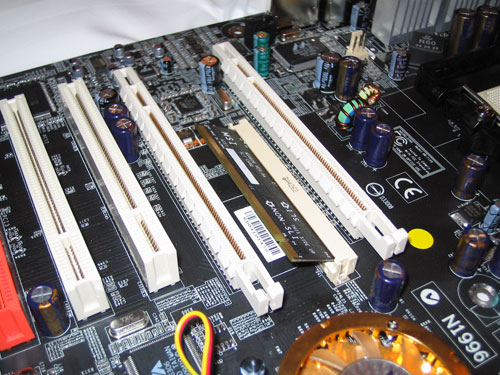

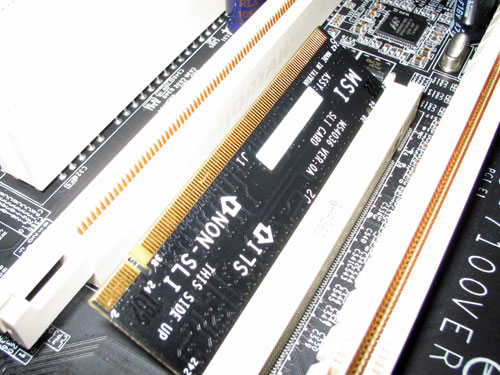

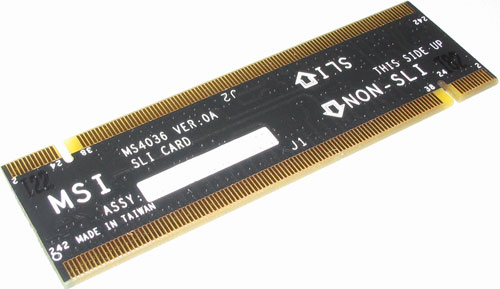

The SLI card slot looks much like a SO-DIMM connector:

The card itself has two ways of being inserted; if installed in one direction the card will configure the PCI Express lanes so that only one of the slots is a x16. In the other direction, the 16 PCI Express lanes are split evenly between the two x16 slots. You can run a single graphics card in either mode, but in order to run a pair of cards in SLI mode you need to enable the latter configuration. There are ways around NVIDIA's card-based design to reconfigure the PCI Express lanes, but none of them to date are as elegant, as they require a long row of jumpers.

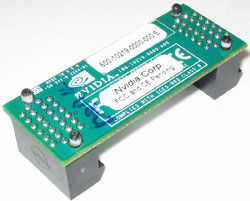

With two cards installed, a bridge PCB is used to connect the golden fingers atop both of the cards. Only GeForce 6600GT and higher cards will feature the SLI-enabling golden fingers, although we hypothesize that nothing has been done to disable SLI on the lower-end GPUs other than a non-accommodating PCB layout. With a little bit of engineering effort we believe that the video card manufacturers could come up with a board design to enable SLI on both 6200 and 6600 non-GT cards. Although we've talked to manufacturers about doing this, we have to wait and see what the results are from their experiments.

As far as board requirements go, the main thing to make sure of is that both of your GPUs are identical. While clock speeds don't have to be the same, NVIDIA's driver will set the clocks on both boards to the lowest common denominator. It is not recommended that you combine different GPU types (e.g. a 6600GT and a 6800GT); doing so may still be allowed, but it can produce some rather strange results in certain cases.
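The clock-matching rule can be sketched as follows. This is our reading of "lowest common denominator" as "the lower of the two clocks", not NVIDIA's driver code; the `Card` type, the `sli_clocks` function, and the clock values in the usage example are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class Card:
    """Hypothetical description of one graphics card."""
    gpu: str         # GPU type, e.g. "6600GT"
    core_mhz: int    # core clock
    memory_mhz: int  # memory clock

def sli_clocks(a: Card, b: Card) -> Tuple[int, int]:
    """Return the (core, memory) clocks both cards would run at as a pair.

    Assumption: each clock is set to the lower of the two cards' values.
    """
    if a.gpu != b.gpu:
        # Mixing GPU types may be allowed in practice, but it is not
        # recommended, so this sketch simply refuses.
        raise ValueError("both cards should use the same GPU type")
    return min(a.core_mhz, b.core_mhz), min(a.memory_mhz, b.memory_mhz)

# Illustrative values only: one stock card and one factory-overclocked card.
stock = Card("6600GT", core_mhz=500, memory_mhz=1000)
overclocked = Card("6600GT", core_mhz=525, memory_mhz=1050)
print(sli_clocks(stock, overclocked))
```

In other words, pairing a factory-overclocked board with a stock one buys you nothing in SLI mode under this reading; the faster card is simply slowed down to match.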

You only need to connect a monitor to the first PCI Express card; despite the fact that you have two graphics cards, only the video outputs on the first card will work, so anyone wanting to have a quad-display and SLI is somewhat out of luck. I say somewhat because if you toggle off SLI mode (a driver option), then the two cards work independently and you could have a 4-head display configuration. But with SLI mode enabled, the outputs on the second card go blank. While that's not too inconvenient, currently you need to reboot between SLI mode changes in software, which could get annoying for those who only want to enable SLI while in games and use 4-display outputs while not gaming.

We used a beta version of NVIDIA's 66.75 drivers with SLI support enabled for our benchmarks. The 66.75 driver includes a configuration panel for Multi-GPU as you can see below:

Clicking the check box requires a restart to enable (or disable) SLI, but after you've rebooted everything is good to go.

We mentioned before that the driver is very important in SLI performance. The reason behind this is that NVIDIA has implemented several SLI algorithms in their SLI driver to determine how to split up the rendering between the graphics cards depending on the application and load. For example, in some games it may make sense for one card to handle a certain percentage of the screen and the other card to handle the remaining percentage, while in others it may make sense for each card to render a separate frame. The driver will alternate between these algorithms, and can even disable SLI altogether, depending on the game. The other important thing to remember is that the driver is also responsible for the rendering split between the GPUs; each GPU rendering 50% of the scene doesn't always work out to be an evenly split workload between the two, so the driver has to best estimate what rendering ratio would put an equal load on both GPUs.
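To illustrate why a 50/50 split is not always an even workload, here is a minimal sketch of one way a split ratio could be re-estimated from the previous frame's render times. This is not NVIDIA's algorithm, which is not described here; the function `rebalance_split` and the assumption that render time scales linearly with each GPU's share of the screen are ours.

```python
def rebalance_split(split: float, time_first_ms: float, time_second_ms: float) -> float:
    """Estimate the screen fraction for the first GPU that equalizes render times.

    `split` is the fraction of the screen the first GPU rendered last frame;
    the second GPU rendered the rest. Assumption: each GPU's render time is
    proportional to the fraction of the screen it is given.
    """
    if not 0.0 < split < 1.0:
        raise ValueError("split must be strictly between 0 and 1")
    if time_first_ms <= 0 or time_second_ms <= 0:
        raise ValueError("render times must be positive")
    # Cost of rendering the whole screen, extrapolated from each region.
    cost_first = time_first_ms / split
    cost_second = time_second_ms / (1.0 - split)
    # Solve new_split * cost_first == (1 - new_split) * cost_second.
    return cost_second / (cost_first + cost_second)

# With an even split, the first region took 6 ms and the second 10 ms,
# so the first GPU should be handed a larger share of the next frame.
print(rebalance_split(0.5, 6.0, 10.0))
```

A real driver would presumably smooth this estimate over several frames rather than react to a single measurement, since scene complexity can shift from one frame to the next.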

84 Comments


KraftyOne - Friday, October 29, 2004

Thanks bob661! Anyone have any info on AGP x700's? If ATI gets them out first, they get my money... :-)

HardwareD00d - Friday, October 29, 2004

Those benchmarks are amazing! I wasn't going to shell out the dough for SLI, but now I'm going to reconsider.

I was glad to read that the 2 PCIe slots being only 8x will not really be a performance issue. A lot of people are down on nForce4 because it won't do 2 16x slots. F***ing A nVidia Nice JOB! Now if only NF4 had soundstorm...

bob661 - Friday, October 29, 2004

See http://www.theinquirer.net/?article=19340 for 6600GT AGP availability.

KraftyOne - Friday, October 29, 2004

Yes, this is all fine and great, but when will the x700 and/or 6600GT be available for AGP ports for those of us who can't afford all the latest and greatest?

HardwareD00d - Friday, October 29, 2004

I have only two words to say: Anand Rules!

Nyati13 - Friday, October 29, 2004

On page one it says the SLI slots are electrically x8 slots instead of x16. That is not correct, they are x8 data slots, but will still provide the full x16 electrical power needed to the graphics card.

Jeremy

mongoosesRawesome - Friday, October 29, 2004

What PSU were you using for these tests?

suave3747 - Friday, October 29, 2004

I would expect that a 6800 Ultra Extreme SLI setup will not be outdone by a new nVidia card until at least a year from now. And at that time, when you bought the second one, it would push you from mid-range back towards the top for much less than that new card would be at the time.

This is brilliant by nVidia, because:

A. It allows you a way to buy half of the GPU setup that you want now, and half later. That's a great plan for a budget-oriented consumer. It will make GPU purchases a lot easier for parents to swallow on holidays. It allows for someone to give them $1200 for a ridiculously powerful GPU setup.

B. It will keep their high end cards of today selling well all the way into next year. The way the market is now, people want a new-gen card. They don't want a 5950 right now, they'd rather buy a 6600. This will keep 6800 series and 6600 GTs selling all the way through 2005.

Subhuman25 - Friday, October 29, 2004

2 Video cards - No thanks.

Price factor, heat factor, noise factor, space factor, extra & new technology factor (guinea pig)

No thanks to this avenue.

I feel sorry for the poor saps who buy into this crap and spend $800+ alone for 2 x 6800GT's only to be outperformed in a year by a newer generation single card setup.

I highly doubt this will ever become a trend. If you think so, then ask how many people have dual CPU systems.

jkostans - Friday, October 29, 2004

Hmm let's see, I can get a 6800 GT for less than two 6600GTs, and the 6800GT is faster..... Let me think about this, no SLI for me! MAYBE if you want a mid-range card now, then get the 6600GT and upgrade when another 6600GT is very cheap and have the equivalent of a low-end card then? I still think it's worth dropping the extra cash on the 6800 GT. But I guess when it came time to upgrade, I could just add another 6800 GT? I kinda doubt it.