NVIDIA SLI Performance Preview with MSI's nForce4 SLI Motherboard

by Anand Lal Shimpi on October 29, 2004 5:06 AM EST. Posted in GPUs

Setting up SLI

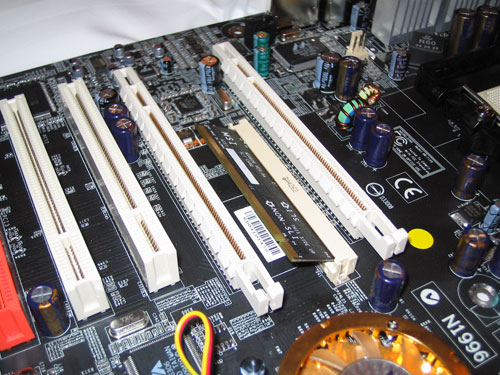

NVIDIA's nForce4 SLI reference design calls for a slot on the motherboard that determines how many PCI Express lanes go to the second x16 slot. Remember that although there are two x16 slots on the motherboard, at most 16 lanes in total are allocated to them, meaning that each slot is electrically still only a x8 when the lanes are split, just with a physical x16 connector. While a x8 connection gives each slot less bandwidth than a full x16 implementation, the real-world performance impact is effectively nil. In fact, gaming performance doesn't really change even down to a x4 configuration, and the performance impact of even a x1 configuration is negligible.
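For a sense of scale, the bandwidth arithmetic behind the x16 versus x8 comparison can be sketched as below. This is a back-of-the-envelope calculation, not a measurement from our testing: it assumes first-generation PCI Express signalling (2.5 GT/s per lane with 8b/10b encoding), and the function name is purely illustrative.

```python
# Rough per-direction bandwidth of a first-generation PCI Express link.
# Assumption: 2.5 GT/s per lane with 8b/10b encoding (PCIe 1.x figures,
# not something measured in this article).

GIGATRANSFERS_PER_SEC = 2.5e9   # raw signalling rate per lane
ENCODING_EFFICIENCY = 8 / 10    # 8b/10b: 8 data bits per 10 bits on the wire


def link_bandwidth_mb_s(lanes: int) -> float:
    """Return usable bandwidth in MB/s, per direction, for a given link width."""
    bits_per_sec = GIGATRANSFERS_PER_SEC * ENCODING_EFFICIENCY * lanes
    return bits_per_sec / 8 / 1e6  # bits -> bytes -> megabytes


for width in (16, 8, 4, 1):
    print(f"x{width:<2} link: {link_bandwidth_mb_s(width):6.0f} MB/s per direction")
```

Under these assumptions each lane carries roughly 250 MB/s in each direction, so halving the link width halves the peak bandwidth; whether a game ever approaches that peak is a separate question.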

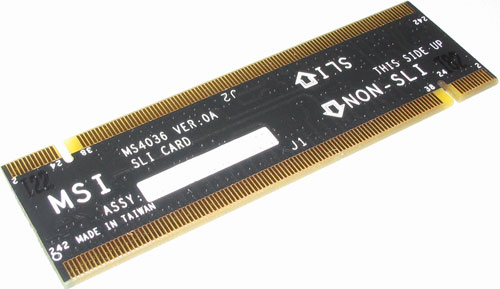

The SLI card slot looks much like a SO-DIMM connector:

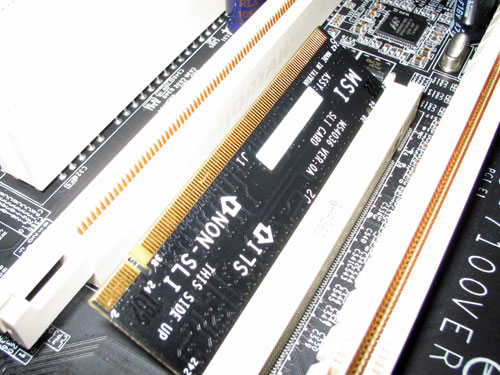

The card itself can be inserted in two ways: in one direction, it configures the PCI Express lanes so that only one of the slots is a x16. In the other direction, the 16 PCI Express lanes are split evenly between the two x16 slots. You can run a single graphics card in either mode, but to run a pair of cards in SLI mode you need the latter configuration. There are ways around NVIDIA's card-based design to reconfigure the PCI Express lanes, but none of them to date are as elegant, since they require a long row of jumpers.

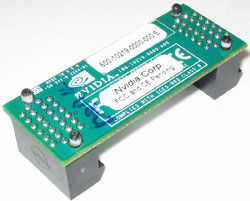

With two cards installed, a bridge PCB is used to connect the golden fingers atop both cards. Only GeForce 6600GT and higher cards will feature the SLI-enabling golden fingers, although we suspect that nothing has been done to disable SLI on the lower-end GPUs other than a non-accommodating PCB layout. With a little engineering effort, we believe the video card manufacturers could come up with a board design to enable SLI on both 6200 and 6600 non-GT cards. We've talked to manufacturers about doing this, but we will have to wait and see what comes of their experiments.

As far as board requirements go, the main thing is to make sure that both of your GPUs are identical. Clock speeds don't have to be the same; NVIDIA's driver will set the clocks on both boards to the lowest common denominator. Combining different GPU types (e.g. a 6600GT and a 6800GT) is not recommended; doing so may still be allowed, but it can produce some rather strange results in certain cases.
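As a minimal sketch of what "lowest common denominator" could mean in practice, the snippet below takes the lower value in each clock domain. We are assuming that reading of the driver's behavior; the `Clocks` class, its fields, and the example frequencies are hypothetical and not taken from NVIDIA's driver or any specific product.

```python
# Sketch: picking common clocks for an SLI pair.
# Assumption: "lowest common denominator" means the lower value of each
# clock domain; class and field names are hypothetical.
from dataclasses import dataclass


@dataclass(frozen=True)
class Clocks:
    core_mhz: int
    memory_mhz: int


def common_clocks(a: Clocks, b: Clocks) -> Clocks:
    """Return clocks both boards can run at: the minimum in each domain."""
    return Clocks(
        core_mhz=min(a.core_mhz, b.core_mhz),
        memory_mhz=min(a.memory_mhz, b.memory_mhz),
    )


# Example values are invented for illustration (a stock board paired
# with a factory-overclocked one).
stock = Clocks(core_mhz=350, memory_mhz=1000)
overclocked = Clocks(core_mhz=370, memory_mhz=1050)
print(common_clocks(stock, overclocked))
```

The practical upshot is that pairing a factory-overclocked board with a stock one would give up the faster board's extra clock speed.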

You only need to connect a monitor to the first PCI Express card; despite the fact that you have two graphics cards, only the video outputs on the first card will work, so anyone wanting both a quad-display setup and SLI is somewhat out of luck. I say somewhat because if you toggle off SLI mode (a driver option), the two cards work independently and you can have a 4-head display configuration. But with SLI mode enabled, the outputs on the second card go blank. While that's not too inconvenient, currently you need to reboot between SLI mode changes in software, which could get annoying for those who only want to enable SLI in games and use four display outputs when not gaming.

We used a beta version of NVIDIA's 66.75 drivers with SLI support enabled for our benchmarks. The 66.75 driver includes a configuration panel for Multi-GPU as you can see below:

Clicking the check box requires a restart to enable (or disable) SLI, but after you've rebooted everything is good to go.

We mentioned before that the driver is very important to SLI performance. The reason is that NVIDIA has implemented several SLI algorithms in its driver to determine how to split the rendering between the graphics cards, depending on the application and load. For example, in some games it may make sense for one card to handle a certain percentage of the screen and the other card to handle the remainder, while in others it may make sense for each card to render a separate frame. The driver will switch between these algorithms, or even disable SLI altogether, depending on the game. The other important thing to remember is that the driver is also responsible for the rendering split between the GPUs: each GPU rendering 50% of the scene doesn't always amount to an evenly split workload, so the driver has to estimate what rendering ratio would put an equal load on both GPUs.
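To illustrate the load-balancing idea, here is a minimal sketch of how a split ratio could be adjusted from frame to frame. NVIDIA has not published its heuristic, so everything here is an assumption: we suppose the driver can time each GPU's share of the previous frame and nudge the split toward the point where both would finish together. The function name, the `gain` parameter, and the clamping limits are all hypothetical.

```python
# Sketch of split-frame load balancing between two GPUs.
# Assumption: the driver times each GPU's share of the previous frame and
# moves the split toward equal times. This proportional update is only
# illustrative; it is not NVIDIA's actual algorithm.


def rebalance(split: float, time_top: float, time_bottom: float,
              gain: float = 0.5) -> float:
    """Return a new fraction of the screen for the GPU rendering the top.

    split       -- current fraction of the screen given to the top GPU (0..1)
    time_top    -- time the top GPU took on the last frame
    time_bottom -- time the bottom GPU took on the last frame
    gain        -- how aggressively to correct (0 = never, 1 = full step)
    """
    # Estimated cost per unit of screen area in each region.
    cost_top = time_top / split
    cost_bottom = time_bottom / (1.0 - split)
    if cost_top + cost_bottom == 0:
        return split  # nothing measured, keep the current split
    # Split at which both GPUs would finish together if costs stayed fixed.
    ideal = cost_bottom / (cost_top + cost_bottom)
    new_split = split + gain * (ideal - split)
    # Keep both GPUs with at least some of the screen.
    return min(0.9, max(0.1, new_split))


# Simulated scene where the bottom of the screen is three times as
# expensive per unit area as the top (made-up costs).
split = 0.5
for frame in range(5):
    t_top, t_bottom = split * 8.0, (1.0 - split) * 24.0
    split = rebalance(split, t_top, t_bottom)
    print(f"frame {frame}: top GPU renders {split:.3f} of the screen")
```

In this toy scene the split drifts toward the top GPU taking three quarters of the screen, which is the kind of uneven division an equal-load goal implies when one region is more expensive to render.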

84 Comments


PrinceGaz - Saturday, October 30, 2004

The geometry processing *should* be shared between the cards to some extent, as each card only renders a certain area of the screen when it is divided between them, so polygons that fall totally outside its area can be skipped. At least that's what I imagine happens, but I don't know for sure. Obviously when each card is rendering alternate frames, the geometry calculation is effectively totally shared between the cards, as they each have twice as long to work on it to maintain the same framerate.

As for the 105% improvement of the 6800GT SLI over a single 6800GT in Far Cry 1600x1200, all I can say is No! It's against the laws of physics! That or the drivers are doing something fishy.

stephenbrooks - Saturday, October 30, 2004

--[I do not need a 21 years young webmaster’s article as a reference for making these claims,]--

So what's wrong with 21 y/o webmasters then, HUH? I could be very offended by that. :)

I'm surprised no-one's picked up this before but I just love the 105% improvement of the 6800GT on Far Cry highest res. I'm surprised because normally when a review has something like that in it, a load of people turn up and say "No! It's against the laws of physics!" Well, it isn't _technically_, but it makes you wonder what on Earth has gone on in that setup to make two cards more efficient per transistor than one.

[Incidentally, does anyone here know _for sure_ whether or not the geometry calculation is shared between these cards via SLI?]

PrinceGaz - Saturday, October 30, 2004

#61 Sokaku - your understanding of the new form of SLI (Scalable Link Interface) is incorrect. You are referring to Scan-Line Interleave, which was used with two Voodoo 2 cards.

Using Scalable Link Interface, one card renders the upper part of the screen, the other card renders the lower part. Note that I say "part" of the screen, instead of "half" -- the amount of the screen rendered by each card varies depending on the complexity so that each card has a roughly equal load. So if most of the action is occurring low down, the first card may render the upper two-thirds, while the second card only does the lower third.

The current form of SLI can also be used in an alternative mode where each card renders every other frame, so card A does frames 1,3,5 etc, while card B does frames 2,4,6 etc.
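To make the difference concrete, here is a small sketch of which card draws what under the old Scan-Line Interleave scheme and under the alternate-frame mode. It is only an illustration of the assignment pattern: the function names are made up, and cards are numbered 0 and 1 while scan lines and frames are numbered from 1.

```python
# Which card draws what, under two of the schemes described above.
# Cards are numbered 0 and 1; scan lines and frames are numbered from 1.
# Function names are made up for illustration.


def scan_line_interleave(line: int) -> int:
    """Voodoo 2 style: odd scan lines on card 0, even scan lines on card 1."""
    return (line - 1) % 2


def alternate_frame(frame: int) -> int:
    """Alternate-frame mode: card 0 gets frames 1, 3, 5; card 1 gets 2, 4, 6."""
    return (frame - 1) % 2


print([scan_line_interleave(n) for n in range(1, 7)])  # [0, 1, 0, 1, 0, 1]
print([alternate_frame(f) for f in range(1, 7)])       # [0, 1, 0, 1, 0, 1]
```

The pattern is the same in both cases; what differs is the unit being handed out, a single scan line within one frame versus an entire frame.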

However regardless of which method is used, SLI is only really viable when used in conjunction with top-end cards such as the 6800Ultra or 6800GT. It doesn't make sense to buy a second 6600GT later when better cards will be available more cheaply, or to buy two 6600GTs together now when a single 6800GT would be a better choice. Therefore the $800+ required for two SLI graphics-cards will mean only a tiny minority ever use it (though some fools will go and buy a second 6600GT a year later no doubt).

Sokaku - Saturday, October 30, 2004

#49 "Do you have some reference that actually states this? Seems to me like it's just a blatant guess with not a lot of thought behind it.":

I often wonder how come people are this rude on the net, probably because they don’t sit face to face with the ones they talk to. I do not need a 21 years young webmaster’s article as a reference for making these claims, I think therefore I claim. And if you want a conversation beyond this point, sober up your language and tone.

In an SLI configuration, card 1 renders the 1st scan line, card 2 the 2nd scan line, card 1 the third, card 2 the fourth and so on.

It is done this way because it’s easier to keep the cards synchronized. If you had card 1 render the left half and card 2 render the right, then card 1 may lag seriously if the left part of the scene is vastly more complex than the right part.

So, in SLI both cards need to do the complete geometry processing for the entire frame. When the cards then render the pixels, they only have to do half each.

Thus, a card needs a geometry engine that is twice as fast (at a given target resolution) as the pixel rendering capacity on one card, because the geometry engine must be prepared for the SLI situation.

If the geometry engine were exactly (yeah I know, it all depends on the game and all) matched to the pixel rendering process, you wouldn't gain anything from a SLI configuration, because the geometry engine would be the bottleneck.
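The bottleneck argument above can be written as a toy model. It assumes the premise of this comment (every card repeats the full geometry work and only the pixel work is divided) plus one further simplification: the geometry and pixel stages are pipelined, so the slower stage sets the frame time. The function names and the unitless timings are illustrative only.

```python
# Toy model: each card repeats the full geometry work but only shades its
# share of the pixels. Assumption: the two stages are pipelined, so the
# slower stage sets the frame time. Timings are arbitrary units.


def frame_time(geometry: float, pixels: float, cards: int = 1) -> float:
    """Frame time when geometry is duplicated and pixel work is divided."""
    return max(geometry, pixels / cards)


def speedup(geometry: float, pixels: float, cards: int = 2) -> float:
    """Speedup of a multi-card setup over a single card in this model."""
    return frame_time(geometry, pixels, 1) / frame_time(geometry, pixels, cards)


print(speedup(geometry=10.0, pixels=10.0))  # matched stages: 1.0
print(speedup(geometry=5.0, pixels=10.0))   # geometry twice as fast: 2.0
```

In this model a second card only helps to the extent that the geometry stage has headroom over the pixel stage, which is the point being made.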

This didn’t matter at all back in the Voodoo2 days, because all the geometry calculations were done by the CPU; the cards only did the rendering and therefore nothing was "wasted". Now the CPU offloads the calculations to the GPU, hence the need to have twice the triangle calculation capacity on the GPU.

AtaStrumf - Saturday, October 30, 2004

First of all congrats to Anand for this exclusive preview!

Now to those that think the next GPU will outperform a 6800GT SLI, you must have been living under a rock for the last 2 years.

How much faster is a 9800XT than a 9700 Pro? Not nearly as much as two 6800GTs are faster than a single 6800GT is the correct answer!

Now consider that 9700 Pro and 9800XT are 3 generations apart, and 6800GT/SLI are let's say one generation apart in terms of time to market.

How can you complain about that?!?! And don't forget that 6800GT is not a $500 high end card!

If you get one 6800 GT now and one in say 6 months, you're still way ahead performance-wise compared to buying the next top of the line $500 GPU, and you only spend about $200 more, plus you get to do it in two payments.

This is definitely a good thing as long as there are no driver/chipset problems.

Last but not least. Just as we have seen with CPUs, GPUs will also hit the wall probably with the next generation, and SLI is to GPUs what dual core is to CPUs only a hell of a lot better.

My only gripe is that SLI chipset availability will probably be a big problem for quite some time to come and I would not buy the first revision, so add additional 4 months for this to be a viable solution.

Me being stuck in S754 and AGP may seem like a problem, but I intend to buy a 6800GT AGP sometime next year and wait all this SLI/90nm/dual core out. I'll let others be free beta testers :-)

thomas35 - Saturday, October 30, 2004

Pretty cool review once again.

Though most people seem to miss one big glaring thing. SLI, while a nice, pretty toy for games, is going to have a huge impact on the 3D animation industry. Modern video cards have hugely powerful processors on them, but because AGP isn't duplex and can't work with more than one GPU at a time, video card based hardware rendering isn't used much. But now with PCI-e and SLI, I can take 2 powerful professional cards (FireGLs and Quadros) and have them start helping to render animations. This means, rather than add extra computers to cut down on render times, I just simply add in a couple of video cards. And that in turn means I have more time to develop a high quality animation than I would have had in the past.

So in the middle of next year, I'll be buying a dual (dual core) cpu system and dual video card system to render. And then will have the same power as I get from the 6 computers I own now, in your standard ATX case.

Nick4753 - Saturday, October 30, 2004

Thanks Anand!!!!

Reflex - Saturday, October 30, 2004

Ghandi - Actually the nVidia SLI solution should outperform X2 in most scenarios. The reason for that is that their driver will intelligently load balance the rendering job across both graphics chips rather than simply split a scene in half and give each chip a half. Much of the time there is more action in one part of a scene than the other, so the second card would be completely wasted at those times. On average, the SLI solution should outperform X2.

X2 has other drawbacks as well. Few techies really want to buy a complete system, preferring to build their own. So something like X2, which can only be acquired with a very overpriced PC that I can build on my own for a lot less money (and use nVidia's SLI if I really need that kinda power), is not a very attractive solution for a power user. You also point out a part about drivers as an advantage that is truly a drawback. What kind of experience does Alienware have with drivers? How long do they intend to support that hardware? I can get standard Ati or nVidia drivers dating back to 1996; will Alienware guarantee me that in 8 years that rig will still be able to run at the least an OS with full driver support? What kind of issues will they have, seeing as they have never had to do that before? Writing drivers is NOT a simple process.

I have nothing against the X2, but I do know it was first mentioned months ago and I have yet to see any evidence of it since. It would not surprise me if they ditched it and just went with nVidia's solution. At this point they are more or less just doing design for Ati, as anyone who wants to do SLI with nVidia cards can now do it without paying a premium for Alienware's solution.

Your comment about nVidia vs. Ati is kinda odd. It really depends on what games you play as to which you prefer. Myself, I am perfectly happy with my Radeon 9600SE; yes, it's crappy, but it plays Freedom Force and Civilization 3 just fine. ;)

GhandiInstinct - Saturday, October 30, 2004

I don't consider my statement any form of criticism; it is merely a realization that the high-end user might want to wait for X2 because the current consensus reveals that Nvidia cards aren't a big hype compared to ATi. Ask that high-end user: would he rather waste that $200 on dual Nvidias or dual ATis?

SleepNoMore - Saturday, October 30, 2004

I think you could build this and RENT it to friends and gawkers at 20 bucks an hour. Who knows, maybe some gaming arcade places will do this?

Cause that's what it is at this point: a really cool, ooh-ahh, novelty. I'd rather rent it as a glimpse at the future than go broke trying to buy it. The future will come soon enough.

The final touch - for humor - you just need to build it into a special case with a Vornado fan ;) to make it totally unapologetically brute force funky.