NVIDIA Details DRIVE AGX Orin: A Herculean Arm Automotive SoC For 2022

by Ryan Smith on December 18, 2019 8:30 AM EST

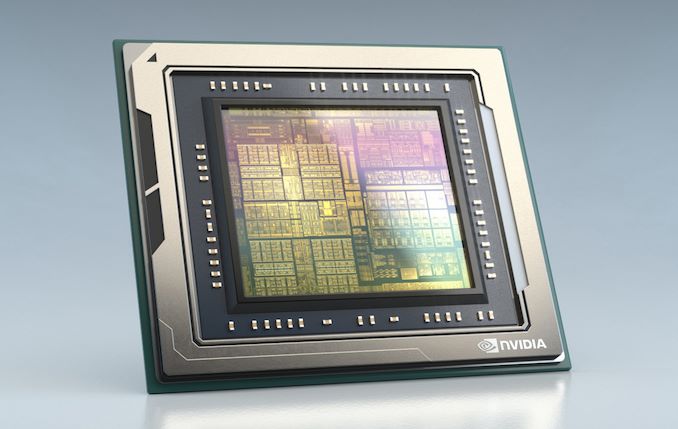

While NVIDIA’s SoC efforts haven’t gone entirely to plan since the company first started on them over a decade ago, NVIDIA has been able to find a niche that works in the automotive field. Backing the company’s powerful DRIVE hardware, these SoCs have become increasingly specialized as the DRIVE platform itself evolves to meet the needs of the slowly maturing market for the brains behind self-driving cars. And now, NVIDIA’s family of automotive SoCs is growing once again, with the formal unveiling of the Orin SoC.

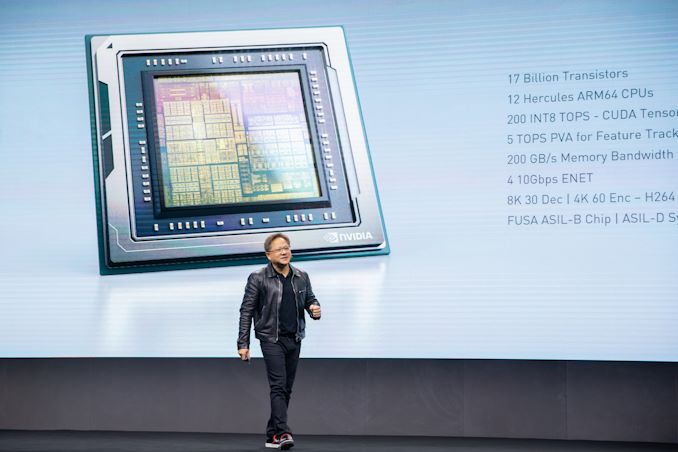

First outlined as part of NVIDIA’s DRIVE roadmap at GTC 2018, NVIDIA CEO Jensen Huang took the stage at GTC China this morning to properly introduce the chip that will be powering the next generation of the DRIVE platform. Officially dubbed the NVIDIA DRIVE AGX Orin, the new chip will eventually succeed NVIDIA’s currently shipping Xavier SoC, which has been available for about the last year now. In fact, as has been the case with previous NVIDIA DRIVE unveils, NVIDIA is announcing the chip well in advance: the company isn't expecting the chip to be fully ready for automakers until 2022.

What lies beneath Orin then is a lot of hardware, with NVIDIA going into some high-level details on certain parts, but skimming over others. Overall, Orin is a 17 billion transistor chip, almost double the transistor count of Xavier and continuing the trend of very large, very powerful automotive SoCs. NVIDIA is not disclosing the manufacturing process being used at this time, but given their timeframe, some sort of 7nm or 5nm process (or derivative) is pretty much a given. And NVIDIA will definitely need a smaller manufacturing process – to put things in comparison, the company’s top-end Turing GPU, TU102, takes up 754mm2 for 18.6B transistors, so Orin will pack in almost as many transistors as one of NVIDIA’s best GPUs today.

| NVIDIA ARM SoC Specification Comparison | |||||

| Orin | Xavier | Parker | |||

| CPU Cores | 12x Arm "Hercules" | 8x NVIDIA Custom ARM "Carmel" | 2x NVIDIA Denver + 4x Arm Cortex-A57 |

||

| GPU Cores | "Next-Generation" NVIDIA iGPU | Xavier Volta iGPU (512 CUDA Cores) |

Parker Pascal iGPU (256 CUDA Cores) |

||

| INT8 DL TOPS | 200 TOPS | 30 TOPS | N/A | ||

| FP32 TFLOPS | ? | 1.3 TFLOPs | 0.7 TFLOPs | ||

| Manufacturing Process | 7nm? | TSMC 12nm FFN | TSMC 16nm FinFET | ||

| TDP | ~65-70W? | 30W | 15W | ||

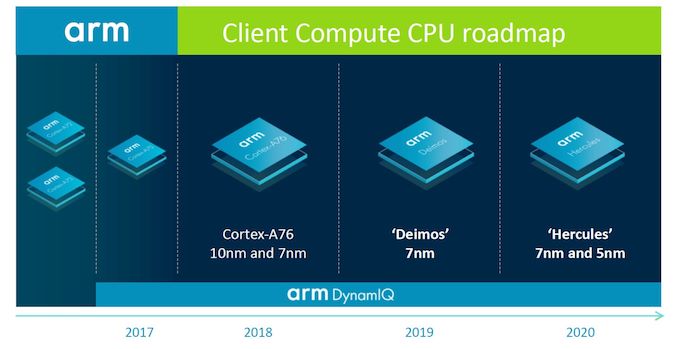

Those transistors, in turn, will be driving several elements. Surprisingly, for today’s announcement NVIDIA has confirmed what CPU core they’ll be using. And even more surprisingly, it isn’t theirs. After flirting with both Arm and NVIDIA-designed CPU cores for several years now, NVIDIA has seemingly settled down with Arm. Orin will include a dozen of Arm’s upcoming Hercules CPU cores, which are from Arm’s client device line of CPU cores. Hercules, in turn, succeeds today’s Cortex-A77 CPU cores, with customers recently receiving the first IP for the core. For the moment we have very little information on Hercules itself, but Arm has previously disclosed that it will be a further refinement of the A76/A77 cores.

I won’t spend too much time dwelling on NVIDIA’s decision to go with Arm’s Cortex-A cores after using their own CPU cores for their last couple of SoCs, but it’s consistent with the direction we’ve seen most of Arm’s other high-end customers take. Developing a fast, high-performance CPU core only gets harder and harder every generation. And with Arm taking a serious stab at the subject, there’s a lot of sense in backing Arm’s efforts by licensing their cores as opposed to investing even more money in further improving NVIDIA’s Project Denver-based designs. It does remove one area where NVIDIA could make a unique offering, but on the flip side it does mean they can focus more on their GPU and accelerator efforts.

Speaking of GPUs, Jensen revealed very little about the GPU technology that Orin will integrate. Besides confirming that it’s a “next generation” architecture that offers all of the CUDA core and tensor functionality that NVIDIA has become known for, nothing else was stated. This isn’t wholly surprising since NVIDIA hasn’t disclosed anything about their forthcoming GPU architectures – we haven’t seen a roadmap there in a while – but it means the GPU side is a bit of a blank slate. Given the large gap between now and Orin’s launch, it’s not even clear if the architecture will be NVIDIA’s next immediate GPU architecture or the one after that, however given how Xavier’s development went and the extensive validation required for automotive, NVIDIA’s 2020(ish) GPU architecture seems like a safe bet.

Meanwhile NVIDIA’s Deep Learning Accelerator (DLA) blocks will also be making a return. These blocks don’t get too much attention since they’re unique to NVIDIA’s DRIVE SoCs, but these are hardware blocks to further offload neural network inference, above and beyond what NVIDIA’s tensor cores already do. On the programmable/fixed-function scale they’re closer to the latter, with the task-specific hardware being a good fit for the power and energy-efficiency needs NVIDIA is shooting for.

All told, NVIDIA expects Orin to deliver 7x the 30 INT8 TOPS performance of Xavier, with the combination of the GPU and DLA pushing 200 TOPS. It goes without saying that NVIDIA is still heavily invested in neural networks as the solution to self-driving systems, so they are similarly heavily investing in hardware to execute those neural nets.

Rounding out the Orin package, NVIDIA’s announcement also confirms that the chip will offer plenty of hardware for supporting features. The chip will offer 4x 10 Gigabit Ethernet hosts for sensors and in-vehicle communication, and while the company hasn’t disclosed how many camera inputs the SoC can field, it will offer 4Kp60 video stream encoding and 8Kp30 decoding for H.264/HEVC/VP9. The company has also set a goal for 200GB/sec of memory bandwidth. Given the timeframe for Orin and what NVIDIA does for Xavier today, an 256-bit memory bus with LPDDR5 support sounds like a shoe-in, but of course this remains to be confirmed.

Finally, while NVIDIA hasn’t disclosed any official figures for power consumption, it’s clear that overall power usage is going up relative to Xavier. While Orin is expected to be 7x faster than Xavier, NVIDIA is only claiming it’s 3x as power efficient. Assuming NVIDIA is basing all of this on INT8 TOPS as they usually do, then the 1 TOPS/Watt Xavier would be replaced by the 3 TOPS/Watt Orin, putting the 200 TOPS chip at around 65-70 Watts. Which is admittedly still fairly low for a single chip at a company that sells 400 Watt GPUs, but it could add up if NVIDIA builds another multi-processor board like the DRIVE Pegasus.

Overall, NVIDIA certainly has some lofty expectations for Orin. Like Xavier before it, NVIDIA intends for various forms of Orin to power everything from level 2 autonomous cars right up to full self-driving level 5 systems. And, of course, it will do so while being able to provide the necessary ASIL-D level system integrity that will be expected for self-driving cars.

But as always, NVIDIA is far from the only silicon vendor with such lofty goals. The company will be competing with a number of other companies all providing their own silicon for self-driving cars – ranging from start-ups to the likes of Intel – and while Orin will be a big step forward in single-chip performance for the company, it’s still very much the early days for the market as a whole. So NVIDIA has their work cut out for them across hardware, software, and customer relations.

Source: NVIDIA

33 Comments

View All Comments

webdoctors - Wednesday, December 18, 2019 - link

???I just got a Shield that has a Tegra chip, same with the Nintendo Switch and the Jetson boards. Obviously you won't see the automotive chips in consumer tablets, and I think consumer tablets don't have any demand for faster and faster SoCs. Look at how old the SoCs in the Amazon tablets are.

Alistair - Wednesday, December 18, 2019 - link

That chip is based on 5 year old Cortex A57 cores (from the Galaxy Note 4...). That's not a new chip by any stretch of the imagination.blazeoptimus - Wednesday, December 18, 2019 - link

Actually, let's do look at Amazon tablets. The Fire HD10 uses an Octo-Core High/Low CPU released by mediatek at the end of last year. The Cortex-A73 Processors that it utilizes were released by Arm in 2016. Given that the tablet can be had for $100, and how long it takes from the release of design to it actually making it into devices, a 3yr gap is reasonable. Amazon's SoCs aren't old, there just cheap - which is to be expected of very low priced devices. As to the demand, I'm pretty sure Apple and Microsoft's very profitable tablet divisions would disagree with you.Stoly - Wednesday, December 18, 2019 - link

I think its better for nvidia to use ARM designs which are pretty good BTW.Even though nvidia has the resources and engineering team to do its own, they don't really need an ultra fast CPU. Their forte is in the gpu which is where they should put their efforts on.

Fataliity - Thursday, December 19, 2019 - link

You guys are comparing to the wrong chips, just like the other websites. Seriously, did Nvidia provide you with the list and you just copied it?Nvidia has a very similar chip to this ALREADY OUT. It's called the Tesla T4. It's a 2060 SUPER underclocked to 75 Watts. It gets 130 TOPS. About 2 TOPS/Watt. So Compared to Current Gen, its 30% more efficient when underclocked.

While a T102 is 295Watts and 206 TOPS. With 30% Efficiency added, you get 267.8 Tops. And at a 320W Envelope you'd have about 300 TOPS.

_____

And for AI, judging by claims from Tesla, they only hit about 3.4 outta 30 TOPS real world (10%)

Because TOPS is calculated synthetic, with easiest possible calculation (like 1+1 over and over). Real-world uses dont work like this.

Even in Resnet-50, only about 25% of the TOPS can be used, and that's still synthetic but compared to real-world it's best case. If you lower Batch size from 15-30 down to a 1 batch size, the performance is closer to what Tesla got, about 10%.

Tesla's design is getting 50% efficiency (37 of 74 TOPs).

So even Orin at 200 TOPS would only get about 20 in real world.

And that's for its intended purpose. Autonomous Driving.

Fataliity - Thursday, December 19, 2019 - link

Edit:: And if the CPU cores include the AI accelerators on it like SoC's for mobile phones and is included in TOPS calculation, then it's even further off. So 30% efficiency vs turing is best case. And that's with a node shrink.Fataliity - Thursday, December 19, 2019 - link

Edit:: 50% more efficient than Tesla T4. Once you go up to gaming clock speeds, 30% or less is more likely.But most of performance will be in RT cores.

Drive Xavier (Pascal) was 30TOPS / 30 Watts.

Turing (Non RT cores - 30% improvement) - 40TOPs/30 watts. x2 = 80TOPs/60 watts

Turing with RT Cores Tesla T4 - 130TOPs, 70 watts.

So about 50 TOPs is the RT cores.

Add another 60TOPs in RT cores (about 77, so 150 Total). and 12.5% improvement to Turing itself (12 TOPs) = 200 TOPs.

Or double RT cores to 128, (100 TOPs), and 20 TOPS from Arch enhancement, so 25% boost. = 200 TOPS.

BenSkywalker - Thursday, December 19, 2019 - link

When was Tesla using Xavier? I never saw any information on them using any of nVidia's tensor core products, can't find any now either.edzieba - Thursday, December 19, 2019 - link

"Nvidia has a very similar chip to this ALREADY OUT. It's called the Tesla T4."The Tesla T4 (like the rest of the Tesla lineup) is an add-in accelerator card. The Drive series chipsa re SoCs that include not only the GPU, but also multiple CPU cores, interfaces (for a larger number of cameras and network ports), and other ancillary fixed-function blocks. The two are not comparable products.

edzieba - Thursday, December 19, 2019 - link

And on top of that there's all the internal redundancy and fail-safe error handling that does into ASIL certification. In practice, the T4 is not a comparable product on that issue alone: you cannot just drop a few T4s into a system, shove it in the boot of a car, and go "here's our self-driving system!".