The AMD Radeon RX 5500 XT Review, Feat. Sapphire Pulse: Navi For 1080p

by Ryan Smith on December 12, 2019 9:00 AM EST

Meet the Sapphire Pulse Radeon RX 5500 XT

As I mentioned earlier in the article, while AMD does have a reference card, they aren’t going to be releasing it to retail. So instead, today’s launch is all about partner cards. To that end, AMD has sampled us with both the 8GB and 4GB versions of Sapphire’s Pulse RX 5500 XT. Sapphire’s card is a pretty good example of what to expect for basic (at-MSRP) RX 5500 XT cards, offering a solid build quality, but otherwise being pretty close to reference specifications.

| Radeon RX 5500 XT Card Comparison | Radeon RX 5500 XT (Reference Specification) | Sapphire Pulse RX 5500 XT (Default/Perf Mode) |
|---|---|---|
| Base Clock | 1607MHz | 1607MHz |
| Game Clock | 1717MHz | 1737MHz |
| Boost Clock | 1845MHz | 1845MHz |
| Memory Clock | 14Gbps GDDR6 | 14Gbps GDDR6 |
| VRAM | 8GB | 8GB |
| GPU Power Limit | 120W | 135W |
| Length | N/A | 9.15-inches |
| Width | N/A | 2-Slot |
| Cooler Type | N/A | Open Air, Dual Fan |
| Price | $199 | $209 |

Sapphire has several sub-brands of cards, including the Nitro and the Pulse. Whereas the former are their higher-priced factory overclocked cards, the Pulse cards are more straightforward. For their RX 5500 XT cards, Sapphire does ship them with a higher than reference GPU power limit – leading to a 20MHz higher game clock rating – but the actual GPU base and boost clockspeeds remain unchanged. This goes for both the 4GB and 8GB cards, which aside from their memory capacities are identical in both build and specifications.

At a high level, the Sapphire Pulse RX 5500 XT is a pretty typical design for a 150 Watt(ish) card. Sapphire has gone with a dual slot, dual fan open air cooled design, which is more than enough to keep up with Navi 14.

In fact, once we break out the rulers and really dig into the Sapphire Pulse, it becomes increasingly clear that Sapphire may have very well overbuilt the card. The card’s cooling system and shroud are longer than the PCB itself – it’s the board that’s bolted to the cooler, and not the other way around. Similarly, Sapphire has gone for a taller than normal design as well, allowing them to fit a larger heatsink and fans than we’d otherwise see with cards that are built exactly to PCIe spec. The resulting card is quite sizable, measuring about 9.15-inches long, and 4.25-inches from the bottom of the PCB to the top of the shroud.

The payoff for this oversized design is that Sapphire can use larger, lower RPM fans to minimize the fan noise. The Pulse 5500 XT packs two 95mm fans, which in our testing never got past 1100 RPM. And even then, the card supports zero fan speed idle, so the fans aren’t even on until the card is running a real workload and starts warming up. The net result is that the already quiet card is completely silent when it’s idling.

Meanwhile beneath the fans is a similarly oversized heatsink, which runs basically the entire length and height of the board. A trio of heatpipes runs from the core to various points on the heatsink, helping to draw heat away from the GPU and thermal pad-attached GDDR6 memory. The fins are arranged horizontally, so the card tends to push air out via its I/O bracket as well as towards the front of a system. The card also comes with a metal backplate – no doubt needed to hold the oversized cooler together – with some venting that allows air to flow through the heatsink and out the back of the card.

Overall, Sapphire offers two BIOSes for the card, which are selectable via a switch on the top. The default BIOS is Sapphire’s performance BIOS, which has the GPU power limit increased to 135 Watts. As we’ll see in our benchmarks, the net performance impact of this is not very substantial, but it does allow the card to average slightly higher clockspeeds. Meanwhile the second BIOS is rigged for quiet operation, bringing the card back down to a GPU power limit of 120W.

Speaking of power, the card relies on an 8-pin external PCIe power cable as well as PCIe slot power for its electricity needs. From a practical perspective this seems overprovisioned for a card that shouldn’t pass 150W for the entire board – so a 6-pin connector should work just fine here – but with Sapphire’s higher power limit bringing the card right up to that 150W threshold, I’m not surprised to see them playing it safe.
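To put rough numbers on the connector question, here is a minimal sketch of the nominal power budget. It assumes the commonly cited PCIe limits of 75W from the slot, 75W from a 6-pin connector, and 150W from an 8-pin connector; the function and constant names are illustrative only.

```python
# Nominal power limits in watts (assumed from the commonly cited PCIe figures).
SLOT_W = 75
AUX_CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def board_power_budget(connectors):
    """Total nominal power available: slot power plus each auxiliary connector."""
    return SLOT_W + sum(AUX_CONNECTOR_W[c] for c in connectors)


six_pin_budget = board_power_budget(["6-pin"])
eight_pin_budget = board_power_budget(["8-pin"])

# A 6-pin configuration lands exactly on the ~150W board threshold discussed
# above, while the 8-pin configuration leaves headroom beyond it.
print(f"6-pin + slot: {six_pin_budget}W")
print(f"8-pin + slot: {eight_pin_budget}W")
```

Under these assumptions a 6-pin card has no margin at all at 150W, which is consistent with Sapphire opting for the 8-pin connector.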

While we're on the subject of PCIe, it’s worth mentioning quickly that while the Sapphire Pulse is physically a PCIe x16 card, electrically it’s only a x8 card. In a traditional cost-optimization move, AMD has given the underlying Navi 14 GPU only 8 PCIe lanes, so while the card uses a full length x16 connector, it only actually has 8 lanes to work with. This won’t present a performance problem for the card on PCIe 3.0 systems, and better still, as Navi offers PCIe 4.0 connectivity, those 8 lanes are twice as fast when paired with a PCIe 4.0 host – where coincidentally enough, AMD’s Ryzen processors are the only game in town right now.
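As a back-of-the-envelope check on the lane math, the sketch below estimates one-direction link bandwidth. It assumes the standard per-lane signaling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) with 128b/130b encoding, and it ignores packet and protocol overhead, so real-world throughput will be lower. The helper name is hypothetical.

```python
# Per-lane raw transfer rate in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
GT_PER_S = {"3.0": 8.0, "4.0": 16.0}
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(gen, lanes):
    """Approximate one-direction bandwidth in GB/s, ignoring protocol overhead."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY * lanes / 8  # 8 bits per byte


for gen, lanes in [("3.0", 8), ("3.0", 16), ("4.0", 8)]:
    print(f"PCIe {gen} x{lanes}: {link_bandwidth_gb_s(gen, lanes):.2f} GB/s")
```

By this estimate, a PCIe 4.0 x8 link matches a PCIe 3.0 x16 link, while a PCIe 3.0 x8 link offers half of that.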

Finally for hardware features, for display I/O we’re looking at a pretty typical quad port setup. Sapphire has equipped the card with 3x DisplayPort 1.4 outputs, as well as an HDMI 2.0b output. With daisy chaining or MST splitters, it’s possible to drive up to 5 monitors from the card.

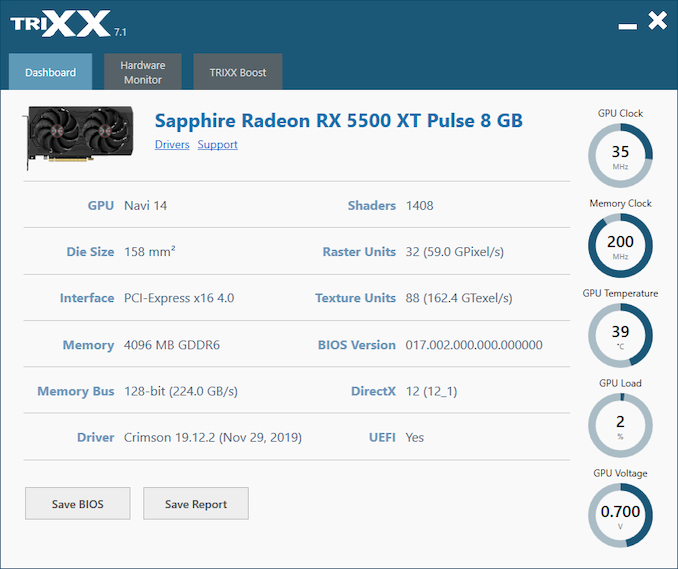

As for software, Sapphire ships their cards with their TriXX monitoring and overclocking software. It’s been some number of years since we’ve last taken a look at TriXX, and while the basic functionality has remained largely unchanged, the most recent iteration of the software comes with a very streamlined and functional UI. Overall as far as overclocking goes, TriXX ultimately just hooks into AMD’s drivers, so while it’s capable, it’s no more capable than AMD’s constantly improving Radeon Software.

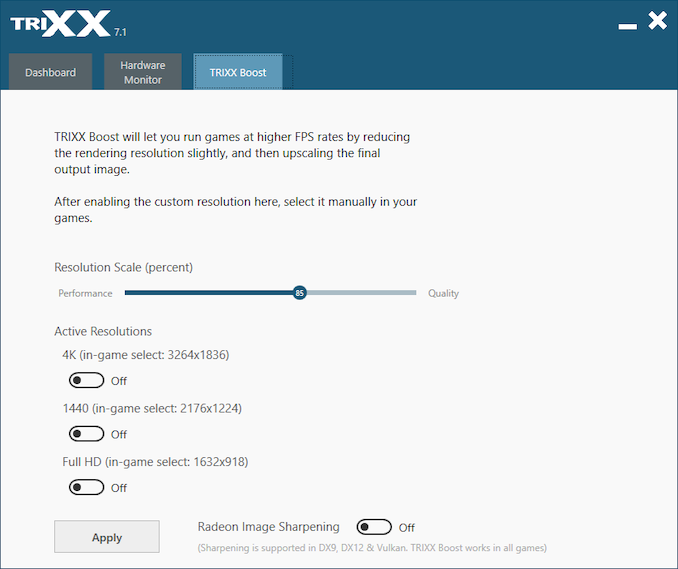

The one area where Sapphire is still able to stand apart from AMD, however, is with their TriXX Boost feature. Not to be confused with the somewhat similar Radeon Boost feature in AMD’s drivers, TriXX Boost is Sapphire’s take on resolution scaling. The software combines custom resolution creation with GPU resolution scaling controls, essentially allowing a user to create a lower resolution and engage GPU resolution scaling all in a single go. The basic idea is to make it super easy to create a slightly lower resolution (e.g. 1632x918) for better performance, and then have the GPU scale it back up to the full resolution of the monitor. The impact on image quality is as expected, and while it’s nothing that can’t technically already be done with AMD’s software, Sapphire has put a nice UI in front of it.
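To illustrate the arithmetic behind a resolution like 1632x918, here is a small sketch. It assumes a 1920x1080 monitor and an 85% per-axis scale factor, which is inferred from the example resolution rather than taken from Sapphire's documentation; how TriXX itself rounds dimensions is not known, and the function name is hypothetical.

```python
def scaled_resolution(width, height, scale):
    """Scale both axes by `scale` and round to whole pixels."""
    return round(width * scale), round(height * scale)


native = (1920, 1080)  # assumed monitor resolution
w, h = scaled_resolution(*native, 0.85)

# Fraction of the native pixel count that the GPU actually has to render.
pixel_fraction = (w * h) / (native[0] * native[1])

print(f"Render resolution: {w}x{h}")
print(f"Pixels rendered relative to native: {pixel_fraction:.1%}")
```

Because the scale applies to both axes, a modest 15% cut per axis removes a bit over a quarter of the pixels, which is where the performance gain comes from.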

97 Comments


Valantar - Thursday, December 12, 2019 - link

What? This class of GPU is in no way whatsoever capable of gaming at 4K. Why include a bunch of tests where the results are in the 5-20fps range? That isn't useful to anyone.

Zoomer - Saturday, December 21, 2019 - link

AT used to include. I just ignored it for a card of this class; probably others did as well.

Ravynmagi_ - Thursday, December 12, 2019 - link

I lean more Nvidia too and I didn't get that impression from the article. I felt it was fair to AMD and Nvidia in its comparison of the performance and facts. I wasn't bothered by where they decided to cut off their chart.

FreckledTrout - Friday, December 13, 2019 - link

Same here. I don't need to see numbers elucidating how bad these low end cards are at 4k. Let's move on.

Dragonstongue - Thursday, December 12, 2019 - link

I <3 how compute these days adamantly refuse to use the "old standard"i.e MINING

this shows Radeon in vastly different light, as the different forms of such absolutely show difference generation on generation, more so Radeon than Ngreedia err I mean Nvidia.

seeing as one can take the wee bit of time to have a -pre set that really needs very little change (per brand and per specific GPU being used)

instead of using "canned" style benchmarks, that often are very much *biased* towards those who hold more market share and/or have the heavier fist to make sure they are shown as "best" even when the full story simply is NOT being fully told...yep am looking direct at INTC/NVDA ... business is business, they certainly walk that BS line constantly, to very damaging consequence for EVERYONE

............

I personally think in this regard, AMD likely would have been "best off" to up the power budget a wee touch, so the "clear choice" between going with older stuff they probably and likely not want to be producing as much anymore (likely costlier) that is RX 4/5xx generation such as the 570-580 more importantly 590, this "little card" would be that much better off, instead, they seem to "adamant" want to target the same limiting factor of limited memory bus size (even though fast VRAM) still wanting to be @ the "claimed golden number" of "sub" $200 price point --- means USA or this price often moves from "acceptable" to, why bother when get older far more potent stuff for either not much more or as of late, about the same (rarely less, though it does happen)

1080p, I can see this, myself still using a Radeon 7870 on a 144Hz monitor "~3/4" jacked up settings (granted it is not running at full rate as the GPU does not support run this at full speed, but my Ryzen 3600 helps huge.

still, a wee bit more power budget or something would effectively "bury" or make moot 580 - 590, then wanting to sell for that "golden" $200 price point, would make much more sense, seeing as they launched the 480 - 580 "at same pricing" (for USA) in my mind, and all I have read, with the terrific yields TSMC has managed to get as well as the "reasonable low cost to produce due to very very few "errors" THIS should have targeted 175 200 max.

They are a business, no doubt, though they in all honesty should have looked at the "logical side" that is, "we know we cannot take down the 1660 super / Ti the way we would like to, while sticking with the shader count / memory bus, so why not say fudge it, add that extra 10w (effectively matching 7870 from many many generations back in the real world usage) so we at least give potential buyers a real hard time to decide between an old GPU (570-580-590) or a brand spanking new one that is very cool running AND not at all same power use, I am sure it will sell like hotcakes, provided we do what we can to make sure buyers everywhere can get this "for the most part" at a guaranteed $200 or less price point, will that not tick our competition right off?"

..........

thesavvymage - Thursday, December 12, 2019 - link

What are you even trying to say here.....

Valantar - Thursday, December 12, 2019 - link

I was lost after the first sentence. If it can be called a sentence. I truly have no idea what this rant is about.

Fataliity - Thursday, December 12, 2019 - link

I think the game bundle is what they chose as their selling point. I'm sure they get a good deal with game pass being the supplier of CPU/GPU on xbox. So their bundle is most likely almost free for them. Which pushes the value up. Without bundle I imagine 5500 4gb being 130 and 8gb being 180.

TheinsanegamerN - Sunday, December 15, 2019 - link

That's a LOTTA words just to say "AMD just made another 580 for $20 less, please clap."

kpb321 - Thursday, December 12, 2019 - link

The ~$100ish 570's still look like a great deal as long as they are still available. For raw numbers they have basically the same memory bandwidth and compute as a 5500 but the newer card ends up being slightly faster and uses a bit less power. It is overall more efficient but IMO nowhere near enough to justify the price premium over the older cards. I'm not as sure that the 570/580 or 5500 will have enough compute power for the 4 vs 8gb of memory to really make a difference but my 570 happens to be an 8gb card anyway.