Analyzing Intel’s Discrete Xe-HPC Graphics Disclosure: Ponte Vecchio, Rambo Cache, and Gelato

by Dr. Ian Cutress on December 24, 2019 9:30 AM EST

oneAPI: Intel’s Solution to Software

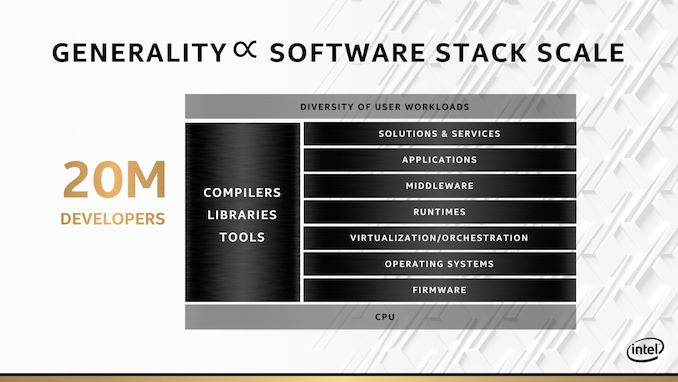

Having the hardware is all well and good, but the other angle (and perhaps the more important one) is software. Intel is keen to point out that before this new oneAPI initiative, it had more than 200 software angles and projects spread across the company. oneAPI is meant to bring all of those angles and projects under one roof, and provide a single entry point for developers whether they are programming for CPU, GPU, AI, or FPGA.

The slogan ‘no transistor left behind’ is going to be an important part of Intel’s ethos here. It’s a nice slogan, even if it does come across as if it is a bit of a gimmick. It should also be noted that this slogan is missing a key word: ‘no Intel transistor left behind’. oneAPI won’t help you as much with non-Intel hardware.

This sounds somewhat too good to be true. There is no way that a single entry point can be all things to all developers, and Intel knows this. The point of oneAPI is more about unifying the software stack such that high-level programmers can do what they do regardless of hardware, and low-level programmers who want to target specific hardware and do micro-optimizations can do that too.

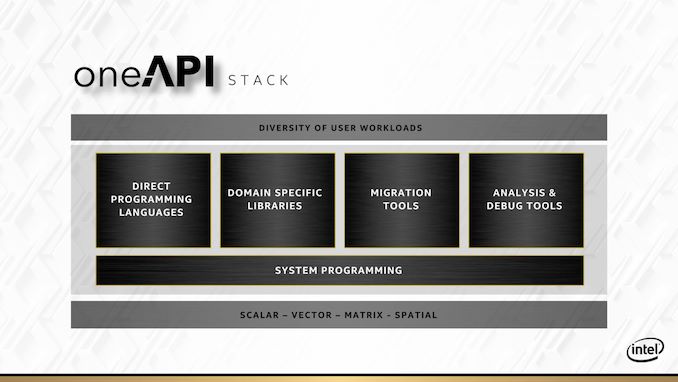

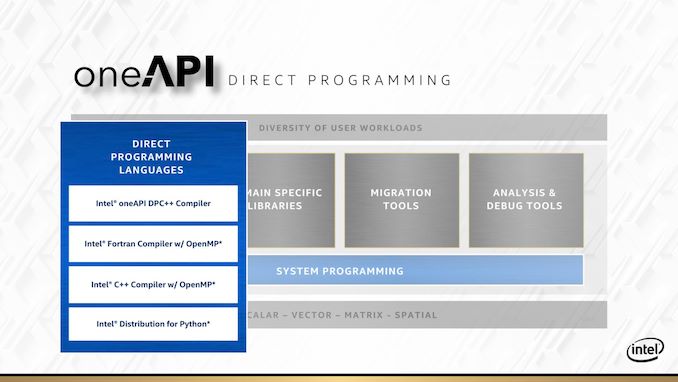

Everything for oneAPI is going to be driven through the oneAPI stack. At the bottom of the stack is the hardware, and at the top of the stack is the user workload. In between there are five areas which Intel is going to address.

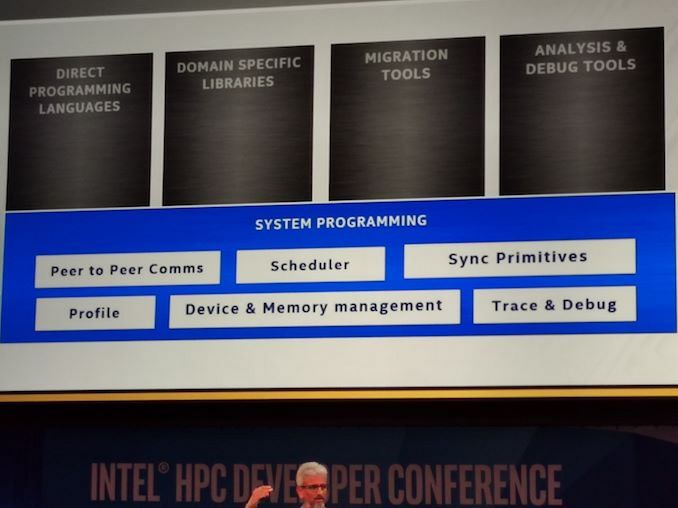

The underlying area that covers the rest is system programming. This includes scheduler management, peer-to-peer communications, and device and memory management, but also trace and debug tools, the latter of which will appear in their own context as well.

For direct programming languages, Intel is leaning heavily on its ‘Data Parallel C++’ language, or DPC++. This is going to be the main language that it encourages people to use if they want code that is portable across all the different types of hardware that oneAPI is going to cover. DPC++ is a mix of C++ and SYCL, with Intel in charge of where that goes.

But not everyone is going to want to rewrite their code in a new programming paradigm. To that end, Intel is also working to build a Fortran with OpenMP compiler, a standard C++ with OpenMP compiler, and a Python distribution that also works with the rest of oneAPI.
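As a rough illustration of the ‘standard C++ with OpenMP’ route, the sketch below annotates two ordinary loops with OpenMP pragmas rather than rewriting them in a new language. The function names `saxpy` and `dot` are hypothetical and not taken from any Intel library, and this is generic host-side OpenMP only; it assumes nothing about Intel's compiler or about offloading to an accelerator.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper (illustration only): computes y = a * x + y in place.
// An OpenMP-enabled compiler splits the loop iterations across threads;
// a compiler without OpenMP enabled ignores the pragma and runs serially.
void saxpy(float a, const std::vector<float>& x, std::vector<float>& y) {
    assert(x.size() == y.size());
    const std::ptrdiff_t n = static_cast<std::ptrdiff_t>(x.size());
    #pragma omp parallel for
    for (std::ptrdiff_t i = 0; i < n; ++i) {
        y[i] = a * x[i] + y[i];
    }
}

// Hypothetical helper (illustration only): dot product of x and y.
// The reduction clause gives each thread a private partial sum and
// combines the partial sums when the loop finishes.
double dot(const std::vector<double>& x, const std::vector<double>& y) {
    assert(x.size() == y.size());
    const std::ptrdiff_t n = static_cast<std::ptrdiff_t>(x.size());
    double sum = 0.0;
    #pragma omp parallel for reduction(+ : sum)
    for (std::ptrdiff_t i = 0; i < n; ++i) {
        sum += x[i] * y[i];
    }
    return sum;
}
```

The loops themselves stay as plain C++, which is the appeal of this route for existing codebases: the same source builds with or without OpenMP support, and the parallelism is opt-in at compile time.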

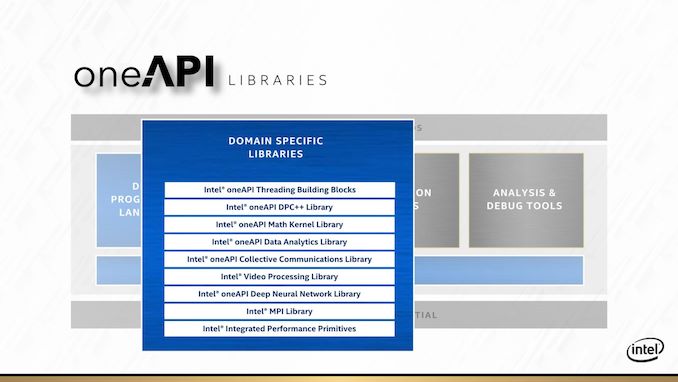

For anyone with a categorically popular workload, Intel is going to direct you to its library of libraries. Users will have heard of most of these before, such as the Intel Math Kernel Library (MKL) or the MPI libraries. What Intel is doing here is refactoring its most popular libraries specifically for oneAPI, so all the hooks needed for hardware targets are present and accounted for. It’s worth noting that these libraries, like their non-oneAPI counterparts, are likely to be sold on a licensing model.

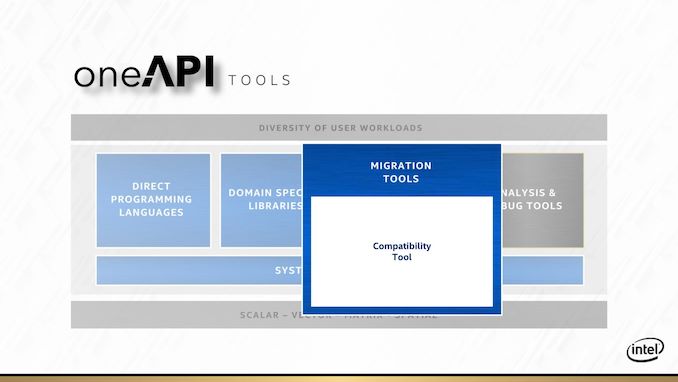

One big element of oneAPI is going to be migration tools. Intel has made a big deal of wanting to support CUDA translation to Intel hardware. If that sounds familiar, it’s because Raja Koduri already tried to do that with HIP at AMD. The HIP tool works well in some cases, although in almost all instances it still requires adjustments to the code to get something written in CUDA to work on AMD. When we asked Raja what he had learned from previous conversion tools and what makes it different for Intel, he said that the issue arises when code written for a wide vector machine gets moved to a narrower vector machine, which was AMD’s main issue. With Xe, the variable vector width means that oneAPI shouldn’t have as many issues translating CUDA to Xe in that instance. Time will tell, for obvious reasons. If Intel wants to be big in HPC, that’s the one trick they’ll need to execute on.

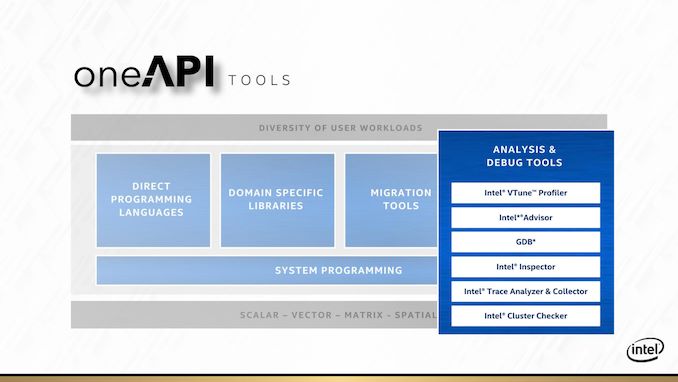

The final internal pillar of oneAPI is the set of analysis and debug tools. Popular products like VTune and Trace Analyzer will be getting the oneAPI overhaul so they can integrate more easily with a variety of hardware and code paths.

At the Intel HPC Developer Conference, Intel announced that the first version of the public beta is now available. Interested parties can start to use it, and Intel is interested in feedback.

The other angle to Intel’s oneAPI strategy is supporting it with its DevCloud platform. This allows users to have access to oneAPI tools without needing the hardware or installing the software. Intel stated that they aim to provide a wide variety of hardware on DevCloud such that potential users who are interested in specific hardware but are unsure what works best for them will be able to try it out before making a purchasing decision. DevCloud with the oneAPI beta is also now available.

47 Comments

Spunjji - Friday, December 27, 2019 - link

They were even worse on the notebook side of things. They were happy to sling dual-core + HT CPUs as "i7" processors until AMD announced the 2500U / 2700U; suddenly Intel came up with a "new" 15W Kaby Lake R CPU that looked suspiciously like a TDP-limited Kaby Lake, which itself was just a voltage-tweaked Skylake on an improved 14nm process.

Any interested hobbyists can observe the extent of the truth in this by taking a notebook with a 45W quad-core Skylake CPU, undervolting it by 100-125mV (the vast majority will do this) and dropping in a TDP limit. The barely perceptible change in performance that results is truly something to behold. My own tweaked 6700HQ averages a 22W TDP under load just from the undervolt.

extide - Monday, December 30, 2019 - link

Yeah just described what a mobile CPU is, why are you surprised?

JayNor - Wednesday, January 1, 2020 - link

I think if you asked Intel, they'd say ADAS, FPGAs and Optane are still very exciting programs for them, and probably already making money on ADAS and FPGAs.

Intel shipped 88 million LTE modems for iphones this year. How many LTE modems did AMD deliver?

Korguz - Thursday, January 2, 2020 - link

sources ????

Spunjji - Friday, December 27, 2019 - link

Depends whether you're focusing entirely on the CPU side of their business or making a more general assessment. Generally speaking, they've burned money on all sorts of unsuccessful projects (see: Atom cores in phones and tablets, their 4G modem projects).

Even on the CPU side they had their fair share of struggles prior to the 10nm-induced disasters. Ivy Bridge was a weak and somewhat cheapened follow-up to the absolute blockbuster that was Sandy, while Broadwell arrived late and barely showed its face at all on the desktop due to early struggles with 14nm. These things didn't have larger effects because AMD and their foundry competitors were performing so terribly at the time, but they were missteps nonetheless.

Spunjji - Friday, December 27, 2019 - link

Agreed re: worryingly light on technical details. I'm sure it all feels very real to the engineers working on it, but from an end-user perspective this is still very much a marketing exercise.

Sychonut - Wednesday, December 25, 2019 - link

Raja "The Wood Elf" Koduri better not let his mouth write a check his ass can't cash.

Spunjji - Friday, December 27, 2019 - link

Those who observed the Vega launch know he has no such compunctions :D

GreenReaper - Thursday, December 26, 2019 - link

Hmm. That HPC block diagram . . . where have I seen it before . . .

https://www.resetera.com/threads/how-would-a-ryzen...

peevee - Monday, December 30, 2019 - link

Can it deal with compressed representations of sparse matrices?