nForce4: PCI Express and SLI for Athlon 64

by Wesley Fink on October 19, 2004 12:01 AM EST

Posted in CPUs

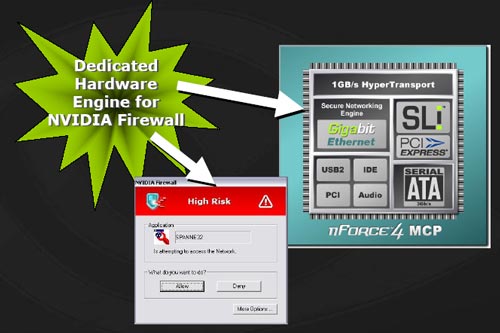

ActiveArmor: nVidia Secure Networking Engine

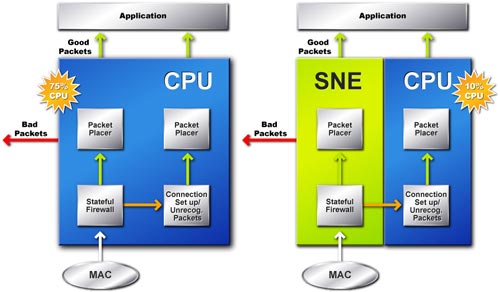

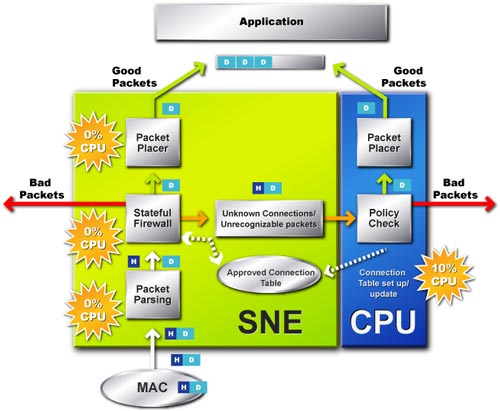

nVidia's on-chip Gigabit Ethernet is a popular feature of the nForce3-250 chipset. This was combined with the hardware-based nVidia Firewall on all but the basic 250 chipset. On-chip Gigabit Ethernet and the hardware firewall are still a significant part of the nForce4 chipset, and all of the nForce4 chipsets feature both. However, nVidia has expanded the network security features in the Ultra and SLI chipsets to provide further protection against network attacks. The new network security features are called ActiveArmor, and they are implemented as a dedicated hardware engine for the nVidia hardware Firewall.

nVidia's ActiveArmor enhances nVidia Firewall performance in several ways to protect from network attacks:

- Dedicated hardware engine enhances networking security while reducing CPU overhead

- Specialized features defend against hacker attacks

- User-friendly interface offers advanced management features

- Supports new Microsoft TCP Chimney Architecture for fast and secure networking

This compares to about 10% CPU overhead with the nVidia ActiveArmor hardware solution, which handles most of the network security management in the nForce4 chipset.

101 Comments

AlphaFox - Tuesday, October 19, 2004 - link

I take it no one here has used soundstorm with doom3: crackling and cutting out, having to reset the sound all the time. pain in the butt, how is it great??

jm0ris0n - Tuesday, October 19, 2004 - link

I still think that Anyone who would want SLI-PCIe WOULD NOT use onboard sound.

Viper96720 - Tuesday, October 19, 2004 - link

Ah i see I thought that was agp it is the 16x pci-e.

LotoBak - Tuesday, October 19, 2004 - link

55 -I take it your refering to this pic

http://www.anandtech.com/cpuchipsets/showimage.htm...

That long slot is NOT agp. It is PCIe 16x. The two above it are PCIe 4x I believe (could be wrong on the 4x)

jediknight - Tuesday, October 19, 2004 - link

nVidia's decision to dump SoundStorm makes no sense. If it was a business decision because the OEMs and media (??) as an earlier posted pointed out.. how can they justify the extra expense of SLI? What OEM is going to use that high-end tech? (Hint: Not Alienware.. they've got their own stuff)

The same people who want SLI want SoundStorm.. these enthusiasts are nVidia's core business (not by sales volume, by prestige, reputation, etc. in the marketplace) and not listening to your customers is a bad idea in my book..

jm0ris0n - Tuesday, October 19, 2004 - link

I could care less about the soundstorm :-p

*Drool@SLI goodness :-D*

Viper96720 - Tuesday, October 19, 2004 - link

#45 the board has AGP in case you didn't notice the long brown slot next to the PCI. The 2 small ones right above the audio is the PCI-E.

RebolMan - Tuesday, October 19, 2004 - link

The reason soundstorm is nice is because it outpreforms "real" cards - thus leading to better enjoyment of said games by soaking up less CPU time!

http://www.tweaktown.com/document.php?dType=review...

It produces a _better_ gaming experience in terms of sound, and still gets better frame-rates than a PC equipped with a SB Audigy Platinum Pro!

http://www.tweaktown.com/document.php?dType=review...

and...

http://www.tweaktown.com/document.php?dType=articl...

Why don't vendors pay as much attention to "APU" performance as they do to GPU performance?

RebolMan - Tuesday, October 19, 2004 - link

BAH! Where's my SoundStorm!!!?!?!?

Aquila76 - Tuesday, October 19, 2004 - link

#44 - That dual SLI board (page 3) looks like an MSI (VIA chipset? has an Envy controller at top) board, not the nForce4 SLI reference board. The nVidia reference board design may be different.