Two Phase Immersion Liquid Cooling at Supercomputing 2019

by Dr. Ian Cutress on November 29, 2019 2:00 PM EST

It would now appear we are saturated with two phase immersion liquid cooling (2PILC) – pun intended. One common element from the annual Supercomputing trade show, as well as the odd system at Computex and Mobile World Congress, is the push from some parts of the industry towards fully immersed systems in order to drive cooling. Last year at SC18 we saw a large number of systems featuring this technology – this year the presence was limited to a few key deployments.

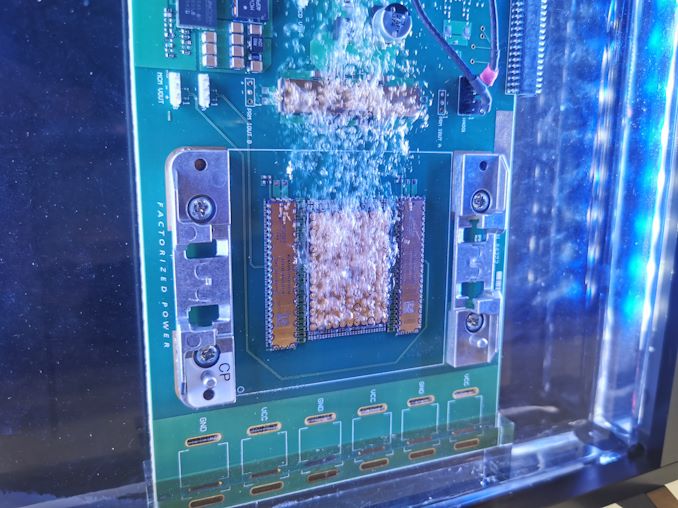

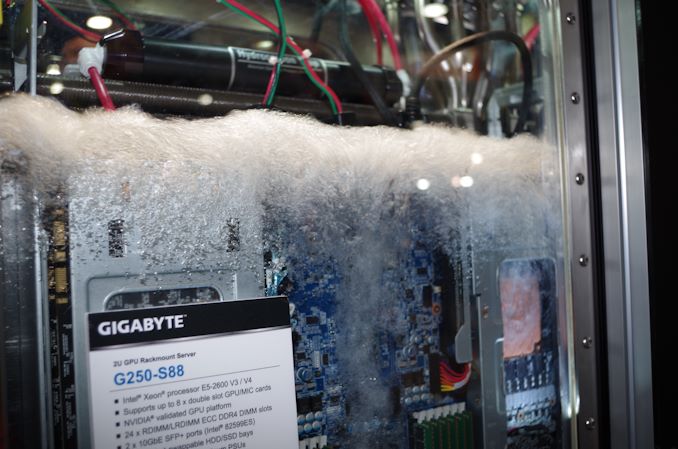

Two Phase Immersion Liquid Cooling (2PILC) involves taking a server with next to no heatsinks and putting it into a liquid that has a low boiling point. These liquids are often organic compounds (so not water, or oil) that make direct contact with the silicon. As the silicon is used it gives off heat, which is transferred into the liquid around it, causing it to boil. The most common liquids are variants of 3M Novec or Fluorinert, which can have boiling points around 59C. Because the heat turns the liquid into a gas, the gas rises, forcing convection of the liquid. The gas then condenses on a cold plate / water pipe and falls back into the system.

These liquids are non-ionic and so do not conduct electricity, and are of a medium viscosity in order to facilitate effective natural convection. Some deployments have extra forced convection, which helps with liquid transport and supports higher TDPs. But the idea is that with a server or PC in this material, everything can be kept at a reasonable temperature, and it also supports super dense designs.
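To put rough numbers on the boil-and-condense loop described above, here is a minimal sketch of the steady-state energy balance. It assumes the fluid is already at its boiling point and that all of the chip heat goes into the phase change. The latent heat figure is an illustrative placeholder rather than a datasheet value for any particular fluid, and the function names are hypothetical.

```python
# Illustrative placeholder only, NOT a datasheet value for any specific fluid.
ASSUMED_LATENT_HEAT_J_PER_KG = 100_000.0


def vapor_mass_rate(heat_load_w, latent_heat_j_per_kg=ASSUMED_LATENT_HEAT_J_PER_KG):
    """Mass of fluid boiled per second (kg/s), assuming all heat drives the phase change."""
    if heat_load_w < 0 or latent_heat_j_per_kg <= 0:
        raise ValueError("heat load must be >= 0 and latent heat must be > 0")
    return heat_load_w / latent_heat_j_per_kg


def fluid_level_is_stable(heat_load_w, condenser_capacity_w):
    """True when the condenser can turn vapor back into liquid at least as fast as it is produced."""
    return condenser_capacity_w >= heat_load_w


if __name__ == "__main__":
    load_w = 1_000.0  # hypothetical heat load for one immersed node
    print(f"vapor produced: {vapor_mass_rate(load_w):.4f} kg/s at {load_w:.0f} W")
    print("fluid level stable:", fluid_level_is_stable(load_w, condenser_capacity_w=1_200.0))
```

The point of the second function is that the amount of liquid in the tank stays constant as long as the condenser can reject at least as much heat as the hardware produces.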

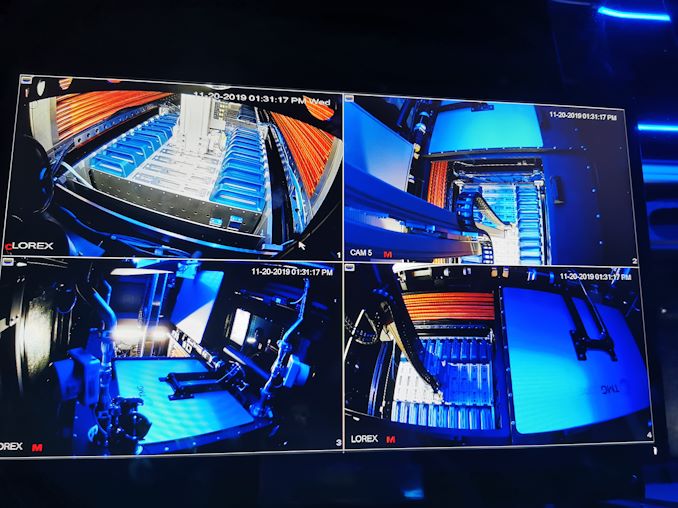

OTTO automated system with super dense racking

We reported on TMGcore’s OTTO systems, which use this 2PILC technology to create data center units of up to 60 kilowatts in 16 square feet – all the customer needs to do is supply power, water, and a network connection. Those systems also had automated pickup and removal, should maintenance be required. Companies like TMGcore claim that 2PILC technology often allows for increased longevity of the hardware, due to the controlled environment.
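For a sense of scale, the quoted 60 kilowatts in 16 square feet can be turned into a power density. This is simple arithmetic on the figures above rather than anything published by TMGcore, and the function name is hypothetical.

```python
SQFT_TO_M2 = 0.09290304  # exact conversion: (0.3048 m) squared


def power_density(power_kw, area_sqft):
    """Return (kW per square foot, kW per square metre) for a given footprint."""
    if area_sqft <= 0:
        raise ValueError("area must be positive")
    kw_per_sqft = power_kw / area_sqft
    kw_per_m2 = power_kw / (area_sqft * SQFT_TO_M2)
    return kw_per_sqft, kw_per_m2


if __name__ == "__main__":
    per_sqft, per_m2 = power_density(power_kw=60.0, area_sqft=16.0)
    print(f"{per_sqft:.2f} kW/sq ft, {per_m2:.1f} kW/m^2")
```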

One of the key directions of this technology last year was for crypto systems, or super-dense co-processors. We saw some of that again at SC19 this year, but not nearly as much. We also didn’t see any 2PILC servers directed towards 5G compute at the edge, which was also a common theme last year. All the 2PILC companies on the show floor this year were geared towards self-contained easy-to-install data center cubes that require little maintenance. This is perhaps unsurprising, given that supporting 2PILC without a dedicated unit is quite difficult unless the data center is designed for it from the ground up.

One thing we did see was that component companies, such as those building VRMs, were validating their hardware for 2PILC environments.

Typically a data center will discuss its energy efficiency in terms of PUE, or Power Usage Effectiveness. PUE is the ratio of the total power drawn by the facility to the power that reaches the computing hardware, so a PUE of 1.50 for example means that for every 1.5 megawatts of power used, 1 megawatt goes to useful work. Standard air-cooled data centers can have a PUE of 1.3-1.5, while purpose built air-cooled data centers can go as low as a PUE of 1.07. Liquid cooled data centers are also in this 1.05-1.10 PUE range, depending on the construction. The self-contained 2PILC units we saw at Supercomputing this year were advertising PUE values of 1.028, which is the lowest I’ve ever seen. That being said, given the technology behind them, I wouldn’t be surprised if a 2PILC rack cost 10x as much as a standard air-cooled rack.
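To make those PUE figures concrete, here is a small sketch that converts a PUE value into the overhead power spent on cooling and power delivery for a fixed 1 megawatt of IT load. The PUE values are the ones quoted above, while the 1 megawatt load and the function names are assumptions for illustration.

```python
def total_facility_power(it_load_mw, pue):
    """Total power drawn by the facility (MW) for a given IT load and PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


def overhead_power(it_load_mw, pue):
    """Power spent on everything except the IT load (MW), e.g. cooling and power delivery."""
    return total_facility_power(it_load_mw, pue) - it_load_mw


if __name__ == "__main__":
    it_load_mw = 1.0  # assumed IT load, for illustration only
    examples = [
        ("standard air-cooled (upper end)", 1.50),
        ("purpose built air-cooled", 1.07),
        ("2PILC unit, as advertised", 1.028),
    ]
    for label, pue in examples:
        overhead_kw = overhead_power(it_load_mw, pue) * 1000.0
        print(f"{label}: PUE {pue} -> {overhead_kw:.0f} kW overhead per MW of IT load")
```

Seen this way, moving from a PUE of 1.50 to 1.028 cuts the overhead from roughly half a megawatt to a few tens of kilowatts for every megawatt of compute.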

35 Comments

sharath.naik - Sunday, December 1, 2019 - link

So if the load pushes the whole liquid reservoir to above 59C it all evaporates? Not a smart thing to do, it is wiser to have enough thermal headroom up to 100C. The thermal cooling is more efficient at a higher temperature difference anyway.

destorofall - Monday, December 2, 2019 - link

Under optimal conditions one would want these liquids to be at the saturation temperature (boiling point) to take full advantage of the nucleate boiling region of the boiling curve. Provided the condensers have adequate ability to condense the amount of vapor being produced by the load, there is never the ability for all the fluid to boil away.

mode_13h - Tuesday, December 3, 2019 - link

Forget what you know about PC cooling. It would take a tremendous amount of energy to boil off all the fluid at once. Most of the energy transfer, in this type of cooling, happens during the phase-change from liquid to gas, so it's not like your PC case temperatures which can gradually increase until they exceed some threshold.

Basically, you have to know the upper range of how much heat the chips can dissipate, and then make sure your heat exchanger can more than keep up. In the event that something in the chain fails, the CPUs/GPUs/etc. will thermally throttle, worst case.

alufan - Monday, December 2, 2019 - link

Weird, I can remember this was all the rage for high end systems about 10 years ago and then it went away due to the unobtainable cost of the liquid from 3M. Mineral oil was a big thing as well, but only for the board components, plus of course it's difficult to remove the heat soak from the oil without a big reservoir. Anyone else remember the Zalman Reserator? You needed something similar to keep the oil in check after a while, or a very specific case with lots of fins.

destorofall - Monday, December 2, 2019 - link

If only the board and power supply manufacturers could make their products denser, then fluid cost would become less of a concern.