The NVIDIA GeForce GTX 1650 Super Review, Feat. Zotac Gaming: Bringing Balance To 1080p

by Ryan Smith on December 20, 2019 9:00 AM EST

Power, Temperature, & Noise

Last, but not least of course, is our look at power, temperatures, and noise levels. While a high performing card is good in its own right, an excellent card can deliver great performance while also keeping power consumption and the resulting noise levels in check.

GeForce Video Card Voltages

| 1650S Max | 1660 Max | 1650S Idle | 1660 Idle |
|---|---|---|---|
| 1.05v | 1.05v | 0.65v | 0.65v |

If you’ve seen one TU116 card, then you’ve seen them all as far as voltages are concerned. Even with this cut-down part, NVIDIA still lets the GTX 1650 Super run at up to 1.05v, allowing it to boost as high as 1950MHz.

GeForce Video Card Average Clockspeeds

| Game | GTX 1660 | GTX 1650 Super | GTX 1650 |
|---|---|---|---|
| Max Boost Clock | 1935MHz | 1950MHz | 1950MHz |
| Boost Clock | 1785MHz | 1725MHz | 1695MHz |
| Shadow of the Tomb Raider | 1875MHz | 1860MHz | 1845MHz |
| F1 2019 | 1875MHz | 1875MHz | 1860MHz |
| Assassin's Creed: Odyssey | 1890MHz | 1890MHz | 1905MHz |
| Metro: Exodus | 1875MHz | 1875MHz | 1860MHz |
| Strange Brigade | 1890MHz | 1860MHz | 1860MHz |
| Total War: Three Kingdoms | 1875MHz | 1890MHz | 1875MHz |
| The Division 2 | 1860MHz | 1830MHz | 1800MHz |
| Grand Theft Auto V | 1890MHz | 1890MHz | 1905MHz |
| Forza Horizon 4 | 1890MHz | 1875MHz | 1890MHz |

Meanwhile the clockspeed situation looks relatively good for the GTX 1650 Super. Despite having a 20W lower TDP than the GTX 1660 and an official boost clock 60MHz lower, in practice our GTX 1650 Super card is typically within one step of the GTX 1660. This also keeps it fairly close to the original GTX 1650, which boosted higher in some cases and lower in others. In practice this means that the performance difference between the three cards is being driven almost entirely by the differences in CUDA core counts, as well as the use of GDDR6 in the GTX 1650 Super. Clockspeeds don’t seem to be a major factor here.
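To make the "within one step" comparison concrete, here is a minimal Python sketch that tallies the per-game averages from the clockspeed table. The figures are copied from the table; the 15MHz step size is an assumption on our part (every value in the table is a multiple of 15), and the names `CLOCKS_MHZ`, `STEP_MHZ`, and `summarize` are purely illustrative.

```python
# Average in-game clockspeeds (MHz), copied from the clockspeed table.
# Tuple order: (GTX 1660, GTX 1650 Super, GTX 1650).
CLOCKS_MHZ = {
    "Shadow of the Tomb Raider": (1875, 1860, 1845),
    "F1 2019": (1875, 1875, 1860),
    "Assassin's Creed: Odyssey": (1890, 1890, 1905),
    "Metro: Exodus": (1875, 1875, 1860),
    "Strange Brigade": (1890, 1860, 1860),
    "Total War: Three Kingdoms": (1875, 1890, 1875),
    "The Division 2": (1860, 1830, 1800),
    "Grand Theft Auto V": (1890, 1890, 1905),
    "Forza Horizon 4": (1890, 1875, 1890),
}

# Assumption: one boost "step" is 15 MHz, inferred from the table's
# values all being multiples of 15. The article does not state this.
STEP_MHZ = 15


def mean(values):
    """Arithmetic mean of a sequence of numbers."""
    values = list(values)
    return sum(values) / len(values)


def summarize(clocks=CLOCKS_MHZ, step=STEP_MHZ):
    """Compare the GTX 1650 Super against the GTX 1660 across all games."""
    gtx_1660 = [row[0] for row in clocks.values()]
    gtx_1650_super = [row[1] for row in clocks.values()]
    gtx_1650 = [row[2] for row in clocks.values()]

    # Positive delta means the GTX 1660 clocked higher in that game.
    deltas = [a - b for a, b in zip(gtx_1660, gtx_1650_super)]

    return {
        "mean_1660": mean(gtx_1660),
        "mean_1650_super": mean(gtx_1650_super),
        "mean_1650": mean(gtx_1650),
        "mean_delta_1660_vs_super": mean(deltas),
        "games_within_one_step": sum(1 for d in deltas if abs(d) <= step),
        "games_total": len(deltas),
    }


if __name__ == "__main__":
    for key, value in summarize().items():
        print(f"{key}: {value}")
```

On the table's figures, the GTX 1650 Super averages roughly 8MHz below the GTX 1660 and lands within one 15MHz step of it in seven of the nine games, with Strange Brigade and The Division 2 as the two exceptions.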

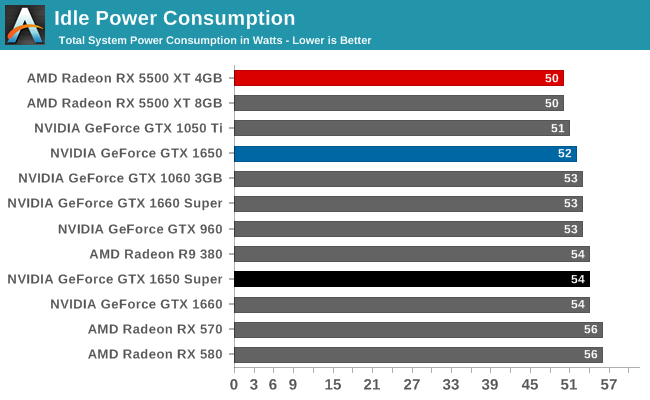

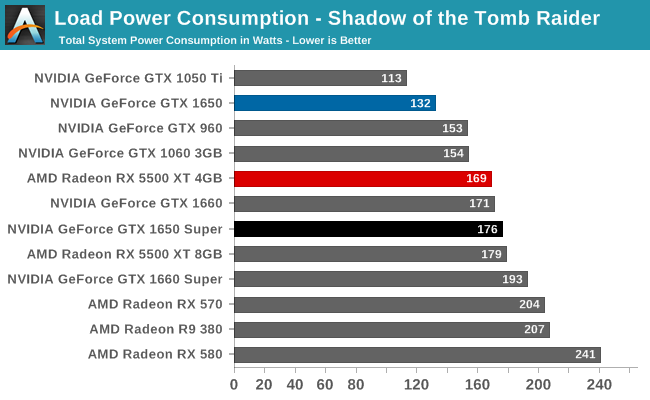

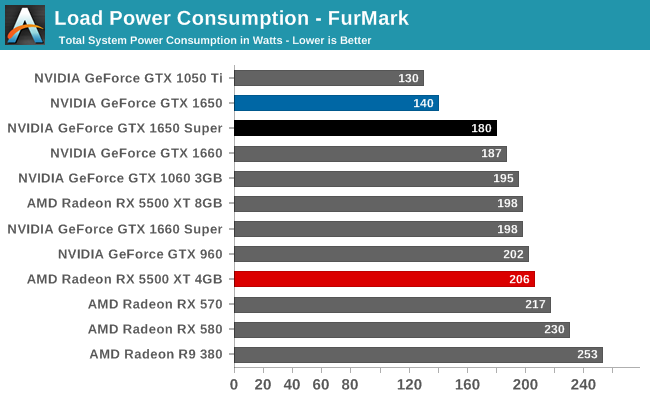

Shifting to power consumption, we see the cost of the GTX 1650 Super’s greater performance. It’s well ahead of the GTX 1650, but it’s pulling more power in the process. In fact I am a bit surprised by just how close it is (at the wall) to the GTX 1660, especially under Tomb Raider. While on paper it has a 20W lower TDP, in practice it actually fares a bit worse than the next level TU116 card. It’s only under FurMark, a pathological use case, that we see the GTX 1650 Super slot in under the GTX 1660. The net result is that the GTX 1650 Super seems to be somewhat inefficient, at least by NVIDIA standards. It doesn’t save a whole lot of power versus the GTX 1660 series, despite the lower performance.

Which also means it doesn’t fare especially well against the Radeon RX 5500 XT series. As with the GTX 1660, the RX 5500 XT is drawing less power than the GTX 1650 Super under Tomb Raider. It’s only by maxing out all of the cards with FurMark that the GTX 1650 Super pulls ahead. In practice I expect real world conditions to be between these two values – Tomb Raider may be a bit too hard on these low-end cards – but regardless, this would put GTX 1650 Super only marginally ahead of RX 5500 XT.

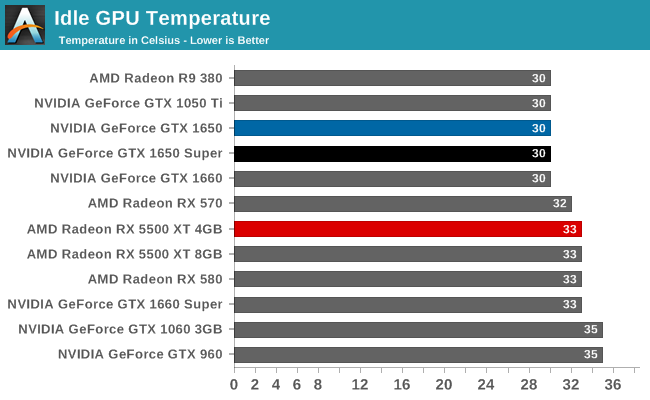

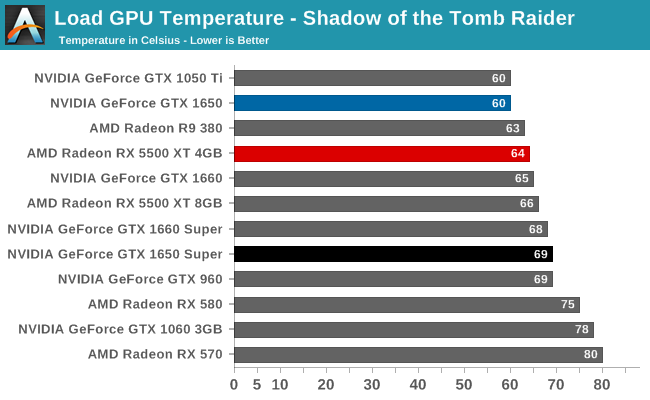

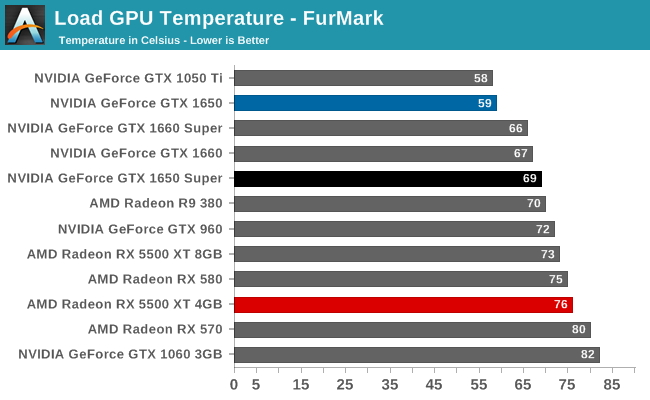

Looking at GPU temperatures, Zotac’s GTX 1650 Super card puts up decent numbers. While the 100W card understandably gets warmer than its 75W GTX 1650 sibling, we never see the GPU temperatures cross 70C. The card is keeping plenty cool.

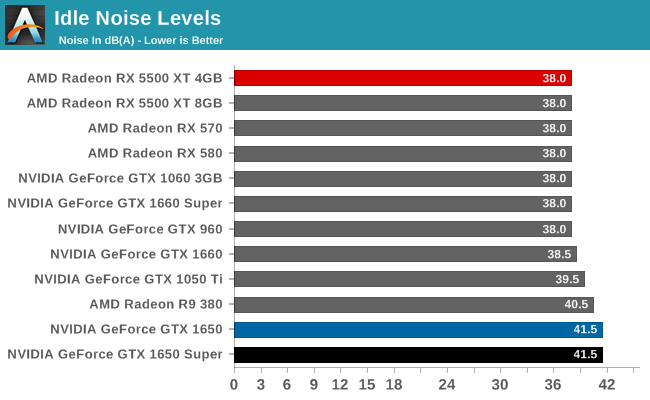

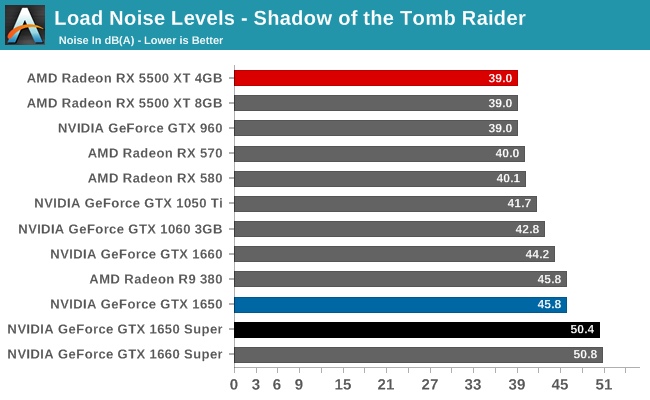

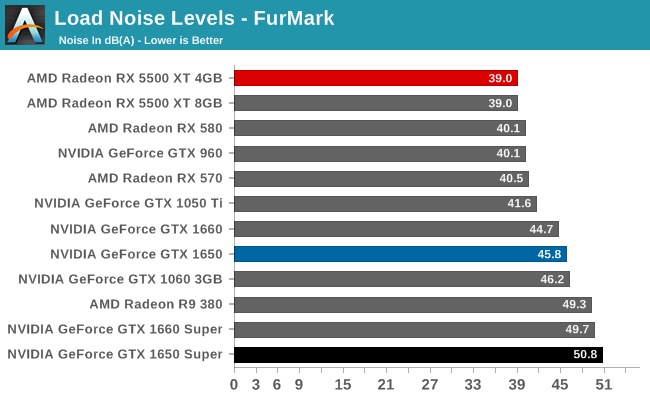

But when it comes time to measure how much noise the card is producing, we find a different picture. In order to keep the GPU at 69C, the Zotac GTX 1650 Super’s fans are having to do some real work. And unfortunately, the small 65mm fans just aren’t very quiet once they have to spin up. To be sure, the fans as a whole aren’t anywhere near max load – we recorded just 55% as reported by NVIDIA’s drivers – however this is still enough to push noise levels over 50 dB(A).

Zotac’s single-fan GTX 1650 card didn’t fare especially well here either, but by the time we reach FurMark, the GTX 1650 Super does even worse. It’s louder than any GTX 1660 card we’ve tested, even marginally exceeding the GTX 1660 Super.

All of this makes for an interesting competitive dichotomy given last week’s launch of the Radeon RX 5500 XT. The card we tested there, Sapphire’s Pulse RX 5500 XT, is almost absurd in how overbuilt it is for a 130W product, with a massive heatsink and equally massive fans. But it moves more heat than Zotac’s GTX 1650 Super with a fraction of the noise.

If nothing else, this is a perfect example of the trade-offs that Sapphire and Zotac made with their respective cards. Zotac opted to maximize compatibility so that the GTX 1650 Super would fit in virtually any machine that the GTX 1650 (vanilla) can fit in, at the cost of having to use a relatively puny cooler. Sapphire went the other direction, making a card that’s hard to fit in some machines, but barely has to work at all to keep itself cool. Ultimately neither approach is the consistently better one – despite its noise advantage, the Sapphire card’s superiority ends the moment it can’t fit in a system – underscoring the need for multiple partners (or at least multiple board designs). Still, it’s hard to imagine that Zotac couldn’t have done at least a bit better here; a 50 dB(A) card is not particularly desirable, especially for something as low-powered as a GTX 1650 series card.

67 Comments

guachi - Friday, December 20, 2019

The 1650S overall is the card to get if buying a new card. But I'd still recommend a used 570 or 580 (and maybe a new 570 if you get one on sale).

Polaris will never die.

I just wouldn't buy THIS 1650S. The noise. Ouch! 50dB?

No.

lmcd - Friday, December 20, 2019

It's a convenient size, enough so that airflow in my case will result in better overall acoustics compared to a larger card. Agreed that there are better designs yet, but this isn't as awful as you're implying.

Spunjji - Monday, December 23, 2019

50dB is terrible under any circumstance.

lmcd - Friday, December 20, 2019

Gonna be honest I don't quite understand why the 1050 Ti and 1060 3GB both need to be in this graph set while the 1070 didn't make it in. Usually there aren't performance regressions from one generation to another, so it's more interesting to compare a higher card from the previous generation to a lower card from the current generation.

Ryan Smith - Friday, December 20, 2019

The 1070 was a $350 card its entire life. Whereas the 1050 Ti and 1060 3GB were the cards closest to being the 1650 Super's predecessor (1050 Ti was positioned a bit lower, 1060 3GB a bit higher). So the latter two are typically the most useful for generational comparisons.

At any rate, this is why we have Bench. So you can use that to make any card comparisons you'd like to see that aren't in this article itself. https://www.anandtech.com/bench/GPU19/2638

catavalon21 - Saturday, December 21, 2019

In doing so, it paints an interesting picture for those of us who do not upgrade every generation. While the 970 can't be compared directly to it in Bench, it's interesting to see how many benchmarks show it besting the 980 - which was a $550 card when it debuted. Maybe the RTX series cards are worthy of their criticisms for gen-over-gen improvement in performance per dollar, but not this guy. Yes, I know 980 was 2 generations ago, but still. The 980 takes some of the benchmarks, especially CUDA, but across the board, the 1650S competes very well. For a card to have 980-like performance for $160 at 100 watts, I'm impressed.

The_Assimilator - Saturday, December 21, 2019

No you're wrong, according to forum keyboard warriors there's been no improvement in price/perf in the last half decade because they can't get top-tier performance for $100. ;)

Spunjji - Monday, December 23, 2019

That we're only seeing a price/performance improvement over Pascal more than half-way into the Turing generation kinda proves those "keyboard warriors" correct, though. It's nice, but it was annoying when on release a large chunk of the press decided to sing songs about how new boundaries of performance were being pushed (true!) while downplaying how perf/$ remained still or regressed (equally true). Throwing up some straw men now doesn't change that.

Spunjji - Monday, December 23, 2019

The 1060 6GB already beat out the 980 under most circumstances - at worst it was roughly equal. That was a very nice perf/$ improvement indeed for a single generation, and it's where we got most of the gains the 1650 Super is now building on.

The 2060 is an instructive example of how the RTX series disappointed in that regard, as the cost increase roughly matched the performance increase and its RTX features are arguably useless.

Kangal - Saturday, December 21, 2019

Thanks for the review Ryan.

But I have to go against you on the mention of 4GB VRAM capacity for 2020. You have forgotten something very important. Timing.

Sure, PC Gaming makes a lot more money than Console Gaming (and Mobile Gaming is even larger!!), but that is because the wealth is not distributed fairly, it's quite concentrated. Whereas the Console Market is more spread out, so publishers can make profits more universally and over a longer timeframe. On top of that, there's the marketing and the fear of piracy. Which is the reason why Game Publishers target the consoles first, then afterwards port their titles to PC... even though originally they developed them on PC!

I needed to mention the above background first to give some clarification. Games for 2020 will primarily be made to target the PS4, and they might get ported to the PS5 or Xbox X. Or even those launch titles for the PS5/XbX, they will actually be made for the PS4 first, and then have enhancements made. Remember the 2014 games which were still very much PS3/360 games?

And it will take AT LEAST a full year for the transition to occur. So games in Early 2022 will still target the PS4, which means their PC Ports will be fine for current day low-end PCs. I mean even with the PS5 release, the PS4 sales will continue, and that's too huge a market base for the companies to simply ignore. And even in the PC Market, most gamers have something that's slower than a GTX 1660 Ti. Besides, low VRAM isn't too much of an issue, most of the time the game will only require 3GB RAM to run perfectly. If you have more available, say 8GB, then without any changes from your end, you will see it now start using say 6GB of VRAM. That's Double! And you didn't even change the settings! Why? Most games now use the VRAM to store assets they think they might use later on, so that they don't have to load them when required. This is analogous to how Mac/Linux uses System RAM, as opposed to how, say, Windows does. If a game does have to load them, performance will take a momentary dip, but it stays perfectly playable.

And even if the games now require more VRAM by default to be playable, in most cases that problem too can be solved. You can change individual settings one-by-one and see which has the most effect on the graphical fidelity, and how much it penalises your VRAM/RAM usage, and your framerates. I mean look at lowspecgamer, to see how far he pushes it. Though for a better idea, have a look at HardwareUnboxed on YouTube, and see how they optimise graphics for the recently released Red Dead Redemption 2 (PC) game. They fiddled with the graphics to get a negligible downgrade, but boosted their framerates by +66%.

So I think 4GB VRAM will become the new 2GB VRAM (which itself replaced the 1GB VRAM), but that doesn't mean they're compromising on the longevity of the card. I think 4GB will be viable for the midrange up to 2022, then they're strictly just low-end. Asking gamers to get 8GB instead of 4GB for these low-midrange cards is not really sensible at the prices... it is exactly like asking the GTX 960 buyers to get the 4GB Variants instead of the 2GB Variants.