It’s a Cascade of 14nm CPUs: AnandTech’s Intel Core i9-10980XE Review

by Dr. Ian Cutress on November 25, 2019 9:00 AM EST

Power Consumption

We’ve covered the story of Intel’s turbo in detail across multiple articles: the TDP on the box doesn’t mean a whole lot, the motherboard you’re using can ultimately determine how long your CPU turbos for, and the power limits of the processor are ultimately decided by the motherboard manufacturer’s BIOS settings unless you change them. Intel only gives recommendations on peak turbo power and the length of the turbo; the only thing Intel defines is the peak frequency based on load when turbo is allowed.

Talking Intel’s TDP and Turbo

Interviewing an Intel Fellow about TDP and Turbo

Comparing Turbo: AMD and Intel

This means that an extremely conservative system might not allow the power to go above TDP, while a system built to deliver all the power the chip asks for might allow the processor to turbo ad infinitum. The reality is usually somewhere in the middle, but on a high-end desktop platform, where most motherboards are engineered to withstand almost anything thrown at them, we’re often going to see an elongated or persistent turbo.
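To make the three cases concrete, here is a minimal sketch of how the board's limits shape package power under a sustained load. It assumes Intel's usual naming for the limits (PL1 for the sustained limit, PL2 for the peak turbo limit, tau for the turbo time constant), none of which appear on this page, and it uses a simplified moving-average rule in place of the real firmware logic. The 28-second tau and the 1000 W "unlimited" board are hypothetical placeholders; 165 W, 1.25x and 189 W are the figures quoted in this review.

```python
def simulate_package_power(demand_w, pl1_w, pl2_w, tau_s, duration_s, dt_s=1.0):
    """Return package power per time step for a fully loaded chip.

    Simplified model (an assumption, not Intel's exact algorithm): the chip
    may draw up to pl2_w while a moving average of its power is below pl1_w,
    and is held to pl1_w once the average reaches it.
    """
    avg_w = 0.0              # moving average of power, starting from idle
    alpha = dt_s / tau_s     # weight of each new sample in the average
    trace = []
    for _ in range(int(duration_s / dt_s)):
        limit_w = pl2_w if avg_w < pl1_w else pl1_w
        power_w = min(demand_w, limit_w)   # the chip never draws more than it needs
        avg_w += alpha * (power_w - avg_w)
        trace.append(power_w)
    return trace


def seconds_above(trace, threshold_w, dt_s=1.0):
    """Total time the trace spends above threshold_w."""
    return sum(dt_s for power_w in trace if power_w > threshold_w)


DEMAND_W = 189   # peak package power measured in this review
TDP_W = 165      # rated TDP of the Core i9-10980XE

# Conservative board: never allows more than TDP.
conservative = simulate_package_power(DEMAND_W, TDP_W, TDP_W, 28, 300)
# Board following a 1.25x peak recommendation with a hypothetical 28 s tau.
recommended = simulate_package_power(DEMAND_W, TDP_W, 1.25 * TDP_W, 28, 300)
# Board with limits set far above anything the chip will draw (hypothetical 1000 W).
unlimited = simulate_package_power(DEMAND_W, 1000, 1000, 28, 300)

for name, trace in [("conservative", conservative),
                    ("recommended", recommended),
                    ("unlimited", unlimited)]:
    print(name, "seconds above TDP:", seconds_above(trace, TDP_W))
```

In this model the conservative board never exceeds TDP, the middle case turbos for a while and then settles at TDP, and the unlimited board stays at full demand for the whole run, which is the behavior a board like the one used in this review would be expected to show.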

It’s worth noting that for consumer workloads, most of the work can happen within a reasonable turbo window, and thus sustained performance metrics aren’t that important. But today we are testing high-end desktop hardware, and because Intel doesn’t explicitly define peak turbo power or turbo length (it only provides recommendations that motherboard manufacturers can and do ignore), I asked Intel what it believes reviewers should do when trying to compare performance. The answer wasn’t very helpful: ‘test with a range of motherboards’.

In our previous review of the Core i9-9900KS, I did two sets of tests: benchmarks at the motherboard default, and tests at the Intel recommended turbo settings. The motherboard defaults, for that motherboard in question, were essentially full turbo all the time. Intel’s recommended settings gave some decreases in the long benchmarks, around 7%, but the rest of the tests were about the same.

For this review, we’re using ASRock’s X299 OC Formula motherboard. This is a motherboard designed for high-end and extreme overclocking, and is built accordingly. As a result, our sustained turbo and power limits are set very high. This is a HEDT system, and most HEDT motherboards are built and engineered this way, so we expect our results here to be commensurate with most users’ performance.

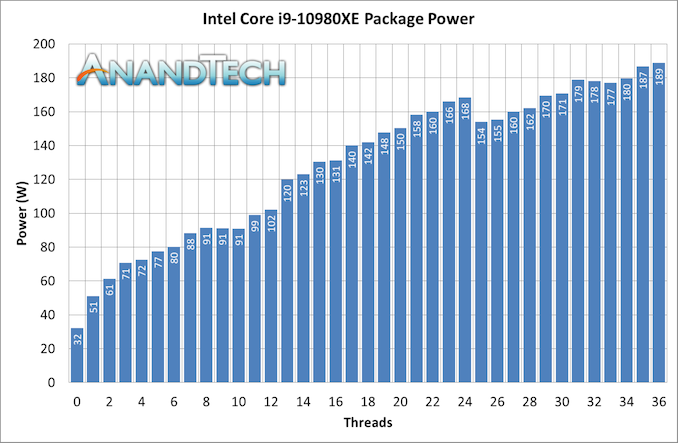

For our power consumption metrics, Intel actually does some obfuscation on its high-end platform. Unlike AMD, we cannot extract the per-core power numbers from the internal registers during a sustained workload. As a result, all we get are total package numbers, which show the cooling requirements of the processor but also include the power consumption of the DRAM controller, uncore, and PCIe root complexes.

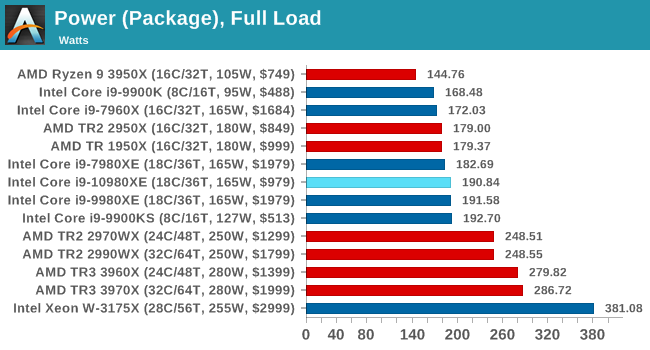

The TDP of this chip is 165 W. Intel normally recommends a peak power of 1.25x TDP, which would be about 206 W, so the 189 W value we see is under this. The chip we got is technically an engineering sample, not a retail part, although we usually expect the final stepping engineering samples to be identical to what is sold in the market. Despite this, your mileage may vary.
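As a quick check of that comparison, the arithmetic can be written out as follows. This is only a sketch using the three figures quoted in this review (165 W TDP, the 1.25x recommendation, and the 189 W measured peak); the variable names are illustrative.

```python
TDP_W = 165.0            # rated TDP from this review
PEAK_MULTIPLIER = 1.25   # Intel's usual peak power recommendation, per this review
MEASURED_PEAK_W = 189.0  # peak package power observed in this review

# Recommended peak power, and how far the measured peak sits below it.
recommended_peak_w = TDP_W * PEAK_MULTIPLIER
headroom_w = recommended_peak_w - MEASURED_PEAK_W

print(f"Recommended peak: {recommended_peak_w:.2f} W")
print(f"Measured peak:    {MEASURED_PEAK_W:.2f} W")
print(f"Headroom:         {headroom_w:.2f} W")
```

The measured peak sits a little over 17 W below the recommended ceiling, and about 24 W above the rated TDP.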

When we compare this peak to other CPUs:

79 Comments

Thanny - Wednesday, November 27, 2019 - link

Zen does not support AVX-512 instructions. At all.

AVX-512 is not simply AVX-256 (AKA AVX2) scaled up.

Something to consider is that AVX-512 forces Intel chips to run at much slower clock speeds, so if you're mixing workloads, using AVX-512 instructions could easily cause overall performance to drop. It's only in an artificial benchmark situation where it has such a huge advantage.

Everett F Sargent - Monday, November 25, 2019 - link

Obviously, AMD just caught up with Intel's 256-bit AVX2, prior to Ryzen 3 AMD only had 128-bit AVX2 AFAIK. It was the only reason I bought into a cheap Ryzen 3700X Desktop (under $600US complete and prebuilt). To get the same level of AVX support, bitwise.

I've been using Intel's Fortran compiler since 1983 (back then it was on a DEC VAX).

So I only do math modeling at 64-bits like forever (going back to 1975), so I am very excited that AVX-512 is now under $1KUS. An immediate 2X speed boost over AVX2 (at least for the stuff I'm doing now).

rahvin - Monday, November 25, 2019 - link

I'd be curious how much the AVX512 is used by people. It seems to be highly tailored for only big math operations, which kinda limits its practical usage to science/engineering. In addition, the power use of the module was massive in the last article I read, to the point that the main CPU throttled when the AVX512 was engaged for more than a few seconds.

I'd be really curious what percentage of people buying HEDT are using it, or if it's just a niche feature for science/engineering.

TEAMSWITCHER - Tuesday, November 26, 2019 - link

If you don't need AVX512 you probably don't need or even want a desktop computer. Not when you can get an 8-core/16-thread MacBook Pro. Desktops are mostly built for show and playing games. Most real work is getting done on laptops.

Everett F Sargent - Tuesday, November 26, 2019 - link

LOL, that's so 2019.

Where I am from it's smartwatches all the way down.

Cue Four Yorkshiremen.

AIV - Tuesday, November 26, 2019 - link

Video processing and image processing can also benefit from AVX512. Many AI algorithms can benefit from AVX512. The problem for Intel is that in many cases where AVX512 gives a good speedup, a GPU would be an even better choice. Also, software support for AVX512 is lacking.

Everett F Sargent - Tuesday, November 26, 2019 - link

Not so!

https://software.intel.com/en-us/parallel-studio-x...

It compiles and runs on both Intel and AMD. Full AVX-512 support on AVX-512 hardware.

You have to go full Volta to get true FP64, otherwise desktop GPUs are real FP64 dogs!

AIV - Wednesday, November 27, 2019 - link

There are tools and compilers for software developers, but not much end-user software actually uses them. FP64 is mostly required only in the science/engineering category. Image/video/AI processing is usually just fine with lower precision. I'd add that GPUs also only have small (<=32GB) RAM while Intel/AMD CPUs can have hundreds of GB or more. Some datasets do not fit into a GPU. AVX512 still has its niche, but it's getting smaller.

thetrashcanisfull - Monday, November 25, 2019 - link

I asked about this a couple of months ago. Apparently the 3DPM2 code uses a lot of 64b integer multiplies; the AVX2 instruction set doesn't include packed 64b integer mul instructions - those were added with AVX512, along with some other integer and bit manipulation stuff. This means that any CPU without AVX512 is stuck using scalar 64b muls, which on modern microarchitectures only have a throughput of 1/clock. IIRC the Skylake-X core and derivatives have two pipes capable of packed 64b muls, for a total throughput of 16/clock.

I do wish AnandTech would make this a little more clear in their articles though; it is not at all obvious that the 3DPM2 is more of a mixed FP/Integer workload, which is not something I would normally expect from a scientific simulation.

I also think that the testing methodology on this benchmark is a little odd - each algorithm is run for 20 seconds, with a 10 second pause in between? I would expect simulations to run quite a bit longer than that, and the nature of turbo on CPUs means that steady-state and burst performance might diverge significantly.
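For readers following the 16/clock figure in the comment above, here is a small sketch of the arithmetic behind it. It takes the comment's numbers at face value as assumptions (two vector pipes, each retiring one packed 512-bit multiply per clock, against one scalar multiply per clock) and is not a measured or vendor-documented throughput.

```python
VECTOR_BITS = 512   # width of an AVX-512 register
LANE_BITS = 64      # width of each integer being multiplied
VECTOR_PIPES = 2    # assumption taken from the comment, not verified here
SCALAR_PER_CLOCK = 1  # scalar 64-bit multiply throughput cited in the comment

# Each 512-bit register holds this many 64-bit integers.
lanes_per_vector = VECTOR_BITS // LANE_BITS

# Peak multiplies per clock if both pipes issue a full vector every cycle.
packed_per_clock = lanes_per_vector * VECTOR_PIPES

print("64-bit lanes per vector:", lanes_per_vector)
print("Packed multiplies per clock:", packed_per_clock)
print("Ratio over scalar:", packed_per_clock // SCALAR_PER_CLOCK)
```

Under those assumptions the packed path has a 16x peak advantage per clock, before accounting for the lower clock speeds under AVX-512 that other commenters mention.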

Dolda2000 - Monday, November 25, 2019 - link

Thanks a lot, that does explain much.