The Intel Core i9-9990XE Review: All 14 Cores at 5.0 GHz

by Dr. Ian Cutress on October 28, 2019 10:00 AM EST

CPU Performance: Web and Legacy Tests

While more the focus of low-end and small form factor systems, web-based benchmarks are notoriously difficult to standardize. Modern web browsers are frequently updated, with no recourse to disable those updates, which makes it difficult to keep a common platform. The fast-paced nature of browser development means that version numbers (and performance) can change from week to week. Despite this, web tests are often a good measure of user experience: much of today's office work revolves around web applications, particularly email and office apps, but also interfaces and development environments. Our web tests include some of the industry standard tests, as well as a few popular but older tests.

We have also included our legacy benchmarks in this section, representing a stack of older code for popular benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

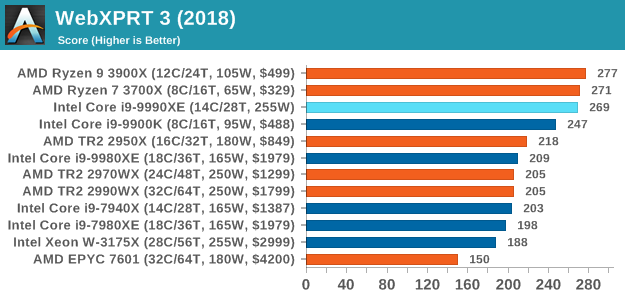

WebXPRT 3: Modern Real-World Web Tasks, including AI

The company behind the XPRT test suites, Principled Technologies, has recently released the latest web-test, and rather than attach a year to the name have just called it ‘3’. This latest test (as we started the suite) has built upon and developed the ethos of previous tests: user interaction, office compute, graph generation, list sorting, HTML5, image manipulation, and even goes as far as some AI testing.

For our benchmark, we run the standard test which goes through the benchmark list seven times and provides a final result. We run this standard test four times, and take an average.
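
As a rough illustration of the reporting step described above, the sketch below averages the final scores from four full runs. The function name and the score values are hypothetical placeholders, not measured results.

```python
from statistics import fmean


def average_runs(final_scores):
    """Return the arithmetic mean of the final scores from repeated full runs."""
    if not final_scores:
        raise ValueError("need at least one run")
    return fmean(final_scores)


# Hypothetical final scores from four full runs (placeholder values only).
example_runs = [250.0, 252.0, 249.0, 253.0]
reported_score = average_runs(example_runs)
```

Averaging whole-run scores, rather than individual sub-test timings, keeps the reported figure directly comparable to what a single run of the benchmark displays.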

Users can access the WebXPRT test at http://principledtechnologies.com/benchmarkxprt/webxprt/

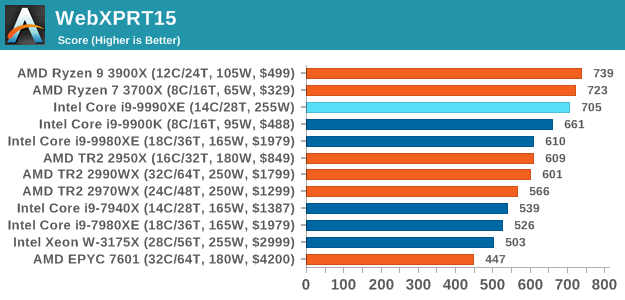

WebXPRT 2015: HTML5 and Javascript Web UX Testing

The older version of WebXPRT is the 2015 edition, which focuses on a slightly different set of web technologies and frameworks that are in use today. This is still a relevant test, especially for users interacting with not-the-latest web applications in the market, of which there are a lot. Web framework development is often very quick but with high turnover: frameworks are quickly developed, built upon, and used, and then developers move on to the next. Adjusting an application to a new framework is a difficult, arduous task, especially with rapid development cycles. This leaves a lot of applications as ‘fixed-in-time’, and relevant to user experience for many years.

Similar to WebXPRT 3, the main benchmark is a sectional run repeated seven times, with a final score. We repeat the whole thing four times, and average those final scores.

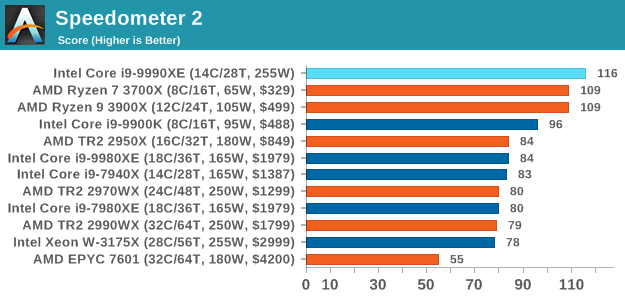

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is an accrued test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark’s internal metrics. We report this final score.

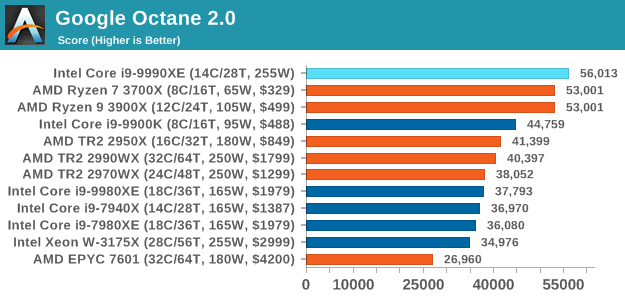

Google Octane 2.0: Core Web Compute

A popular web test for several years, but now no longer being updated, is Octane, developed by Google. Version 2.0 of the test performs the best part of two-dozen compute related tasks, such as regular expressions, cryptography, ray tracing, emulation, and Navier-Stokes physics calculations.

The test gives each sub-test a score and produces a geometric mean of the set as a final result. We run the full benchmark four times, and average the final results.
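
The scoring described above can be sketched as follows: a geometric mean over the sub-test scores of one run, then an arithmetic mean over the final results of several runs. This is a minimal sketch under those stated assumptions, not Octane's own code; the function names and all score values are hypothetical.

```python
import math
from statistics import fmean


def geometric_mean(scores):
    """Geometric mean of positive sub-test scores, computed via logarithms."""
    if not scores:
        raise ValueError("need at least one sub-test score")
    if any(s <= 0 for s in scores):
        raise ValueError("scores must be positive")
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


def reported_result(runs):
    """Average the per-run geometric means across all full benchmark runs."""
    return fmean(geometric_mean(sub_scores) for sub_scores in runs)


# Hypothetical sub-test scores for two runs (placeholder values only).
example = [[10.0, 1000.0], [100.0, 100.0]]
result = reported_result(example)
```

A geometric mean keeps one unusually high or low sub-test from dominating the final figure, which is a common reason suites with mixed workloads use it.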

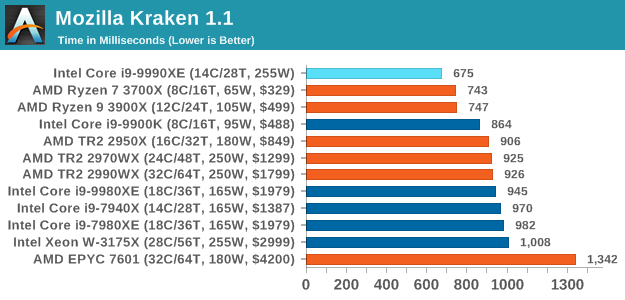

Mozilla Kraken 1.1: Core Web Compute

Even older than Octane is Kraken, this time developed by Mozilla. This is an older test that does similar computational mechanics, such as audio processing or image filtering. Kraken seems to produce a highly variable result depending on the browser version, as it is a test that is keenly optimized for.

The main benchmark runs through each of the sub-tests ten times and produces an average time to completion for each loop, given in milliseconds. We run the full benchmark four times and take an average of the time taken.

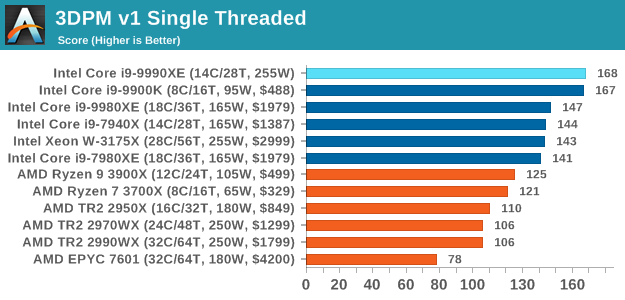

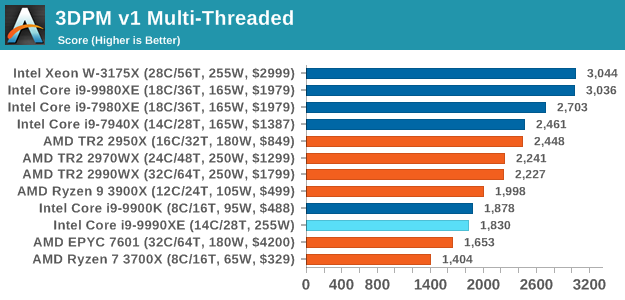

3DPM v1: Naïve Code Variant of 3DPM v2.1

The first legacy test in the suite is the first version of our 3DPM benchmark. This is the ultimate naïve version of the code, as if it was written by a scientist with no knowledge of how computer hardware, compilers, or optimization works (which in fact, it was at the start). This represents a large body of scientific simulation out in the wild, where getting the answer is more important than it being fast (getting a result in 4 days is acceptable if it’s correct, rather than sending someone away for a year to learn to code and getting the result in 5 minutes).

In this version, the only real optimization was in the compiler flags (-O2, -fp:fast), compiling it in release mode, and enabling OpenMP in the main compute loops. The loops were not configured for function size, and one of the key slowdowns is false sharing in the cache. It also has long dependency chains based on the random number generation, which leads to relatively poor performance on specific compute microarchitectures.

3DPM v1 can be downloaded with our 3DPM v2 code here: 3DPMv2.1.rar (13.0 MB)

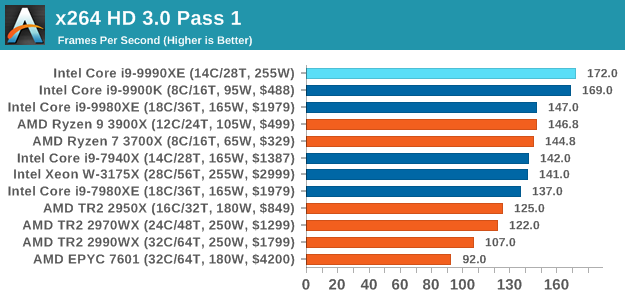

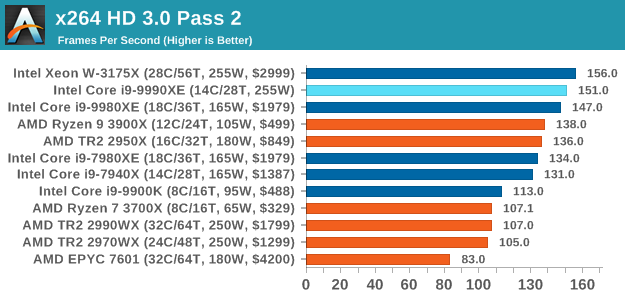

x264 HD 3.0: Older Transcode Test

This transcoding test is super old, and was used by Anand back in the day of Pentium 4 and Athlon II processors. Here a standardized 720p video is transcoded with a two-pass conversion, with the benchmark showing the frames-per-second of each pass. This benchmark is single-threaded, and between some micro-architectures we seem to actually hit an instructions-per-clock wall.

145 Comments

Sivar - Monday, October 28, 2019 - link

Why such an angry statement? 14 is a very respectable number of cores. 14 at 5GHz is a world exclusive.

I wouldn't even call this a product -- more of a hand-picked specialty part auction, which is perfectly reasonable (if uncommon) for any manufacturer to do. The fact that the parts sold indicates the demand is there. Why ignore the demand?

Spunjji - Wednesday, October 30, 2019 - link

The fact that they sold very few of them indicates that the demand is barely there.

FunBunny2 - Monday, October 28, 2019 - link

"Stories of companies spending 10s of millions to implement line-of-sight microwave transmitter towers to shave off 3 milliseconds from the latency time is a story I once heard. "

There was reporting, mainstream source (Lewis: https://www.telegraph.co.uk/finance/newsbysector/b... that a broker(s) installed a fiber line from the Chicago office to an exchange in NJ.

“It needed its burrow to be straight, maybe the most insistently straight path ever dug into the earth. It needed to connect a data centre on the South Side of Chicago to a stock exchange in northern New Jersey. Above all, apparently, it had to be secret," Mr Lewis said.

bji - Monday, October 28, 2019 - link

I call BS on that story. Why would you spend hundreds of millions (it must have cost at least that right?) to dig a straight 800+ mile tunnel between Chicago and NYC to get a 13 ms latency just so you could be destroyed by offices in NYC with 5 ms latency. Makes no sense. Your only choice is to move physically close to the source, if lowest latency is the winner then that's the only way to get it and be competitive.

Authors happily embellish existing stories, misrepresent details, and just plain old make sh** up to sell books. And then news outlets happily garbage-in, garbage-out these stories to get hits. I'm pretty sure that's what happened with that "story".

eek2121 - Monday, October 28, 2019 - link

Companies have done it. Hell years ago I INTERVIEWED with a company that did it. It would blow your mind to find out what the financial folks will do to accelerate trading. A large portion of stock market trades are automated and driven by machine learning or predictive algorithms. How do I know, that position I interviewed for years ago (2003) was for a software developer for such an algorithm. I didn't get the job, because I didn't have the skills they were looking for at the time, but we did have a very interesting conversation about how their platform worked. It's fascinating how finance pushes everything forward.

FunBunny2 - Monday, October 28, 2019 - link

" It would blow your mind to find out what the financial folks will do to accelerate trading."

yes, yes it would - here: https://www.marketplace.org/2019/10/07/fight-nyse-...

bji - Monday, October 28, 2019 - link

Yes, I believe that those companies probably often spend lots of money to buy competitive advantages. I am simply stating that they'd not be buying a competitive advantage here (since the real competition is based in NYC and has an insurmountable advantage - the laws of physics not letting signals travel between Chicago and Wall St. faster than 13 ms) so they wouldn't spend the money. They would spend money buying an actual competitive advantage, i.e. offices in NYC.

mode_13h - Tuesday, October 29, 2019 - link

> Why would you spend hundreds of millions (it must have cost at least that right?) to dig a straight 800+ mile tunnel between Chicago and NYC to get a 13 ms latency just so you could be destroyed by offices in NYC with 5 ms latency. Makes no sense. Your only choice is to move physically close to the source, if lowest latency is the winner then that's the only way to get it and be competitive.

When something doesn't seem to make sense, maybe the error is in your understanding of the situation. Did you ever consider that there are financial markets outside of NYC, and that some people might be trading between markets, or using signals from one market to inform trades in others?

Joel Busch - Tuesday, October 29, 2019 - link

This one is easy to answer, because there are two stock exchanges in play. NYSE in New York and CHX in Chicago. If you can send information from one exchange to the other quicker than others then you have an opportunity for arbitrage.

One of my professors is Ankit Singla, he works on c-speed networking, he cited this paper in class https://doi.org/10.1111/fire.12036

They say for example:

"Our analysis of the market data confirms that as of April 2010, the fastest communication route connecting the Chicago futures markets to the New Jersey equity markets was through fiber optic lines that allowed equity prices to respond within 7.25–7.95 ms of a price change in Chicago (Adler, 2012). In August of 2010, Spread Networks introduced a new fiber optic line that was shorter than the pre-existing routes and used lower latency equipment. This technology reduced Chicago–New Jersey latency to approximately 6.65 ms (Steiner, 2010; Adler, 2012)."

I don't have the time to read the whole paper right now, I'll just trust my professor here. If there is actually something wrong with their methodology then I think the world would like to hear it.

rahvin - Monday, October 28, 2019 - link

<<“It needed its burrow to be straight, maybe the most insistently straight path ever dug into the earth. It needed to connect a data centre on the South Side of Chicago to a stock exchange in northern New Jersey. Above all, apparently, it had to be secret," Mr Lewis said>>

That's just a bunch of hogwash. You couldn't dig a straight line from Chicago to Jersey. It's just fancy sounding hogwash meant to convince those without the logic or background to see it for the hogwash it is. It's no more true than grimm's fairy tales.