The Apple iPhone 11, 11 Pro & 11 Pro Max Review: Performance, Battery, & Camera Elevated

by Andrei Frumusanu on October 16, 2019 8:30 AM EST

The A13's Memory Subsystem: Faster L2, More SLC BW

The memory subsystem of a chip is an essential backbone for the performance of not only the CPU cores, but also the whole rest of the system. In this case we’re taking a closer look at how the memory subsystem behaves on the CPU side of things.

Last year we saw Apple make significant changes to the SoC’s memory subsystem with the inclusion of a new architecture system level cache (SLC), which serves as the last level cache for not only the CPU, but also a lot of other SoC components like the GPU.

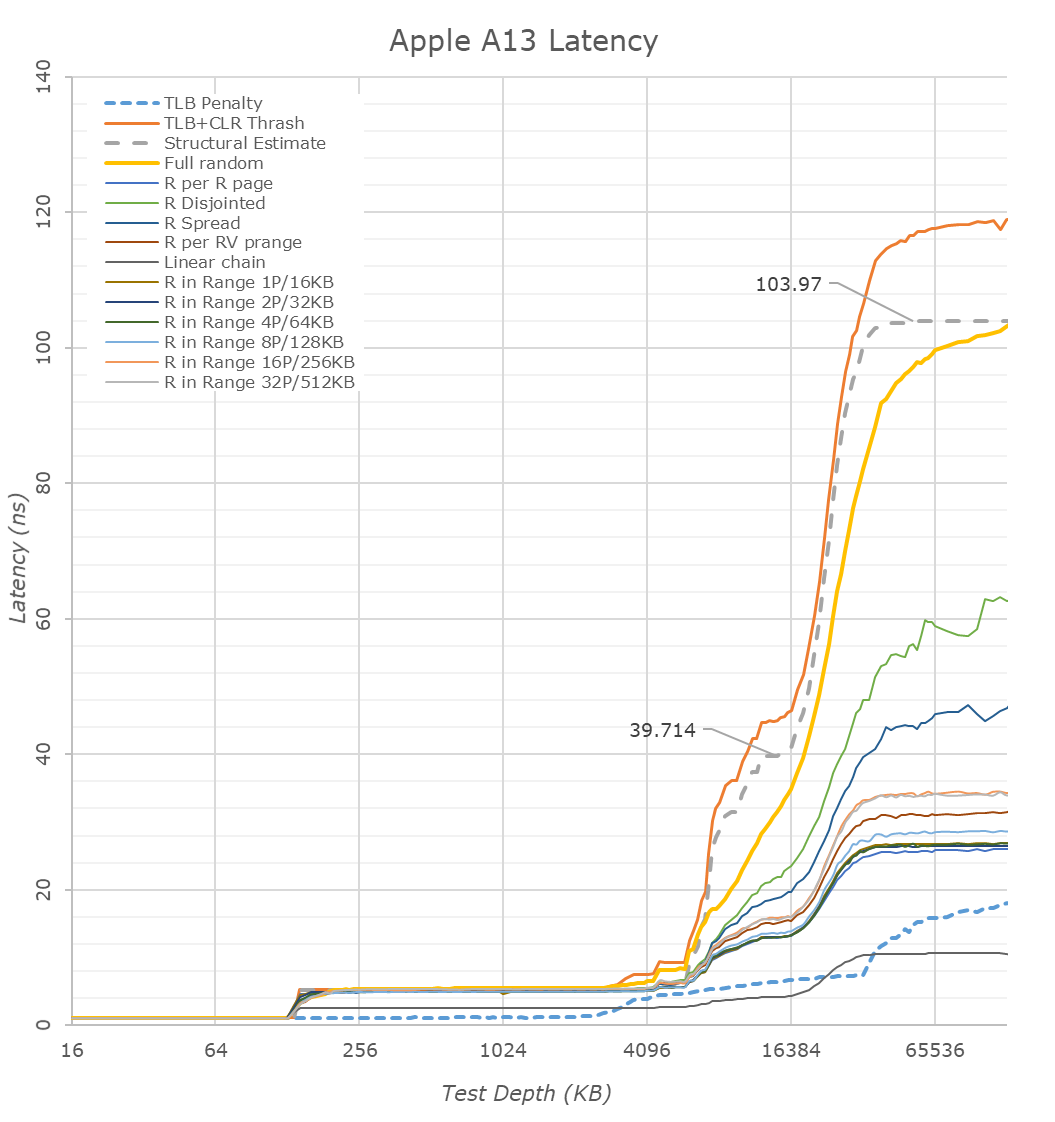

Looking first at the linear graphed memory latencies, we see that the A13’s structural DRAM latency falls in at ~104ns, a very slight regression from the 102.8ns of the A12. Apple in this regard isn’t the best among mobile SoCs: HiSilicon’s newest Kirin 990 now falls in at ~96ns and the Exynos 9820 should also fall into a similar range. However, this doesn’t matter too much in the grand scheme of things given Apple’s massive cache hierarchy. Patterns such as full random pointer chasing are significantly more performant on Apple’s cores, and this should be tightly linked to the strong TLBs as well as iOS’s system configuration choice of using 16KB pages.
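To make "full random pointer chasing" concrete: such a test walks a chain in which every element stores the position of the next one, so each load depends on the result of the previous load. The Python sketch below builds such a chain with Sattolo's algorithm, which guarantees a single cycle covering every element. The function names are illustrative and this is not the tool used for the graphs in this article; given Python's interpreter overhead it demonstrates the access pattern only and is not suitable for measuring real latencies.

```python
import random

def build_chase_chain(n, seed=0):
    """Return a list `nxt` where nxt[i] is the element visited after i.

    Sattolo's algorithm yields a permutation with exactly one cycle,
    so a walk from any start touches all n elements before repeating.
    """
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j is always < i, never i itself
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt

def chase(nxt, start=0):
    """Follow the chain once around and count the dependent steps."""
    visited = 0
    idx = start
    while True:
        idx = nxt[idx]  # the next index is only known after this load
        visited += 1
        if idx == start:
            return visited
```

In a real latency test the chain would be laid out over a buffer of the size under test and the average time per step reported for each buffer size.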

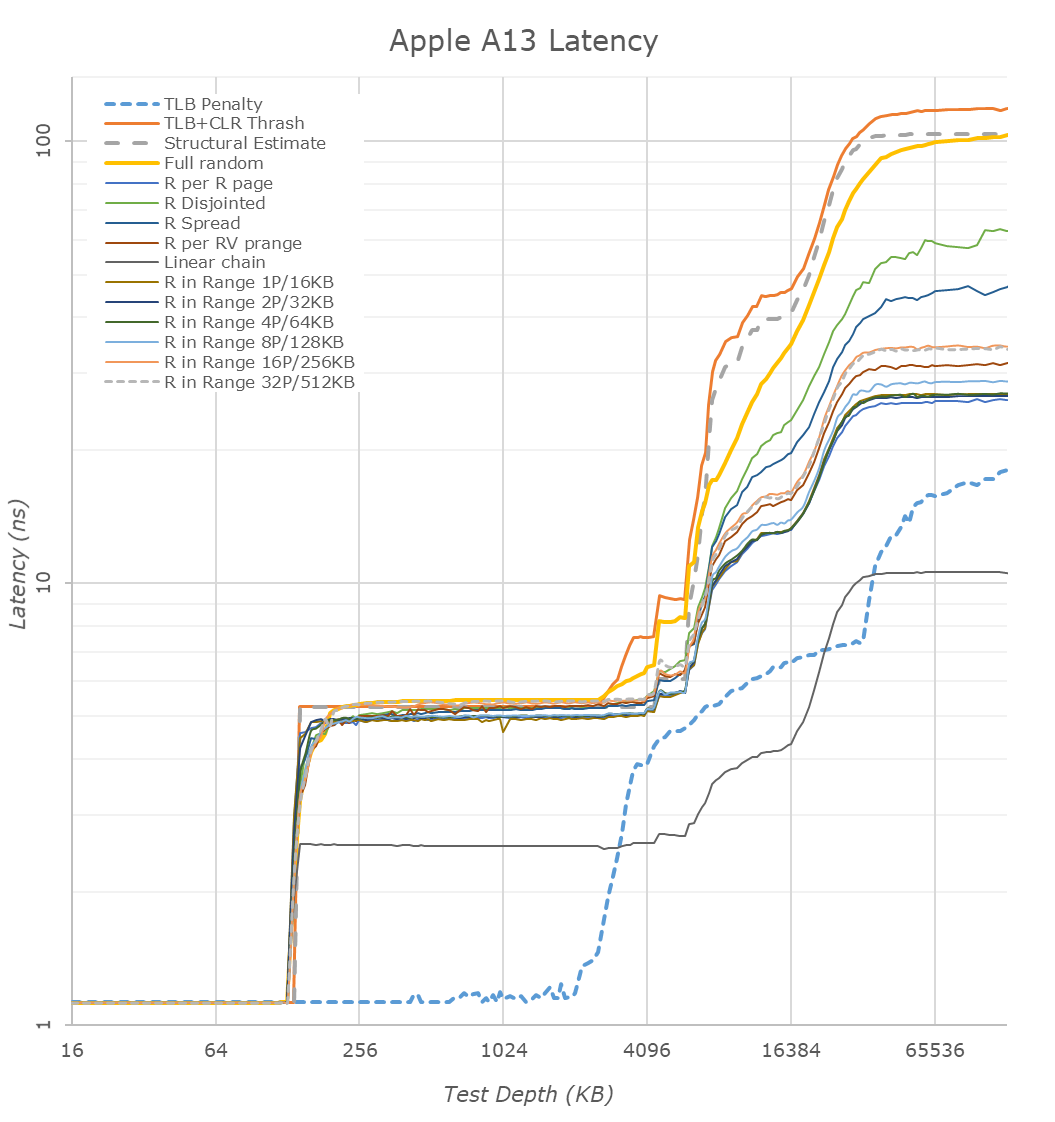

Moving to a logarithmic chart we better see the transitions between the cache hierarchies. We can clearly see Apple’s 128KB L1D cache here. The L2 cache is also relatively straightforward till 4MB as the latencies are relatively flat. From here on things become quite complicated and differ a bit compared to the A12. Last year we determined that the L2 cache structure physically must be around 8MB in size, however it looked as if the big cores only have access to around 6MB. Apple employs an “L2E” cache – this is seemingly a region of the big core L2 cache that serves as an L3 to the smaller efficiency cores (which themselves have their own shared L2 underneath in their CPU group).

In this region the new A13 behaves slightly differently, as there’s an additional “step” in the latency ladder till about 6MB. Frankly I don’t have any proper explanation as to what the microarchitecture is doing here till the 8MB mark. It does look, however, as if the physical structure has remained at 8MB.

Going further out into the cache hierarchy we’re hitting the SLC, which would act as an L3 to the large performance cores, but should be shared with other IP blocks in the SoC. Here we see a significant change in behavior compared to the A12. If one had to guess as to what is happening, you’d think that the SLC has grown in size beyond the 8MB we estimated to have been used in the A12. Short of analyzing the die shot and seeing if the structure indeed has doubled, I’m a bit skeptical, and I feel it’s more likely that Apple is using a partitioning system and has possibly enabled the CPU complex to access more of the SLC. What is evident here is the doubling of the SLC depth visible to the CPU, from 8MB to 16MB.

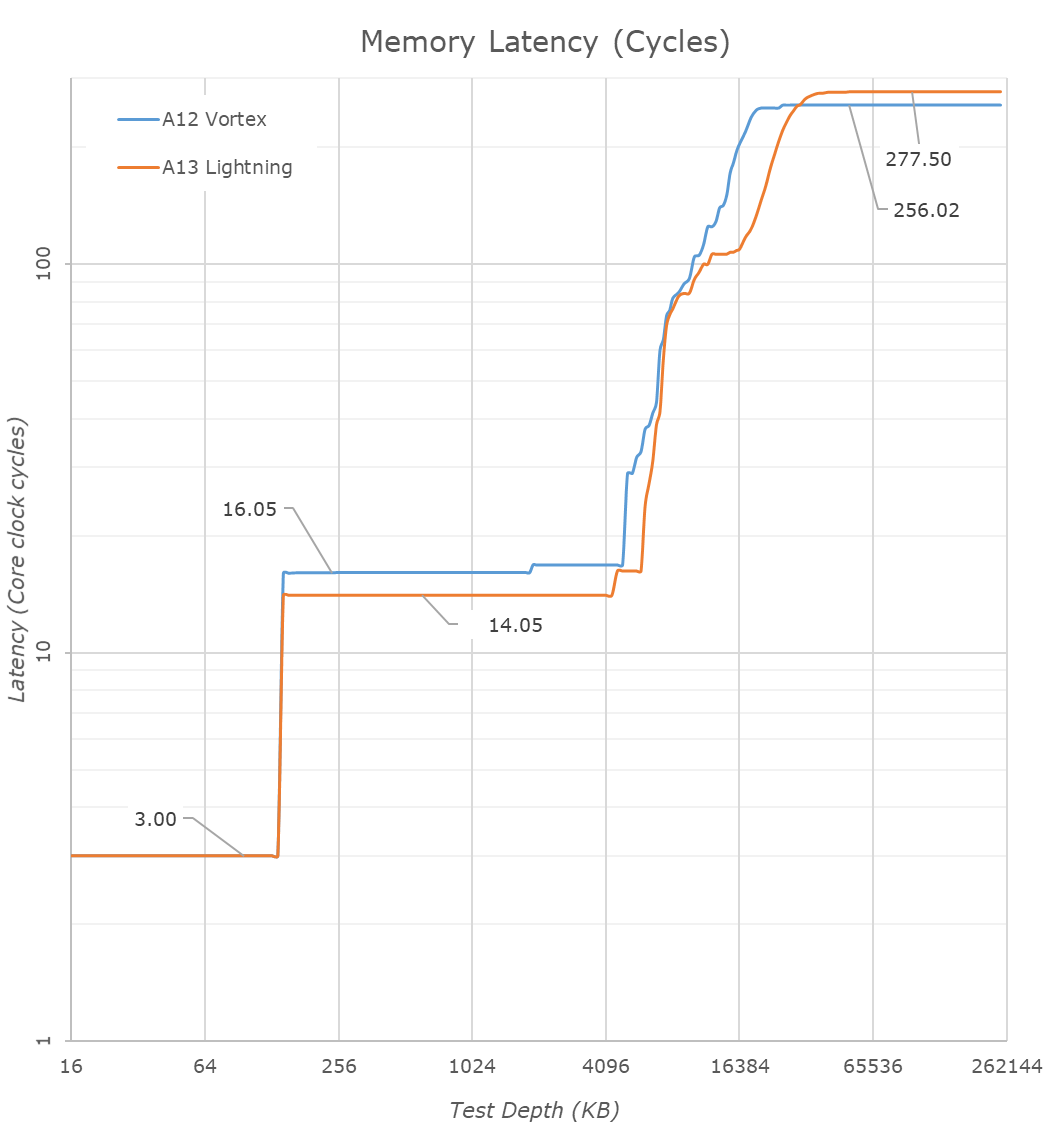

We mentioned that the Lightning cores’ L2 is faster now: converting the measured latencies from nanoseconds to core cycles, we see the structural speed changes to the caches. The L1 remains at 3 cycles, which is massively impressive given its 128KB size. The L2 cache latency has been reduced from 16 cycles down to 14 cycles, which is again extremely impressive given its purported 8MB physical size. Accounting for the core’s frequency increase, we do more noticeably see that the structural memory latency has increased a bit on the A13, adding about 21-22 cycles. It’s possible that the microarchitectural changes that made the SLC so much faster this generation had a knock-on effect in adding more total latency to DRAM.
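The conversion used here is simply cycles = latency in ns × clock in GHz, since a core at f GHz completes f cycles per nanosecond. A minimal Python sketch follows; the helper names are my own, and the 2.0GHz and 2.5GHz clocks in the usage lines are placeholder values chosen for easy arithmetic, not the measured clocks of either chip.

```python
def ns_to_cycles(latency_ns, freq_ghz):
    """A core running at freq_ghz completes freq_ghz cycles per nanosecond."""
    return latency_ns * freq_ghz

def cycles_to_ns(cycles, freq_ghz):
    """Inverse conversion: how long a given cycle count takes in ns."""
    return cycles / freq_ghz

# Placeholder clocks (assumptions for illustration only).
print(cycles_to_ns(14, 2.0))     # a 14-cycle access at 2.0GHz takes 7.0 ns
print(ns_to_cycles(100.0, 2.0))  # 100 ns of DRAM latency costs 200.0 cycles
print(ns_to_cycles(100.0, 2.5))  # the same 100 ns costs 250.0 cycles at 2.5GHz
```

The last two lines show why a DRAM latency that is nearly unchanged in nanoseconds still costs more core cycles once the clock goes up.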

Looking at the new Thunder cores versus last year’s Tempest microarchitecture, we see the new cache hierarchy differences. The L1D has grown from 32KB to 48KB – straightforward until now.

The L2 cache size also has evidently increased. Last year we estimated that the small core cluster had 2MB of shared L2, but that it was partitioned in such a way that a given core only has access to about 1.5MB. This depth depended on the power management policy and DVFS state of the cores, with a core appearing to only have access to 512KB when at the lowest performance states.

This year, this 1.5MB access size has seemingly increased to 2.5MB. I thus estimate the shared L2 of the small cores has increased from a physical 2MB to 3MB. Past this we’re seeing a step-wise behavior in latency up to 4MB – it’s possible this would be part of the L2E cache of the CPU complex, so in other words we’d possibly be accessing a special partition of the big core’s L2.

Update October 27th: The die shot reveals that the L2 of the Thunder cluster is half the size of the Lightning cluster L2, thus we estimate it's 4MB large in total.

In this graph we continue to see the behavior change of the A13’s SLC. At first glance it appears bigger, which still can be the case, but I rather think the CPU complex has much better access to the 4 (or more) cache slices of the SLC in this generation.

Another change of the new Thunder cores here is that we’re obviously seeing an increase in the L2 TLB capacity of the core. While the L1 TLB seems to have remained unchanged at 128 pages / 2MB, the L2 TLB has increased from 512 pages to 1024 pages – covering up to 16MB, a quite ideal size as it’s covering the depth of the SLC.
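The coverage figures above follow from TLB reach = number of entries × page size. A small Python sketch of that arithmetic, using the 16KB iOS page size mentioned earlier; the 4KB line is included only as a comparison with a common smaller page size and is not a figure from this test, and the helper name is illustrative.

```python
KB = 1024
MB = 1024 * KB

def tlb_reach_bytes(entries, page_size_bytes):
    """Memory covered by a TLB when every entry maps one page."""
    return entries * page_size_bytes

PAGE_16K = 16 * KB  # iOS page size
PAGE_4K = 4 * KB    # comparison only (assumption, not from the measurements)

for entries in (128, 512, 1024):
    reach_mb = tlb_reach_bytes(entries, PAGE_16K) // MB
    print(f"{entries} entries x 16KB pages = {reach_mb}MB")

# Same 1024 entries with 4KB pages would cover only a quarter as much.
print(tlb_reach_bytes(1024, PAGE_4K) // MB, "MB with 4KB pages")
```

With 16KB pages the three entry counts cover 2MB, 8MB and 16MB respectively, which is why the larger L2 TLB lines up with the 16MB depth of the SLC.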

Finally, we see that the efficiency cores in the A13 this time around don’t have access to faster DRAM on their own – the memory controller remains very slow and DRAM latencies are in excess of 340ns while on the A12 the Tempest cores were able to enjoy latencies of 140-150ns. This explains some of the performance regressions of the new Thunder cores we measured earlier.

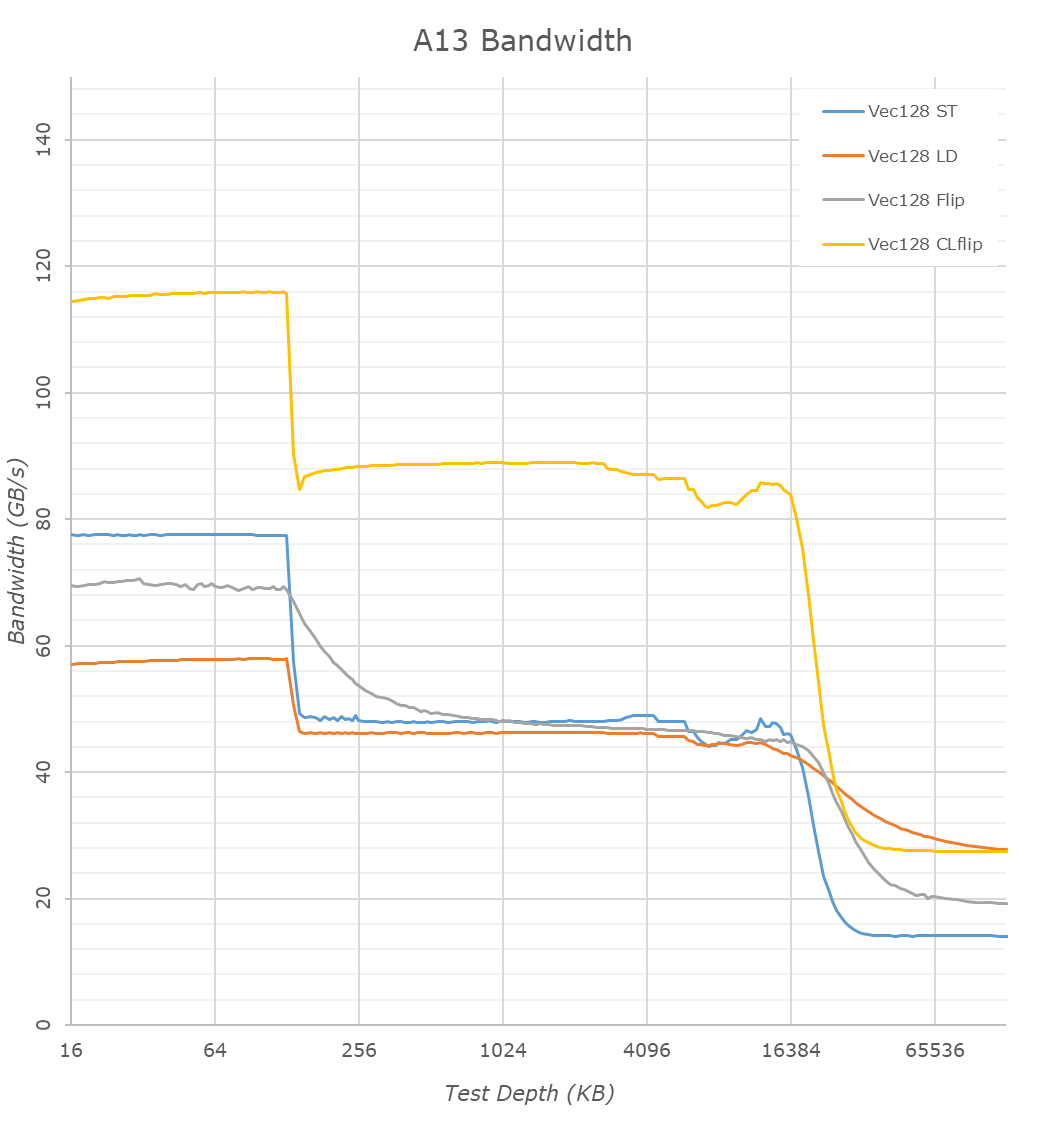

Bandwidth between the A13 and A12 doesn’t majorly differ in the L1 and DRAM regions beyond minor clock speed changes. In the L2, we notice there’s been a more noticeable increase in performance for read+writes into the same cache line, increasing performance by 25%.

It’s again in the SLC region where we see major changes – while on the A12 the bandwidth here slowly fell off in depth, the A13 is able to sustain the same bandwidth over the 16MB of system cache. It’s impressive that the bandwidth here is essentially equal to the L2 – albeit of course with quite notably worse latency, as we noted earlier. The smaller dips at the 8MB region are an artifact of the cache behavior between the big L2 and the SLC.

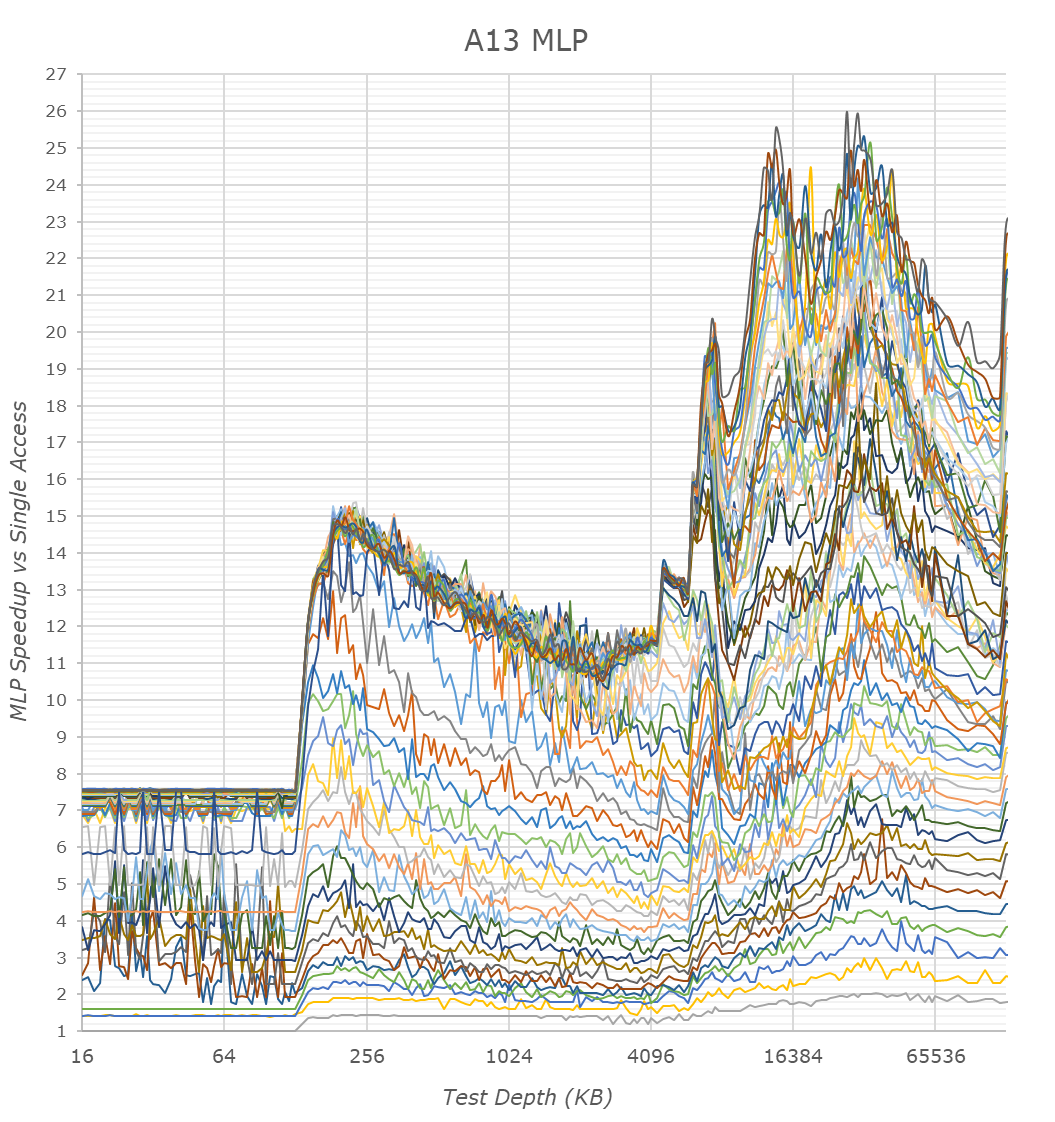

Finally, the MLP graphs showcase the memory level parallelism capacity of the CPU cores and the memory subsystem. MLP is the ability of the CPU to “park” memory requests that miss the caches and to continue executing other requests out of order. High MLP ability is extremely important for extracting the most from out-of-order execution of code that has higher memory pressure and more complex memory access patterns.
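As a rough illustration of what an MLP speedup figure means, consider a toy model in which a core can keep a fixed number of cache misses in flight and overlaps them perfectly. The Python sketch below is only that idealized model: `max_outstanding` is a hypothetical parameter, not a known property of any core discussed here, and real hardware need not follow this curve.

```python
import math

def ideal_mlp_speedup(n_chains, max_outstanding):
    """Speedup of n independent miss chains over running them back to back.

    Toy model: each miss costs one latency unit, and up to
    `max_outstanding` misses overlap completely.
    """
    serial_time = n_chains  # one latency unit per miss, no overlap
    overlapped_time = math.ceil(n_chains / max_outstanding)
    return serial_time / overlapped_time

for n in (1, 4, 8, 16):
    print(n, ideal_mlp_speedup(n, max_outstanding=8))
```

In this model the speedup grows linearly with the number of independent access chains and then stops improving once the assumed limit of in-flight misses is reached.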

The A13 here again remains quite unique in its behavior, which is vastly more complex than what we see in any other microarchitecture. The non-linearity of the MLP speedup versus access count is something I can’t find a viable explanation for. We do see that the new A13 is a little bit better and more “even” than the A12, although what this practically means is something only Apple’s architects know. In general, Apple’s MLP ability is second only to AMD’s Zen processors, and clearly trounces anything else in the mobile space.

The overall conclusion for the A13’s memory subsystem is that Apple has evidently made very large changes to the system level cache, which is now significantly faster than what we’ve seen in the A12. The L2 cache of the big cores benefits from a 2-cycle latency reduction, but otherwise remains the same. Finally, the new Thunder efficiency cores have seen large changes, with increased L1D, L2 and TLB capacities.

242 Comments

Henk Poley - Saturday, October 19, 2019

Does the A13 have more security features, such as the pointer encryption that was added with the A12 (essentially binding pointers to their origin, e.g. processes)? It was kinda interesting that the recently uncovered mass exploitation of iPhones didn't touch any of the A12 iDevices (and neither do jailbreaks).

techsorz - Sunday, October 20, 2019

I'm sorry Anandtech, but your GPU review is absolutely horrendous. You are using 3Dmark on iOS, which hasn't received an update since iOS 10, and then compare it to the Android version which was updated June 2019. There is a reason you are getting conflicting results when you switch over to GFXbench, which was updated on iOS in 2018. How this didn't make you wonder is amazing.

Andrei Frumusanu - Sunday, October 20, 2019

The 3D workloads do not get updated between the update versions, so your whole logic is moot.

techsorz - Sunday, October 20, 2019

Are you kidding me? The load won't change, but the score sure will. It makes it look like the iPhone throttles much more than it does in reality. That the score is 50% less due to unoptimized garbage does not mean that the chipset actually throttled by 50%.

I can't believe that I have to explain this to you: 3Dmark supports an operating system that is 3 years old, and for all we know it is running in compatibility mode and is emulated.

Andrei Frumusanu - Sunday, October 20, 2019

Explain to me how the score will change if the workload doesn't change? That makes absolutely zero sense.

You're just spouting gibberish with stuff such as compatibility mode or emulation, as those things don't even exist - the workload is running on Metal and the iOS version is irrelevant in that regard.

techsorz - Monday, October 21, 2019

In computing you have what is called a low-level 3D API. This is what Metal and DirectX are. This is what controls how efficiently you use the hardware you have available. If you have a new version of this API in say, IOS 13, and you run an iOS 10 application, you will run into compatibility issues. These issues can degrade performance without it being proportional to the actual throttling taking place. On android however, it is compatible with the latest low-level API's as well as various performance modes.

The hilarious thing is that Anandtech even contradicts itself, using an "only" 1 year outdated benchmark, where the iPhone suddenly throttles less at full load. This entire article is just a box full of fail. If you want to educate yourself, I suggest you watch Speedtest G on Youtube. Or Gary Explains. He has a video on both 'REAL' iOS and Android throttling, done using the latest version of their respective API.

Andrei Frumusanu - Monday, October 21, 2019

> If you have a new version of this API in say, IOS 13, and you run an iOS 10 application, you will run into compatibility issues. These issues can degrade performance without it being proportional to the actual throttling taking place. On android however, it is compatible with the latest low-level API's as well as various performance modes.

Complete and utter nonsense. You literally have no idea what you're talking about.

techsorz - Monday, October 21, 2019

How about you provide a proper response instead of saying it's nonsense? How can the throttling be different at full load on 2 different benchmarks otherwise? There is clearly no connection between actual throttling and the score itself. You are literally contradicting yourself in your own review.

Andrei Frumusanu - Monday, October 21, 2019

A proper response to what exactly? Until now all you managed to do is complain that the test is somehow broken and wrong and that I need to educate myself.

The whole thing has absolutely nothing to do with software versions or OS version or whatever other thing. The peak and sustained scores are performed with the same workloads and nothing other than the phone's temperature has changed - the % throttling is a physical attribute of the phone; the phone doesn't decide to suddenly throttle more on one benchmark than on another simply because it was released a few years ago.

The throttling is different on the different tests *because they are different workloads*. 3DMark and Aztec High will put very high stress on the ALUs of the GPU, more than the other tests, and create more heat and higher hotspot temperatures on the GPU, resulting in more throttling and reduced frequencies in those tests. T-Rex, for example, will be less taxing on the GPU in terms of its computation blocks and have more load spread out to the CPU and DRAM, also spreading out temperature, and that's why it throttles the least.

techsorz - Monday, October 21, 2019

Thank you for your informative reply. Then, is it crazy to assume that a 3-year-old 3Dmark benchmark is not providing the same workload as the 2019 version on Android? Maybe you could run an outdated buggy benchmark on a rog 2 as well and it would stress the ALU even more? Possibly, the rog 2 is getting a much more sensible workload while the iPhone is getting unrealistic loads that don't utilize the architecture at all. In which case, it is pretty unfair and misleading. It's like taking a car and only testing 1 wheel while the other cars get to use all 4.