Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics

by Dr. Ian Cutress on September 17, 2019 10:00 AM EST

AMD’s Turbo

With AMD introducing Turbo after Intel, as has often been the case in their history, they've had to live in Intel's world. And this has repercussions for the company.

By the time AMD introduced their first Turbo-enabled processors, everyone in the desktop space ‘knew’ what Turbo meant, because we had gotten used to how Intel did things. For everyone, saying ‘Turbo’ meant only one thing: Intel’s definition of Turbo, which we subconsciously took as the default, and that’s all that mattered. Every time an Intel processor family is released, we ask for the Turbo tables, and life is good and easy.

Enter AMD, and Zen. Despite AMD making it clear that Turbo doesn’t work the same way, the message wasn’t pushed home. AMD had a lot of things to talk about with the new Zen core, and Turbo, while important, wasn’t as important as the core performance messaging. Certain parts of how the performance increased were understood, but the finer points were missed, with users (and press) assuming an Intel-like arrangement, especially given that the Zen core layout kind of looks like an Intel core layout if you squint.

What needed to be pushed home was the sense of a finer grained control, and how the Ryzen chips respond and use this control.

When users look at an AMD processor, the company promotes three numbers: a base frequency, a turbo frequency, and the thermal design power (TDP). Sometimes an all-core turbo is provided. These processors do not have any form of turbo tables, and AMD states that the design is not engineered to decrease in frequency (and thus performance) when it detects instructions that could cause hot spots.

It should be made clear at this point that Zen (Ryzen 1000, Ryzen 2000) and Zen2 (Ryzen 3000) act very differently when it comes to turbo.

Turbo in Zen

At a base level, AMD’s Zen turbo was just a step function implementation, with two cores getting the higher turbo speed. However, most cores shipped with features that allowed the CPU to get higher-than-turbo frequencies depending on its power delivery and current delivery limitations.

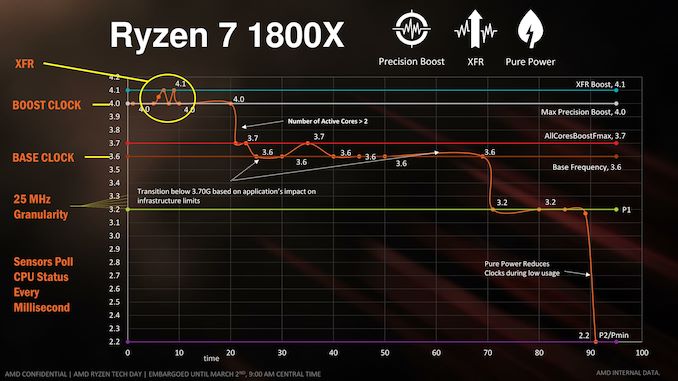

You may remember this graph from the Ryzen 7 1800X launch:

For Zen processors, AMD enabled a 0.25x multiplier increment, which allows the CPU to jump up in 25 MHz steps, rather than 100 MHz. This bit was easy to understand: it meant more flexibility in what the frequency could be at any given time. AMD also announced XFR, or ‘eXtended Frequency Range’, which meant that with sufficient cooling and power headroom, the CPU could perform better than the rated turbo frequency on the box. Users that had access to a better cooling solution, or had lower ambient temperatures, would expect to see better frequencies, and better performance.
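As a rough illustration of what the finer multiplier granularity buys, the sketch below snaps a target frequency down to the nearest reachable step. The 100 MHz reference clock is an assumption (it is implied by 0.25x giving 25 MHz steps), and the function names and the 3975 MHz target are hypothetical, chosen only to show the difference.

```python
def step_size_mhz(multiplier_increment: float, reference_clock_mhz: int = 100) -> int:
    """Frequency step produced by one multiplier increment.

    The 100 MHz reference clock is an assumption for this sketch.
    """
    return round(multiplier_increment * reference_clock_mhz)


def snap_down(target_mhz: int, step_mhz: int) -> int:
    """Highest frequency at or below target_mhz that lands on a step boundary."""
    return (target_mhz // step_mhz) * step_mhz


fine_step = step_size_mhz(0.25)   # 25 MHz steps, as on Zen
coarse_step = step_size_mhz(1.0)  # 100 MHz steps

# Hypothetical target the silicon could sustain under current conditions.
target = 3975

print(snap_down(target, fine_step))    # 3975
print(snap_down(target, coarse_step))  # 3900
```

With the coarser step, the chip in this made-up case would have to settle 75 MHz below what it could otherwise sustain.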

So the Ryzen 7 1800X was a CPU with a 3.6 GHz base frequency and a 4.0 GHz turbo frequency, which it achieves when 2 or fewer cores are active. If possible, the CPU will use the (now deprecated in later models) eXtended Frequency Range feature to go beyond 4.0 GHz if the conditions are correct (thermals, power, current). When more than two cores are active, the CPU drops down to its all-core boost, 3.7 GHz, and may transition down to 3.6 GHz depending on the conditions (thermals, power, current).
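The step-function behavior described for the Ryzen 7 1800X can be sketched in a few lines. This is a simplified model using only the frequencies quoted above; the class and parameter names are hypothetical, XFR is deliberately left out, and the single `headroom_ok` flag stands in for the thermal, power, and current conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepTurbo:
    """Simplified two-level turbo model (not AMD's actual firmware logic)."""
    base_mhz: int
    all_core_mhz: int
    turbo_mhz: int
    turbo_core_limit: int  # max active cores that still get the full turbo

    def target_mhz(self, active_cores: int, headroom_ok: bool = True) -> int:
        # Lightly threaded: the full turbo frequency applies.
        if active_cores <= self.turbo_core_limit:
            return self.turbo_mhz
        # More cores active: all-core boost, falling back to base when
        # thermals, power, or current leave no headroom.
        return self.all_core_mhz if headroom_ok else self.base_mhz


# Figures taken from the text above.
RYZEN_7_1800X = StepTurbo(base_mhz=3600, all_core_mhz=3700,
                          turbo_mhz=4000, turbo_core_limit=2)

for cores in (1, 2, 3, 8):
    print(cores, RYZEN_7_1800X.target_mhz(cores))
```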

Turbo in Zen+, then Zen2

AMD dropped XFR from its marketing materials, tying it all under Precision Boost. Ultimately the boost function of the processor relied on three new metrics, alongside the regular thermal and total power consumption guidelines:

PPT: Socket Power Capacity

TDC: Sustained VRM Capacity

EDC: Peak/Transient VRM Capacity

In order to get the highest turbo frequencies, users would have to score big on all three metrics, as well as cooling, to stop one being a bottleneck. The end result promised by AMD was an aggressive voltage/frequency curve that would ride the limit of the hardware, right up to the TDP listed on the box.
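To make the bottleneck idea concrete, here is a minimal sketch that reports which limit is closest to being hit. Every limit and reading below is invented for illustration and does not come from any AMD specification; the dictionary keys and the function name are likewise hypothetical.

```python
def limiting_factor(readings: dict[str, float],
                    limits: dict[str, float]) -> tuple[str, float]:
    """Return the metric with the highest utilization and that utilization."""
    utilization = {name: readings[name] / limits[name] for name in limits}
    worst = max(utilization, key=utilization.get)
    return worst, utilization[worst]


# Hypothetical platform limits (not real AMD figures).
limits = {"PPT_W": 100.0, "TDC_A": 80.0, "EDC_A": 120.0, "TEMP_C": 95.0}

# Hypothetical telemetry sample under load.
readings = {"PPT_W": 80.0, "TDC_A": 40.0, "EDC_A": 114.0, "TEMP_C": 76.0}

name, used = limiting_factor(readings, limits)
has_headroom = used < 1.0

print(f"bottleneck: {name} at {used:.0%}, headroom left: {has_headroom}")
```

In this made-up sample the peak current limit is the one nearest its ceiling, so a board with a stronger VRM would be the thing to change, not the cooler.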

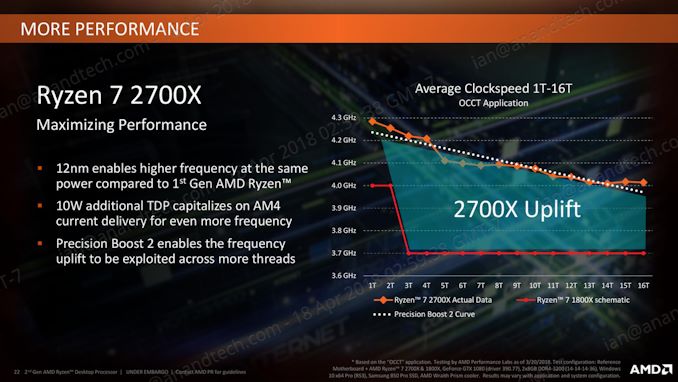

This means we saw a much tighter turbo boost algorithm compared to Zen. Both Zen+ and Zen2 then moved to this boost algorithm that was designed to offer a lot more frequency opportunities in mixed workloads. This was known as Precision Boost 2.

In this algorithm, we saw more than a simple step function beyond two threads, and depending on the specific chip performance as well as the environment the chip was in, the non-linear curve would react to the conditions and the workload to hit the total power consumption of the chip as listed. The benefit of this was more performance in mixed workloads, in exchange for a tighter power consumption and frequency algorithm.

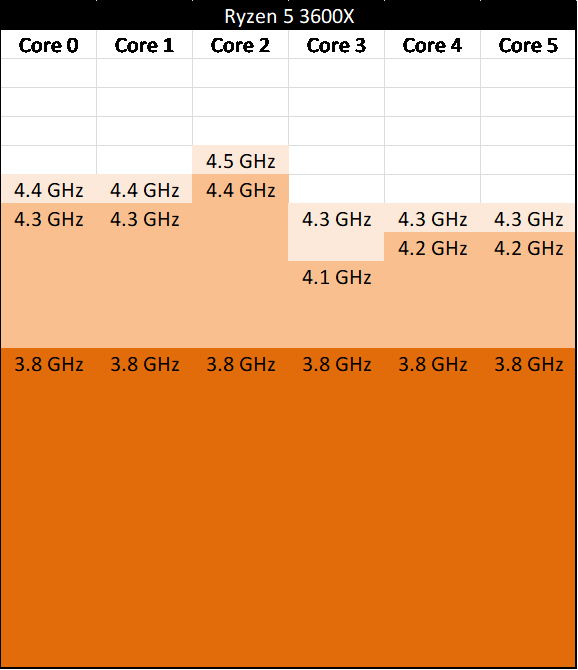

Move forward to Zen2, and one of the biggest differences is how the CPUs are binned. Since Zen, AMD’s own Ryzen Master software had been listing ‘best cores’ for each chip – for every Ryzen CPU, it would tell the user which cores had performed best based on internal testing, and were predicted to have the best voltage/frequency curve. AMD took this a step further, and with the new 7nm process, in order to get the best frequencies out of every chip, it would perform binning per core, and only one core was required to reach the rated turbo speed.

So for example, here is a six-core Ryzen 5 3600X, with a base frequency of 3.8 GHz and a turbo frequency of 4.4 GHz. By binning tightly to the silicon maximums (for a given voltage), AMD was able to extract more performance on specific cores. If AMD had followed Intel’s binning strategy relating to turbo here, we would see a chip that would only be 4.2 GHz or 4.1 GHz maximum turbo – by going close to the chip limits for the given voltage, AMD is arguably offering more turbo functionality and ultimately more immediate performance.

There is one thing to note here though, which was the point of Paul’s article. In order to achieve maximum performance in a given workload, AMD had to adjust the Windows CPPC scheduler in order to assign a workload to the best core. By identifying the best cores on a chip, it meant that when a single threaded workload needed the best speed, it could be assigned to the best core (in our theoretical chip above that would be Core 2).

Note that with an Intel binning strategy, as the binning does not go to the per-core limits but rather relies on per-chip limits, it doesn’t matter what core the work is assigned to: this is the benefit of a homogeneous turbo binning design, and ultimately makes the scheduler algorithm in the operating system very simple. With AMD’s solution, work that needs the highest frequency has to be scheduled on that single best core, and as such the software stack in place needs to know the operation of the CPU and how to assign work to that specific core.
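The scheduling difference can be sketched as follows. The per-core maximum frequencies are invented for illustration (only the 4.4 GHz rated turbo and the 4.2 GHz chip-wide figure come from the text), zero-based core numbering is an assumption, and `pick_core` is a hypothetical helper, not how Windows or CPPC is actually implemented.

```python
def pick_core(per_core_max_mhz: dict[int, int], busy: set[int]) -> int:
    """Choose the idle core with the highest achievable frequency."""
    idle = [core for core in per_core_max_mhz if core not in busy]
    if not idle:
        raise RuntimeError("no idle core available")
    return max(idle, key=per_core_max_mhz.get)


# Per-core binning: hypothetical six-core chip where only core 2
# reaches the rated 4400 MHz turbo.
per_core = {0: 4300, 1: 4350, 2: 4400, 3: 4250, 4: 4300, 5: 4275}

# Per-chip binning: every core is rated to the same, lower, frequency.
homogeneous = {core: 4200 for core in range(6)}

print(pick_core(per_core, busy=set()))     # 2: the best core gets the work
print(pick_core(per_core, busy={2}))       # 1: next best once core 2 is taken
print(pick_core(homogeneous, busy=set()))  # 0: any core would do equally well
```

With per-core binning the choice of core changes the frequency the thread sees, which is why the scheduler needs the ranking; with homogeneous binning the ranking carries no information.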

Does this make any difference to the casual user? No. For anyone just getting on with their daily activities, it makes absolutely zero difference. While the platform exposes the best cores, you need to be able to use tools to see it, and unless you uninstall the driver stack or micromanage where threads are allocated, you can’t really modify it. For casual users, and for gamers, it makes no difference to their workflow.

This binning strategy however does affect casual overclockers looking to get more frequency – based on AMD’s binning, there isn’t much headroom. All-core overclocks don’t really work in this scenario, because the chip is so close to the voltage/frequency curve already. This is why we’re not seeing great all-core overclocks on most Ryzen 3000 series CPUs. In order to get the best overall system overclocks this time around, users are going to have to play with each core one-by-one, which makes the whole process time consuming.

A small note about Precision Boost Overdrive (PBO) here. AMD introduced PBO in Zen and Zen+, and given the binning strategy on those chips, along with the mature 14/12nm process, users with the right thermal environment and right motherboards could extract another 100-200 MHz from the chip without doing much more than flicking a switch in the Ryzen Master software. Because of the new binning strategy – and despite what some of AMD's poorly executed marketing material has been saying – PBO hasn't been having the same effect, and users are seeing little-to-no benefit. This isn’t because PBO is failing, it’s because the CPU out of the box is already near its peak limits, and AMD’s metrics from manufacturing state that the CPU has a lifespan that AMD is happy with despite being near silicon limits. It ends up being a win-win, although people wanting more performance from overclocking aren’t going to get it – because they already have some of the best performance that piece of silicon has to offer.

The other point of assigning workloads to a specific core does revolve around lifespan. Silicon is prone to electromigration, where electrons will slowly adjust the position of the silicon atoms inside the chip over time. Adjusting atom positioning typically leads to higher resistance paths, requiring more voltage to drive the same frequency, which in turn leads to more electromigration. It’s a vicious cycle.

With electromigration, there are two solutions. One is to set the frequency and voltage of the processor low enough that over the expected age of the CPU it won’t ever become an issue, as it happens at such a slow rate – alternatively set the voltage high enough that it won’t become an issue over the lifetime. The second solution is to monitor the effect of electromigration as the core is used over months and years, then adjust the voltage upwards to compensate. This requires a greater level of detection and management inside the CPU, and is arguably a more difficult problem.

What AMD does in Ryzen 3000 is the second solution. The first solution results in lower-than-ideal performance, and so the second solution allows AMD to ride the voltage/frequency limits of a given core. The upshot of this is that AMD also knows (through TSMC’s reporting) how long each chip or each core is expected to last, and the results in their eyes are very positive, even with a single core getting the majority of the traffic. For users that are worried about this, the question is, do you trust AMD?

Also, to point out, Intel could use this method of binning by core. There’s nothing stopping them. It all depends on how comfortable the company is with its manufacturing process aligning with the expected longevity. To a certain extent, Intel already kind of does this with its Turbo Boost Max 3.0 processors, given that they specify specific cores to go beyond the Turbo Boost 2.0 frequency – and these cores get all the priority programs to run at a higher frequency and would experience the same electromigration worries that users might have by running the priority core more often. The difference between the two companies is that AMD has essentially applied this idea chip-wide and through its product stack, while Intel has not, potentially leaving out-of-the-box performance on the table.

144 Comments

StrangerGuy - Wednesday, September 18, 2019

Pretty much the only people left OCing CPUs are epeen wavers with more money than sense.

"$300 mobo and 360mm AIO for that Intel 8 core <10% OC at >200W...Look at all that *free* performance! Amirite?"

Korguz - Wednesday, September 18, 2019

"Pretty much the only people left OCing CPUs are epeen wavers with more money than sense"

that a pretty bold statement. i know quite a few people who overclock their cpus, because intel charged too much for the higher end ones, so they had to get a lower tier chip. with zen, thats not the case as much any more as they have switched over to amd, because by this time, they would of had to get a new mobo any way, because intels upgrade paths are only 2, maybe 3 years if they are lucky.

dont " need " and AIO, as there are some pretty good air coolers out there, and some, dont like the idea of water, or liquid in their comps :-)

Xyler94 - Thursday, September 19, 2019

Sorry, here's where I'll have to disagree with you.

You'll never overclock an i3 to i5/i7 levels. If my choices were between an overclocking i3, with a Z series board, or a locked i5 with an H series board, I'd choose the i5 in a heartbeat, as that's just generally better. Overclocking will never make up the lack of physical cores.

So I agree, mostly these days overclocking is reserved for A: People with e-peens, and B:, people who genuinely need 5ghz on a single core... which are fewer than those who can utilize the multi-threaded horsepower of Ryzen... so yeah,

evernessince - Tuesday, September 17, 2019

Does it deliver well? I see plenty of people on the Intel reddit not hitting advertised turbo speeds. That's considering they are using $50+ CPU coolers as well.

"Pretty impressive to see a server cpu with 20% lower ST performance only because the low power process utilized is unable to deliver a clock speed near 4Ghz, absurd thing considering that Intel 14nm LP gives 4GHz at 1V without struggles."

What CPU are you talking about? Even AMD's 64 core monster has damn near the same IPC as the Intel Xeon 8280 (thanks to Zen 2 IPC improvements) and that CPU has LESS THEN HALF THE CORES and only consumes 20w more. The Intel CPU also costs almost twice as much. Only a moron brings up single threaded performance in a server chip conversation anyways, it's one of the least important metric for server chips. AMD's new EPYC chip crushes Intel in Core count, TCO, power consumption, and security. Everything that is important to server.

yankeeDDL - Wednesday, September 18, 2019

You do realize that the clock speed does not depend only on the process, right? Your comment sounds like that of a disgruntled Intel fanboy trying to put AMD under a bad light. For 25MHz.

Spunjji - Monday, September 23, 2019

Absolute codswallop. AMD are getting 100-300Mhz more on their peak clock speeds for Zen2 with this first-gen 7nm process tech than they were seeing with Zen+ 12nm (and nearly 400Mhz more than Zen on 14nm), so nothing about that implies that 7nm is slower than 14nm. Intel's architecture and process tech are not remotely comparable to AMD's, and we don't know what is the primary limiting factor on Zen clockspeeds.

Not sure why you're claiming lower ST performance on the server parts either - Rome is better in every single regard than its predecessors, and it's better pound-for-pound than anything Intel will be able to offer in the next 12-18 months.

PeachNCream - Tuesday, September 17, 2019

I see a tempest in a teapot on the stove of a person who is busy splitting hairs at the kitchen table. It would be more interesting to calculate how much energy and time was expended on the issue to see if the performance uplift from the fix will offset the global societal cost of all the clamoring this generated. For that, I suppose you'd have to know how many Ryzen chips are actually doing something productive as opposed to crunching at video games.

The idea of buying cheaper hardware for non-work needs sticks here. Less investment means less worry about maximizing your return on your super-happy-fun-box and less heartburn over a little bit of clockspeed on a component that plays second fiddle to GPU performance when it comes to entertainment anyway.

psychobriggsy - Tuesday, September 17, 2019

Ultimately in the end it isn't MHz that counts, it is observed performance in the software that you care about. That's why we read reviews, and why the review industry is so large.

If performance X was good enough, does it matter if it was achieved at 4.5GHz or 4.425GHz? Not really. But if the CPU manufacturer is using it as a primary competitive comparison metric (rather than a comparative metric with their other SKUs) then it has to be considered, like in this article.

It is sad that MHz is still a major metric in the industry, although now Intel IPC is similar to AMD IPC, it is actually kinda relevant.

What I'd like is better CPU power draw measurements versus what the manufacturer says. Because TDP advertising seems to be even more fraught with lies/marketing than MHz marketing! Obviously most users don't care about 10 or 20% extra power draw at a CPU level, as at a system level it will be a tiny change, but it's when it is 100% that it matters.

IMO, I'd like TDPs to be reported at all core sustained turbo, not base clocks. Sure, have a typical TDP measurement as well as the more information the better, but don't hide your 200W CPU under a 95W TDP.

TechnicallyLogic - Tuesday, September 17, 2019

Personally, I feel that AMD should have 2 numbers for the max frequency of the CPU; "Boost Clock" and "Burst Clock". Assuming that you have adequate cooling and power delivery, the boost clock would be sustainable indefinitely on a single core, while the burst clock would be the peak frequency that a single core on the CPU can reach, even if it's just for a few ms.

fatweeb - Tuesday, September 17, 2019

I could see them eventually going in this direction considering Navi already has three clocks: Base, Gaming, and Boost. The first two would be guaranteed, the last not so much.