Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics

by Dr. Ian Cutress on September 17, 2019 10:00 AM EST

Defining Turbo, Intel Style

Since 2008, mainstream multi-core x86 processors have come to the market with the notion of ‘turbo’. Turbo allows the processor, where plausible and depending on the design rules, to increase its frequency beyond the number listed on the box. There are tradeoffs: Turbo may only work for a limited number of cores, and it comes with increased power consumption and decreased efficiency. Ultimately, though, the original goal of Turbo is to offer increased throughput within specifications, and only for limited time periods. With Turbo, users could extract more performance within the physical limits of the silicon as sold.

In the beginning, Turbo was basic. When an operating system requested peak performance from a processor, it would increase the frequency and voltage along a curve within the processor power, current, and thermal limits, or until it hit some other limitation, such as a predefined Turbo frequency look-up table. As Turbo has become more sophisticated, other elements of the design come into play: sustained power, peak power, core count, loaded core count, instruction set, and a system designer’s ability to allow for increased power draw. One laudable goal here was to allow component manufacturers the ability to differentiate their product with better power delivery and tweaked firmwares to give higher performance.

For the last 10 years, we have lived with Intel’s definition of Turbo (or Turbo Boost 2.0, technically) as the de facto understanding of what Turbo is meant to mean. Under this scheme, a processor has a sustained power level, a peak power level, and a power budget. Assuming budget is available, the processor will go to a Turbo frequency based on what instructions are being run and how many cores are active. That Turbo frequency is governed by a Turbo table.

The Turbo We All Understand: Intel Turbo

So, for example, say I have a hypothetical processor that has a sustained power level (PL1) of 100W. The peak power level (PL2) is 150W*. The budget for this turbo (Tau) is 20 seconds, or the equivalent of 1000 joules of energy (20*(150-100)), which is replenished at a rate of 50 joules per second. This quad core CPU has a base frequency of 3.0 GHz, but offers a single core turbo of 4.0 GHz, and 2-core to 4-core of 3.5 GHz.

So tabulated, our hypothetical processor gets these values:

| Parameter | Symbol / Formula | Value |
|---|---|---|
| Sustained Power Level | PL1 / TDP | 100 W |
| Peak Power Level | PL2 | 150 W |
| Turbo Window* | Tau | 20 s |
| Total Power Budget* | (150-100) * 20 | 1000 J |

*Turbo Window (and Total Power Budget) is typically defined for a given workload complexity, where 100% is a total power virus. Normally this value is around 95%.

*Intel provides ‘suggested’ PL2 values and ‘suggested’ Tau values to motherboard manufacturers. But ultimately these can be changed by the manufacturers – Intel allows its partners to adjust these values without breaking warranty. Intel believes that its manufacturing partners can differentiate their systems with power delivery and other features to allow a fully configurable value of PL2 and Tau. Intel sometimes works with its partners to find the best values. But the takeaway message about PL2 and Tau is that they are system dependent. You can read more about this in our interview with Intel’s Guy Therien.

Now please note that a workload, even a single thread workload, can be ‘light’ or it can be ‘heavy’. If I created a piece of software that was a never-ending while(true) loop with no operations, then the workload would be ‘light’ on the core and not stressing all the parts of the core. A heavy workload might involve trigonometric functions, or some level of instruction-level parallelism that causes more of the core to run at the same time. A ‘heavy’ workload therefore draws more power, even though it is still contained within a single thread.

If I run a light workload that requires a single thread, it will start the processor at 4.0 GHz. If the power of that single thread is below 100W, then I will use none of my budget, as it is refilled immediately. If I then switch to a heavy workload, and the core now consumes 110W, then my 1000 joules of turbo budget would decrease by 10 joules every second. In effect, I would get 100 seconds of turbo on this workload, and when the budget is depleted, the sustained power level (PL1) would kick in and reduce the frequency to ensure that the consumption on the chip stayed at 100W. My budget of energy for turbo would not increase, because the 100 joules/second that is being added is immediately taken away by the heavy workload. This frequency may not be the 3.0 GHz base frequency – it depends on the voltage/power characteristics of the individual chip. That 3.0 GHz base value is the value that Intel guarantees on its hardware – so every unit of this hypothetical processor will run at a minimum of 3.0 GHz at 100W on a sustained workload.

To clarify, Intel does not guarantee any turbo speed that is part of the specification sheet.

Now with a multithreaded workload, the same thing occurs, but you are more likely to hit the peak power level (PL2) of 150W, in which case the 1000 joules of budget will disappear in the 20 seconds listed in the firmware. If the chip, with a 4-core heavy workload, hits the 150W value, the frequency will be decreased to maintain 150W – so as a result we may end up with less than the ‘3.5 GHz’ four-core turbo that was listed on the box, despite being in turbo.
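The two cases above (a 110W single-thread load and a 150W multithreaded load) can be checked with a few lines of Python. This is only a sketch of the simplified model in this article: the function name is mine, and it assumes the budget drains at exactly the draw above PL1, which is not how the real accounting works in detail.

```python
PL1_W = 100.0   # sustained power level of the hypothetical processor
PL2_W = 150.0   # peak power level
TAU_S = 20.0    # turbo window

# Budget in joules: the extra power above PL1, held for the full window.
BUDGET_J = (PL2_W - PL1_W) * TAU_S


def turbo_seconds(draw_w: float) -> float:
    """Seconds a full budget lasts at a constant draw (simplified model)."""
    draw_w = min(draw_w, PL2_W)      # the chip is held at PL2 at most
    if draw_w <= PL1_W:
        return float("inf")          # at or under PL1 the budget never drains
    return BUDGET_J / (draw_w - PL1_W)


print(BUDGET_J)               # 1000.0
print(turbo_seconds(110.0))   # 100.0
print(turbo_seconds(150.0))   # 20.0
print(turbo_seconds(80.0))    # inf
```

The heavier the load, the faster the same 1000 joules disappear, which is why the multithreaded case runs out in exactly the 20 second window.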

So when a workload is what we call ‘bursty’, with periods of heavy and light work, the turbo budget may be refilled during the light periods faster than it is used, allowing for more turbo when the workload gets heavy again. This makes it important when benchmarking software one after another – the first run will always have the full turbo budget, but if subsequent runs do not allow the budget to refill, they may get less turbo.
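To make the benchmarking point concrete, here is a rough second-by-second simulation of the same simplified budget model. The workload numbers (a 15 second heavy run at 150W, a 5 second pause at 60W) are invented for illustration, as are the function names.

```python
PL1_W, PL2_W, TAU_S = 100.0, 150.0, 20.0
MAX_BUDGET_J = (PL2_W - PL1_W) * TAU_S   # 1000 J


def run(requested_w, budget_j):
    """Step through one-second power requests.

    Returns (power granted each second, budget left afterwards).
    """
    granted = []
    for want in requested_w:
        # Never exceed PL2, and never spend more budget than is left.
        grant = min(want, PL2_W, PL1_W + budget_j)
        # Below PL1 the budget refills; above PL1 it drains.
        budget_j = min(MAX_BUDGET_J, budget_j + PL1_W - grant)
        granted.append(grant)
    return granted, budget_j


def seconds_in_turbo(granted):
    """Count the seconds spent above the sustained power level."""
    return sum(1 for w in granted if w > PL1_W)


heavy = [150.0] * 15   # hypothetical benchmark run
light = [60.0] * 5     # hypothetical short pause between runs

first, budget = run(heavy, MAX_BUDGET_J)
_, budget = run(light, budget)
second, budget = run(heavy, budget)

print(seconds_in_turbo(first), seconds_in_turbo(second))   # 15 9
```

In this made-up case the first run spends all 15 seconds in turbo, while the second run only gets 9 seconds before falling back to 100W, because the short pause only partly refilled the budget.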

As stated, that turbo power level (PL2) and power budget time (Tau) are configurable by the motherboard manufacturer. We see that on enterprise motherboards, companies often stick to Intel’s recommended settings, but with consumer overclocking motherboards, the turbo power might be 2x-5x higher, and the power budget time might be essentially infinite, allowing for turbo to remain. The manufacturer can do this if they can guarantee that the power delivery to the processor, and the thermal solution, are suitable.

(It should be noted that Intel actually uses a weighted algorithm for its budget calculations, rather than the simplistic view I’ve given here. That means that the data from 2 seconds ago is weighted more than the data from 10 seconds ago when determining how much power budget is left. However, when the power budget time is essentially infinite, as most consumer motherboards are set today, it doesn’t particularly matter either way given that the CPUs will turbo all the time.)
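One common way to weight recent data more heavily is an exponentially weighted moving average. The sketch below assumes that form, with Tau used as the time constant and turbo permitted while the running average stays under PL1. That is my assumption for illustration, not a statement of Intel’s exact algorithm, and the function names are hypothetical.

```python
import math

PL1_W, PL2_W, TAU_S = 100.0, 150.0, 20.0


def ewma_step(avg_w, power_w, dt_s, tau_s=TAU_S):
    """Move the running average toward the newest power sample.

    Older samples decay by exp(-dt/tau), so recent samples weigh more.
    """
    alpha = 1.0 - math.exp(-dt_s / tau_s)
    return avg_w + alpha * (power_w - avg_w)


def seconds_until_limited(power_w, start_avg_w=0.0, dt_s=0.01):
    """Time at constant power until the running average reaches PL1."""
    if power_w <= PL1_W:
        return float("inf")      # the average can never climb to PL1
    avg, steps = start_avg_w, 0
    while avg < PL1_W:
        avg = ewma_step(avg, power_w, dt_s)
        steps += 1
    return steps * dt_s


cold = seconds_until_limited(150.0)                     # from idle, roughly 22 s
warm = seconds_until_limited(150.0, start_avg_w=90.0)   # after recent load, roughly 3.6 s
print(f"{cold:.2f} s from idle, {warm:.2f} s after recent load")
```

Under this assumed model a chip coming from idle holds 150W for about 20 * ln(3) seconds, slightly longer than the flat 20 seconds of the simple budget, while a chip that was recently loaded gets far less.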

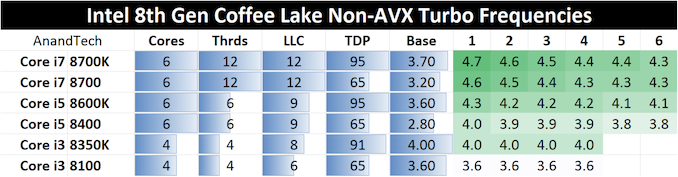

Ultimately, Intel uses what are called ‘Turbo Tables’ to govern the peak frequency for any given number of cores that are loaded. These tables assume that the processor is under the PL2 value, and there is turbo budget available. For example, here are Intel’s turbo tables for Intel’s 8th Generation Coffee Lake desktop CPUs.

So Intel provides the sustained power level (PL1, or TDP), the Base frequency (3.70 GHz for the Core i7-8700K), and a range of turbo frequencies based on the core loading, assuming the PL2 value set by the motherboard manufacturer isn’t hit and power budget is available.
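A turbo table is, at heart, a lookup keyed on the number of loaded cores. As a minimal sketch, here is the table for the hypothetical quad-core from earlier in this piece (not real Coffee Lake values); the names are mine.

```python
BASE_GHZ = 3.0   # guaranteed on a sustained workload at PL1

# Loaded cores -> peak frequency, for the hypothetical quad-core above.
TURBO_TABLE_GHZ = {1: 4.0, 2: 3.5, 3: 3.5, 4: 3.5}


def turbo_ceiling_ghz(active_cores: int) -> float:
    """Highest frequency the table allows for this many loaded cores.

    This is only a ceiling: it is reached when the chip is under PL2
    and turbo budget is available. Otherwise the power limits pull the
    frequency lower, down to no less than BASE_GHZ when sustained at PL1.
    """
    if active_cores not in TURBO_TABLE_GHZ:
        raise ValueError(f"no table entry for {active_cores} loaded cores")
    return TURBO_TABLE_GHZ[active_cores]


for cores in sorted(TURBO_TABLE_GHZ):
    print(cores, turbo_ceiling_ghz(cores))
```

The table says nothing about how long that frequency is held. That part is decided by PL1, PL2 and Tau.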

The Effect of Intel’s Turbo Regime, and Intel’s Binning

At the time, Intel did a good job in conveying its turbo strategy to the press. It helped that staying on quad-core processors for several generations meant that the actual turbo power consumption of these quad-core chips was actually lower than the sustained power value, and so we had a false sense of security that turbo could go on forever. With the benefit of hindsight, the nuances relating to turbo power limits and power budgets were obfuscated, and people ultimately didn’t care on the desktop – all the turbo for all the time was an easy concept to understand.

One other key metric that perhaps went under the radar is how Intel was able to apply its turbo frequencies to the CPU.

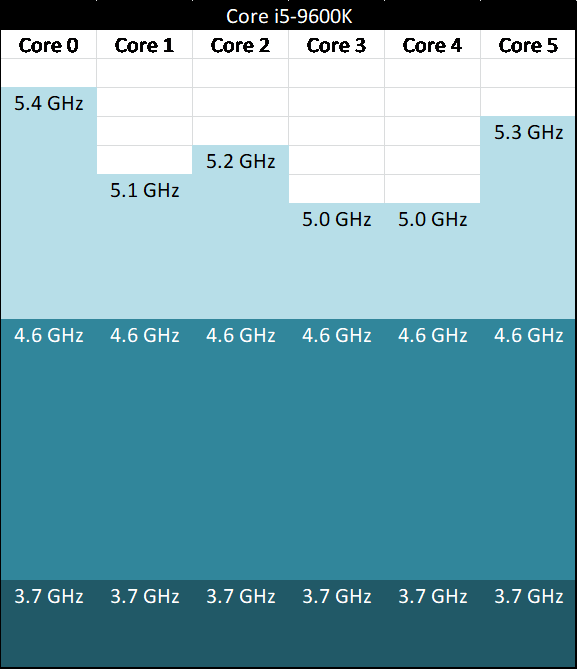

For any given CPU, any core within that design could hit the top turbo. It allowed for threads to be loaded onto whatever core was necessary, without the need to micromanage the best thread positioning for the best performance. If Intel stated that the single core turbo frequency was 4.6 GHz, then any core could go up to 4.6 GHz, even if each individual core could go beyond that.

For example, here’s a theoretical six-core Core i5-9600K, with a 3.7 GHz base frequency, and a 4.6 GHz turbo frequency. The higher numbers represent theoretical maximums of each core at the turbo voltage.

This is actually a strategy related to how Intel segments its CPUs after manufacturing, a process called binning. If a processor has the right power/thermal characteristics to reach a given frequency within a given power, then it could be labelled as the most appropriate CPU for retail and sold as such. Because Intel aimed for a homogeneous monolithic design, every core in the design was tested such that it performed equally (or almost equally) with every other core. Invariably some cores will perform better than others, if tweaked to the limits, but under Intel’s regime, it helped Intel to spread the workloads around so as not to create thermal hotspots on the processor, and also level out any wear and tear that might be caused over the lifetime of the product. It also meant that in a hypervisor, every virtual machine could experience the same peak frequencies, regardless of the cores they used.
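Put another way, under this regime the rated turbo can be no higher than what the weakest core manages. A small sketch, using the 4.6 GHz turbo of the six-core example and per-core maximums that I have made up for illustration:

```python
RATED_TURBO_GHZ = 4.6

# Hypothetical per-core maximums at the turbo voltage (invented values).
CORE_MAX_GHZ = [4.7, 4.8, 4.6, 4.9, 4.7, 4.8]


def supports_uniform_turbo(core_max_ghz, rated_ghz):
    """True if every core can reach the rated turbo frequency."""
    return min(core_max_ghz) >= rated_ghz


def core_turbo_ghz(core_index, core_max_ghz, rated_ghz):
    """Frequency a core is allowed to reach: capped at the rated turbo."""
    return min(core_max_ghz[core_index], rated_ghz)


print(supports_uniform_turbo(CORE_MAX_GHZ, RATED_TURBO_GHZ))   # True
print({i: core_turbo_ghz(i, CORE_MAX_GHZ, RATED_TURBO_GHZ)
       for i in range(len(CORE_MAX_GHZ))})
```

Every core reports the same 4.6 GHz, so the scheduler has no reason to prefer one core over another, even though most of these hypothetical cores have headroom left over.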

With binning, Intel (or any other company) selects a set of voltages and frequencies that a processor is guaranteed to reach. From manufacturing data, Intel (or others) can see the predicted lifespan of a given processor across a range of frequencies and voltages, and where a piece of silicon hits the right mark (based on internal requirements) determines which CPU it ends up as. For example, if a piece of silicon does hit 9900K voltages and frequencies, but the lifespan rating of that piece of silicon at those settings is only two years, Intel might knock it down to a 9700K, which gives a predicted lifespan of fifteen years. It’s that sort of thing that determines how high a chip can perform. Obviously chips that can achieve high targets can also be reclassified as slower parts based on inventory levels or demand.
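As a loose illustration of that decision, the sketch below walks down a list of bins and picks the highest one whose predicted lifespan clears a minimum. The 10 year requirement is a placeholder of mine, since the real internal requirements are not public, and the function is hypothetical.

```python
# Bins ordered from highest to lowest, as in the example above.
SKU_ORDER = ["9900K", "9700K"]

# Placeholder requirement: the actual internal target is not public.
MIN_LIFESPAN_YEARS = 10


def assign_bin(predicted_lifespan_years, sku_order=SKU_ORDER,
               min_years=MIN_LIFESPAN_YEARS):
    """Return the highest bin whose predicted lifespan meets the minimum.

    predicted_lifespan_years maps a bin name to the predicted lifespan of
    this piece of silicon at that bin's voltages and frequencies. Bins the
    silicon cannot reach at all are simply left out of the mapping.
    """
    for sku in sku_order:
        years = predicted_lifespan_years.get(sku)
        if years is not None and years >= min_years:
            return sku
    return None   # fails every bin


# The piece of silicon from the example: fine as a 9700K, not as a 9900K.
print(assign_bin({"9900K": 2, "9700K": 15}))   # 9700K
```

Reclassifying a good chip as a slower part for inventory or demand reasons would sit on top of this, as a business decision rather than a silicon one.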

This is how the general public, the enthusiasts, and even the journalists and reviewers covering the market, have viewed Turbo for a long time. It’s a well-known part of the desktop space and to a large extent is easy to understand. If someone said ‘Turbo’ frequency, everyone agreed on the same basic principles and no explanation was needed. We all assumed that when Turbo was mentioned, this is what they meant, and this is what it would mean for eternity.

Now enter AMD, March 2017, with its new Zen core microarchitecture. Everyone assumed Turbo would work in exactly the same way. It does not.

144 Comments


Exodite - Wednesday, September 18, 2019

I'll take the opportunity to free-ride eastcoast_pete's comment to second its content! :)

Awesome article Ian, this is the kind of stuff that brings me to AnandTech.

Also, in particular I found it fascinating to read about AMD's solution to electromigration - Zen seems to carry around a lot of surprises still! Adding to pete's ask re: overclocking vs. lifespan I'd be very interested to read more about how monitoring and counteracting the effects of electromigration actually works with AMD's current processors.

Thanks again!

HollyDOL - Wednesday, September 18, 2019

I used to have factory OCed GTX 580 (EVGA hydro model, bought when it was fresh new merchandise)... More than half of it's life time I was also running BOINC on it. Swapped for GTX 1080 when it was fresh new. So when replaced with faster card it was 5-6yrs old.

Out of this one case I guess unless you go extereme OC or fail otherwise (condensation on subambient, very bad airflow, wrongly fitted cooler etc. etc.) you'll sooner replace with new one anyway since the component will get to age where no OC saves it from being obsolete anyway.

Though I'd be curious about more reliable numbers as well.

Gondalf - Tuesday, September 17, 2019

Intel do not guarantee the turbo still it deliver well, AMD at least for now nope.

Fix or not fix it is pretty clear actual 7nm processes are clearly slower than 14nm, nothing can change this. Are decades that a new process have always an higher drive current of the older one, this time this do not happen.

Pretty impressive to see a server cpu with 20% lower ST performance only because the low power process utilized is unable to deliver a clock speed near 4Ghz, absurd thing considering that Intel 14nm LP gives 4GHz at 1V without struggles.

Anyway.....this is the new world in upcomin years.

Korguz - Tuesday, September 17, 2019

intel also does not guarantee your cpu will use only 95 watts when at max speed... whats your point ? cap that cpu at the watts intel specifies.. and look what happens to your performance.

Gondalf - Tuesday, September 17, 2019

My point power consumption is not a concern in actual desktop landscape only done of entusiasts with an SSD full of games, they want top ST perf at any cost, no matter 200 W of power consumption.

Absolutely different is the story in mobile and server, but definitevely not in all workloads around.

vanilla_gorilla - Tuesday, September 17, 2019

> they want top ST perf at any cost

They actually don't. Because no one other than the farmville level gamer is CPU bound. Everyone is GPU bound. The only exception is possibly people playing at 1080p (or less) and their framerates are 200-300 or more. There are no real situations where you will see any perceptible difference between the high end AMD or Intel CPU for gaming while using a modern discreet GPU.

The difference is buying AMD is cheaper, both the CPU and the platform, which has a longer lifetime by the way (AM4) and you get multicore performance that blows Intel away "for free".

N0Spin - Monday, October 21, 2019

I have seen reviews of demanding current generation gaming titles like Battlefield 5 in which reviewers definitely noted that the CPU level/and # of cores indeed influences the performance. I am not stating that this is always the case, but CPUs/cores can and do matter in a number of instances even if all you do is game, after running a kill all extraneous processes script.

Xyler94 - Tuesday, September 17, 2019

You're speaking for yourself here...

I don't care if my CPU gets me 5 more FPS when I'm already hitting 200+ FPS, I care whether the darn thing doesn't A: Cook itself to death and B: Doesn't slow down when I'm hitting it with more tasks.

People have done the test, and you can too if you have an overclocking friendly PC. disable all but 1 core, and run it at 4GHZ, and see how well your PC performs. Then, enable 4 cores, and set them at 1GHZ, see how well the PC feels. It was seen that 4 cores at 1GHz was better than 1 core at 4ghz. The reality? More cores do more work. It's that simple.

You either don't pay electricity or are in a spot where the electricity cost of your computer doesn't factor into your monthly bill. Some people do care if a single part of their PC draws 200W of power. I certainly care, because the lower the wattage, I don't have to buy a super expensive UPS to power my device. Also, gaming is becoming more multi-threaded, so eventually, the ST performance won't matter anyways.

Korguz - Tuesday, September 17, 2019

Gondalf, sorry but nope.. for some how much power a cpu uses is a concern, specially when one goes to choose HSF to cool that cpu, and they buy one, only to find that it isnt enough to keep it cool enough to run at the specs intel says.. and labeling a cpu to use 95 watts, and have it use 200 or more, is a HUGE difference. but you are speaking for your self, on the ST performance, as Xyler94 mentioned.

evernessince - Tuesday, September 17, 2019

How about no. 200w for a few FPS sounds like a terrible trade off unless you are cooking eggs on your nipples with the 120 F room you are sitting in after that PC is running for 1 hour.