The Ice Lake Benchmark Preview: Inside Intel's 10nm

by Dr. Ian Cutress on August 1, 2019 9:00 AM EST

Posted in: CPUs, Intel, GPUs, 10nm, Core, Ice Lake, Cannon Lake, Sunny Cove, 10th Gen Core

Cache and TLB Updates

One of the biggest changes in the new Sunny Cove core is the cache hierarchy. Throughout most of the last decade, Intel has kept the same cache configuration among its server cores with very few changes, aside from improvements to the micro-op cache from Sandy Bridge through to today. With Sunny Cove, we get some key updates.

From our microarchitecture disclosure article, we know that Sunny Cove brings with it a larger L1 data cache, as well as a doubled L2 cache, compared to Skylake. More associativity comes along with this as well.

| Core Cache Comparison | ||||||

| Sunny Cove | AnandTech | Cannon Lake | Skylake | Sandy Bridge | AMD Zen 2 |

| 48 KB 12-way | L1-D | 32 KB 8-way | 32 KB 8-way | 32 KB 8-way | 32 KB 8-way |

| 32 KB 8-way | L1-I | 32 KB 8-way | 32 KB 8-way | 32 KB 8-way | 32 KB 8-way |

| 512 KB 8-way | L2 | 256 KB 4-way | 256 KB 4-way | 256 KB 8-way | 512 KB 8-way |

| 2 MB 16-way | L3/core | 2 MB 16-way | 2 MB 16-way | 2 MB 16-way | 4 MB 16-way |

| 2304 | uOp Cache (entries) | 1536 | 1536 | 1536 | 4096 |

I’ve also included the size of the micro-op cache in this comparison, because we understand that the L1-I and the micro-op cache are typically located next to each other in the die design, so increasing the size of one typically means limiting the size of the other. Given that both of them work to accelerate the same types of data, it is important to find the right balance. For Zen 2, AMD decided to halve the L1-I and double its associativity, and then double the micro-op cache from 2k to 4k entries. For Sunny Cove, Intel has increased the L1-D by 50% and also increased the micro-op cache by 50%, taking advantage of the second generation 10nm process.
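To put the capacity and associativity figures from the comparison table into perspective, here is a minimal sketch that derives the number of sets in each cache and checks the 50% growth figures. The 64-byte line size is an assumption (it is not stated in the table), and the helper names are purely illustrative.

```python
LINE_BYTES = 64  # assumption: 64-byte cache lines, not stated in the table


def num_sets(size_kb: int, ways: int, line_bytes: int = LINE_BYTES) -> int:
    """Number of sets = capacity / (ways * line size)."""
    return (size_kb * 1024) // (ways * line_bytes)


def growth_pct(old: float, new: float) -> float:
    """Relative growth from old to new, in percent."""
    return (new - old) / old * 100.0


# (size in KB, ways) copied from the comparison table
caches = {
    "Skylake L1-D": (32, 8),
    "Sunny Cove L1-D": (48, 12),
    "Skylake L2": (256, 4),
    "Sunny Cove L2": (512, 8),
}

sets = {name: num_sets(size_kb, ways) for name, (size_kb, ways) in caches.items()}
for name, count in sets.items():
    print(f"{name}: {count} sets")

l1d_growth = growth_pct(32, 48)       # L1-D capacity in KB
uop_growth = growth_pct(1536, 2304)   # micro-op cache entries
print(f"L1-D growth: {l1d_growth:.0f}%, uOp cache growth: {uop_growth:.0f}%")
```

Under that line-size assumption, the set count stays the same for both the L1-D and the L2 when going from Skylake to Sunny Cove, which suggests that the extra capacity comes entirely from the added ways.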

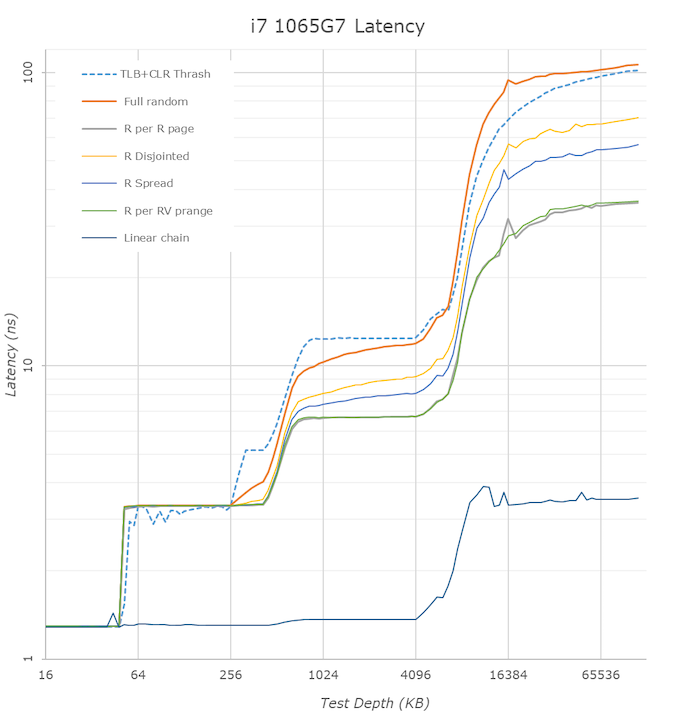

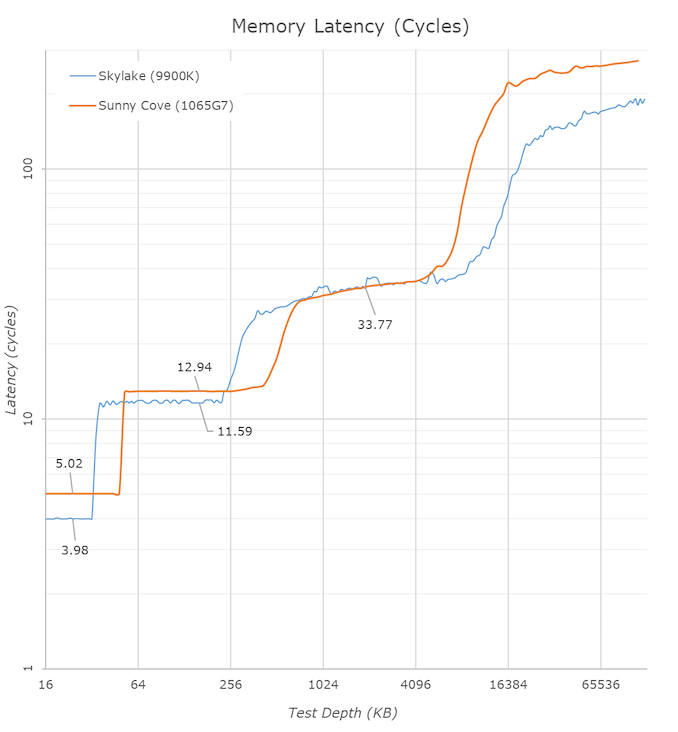

In the latency graph comparison above, the increased cache capacities are clearly visible. The L1’s increase from 32KB to 48KB stands out, as does the L2’s increase from 256KB to 512KB.

On the L2 side, it’s interesting to see that, once again, the new core’s TLB structures don’t quite cover the whole capacity of the L2, which is why the latter half of the L2 has worse access latencies for L1D TLB miss patterns.

L3 latencies look similar, however we’ll delve into that in just a bit. The DRAM latencies are of course apples and oranges in this case, as Intel’s new LPDDR4X memory controller in the new ICL part doesn’t have a counterpart we can compare to, but as expected the memory latency is notably worse than on a desktop part, so no big surprises there.

What’s rather disappointing, however, is that we’re not really seeing any kind of change to the prefetchers: our access patterns look identical between the two microarchitectures. We had expected Intel to improve in this regard, but alas it seems that, at least for our test cases, there have been no improvements.
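The TLB coverage point can be made concrete with a quick back-of-the-envelope calculation. This is a sketch under two assumptions: that the latency test walks 4K pages, and that the relevant structure is the 64-entry 4K L1-D TLB listed in the Sunny Cove TLB table later in this article.

```python
PAGE_BYTES = 4 * 1024          # assumption: the test uses 4K pages
L1D_TLB_4K_ENTRIES = 64        # from the Sunny Cove TLB table in this article
L2_CACHE_BYTES = 512 * 1024    # Sunny Cove L2 capacity


def tlb_reach_bytes(entries: int, page_bytes: int) -> int:
    """Memory covered by a TLB: one page per entry."""
    return entries * page_bytes


reach = tlb_reach_bytes(L1D_TLB_4K_ENTRIES, PAGE_BYTES)
covered_fraction = reach / L2_CACHE_BYTES

print(f"L1-D TLB reach: {reach // 1024} KB")
print(f"Share of L2 covered: {covered_fraction:.0%}")
```

With those inputs, the L1-D TLB reaches 256 KB, or half of the 512 KB L2, which lines up with the latter half of the L2 showing worse latencies once accesses start missing in the L1-D TLB.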

One of the major balancing acts with cache sizes is also cache latency. The bigger the cache, the longer it takes to retrieve data from it, and increasing associativity can have the same effect. Intel has for the longest time cited a 4-cycle latency to its L1 cache, and a 12-cycle latency to its L2 cache. With Sunny Cove this changes, in quite a substantial way.

| Core Cache Latency (in cycles) | ||||||

| Sunny Cove | AnandTech | Cannon Lake | Skylake | Sandy Bridge | AMD Zen 2 |

| 5 | L1 | 4 | 4 | 4 | 4 |

| 13 | L2 | 12 | ~12 | 12 | 12 |

| 30-36 | L3 | 26-37 | 34 | |||

Due to the increase in the L1 cache, that 4-cycle latency is now a 5-cycle latency. Intel is hoping that its doubled L1 store bandwidth will help hide that latency, however going from four cycles to five adds 25% latency onto every data fetch, which is a heavy hit to take. This is why a lot of the rest of the core is also beefed up, so work can still continue to cover for this extra cycle. It is worth noting that Intel’s Atom cores in the past had an L1 latency of 3 cycles, however they have also since moved to 4-cycle.

The L2 also changes, from 12 cycles to 13 cycles. You can’t double a cache for free, and it is interesting to note here that AMD and Intel now share the same L2 cache size and the same associativity, however AMD has a one-cycle advantage over Intel. This has a knock-on effect for L3 cache latency too, which on Intel has increased from ~26-37 cycles on Skylake to ~30-36 cycles. The latency here isn’t uniform because the cache isn’t uniform: accessing non-local L3 slices comes with a latency penalty, as opposed to, say, AMD’s uniform-latency L3.

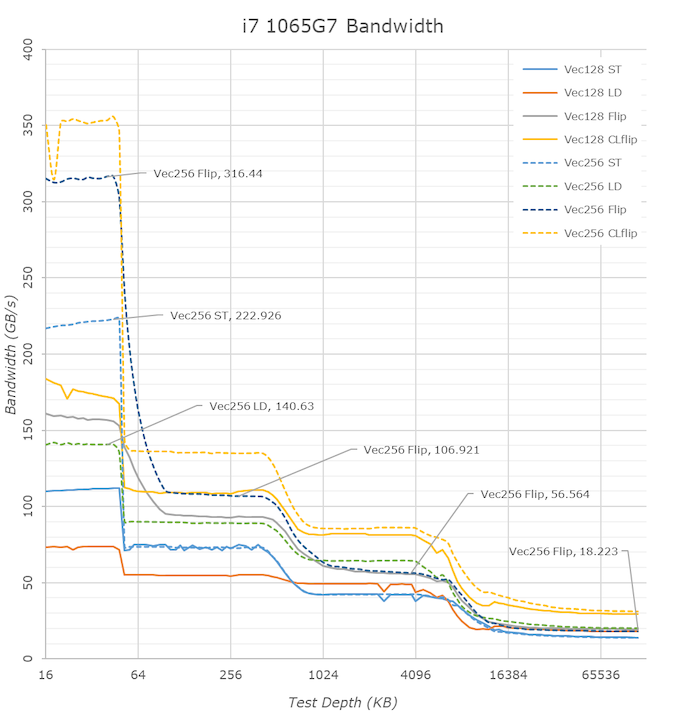

One of the biggest additions Intel was keen to highlight in our Sunny Cove microarchitecture disclosure is the increase in L1 bandwidth. Based on our testing, Skylake’s L1 data cache was capable of 2x32-byte read and 1x32-byte write per clock. For Sunny Cove this has increased, but it gets a bit more complicated. In one clock Sunny Cove can perform 2x64-byte reads and either 1x64-byte write, or 1x32-byte write, or 2x16-byte writes. So both reads and writes, at peak, have doubled in width, with the write configurations being a bit more complex.
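The per-clock figures quoted here can be tabulated to confirm the doubling. This is only an illustrative sketch: the `L1Ports` type and its field names are made up for this example, and the numbers are the ones from the paragraph above.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class L1Ports:
    """Hypothetical container for per-clock L1-D transfer widths, in bytes."""
    read_bytes_per_clk: int
    write_options: Tuple[int, ...]  # alternative write configurations


skylake = L1Ports(read_bytes_per_clk=2 * 32, write_options=(1 * 32,))
sunny_cove = L1Ports(
    read_bytes_per_clk=2 * 64,
    write_options=(1 * 64, 1 * 32, 2 * 16),
)


def peak_write(ports: L1Ports) -> int:
    """Best-case write bytes per clock across the alternative configurations."""
    return max(ports.write_options)


read_ratio = sunny_cove.read_bytes_per_clk / skylake.read_bytes_per_clk
write_ratio = peak_write(sunny_cove) / peak_write(skylake)

print(f"Peak read:  {skylake.read_bytes_per_clk} -> {sunny_cove.read_bytes_per_clk} B/clk ({read_ratio:.1f}x)")
print(f"Peak write: {peak_write(skylake)} -> {peak_write(sunny_cove)} B/clk ({write_ratio:.1f}x)")
```

Note that only the single 64-byte write reaches the doubled peak; the other two write configurations move 32 bytes per clock, the same as Skylake.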

We can see the direct effects of the bandwidth change in our bandwidth tests. The increase in L1 store bandwidth is extremely evident: it measures in at 222GB/s on the ICL part, while only reaching 139GB/s on the 28% higher clocked 9900K. If we normalise for frequency, we indeed see a doubling of the store bandwidth of the new core.

The L2 cache bandwidth looks unchanged from Skylake: the part here lags behind by an amount equal to the frequency difference. Surprisingly, the L3 bandwidth fares quite well here and is almost at parity with the desktop 9900K despite the frequency difference. The DRAM bandwidth is inferior on the ICL mobile chip, but again that’s to be expected due to the apples and oranges DRAM architecture comparison.

Moving on to the TLB configurations, it turns out that Intel has moved where this data is stored. It was typically reported in CPUID.02h, however it is now found in CPUID.18h, and the information for the different page sizes and capabilities is now given in separate sub-leaves. The TLBs look as follows, broken down into configurations:
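The frequency normalisation is simple enough to spell out. A minimal sketch, using only the three figures quoted above; the variable names are illustrative, and the 1.28 clock ratio is taken directly from the "28% higher clocked" statement rather than from measured frequencies.

```python
icl_store_gbps = 222.0     # L1 store bandwidth on the ICL part
cfl_store_gbps = 139.0     # L1 store bandwidth on the 9900K
clock_ratio = 1.28         # 9900K clock / ICL clock ("28% higher clocked")

# Raw advantage of the ICL part, at its lower clock
raw_ratio = icl_store_gbps / cfl_store_gbps

# Scale up by the clock deficit to compare bytes moved per clock
per_clock_ratio = raw_ratio * clock_ratio

print(f"Raw store bandwidth ratio:       {raw_ratio:.2f}x")
print(f"Per-clock store bandwidth ratio: {per_clock_ratio:.2f}x")
```

This prints roughly 1.60x raw and 2.04x per clock, which is consistent with a doubling of the store width.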

| Sunny Cove TLB | |||

| AnandTech | Type | Entries | Associativity |

| L1-D | 4K | 64 | 4-way |

| L1-D | 2M | 32 | 4-way |

| L1-D | 1G | 8 | full |

| L1-I | 4K+2M | 8 | full |

| L1-I | 4K+2M+1G | 16 | full |

| L2 | 4K+2M | 1024 | 8-way |

| L2 | 4K+1G | 1024 | 8-way |

This means that for 4K and 2M L1-I entries, there are a total of 8 + 16 = 24 entries possible, but only 16 for 1G pages. For 4K L2 entries, there are 1024 + 1024 = 2048 entries possible, however only 1024 each are possible for 2M and 1G pages. The peak numbers align with what Intel has told us in our microarchitecture disclosure, however it is good to dive into what is possible.
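The per-page-size totals can be tallied directly from the table rows. A short sketch, with the rows transcribed from the Sunny Cove TLB table; the function and variable names are illustrative.

```python
from collections import defaultdict

# (level, page sizes served, entries), transcribed from the Sunny Cove TLB table
TLB_ROWS = [
    ("L1-D", ("4K",), 64),
    ("L1-D", ("2M",), 32),
    ("L1-D", ("1G",), 8),
    ("L1-I", ("4K", "2M"), 8),
    ("L1-I", ("4K", "2M", "1G"), 16),
    ("L2", ("4K", "2M"), 1024),
    ("L2", ("4K", "1G"), 1024),
]


def max_entries(rows):
    """Upper bound on entries available to each (level, page size) pair."""
    totals = defaultdict(int)
    for level, pages, entries in rows:
        for page in pages:
            totals[(level, page)] += entries
    return dict(totals)


totals = max_entries(TLB_ROWS)
for (level, page), entries in sorted(totals.items()):
    print(f"{level} {page}: up to {entries} entries")
```

These are upper bounds per page size: structures shared between page sizes cannot hold the maximum for every size at the same time.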

261 Comments


rangerdavid - Friday, August 2, 2019 - link

What Moizy said. Ian, you are quite fair and diplomatic in your responses. And if you are in some kind of Intel marketing conspiracy, for heaven's sake, I hope you are getting a good cut! Buy yourself something pretty, fella... (grin)

0ldman79 - Friday, August 2, 2019 - link

Rock on man.

Well said.

Sailor23M - Monday, August 5, 2019 - link

+1 Moizy thanks. I really do not have the time to go searching and collecting all the leaks out there, so this article was well timed for me and at a high level lets me know what to expect from these chips.

close - Monday, August 5, 2019 - link

@Moizy: I guess props to AT if this turns out to be the real situation.

Curious what happens if we have another "oh our puff piece didn't notice they were using a sub 0 chiller under the table" kind of situation. Last time there was an anemic "oh, yeah, Intel could have been more straight forward" type reaction. So you can understand why people are skeptical about results that can't be independently validated, even (especially? ...given past experiences) if they come from AT.

tijag - Thursday, August 1, 2019 - link

You sir are a first rate obtuse troll.

chowmanga - Thursday, August 1, 2019 - link

Would you say there was a difference between getting a product in advance and getting one when the rest of the press gets one?

Moizy - Thursday, August 1, 2019 - link

To me, the only meaningful difference is timing. The unsound logic of the argument is a) Intel invites a few select press to a preview event months before availability, b) the select few press are flattered by the privilege and develop positively biased feelings toward Intel, therefore c) they report positively biased, flawed findings due to the privilege.

It's true that inviting a select few to the event could psychologically influence those few, making them slightly less objective than they may have otherwise been. But Ian's reporting isn't built on subjective opinions on Icy Lake, 10nm, and Intel. His reporting is built on his objective testing, using his standardized benchmarks. So even if Ian's view of Intel and Icy Lake were a little swayed by this privilege (which I doubt they were, he's not a new kid on the block, he deals with PR and Marketing and the like all the time), for this to translate into flawed, biased reporting, Ian would need to purposefully alter his standardized benchmarks in order to produced positively biased results. There is zero evidence that he did that here, and there is zero precedence to him doing that in the past, so the original logic is extremely flawed.

uberDoward - Friday, August 2, 2019 - link

Based on the fact (as Ian mentioned at the beginning of the article) it DOES sound like others that were invited came unprepared, and willing to just spout Intel's rhetoric without objective measurements. Kudos to Ian for arriving prepared!

Santoval - Friday, August 2, 2019 - link

You are ranting but saying nothing substantial or even anything that makes sense. "Conflict of interest"? Really? In what way exactly, do you think they gifted Ian and the other AnandTech editors a few hundreds of Intel shares each? Maybe they gave them free vacations to Bali just to "thank" them? Or free top-end Intel based laptops and Intel SSDs for the next 5 years?

These examples *would* be conflicts of interest. Previewing an Ice Lake reference design while pointing out an entire list of caveats and limitations is not an example of a conflict of interest. "Professional distance" does not mean declining a product preview (why on Earth should they?), it means keeping an equal distance from all the companies the products of which you preview.

bcronce - Friday, August 2, 2019 - link

I learned a lot. Like trade-offs between latency and throughput in several different ways. Or increase in cache-hits vs latency.