AMD Joins CXL Consortium: Playing in All The Interconnects

by Anton Shilov on July 19, 2019 5:00 PM EST

Posted in: Interconnect, AMD, CXL, PCIe 5.0

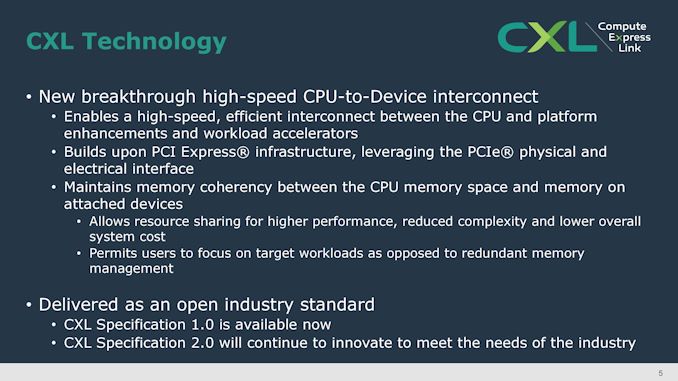

AMD's CTO, Mark Papermaster, has stated in a blog post that AMD has joined the Compute Express Link (CXL) Consortium. The industry group is led by nine industry giants including Intel, Alibaba, Google, and Microsoft, but has over 35 members. The CXL 1.0 technology uses the PCIe 5.0 physical infrastructure to enable a coherent low-latency interconnect protocol that allows CPU and non-CPU resources to be shared efficiently and without complex memory management. The announcement indicates that AMD now supports all of the current and upcoming non-proprietary high-speed interconnect protocols, including CCIX, Gen-Z, and OpenCAPI.

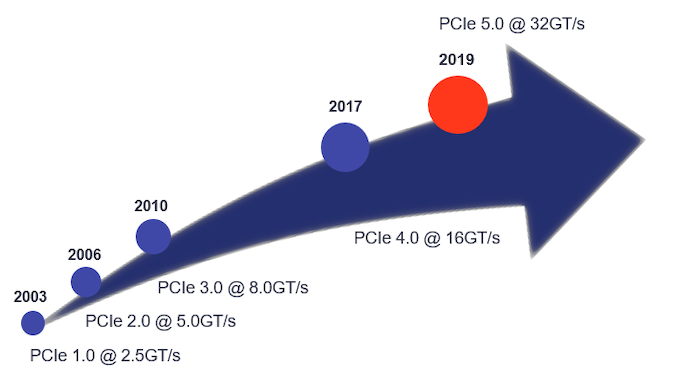

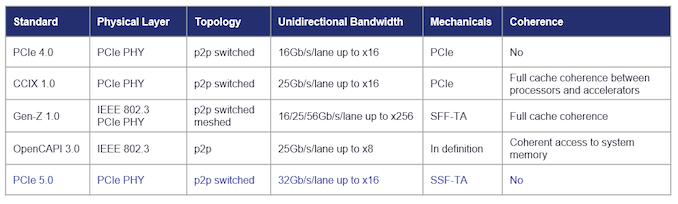

PCIe has enabled a tremendous increase in bandwidth, from 2.5 GT/s per lane in 2003 to 32 GT/s per lane in 2019, and is set to remain a ubiquitous physical interface of upcoming SoCs. Over the past few years it turned out that to enable an efficient coherent interconnect between CPUs and other devices, specific low-latency protocols were needed, so a variety of proprietary and open-standard technologies built upon the PCIe PHY were developed, including CXL, CCIX, Gen-Z, Infinity Fabric, NVLink, CAPI, and others. In 2016, IBM (with a group of supporters) went as far as developing the OpenCAPI interface relying on a new physical layer and a new protocol (but this is a completely different story).

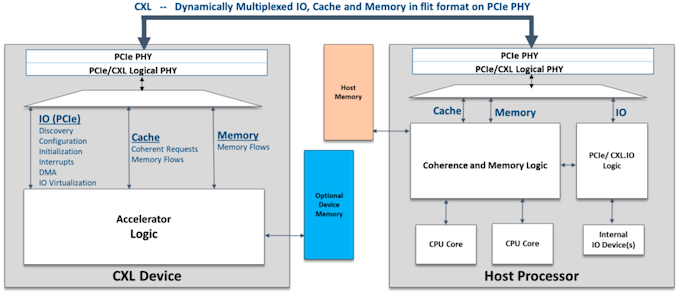

Each of the protocols that rely on PCIe has its peculiarities and numerous supporters. The CXL 1.0 specification introduced earlier this year was primarily designed to enable heterogeneous processing (by using accelerators) and memory systems (think memory expansion devices). The low-latency CXL runs on the PCIe 5.0 PHY stack at 32 GT/s and supports x16, x8, and x4 link widths natively. Meanwhile, in degraded mode it also supports 16.0 GT/s and 8.0 GT/s data rates as well as x2 and x1 links. In the case of a PCIe 5.0 x16 slot, CXL 1.0 devices will enjoy 64 GB/s of bandwidth in each direction. It is also noteworthy that CXL 1.0 features three protocols within itself: the mandatory CXL.io, as well as CXL.cache for cache coherency and CXL.memory for memory coherency, which are needed to effectively manage latencies.
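The link-width and data-rate combinations above can be turned into rough peak bandwidth figures. The following is a minimal sketch, not taken from the CXL specification: the function name `link_bandwidth_gb_s` is hypothetical, and it assumes the only overhead is the 128b/130b line encoding that PCIe uses at 8 GT/s and above. Protocol-level overhead is ignored, so real throughput would be lower.

```python
from fractions import Fraction

# Assumption: 128b/130b line encoding, as used by PCIe at 8, 16 and 32 GT/s.
ENCODING = Fraction(128, 130)

def link_bandwidth_gb_s(rate_gt_s, lanes):
    """Peak bandwidth in one direction, in GB/s, for a link running at
    rate_gt_s GT/s per lane across the given number of lanes."""
    gbit_per_s = rate_gt_s * ENCODING * lanes  # encoded payload bits per second
    return float(gbit_per_s / 8)               # bits to bytes

# Native CXL 1.0 mode is 32 GT/s on x16, x8 or x4; the remaining
# combinations correspond to the degraded modes described in the article.
for rate in (32, 16, 8):
    for lanes in (16, 8, 4, 2, 1):
        print(f"{rate:>2} GT/s x{lanes:<2} -> {link_bandwidth_gb_s(rate, lanes):6.2f} GB/s")
```

Under these assumptions a 32 GT/s x16 link comes out at about 63 GB/s per direction; the 64 GB/s figure is the raw rate before encoding overhead (32 GT/s × 16 lanes ÷ 8 bits per byte).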

In the coming years computers in general and machines used for AI and ML processing will require a diverse combination of accelerators featuring scalar, vector, matrix and spatial architectures. For efficient operation, some of these accelerators will need to have low-latency cache coherency and memory semantics between them and processors, but since there is no ubiquitous protocol that supports appropriate functionality, there will be a fight between some of the standards that do not complement each other.

The biggest advantage of CXL is that it is not only supported by over 30 companies already, but its founding members include such heavyweights as Alibaba, DellEMC, Facebook, Google, HPE, Huawei, Intel, and Microsoft. All of these companies build their own hardware architectures and their support for CXL means that they plan to use the technology. Since AMD clearly does not want to be left behind the industry, it is natural for the company to join the CXL party.

Since CXL relies on PCIe 5.0 physical infrastructure, companies can use the same physical interconnects but develop the transmission logic required. At this point AMD is not committing to enabling CXL on future products, but is throwing its hat into the ring to discuss how the protocol develops, should it appear in a future AMD product.

Related Reading:

- Compute Express Link (CXL): From Nine Members to Thirty Three

- CXL Specification 1.0 Released: New Industry High-Speed Interconnect From Intel

- Gen-Z Interconnect Core Specification 1.0 Published

- Hot Chips: Intel EMIB and 14nm Stratix 10 FPGA Live Blog (8:45am PT, 3:45pm UTC)

Sources: AMD, CXL Consortium, PLDA

43 Comments


ats - Sunday, July 21, 2019 - link

Actually, it is DRAM latency that has remained basically unchanged for the last 20+ years. That's because the fundamental operation of DRAM has remained unchanged for 20+ years. The only changes that have taken place are in the DRAM interfaces in order to deliver more bandwidth via increasing levels of prefetch/multiplexing.

Latency is a fundamental aspect of computer memory and a first-order performance impact on actual real-world workloads. And no, there is no memory module that is doing 8 or 10ns except for some very, very specialized designs that cost an order of magnitude more than commodity DRAM.

ats - Sunday, July 21, 2019 - link

No one is going to shift main memory to PCIe-based technologies. I'm fairly confident in that. You say only, I say that is higher than loaded latency in a 4-socket system... And no, it will be forever. Memory is on a dedicated low-latency non-switched interface with full dedicated pathing and logic. Memory hanging off of PCIe, Infinity, or Gen-Z++++++++++++ isn't ever going to be competitive.

I have multiple actual high performance CPUs across multiple architectures under my belt. It's not a matter of saying never say never, it's a matter of physics.

thomasg - Saturday, July 20, 2019 - link

Firstly, mainstream is still at PCIe 3.0 (even with PCIe 4.0 now partially available due to Zen2), i.e. a x16 slot is well below the typical DDR4 single-channel speed (2666 MT/s, 21 Gbyte/s).

PCIe 4.0 moves this up to 32 Gbyte/s, but for these platforms RAM has moved to typically 3200 MT/s DDR4, 50 Gbyte/s in dual-channel.

So even for PCIe 4.0, dual-channel is just fine.

DDR5 is in the pipeline and it will allow at least 32 Gbyte/s per channel and thus satisfy even a PCIe 5.0 x16 configuration with dual-channel.

It is expected that DDR5 will specify up to 6400 MT/s per pin and move from the 1*64 pin interface of DDR4 to a 2*40 pin interface.

This means, mainstream will not move up to more than 2 channels as we know it. But mainstream will move to DDR5 in the next years and DDR5 comes with narrower channels which will be doubled.

So we will see 2*2*40 bit interfaces in mainstream platforms instead of 2*64 bit interface, allowing - in the same pincount - for up to 128 Gbyte/s.

voicequal - Friday, July 19, 2019 - link

DDR4-3200 dual channel:

64-bit * 1600 MHz * 2 DDR * 2 channel = 51.2 GB/s

PCIe 5.0 x16:

32.0 GT/s * 128b/130b * 16 lanes = 63.02 GB/s

PCIe 5.0 has more bandwidth and has 16 lanes in each direction, whereas the DDR4 memory bus is shared for both reads & writes.
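The arithmetic in the comment above can be checked with a short script. This is only a sketch of the same back-of-the-envelope calculation: the function names are hypothetical, both results are theoretical peaks, and protocol overhead beyond the 128b/130b line encoding is not modelled.

```python
def ddr_bandwidth_gb_s(bus_bits, clock_mhz, channels):
    """Peak DDR bandwidth in GB/s: two transfers per clock (double data rate)."""
    transfers_per_s = clock_mhz * 1e6 * 2
    return bus_bits * transfers_per_s * channels / 8 / 1e9

def pcie_bandwidth_gb_s(rate_gt_s, lanes, payload_bits=128, total_bits=130):
    """Peak PCIe bandwidth per direction in GB/s after line-encoding overhead."""
    return rate_gt_s * payload_bits / total_bits * lanes / 8

ddr4_dual = ddr_bandwidth_gb_s(bus_bits=64, clock_mhz=1600, channels=2)
pcie5_x16 = pcie_bandwidth_gb_s(rate_gt_s=32.0, lanes=16)

print(f"DDR4-3200 dual channel: {ddr4_dual:.2f} GB/s (shared by reads and writes)")
print(f"PCIe 5.0 x16:           {pcie5_x16:.2f} GB/s (in each direction)")
```

The division by 8 converts bits to bytes in both functions, which is the step left implicit in the two formulas above.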

qwertymac93 - Saturday, July 20, 2019 - link

First of all, it will likely take at least two years before we see PCI-E 5 hit mainstream desktop and by then DDR5 will be a thing.

Secondly, mainstream desktop might be limited to 16 PCI-E 5 lanes of bandwidth TOTAL. I don't think many people realize the number of high speed lanes available in mainstream platforms has gone down with each PCI-E generation. Back in the 2.0 days we had mainstream platforms with 44 high speed lanes from the northbridge, then with 3.0 we moved to ~16-24 lanes, now with 4.0 again we have just 16-24 lanes, except now you have to shut some devices down (namely SATA) to use all of the lanes. With 5.0, your primary PCI-E slot might only be an 8x slot, instead of the 16x we are used to.

Luffy1piece - Friday, July 19, 2019 - link

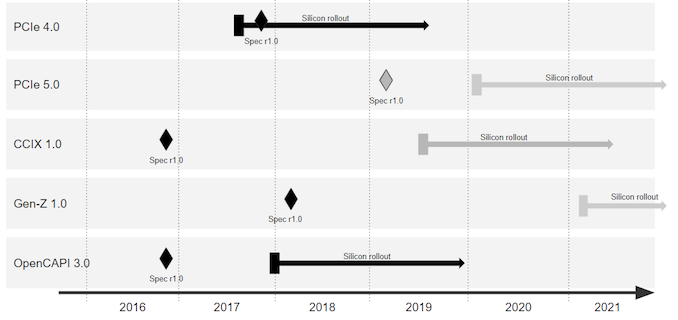

@Anton Shilov: Could you please share the source for the last graph with silicon rollout timelines? Puzzled as to why Gen-Z rollout is so late, despite it having great specs - bandwidth, latency, memory semantics, coherency, etc.

ajc9988 - Friday, July 19, 2019 - link

That is an easy one. Gen-Z relies on a couple of extra technologies, like FPGA and PCIe 5.0, to operate, just as CXL relies on the PCIe 5.0 PHY. That means they have to wait for PCIe 5.0 to hit products, among other considerations. It takes 18-24 months from publication of a new PCIe standard to its incorporation in products. So PCIe 5.0 likely will not show up in products until 2021, about the time AMD is on 5nm and Intel, if it meets its roadmap, is on 7nm, and we will have to wait until then for the implementation in hardware. The PCIe 6.0 spec is set to be published in 2021, and CXL explains the acceleration of that standard so that 4, 5, and 6 are all published within 2 years of each other (it was 3 years each up to PCIe 3.0, then slowed to a 6 year wait for PCIe 4.0, published in 2017, then 5 in 2019 when 4.0 is implemented, and 6 in 2021 when 5 is implemented, setting up for the harder hitting coherency being in place for 2023 with PCIe 6.0 and future implementations of Gen-Z and CXL).

So it is not really late relative to the other standards; it is waiting on a PHY that supports it to be introduced, along with completion of development for incorporation, which takes time and money. Patience is key.

catavalon21 - Friday, July 19, 2019 - link

Ian tweeted the link a few weeks ago

https://www.plda.com/blog/category/technical-artic...

catavalon21 - Friday, July 19, 2019 - link

Also, it's the "PLDA" source at the end of the article.

Luffy1piece - Monday, July 22, 2019 - link

Thanks for the link :)