The AMD Radeon RX 5700 XT & RX 5700 Review: Navi Renews Competition in the Midrange Market

by Ryan Smith on July 7, 2019 12:00 PM EST

Power, Temperatures, & Noise

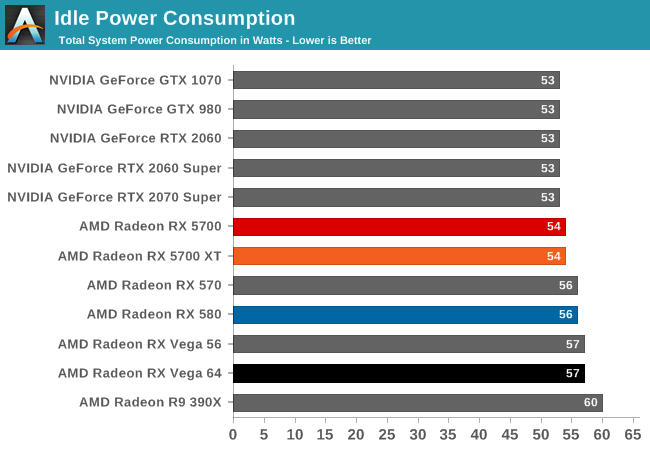

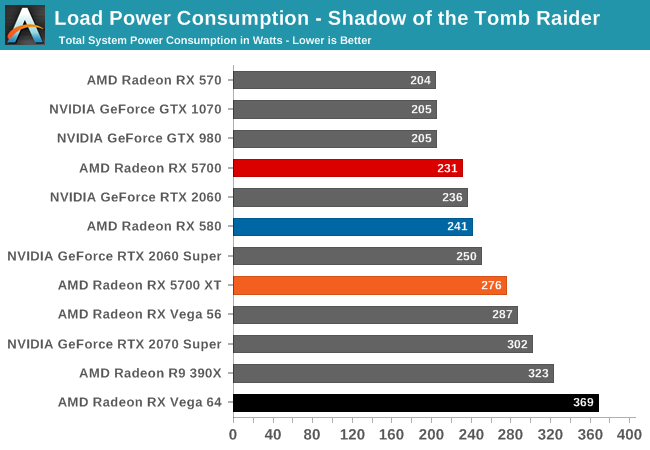

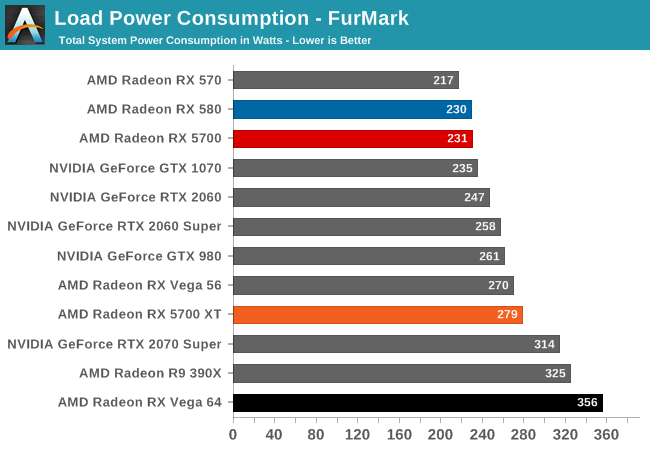

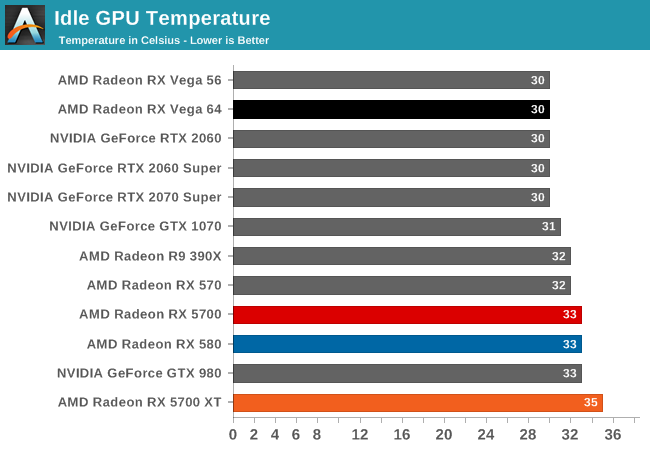

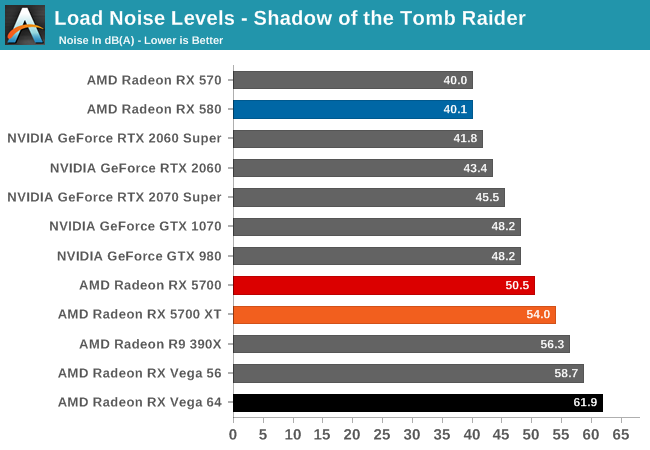

Last, but not least of course, is our look at power, temperatures, and noise levels. While a high performing card is good in its own right, an excellent card can deliver great performance while also keeping power consumption and the resulting noise levels in check.

Radeon Video Card Voltages

| 5700 XT Max | 5700 Max | 5700 XT Idle | 5700 Idle |
|---|---|---|---|
| 1.2v | 1.025v | 0.725v | 0.775v |

Looking at boost voltages for AMD's new midrange 7nm cards, we don't have too many points of comparison right now. But still, with AMD's drivers reporting a maximum boost voltage of 1.2v for the 5700 XT, not even the incredibly juiced Polaris 30-based Radeon RX 590 took quite so much voltage. It may very well be that TSMC's high-performance 7nm process simply requires a lot of voltage here, but it may also be a sign that AMD is riding the voltage/frequency curve pretty hard to get those high clockspeeds.

By contrast, the 5700 (vanilla) is a much more mundane card. With its lower clockspeeds, the card never goes above 1.025v according to AMD's drivers. Given the impact of voltage on power consumption, it's actually a bit surprising that the spread between the two cards is so large.
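To put a rough number on why that voltage gap matters, here is a minimal sketch using the textbook first-order model for dynamic power, P ∝ C·V²·f. The model itself is an assumption brought in for illustration and is not something AMD has published for Navi 10; it ignores leakage, the different number of active CUs on the two cards, and the fact that neither card sustains its peak voltage and clock under load. The function name `relative_dynamic_power` is hypothetical.

```python
# First-order CMOS dynamic power model: P is proportional to C * V^2 * f.
# ASSUMPTION: this is a textbook approximation used for illustration only.
# It ignores leakage power and the differing CU counts of the two cards.

def relative_dynamic_power(v_a, f_a, v_b, f_b):
    """Return the dynamic power of configuration A relative to B (P_a / P_b)."""
    return (v_a / v_b) ** 2 * (f_a / f_b)

# Driver-reported maximum voltages (V) and maximum boost clocks (MHz)
# taken from the tables in this article.
xt_volts, xt_mhz = 1.2, 2044            # Radeon RX 5700 XT
vanilla_volts, vanilla_mhz = 1.025, 1750  # Radeon RX 5700

# Effect of the voltage difference alone (clock held equal).
voltage_only = relative_dynamic_power(xt_volts, 1.0, vanilla_volts, 1.0)

# Effect of voltage and peak clock together.
voltage_and_clock = relative_dynamic_power(
    xt_volts, xt_mhz, vanilla_volts, vanilla_mhz
)

print(f"Voltage term alone: {voltage_only:.2f}x")
print(f"Voltage and clock:  {voltage_and_clock:.2f}x")
```

Under these assumptions the voltage term alone works out to roughly a 1.37x difference per unit of switched capacitance, which is why a 0.175v spread is more significant than it first looks.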

Radeon Video Card Average Clockspeeds (Rounded to the Nearest 10MHz)

| Game | 5700 XT | 5700 |
|---|---|---|
| Max Boost Clock | 2044MHz | 1750MHz |
| Official Game Clock | 1755MHz | 1625MHz |
| Tomb Raider | 1780MHz | 1680MHz |
| F1 2019 | 1800MHz | 1650MHz |
| Assassin's Creed | 1900MHz | 1700MHz |
| Metro Exodus | 1780MHz | 1640MHz |
| Strange Brigade | 1780MHz | 1660MHz |
| Total War: TK | 1830MHz | 1690MHz |
| The Division 2 | 1760MHz | 1630MHz |
| Grand Theft Auto V | 1910MHz | 1690MHz |
| Forza Horizon 4 | 1870MHz | 1700MHz |

Meanwhile clockspeeds are also an interesting story. AMD said that they would no longer be holding back their chips' top boost clocks, and instead let the silicon lottery run its course, allowing the best chips to reach their highest clockspeeds. The end result is that our 5700 XT is allowed to clock up to 2044MHz, 139MHz better than AMD's official Boost Clock metric guarantees. More to the point, this is a substantial jump in frequency over both AMD's RX Vega and RX 500 series cards, which would top out around the mid-1500s.

That said, the 5700 XT doesn't have the TDP or thermal cap to sustain this; I couldn't actually hit 2044MHz even in LuxMark, which as a "light" compute workload tends to bring out the highest clockspeeds in processors. Instead, the best clockspeed I was able to hit was a bit lower, at 2008MHz. So while the silicon is willing, the physics of powering a Navi 10 at such high clockspeeds are another matter.

At any rate, even with TDP and cooling keeping the 5700 XT more down to earth, the card is still able to hit high clockspeeds. More than half of the games in our benchmark suite average clockspeeds of 1800MHz or better, and a few get to 1900MHz. Even The Division 2, which appears to be the single most punishing game in this year's suite in terms of clockspeeds, holds the line at 1760MHz, right above AMD's official game clock.

As for the 5700, with its more conservative TDP, clockspeed specifications, and likely some binning, the card doesn't reach quite as high. Its 1750MHz max boost clock is just 25MHz over AMD's guaranteed clock. Meanwhile its clockspeeds are overall a bit more densely packed than the 5700 XT's; all of our games see average clockspeeds between 1630MHz and 1700MHz.
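For readers who want to check those summary figures against the table, the following is a small sketch that recomputes them. The numbers are copied from the clockspeed table above; the variable and function names (`avg_clocks`, `summarize`, and so on) are purely illustrative.

```python
# Average clockspeeds in MHz, copied from the table above
# (already rounded to the nearest 10MHz).
# Tuple order: (RX 5700 XT, RX 5700).
avg_clocks = {
    "Tomb Raider": (1780, 1680),
    "F1 2019": (1800, 1650),
    "Assassin's Creed": (1900, 1700),
    "Metro Exodus": (1780, 1640),
    "Strange Brigade": (1780, 1660),
    "Total War: TK": (1830, 1690),
    "The Division 2": (1760, 1630),
    "Grand Theft Auto V": (1910, 1690),
    "Forza Horizon 4": (1870, 1700),
}

# AMD's official game clocks in MHz, also from the table.
game_clock = {"5700 XT": 1755, "5700": 1625}


def summarize(column):
    """Return min, max, and spread (max - min) for one card's column."""
    values = [clocks[column] for clocks in avg_clocks.values()]
    return {
        "min": min(values),
        "max": max(values),
        "spread": max(values) - min(values),
    }


xt = summarize(0)
vanilla = summarize(1)

# Number of games where the 5700 XT averages 1800MHz or better.
xt_at_1800 = sum(1 for clocks in avg_clocks.values() if clocks[0] >= 1800)

# How far each card's lowest game average sits above its official game clock.
xt_margin = xt["min"] - game_clock["5700 XT"]
vanilla_margin = vanilla["min"] - game_clock["5700"]

print(f"5700 XT: {xt}, games at 1800MHz or better: {xt_at_1800} of {len(avg_clocks)}")
print(f"5700:    {vanilla}")
print(f"Lowest average vs. game clock: XT +{xt_margin}MHz, 5700 +{vanilla_margin}MHz")
```

The spread values make the "more densely packed" observation concrete: the 5700's game averages span a much narrower range than the 5700 XT's.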

135 Comments


bananaforscale - Sunday, July 7, 2019 - link

I expected to be buying a 2070 Super next, but now I'm absolutely waiting for third party cards on both sides. Didn't expect 5700XT to have a lower power draw than 2070S under full load either.

Skiddywinks - Sunday, July 7, 2019 - link

It's the slightly slower than a 2070S but 20% better perf/cost that's got me.

Will have to see how those numbers stack up with the third party cards. But the XT is looking better than I expected.

Kevin G - Sunday, July 7, 2019 - link

Given the pricing structure, AMD was initially targeting the RTX 2070 performance for the RX 5700 and the result points to a victory in that comparison. The gotcha is that nVidia went Super and AMD has pre-emptively dropped prices. The result is that what was the performance at $599 nine months ago from nVidia can now be had for $399 from AMD. Street pricing over time should be more interesting as AMD has more room to move downward while boosting performance over time with some driver updates. Due to the restructuring of nVidia's line up, the RX 5700XT isn't a clear win but certainly not a wrong choice to make.

Huh, I wonder what feature set AMD has for HDMI for it not to work at boot on your workbench. Makes me wonder what would happen with this card in a 2010 Mac Pro which exhibits similar display oddities with 3rd party GPUs at boot.

Drivers do need some polish looking at the benchmark data. Strange Brigade's 99th percentile numbers are very similar at 1440p and 1080p where the averages are more divergent. Chances are that there is a hiccup there that might be able to be flattened out. Similarly the synthetic numbers are troublesome and point to a driver issue: the buffer compression figures indicate, well, that there is buffer compression going on. Vega on the other hand has a small amount going on which helps. I'd be really curious if compression data from real games can be extracted to fully isolate it to drivers. Also how well do Vulkan games run? I know it isn't part of the normal test bench, but is there any cursory data on this API support with the launch drivers?

imaheadcase - Sunday, July 7, 2019 - link

AMD has never been known for good drivers, or slow to fix stuff. I doubt they have more room to move downward, they pretty much knew the pricing of cards at release and made it seem like a price drop.

For a brand new "cheap" pc build these be ok cards, but if already got a decent nvidia gpu no reason at all to upgrade to it, considering the time it takes AMD to catch up in hardware, they always fall back behind for years.

just4U - Sunday, July 7, 2019 - link

Wait... what? I am sitting on last gen 1080s(sli) and Vega's(cf) and while I don't see any card out there I want besides the Vega VII currently.. to suggest these would only be good in a "cheap" build is nonsense.

mapesdhs - Sunday, July 7, 2019 - link

imaheadcase, you're out of date re drivers, AMD has more reliable drivers these days, and (it seems) generally better image quality, hence why Google chose AMD over NVIDIA for Stadia. There's always an exception of course, Radeon VII's launch drivers were awful, that card was launched two weeks too early.

haukionkannel - Monday, July 8, 2019 - link

True... AMD drivers have been better than Nvidia drivers for some time and that is a small miracle considering how big a programming team Nvidia has... wonder what they have been doing lately? Optimising RT performance?

tamalero - Monday, July 8, 2019 - link

The usual, gaining exclusives by optimizing the games FOR their hardware first.

zodiacfml - Sunday, July 7, 2019 - link

I guess the choice of games matter.

Korguz - Sunday, July 7, 2019 - link

yep.. and i dont play any of the games tested.. so.. to attempt to choose a new vid card.. will be interesting....