The AMD Radeon RX 5700 XT & RX 5700 Review: Navi Renews Competition in the Midrange Market

by Ryan Smith on July 7, 2019 12:00 PM EST

Power, Temperatures, & Noise

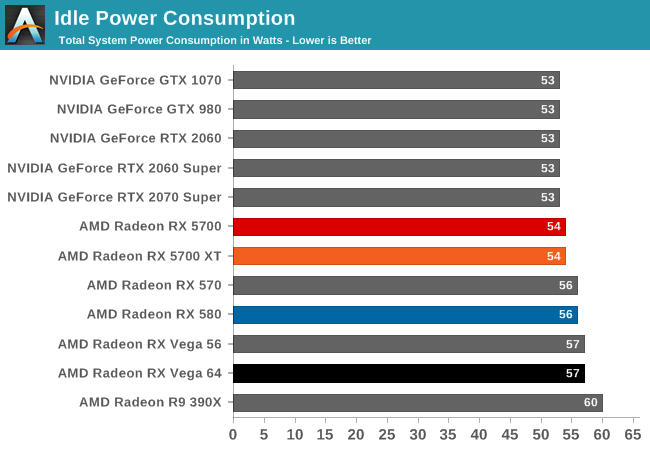

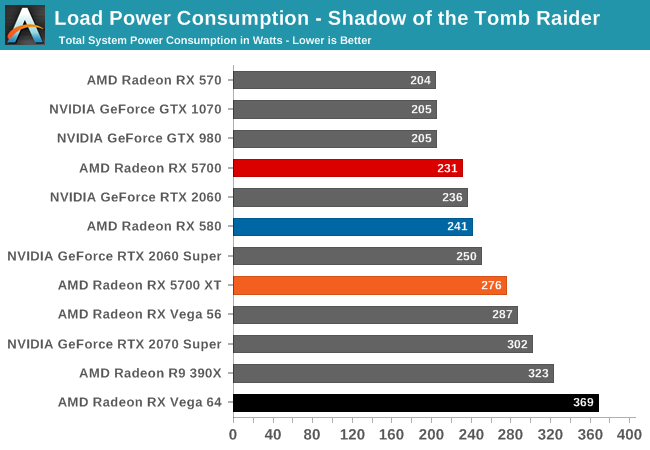

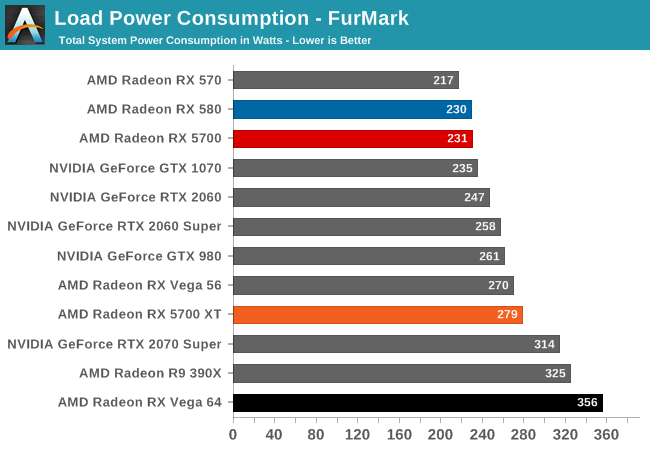

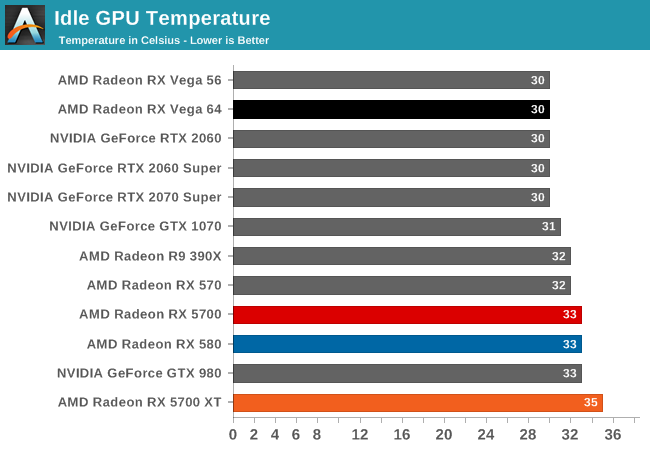

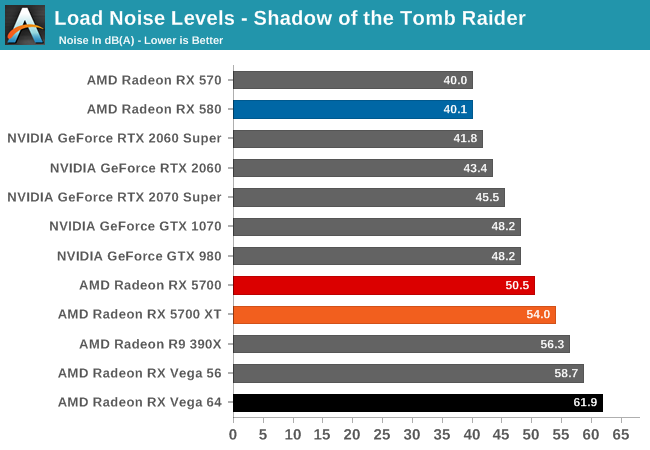

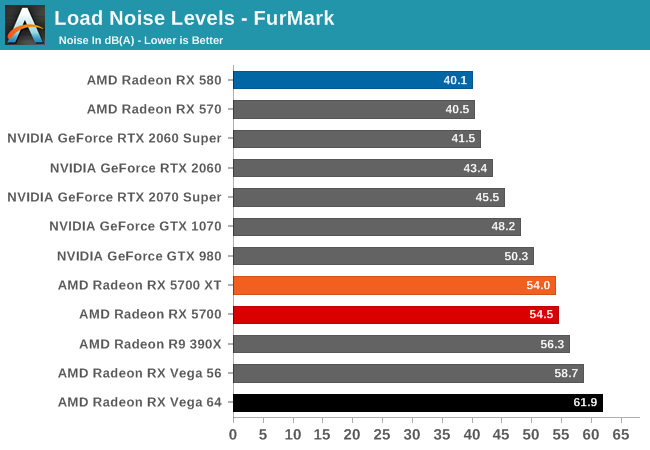

Last, but not least of course, is our look at power, temperatures, and noise levels. While a high performing card is good in its own right, an excellent card can deliver great performance while also keeping power consumption and the resulting noise levels in check.

Radeon Video Card Voltages

| 5700 XT Max | 5700 Max | 5700 XT Idle | 5700 Idle |
| --- | --- | --- | --- |
| 1.2v | 1.025v | 0.725v | 0.775v |

Looking at boost voltages for AMD's new midrange 7nm cards, we don't have too many points of comparison right now. But still, with AMD's drivers reporting a maximum boost voltage of 1.2v for the 5700 XT, not even the incredibly juiced Polaris 30-based Radeon RX 590 took quite so much voltage. It may very well be that TSMC's high-performance 7nm process simply requires a lot of voltage here, but it may also be a sign that AMD is riding the voltage/frequency curve pretty hard to get those high clockspeeds.

By contrast, the 5700 (vanilla) is a much more mundane card. With its lower clockspeeds, the card never goes above 1.025v according to AMD's drivers. Given the impact of voltage on power consumption, it's actually a bit surprising that the spread between the two cards is so large.
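To put that voltage spread in perspective, here is a back-of-the-envelope sketch. It assumes the textbook CMOS approximation that dynamic power scales with V² × f, which is a general rule of thumb and not a model AMD has published for Navi 10. It also ignores leakage, the two cards' different CU counts, and the fact that neither card holds its maximum clock under load. The function name is purely illustrative.

```python
# Rough relative dynamic power under the ASSUMED textbook model P ~ C * V^2 * f.
# Capacitance is treated as equal for both cards, which is a simplification.

def relative_dynamic_power(v_a, f_a, v_b, f_b):
    """Return P_a / P_b assuming P is proportional to V^2 * f."""
    return (v_a / v_b) ** 2 * (f_a / f_b)

# Driver-reported maximum voltages (V) and max boost clocks (MHz) from this review.
xt_v, xt_mhz = 1.2, 2044
vanilla_v, vanilla_mhz = 1.025, 1750

# Voltage term on its own (clock ratio held at 1).
voltage_only = relative_dynamic_power(xt_v, 1.0, vanilla_v, 1.0)
# Voltage and peak clock together.
combined = relative_dynamic_power(xt_v, xt_mhz, vanilla_v, vanilla_mhz)

print(f"voltage term alone: {voltage_only:.2f}x")
print(f"voltage and clock:  {combined:.2f}x")
```

Under those assumptions the voltage term alone works out to roughly 1.37x, and about 1.6x once the peak clocks are included. Treat it as an illustration of why voltage matters so much, not as a measurement.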

Radeon Video Card Average Clockspeeds (Rounded to the Nearest 10MHz)

| Game | 5700 XT | 5700 |
| --- | --- | --- |
| Max Boost Clock | 2044MHz | 1750MHz |
| Official Game Clock | 1755MHz | 1625MHz |
| Tomb Raider | 1780MHz | 1680MHz |
| F1 2019 | 1800MHz | 1650MHz |
| Assassin's Creed | 1900MHz | 1700MHz |
| Metro Exodus | 1780MHz | 1640MHz |
| Strange Brigade | 1780MHz | 1660MHz |
| Total War: TK | 1830MHz | 1690MHz |
| The Division 2 | 1760MHz | 1630MHz |
| Grand Theft Auto V | 1910MHz | 1690MHz |
| Forza Horizon 4 | 1870MHz | 1700MHz |

Meanwhile, clockspeeds are also an interesting story. AMD said that they would no longer be holding back their chips' top boost clocks, and would instead let the silicon lottery run its course, allowing the best chips to reach their highest clockspeeds. The end result is that our 5700 XT is allowed to clock up to 2044MHz, 139MHz better than AMD's official Boost Clock metric guarantees. More to the point, this is a substantial jump in frequency over both AMD's RX Vega and RX 500 series cards, which would top out around the mid-1500s.

That said, the 5700 XT doesn't have the TDP or thermal cap to sustain this; I couldn't actually hit 2044MHz even in LuxMark, which as a "light" compute workload tends to bring out the highest clockspeeds in processors. Instead, the best clockspeed I was able to hit was a bit lower, at 2008MHz. So while the silicon is willing, the physics of powering a Navi 10 at such high clockspeeds are another matter.

At any rate, even with TDP and cooling keeping the 5700 XT more down to earth, the card is still able to hit high clockspeeds. More than half of the games in our benchmark suite average clockspeeds of 1800MHz or better, and a couple get to 1900MHz. Even The Division 2, which appears to be the single most punishing game in this year's suite in terms of clockspeeds, holds the line at 1760MHz, right above AMD's official game clock.

As for the 5700, with its more conservative TDP, clockspeed specifications, and likely some binning, the card doesn't reach quite as high. Its 1750MHz max boost clock is just 25MHz over AMD's guaranteed clock. Meanwhile its clockspeeds are overall a bit more densely packed than the 5700 XT's; all of our games see average clockspeeds between 1630MHz and 1700MHz.
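The summary figures in the last two paragraphs can be re-derived from the per-game averages in the clockspeed table. The snippet below is a minimal sketch of that arithmetic; the variable names are illustrative, and the inputs are the already-rounded table values, so the results inherit that 10MHz rounding.

```python
# Average clockspeeds in MHz, copied from the review's table: (5700 XT, 5700).
avg_clocks = {
    "Tomb Raider": (1780, 1680),
    "F1 2019": (1800, 1650),
    "Assassin's Creed": (1900, 1700),
    "Metro Exodus": (1780, 1640),
    "Strange Brigade": (1780, 1660),
    "Total War: TK": (1830, 1690),
    "The Division 2": (1760, 1630),
    "Grand Theft Auto V": (1910, 1690),
    "Forza Horizon 4": (1870, 1700),
}
XT_GAME_CLOCK = 1755  # AMD's official game clock for the 5700 XT, per the table

xt = {game: clocks[0] for game, clocks in avg_clocks.items()}
vanilla = {game: clocks[1] for game, clocks in avg_clocks.items()}

# How many games average 1800MHz or better on the 5700 XT?
xt_at_1800 = sum(1 for mhz in xt.values() if mhz >= 1800)

# Which game pulls the 5700 XT's clocks down the furthest?
slowest_game = min(xt, key=xt.get)
margin_over_game_clock = xt[slowest_game] - XT_GAME_CLOCK

# Spread between the highest and lowest per-game average on each card.
xt_spread = max(xt.values()) - min(xt.values())
vanilla_spread = max(vanilla.values()) - min(vanilla.values())

print(f"{xt_at_1800} of {len(xt)} games at 1800MHz or better on the 5700 XT")
print(f"Lowest: {slowest_game}, {margin_over_game_clock}MHz over game clock")
print(f"Spread: 5700 XT {xt_spread}MHz, 5700 {vanilla_spread}MHz")
```

The spread comparison is what "more densely packed" refers to: the 5700's per-game averages sit in a much narrower band than the 5700 XT's.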

135 Comments

rUmX - Sunday, July 7, 2019 - link

It's no longer the same architecture. RDNA vs GCN. The fact that a 36 CU (5700) consistently beats Vega 56 (56 CU) shows the design changes. Sure part of it is clock speeds and having 64 ROPs but still Navi is much more efficient than GCN, and it's doing it with much less shaders. Imagine a bigger Navi can match it exceed the 2080 TI.

Kevin G - Sunday, July 7, 2019 - link

Not all those transistors are for the improved CUs either. There is a new memory controller to support GDDR6, new video codec engine and some spent on the new display controller to support DSC for 4K120 on DP 1.4.

peevee - Thursday, July 11, 2019 - link

Why would GDDR6 need substantially more transistors than GDDR5? Video codec seems more or less the same also.

Looks like there are some hidden features not enabled yet, hard to explain that increase in transistors per stream processor (not CU) otherwise (CUs are just twice as wide).

Meteor2 - Monday, July 8, 2019 - link

I was wondering the same thing.

JasonMZW20 - Tuesday, July 16, 2019 - link

Because it's now a VLIW2 architecture via RDNA. Each CU is actually a dual-CU set (2x32SPs, 64 SPs total) and is paired with another dual-CU to form a workgroup processor (4x32) or 128 SPs. Tons of cache has been added and rearranged. This requires space and extra logic.

Geometry engines (via Primitive Units) are fully programmable and are no longer fixed function. This also requires extra logic. Rasterizer, ROPs, and Primitive Unit with 128KB L1 cache are closely tied together.

Navi definitely replaces both Polaris and Vega 10/20 for gaming, so average out Polaris 30 (5.7B) and Vega 10 (12.5B) transistor amounts and you'll be somewhere near Navi 10. Vega 20 is still great at compute tasks, so I don't see it being phased out in professional markets soon.

Cooe - Tuesday, March 23, 2021 - link

... RDNA is NOT VLIW (like Terascale) ANYTHING. It's still exclusively a scalar SIMD architecture like GCN.

tipoo - Sunday, July 7, 2019 - link

Do those last (at least two) Beyond3D tests look a little suspect to anyone? Multiple AMD generations all clustering around 1.0, almost looks like a driver cap.

rUmX - Sunday, July 7, 2019 - link

Ugh no edit... I meant "Big Navi can match or exceed 2080 TI".

Kevin G - Sunday, July 7, 2019 - link

Looking at the generations it doesn't surprise me about the RX 580 but it is odd to see the RX 5700 there, especially when Vega is higher. An extra 20% of bandwidth for the RX 5700 via compression would go a long way at 4K resolutions.

Ryan Smith - Sunday, July 7, 2019 - link

It's a weird situation. The default I use for the test is to try to saturate the cards with several textures; however AMD's cards do better with just 1-2 textures. I'll have a longer explanation once I get caught up on writing.

From my notes: (in GB/sec, random/black)

UINT8 1 Tex: 333/472

FP32 1 Tex: 445/469

UINT8 6 Tex: 389/406

FP32 6 Tex: 406/406