The AMD Radeon RX 5700 XT & RX 5700 Review: Navi Renews Competition in the Midrange Market

by Ryan Smith on July 7, 2019 12:00 PM EST

Power, Temperatures, & Noise

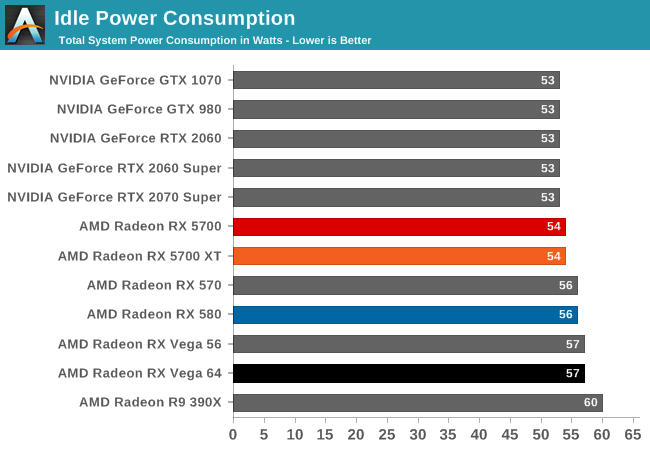

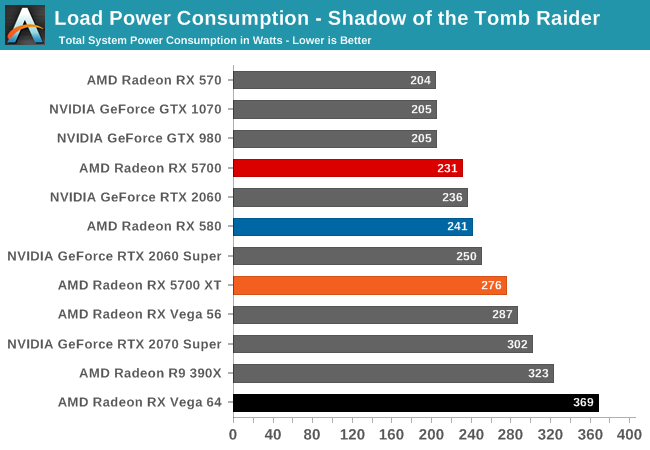

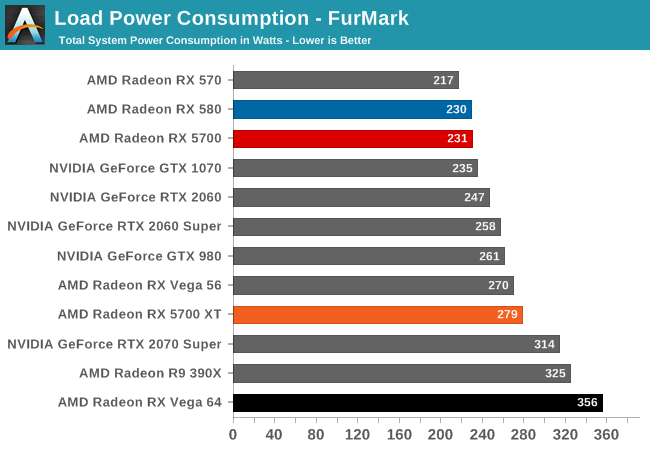

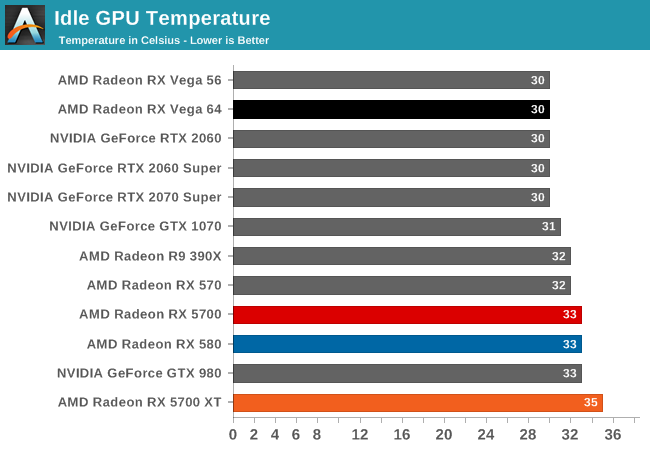

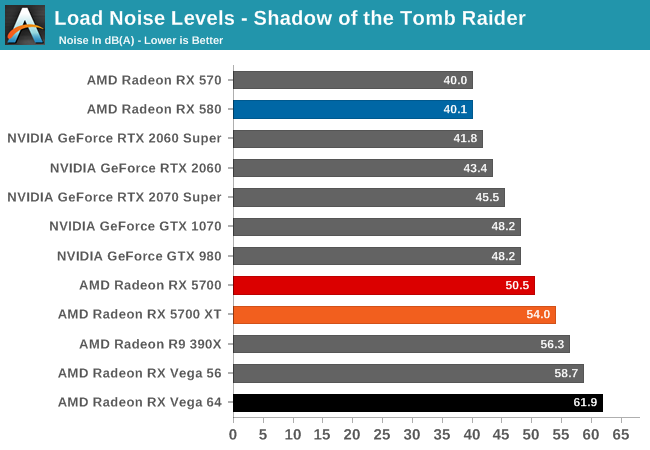

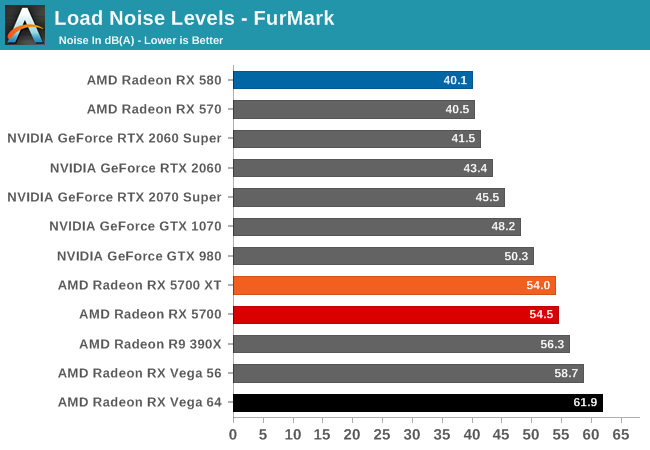

Last, but not least of course, is our look at power, temperatures, and noise levels. While a high-performing card is good in its own right, an excellent card can deliver great performance while also keeping power consumption and the resulting noise levels in check.

Radeon Video Card Voltages

| 5700 XT Max | 5700 Max | 5700 XT Idle | 5700 Idle |
|---|---|---|---|
| 1.2V | 1.025V | 0.725V | 0.775V |

Looking at boost voltages for AMD's new midrange 7nm cards, we don't have too many points of comparison right now. But still, with AMD's drivers reporting a maximum boost voltage of 1.2V for the 5700 XT, not even the incredibly juiced Polaris 30-based Radeon RX 590 took quite so much voltage. It may very well be that TSMC's high-performance 7nm process simply requires a lot of voltage here, but it may also be a sign that AMD is riding the voltage/frequency curve pretty hard to get those high clockspeeds.

By contrast, the 5700 (vanilla) is a much more mundane card. With its lower clockspeeds, the card never goes above 1.025V according to AMD's drivers. Given the impact of voltage on power consumption, it's actually a bit surprising that the voltage spread between the two cards is so large.
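To put that voltage gap in perspective, here is a back-of-the-envelope sketch using the textbook CMOS dynamic-power approximation P ∝ C·V²·f. It assumes both cards have the same effective switched capacitance C and compares only their peak operating points; it ignores leakage, memory, and board losses, so treat it as an illustration of why the spread is notable, not as a power measurement. The helper name `relative_dynamic_power` is ours, not AMD's.

```python
# Back-of-the-envelope dynamic power comparison between the two Navi 10 cards.
# Assumption: dynamic power scales as P ~ C * V^2 * f, with the SAME effective
# switched capacitance C for both cards. Leakage, memory and VRM losses are ignored.

def relative_dynamic_power(v_a: float, f_a: float, v_b: float, f_b: float) -> float:
    """Return P_a / P_b under P ~ V^2 * f, assuming equal capacitance."""
    return (v_a / v_b) ** 2 * (f_a / f_b)

# Peak voltages reported by AMD's drivers (volts) and max boost clocks (MHz).
XT_V, XT_F = 1.2, 2044
VANILLA_V, VANILLA_F = 1.025, 1750

# Contribution of voltage alone, then voltage combined with frequency.
voltage_term = (XT_V / VANILLA_V) ** 2
ratio = relative_dynamic_power(XT_V, XT_F, VANILLA_V, VANILLA_F)

print(f"V^2 term alone:   {voltage_term:.2f}x")
print(f"V^2 * f estimate: {ratio:.2f}x")
```

With the driver-reported peaks, the voltage term alone works out to about 1.37x, and folding in the max boost clocks brings the estimate to roughly 1.6x. Since the RX 5700 also has fewer active CUs (36 versus 40), its real C is somewhat lower, which would widen this gap rather than narrow it.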

Radeon Video Card Average Clockspeeds (Rounded to the Nearest 10MHz)

| Game | 5700 XT | 5700 |
|---|---|---|
| Max Boost Clock | 2044MHz | 1750MHz |
| Official Game Clock | 1755MHz | 1625MHz |
| Tomb Raider | 1780MHz | 1680MHz |
| F1 2019 | 1800MHz | 1650MHz |
| Assassin's Creed | 1900MHz | 1700MHz |
| Metro Exodus | 1780MHz | 1640MHz |
| Strange Brigade | 1780MHz | 1660MHz |
| Total War: TK | 1830MHz | 1690MHz |
| The Division 2 | 1760MHz | 1630MHz |
| Grand Theft Auto V | 1910MHz | 1690MHz |
| Forza Horizon 4 | 1870MHz | 1700MHz |

Meanwhile, clockspeeds are also an interesting story. AMD said that they would no longer be holding back their chips' top boost clocks, and would instead let the silicon lottery run its course, allowing the best chips to reach their highest clockspeeds. The end result is that our 5700 XT is allowed to clock up to 2044MHz, 139MHz above the official Boost Clock that AMD guarantees. More to the point, this is a substantial jump in frequency over both AMD's RX Vega and RX 500 series cards, which would top out in the mid-1500MHz range.

That said, the 5700 XT doesn't have the TDP or thermal headroom to sustain this; I couldn't actually hit 2044MHz even in LuxMark, which as a "light" compute workload tends to bring out the highest clockspeeds in processors. Instead, the best clockspeed I was able to hit was a bit lower, at 2008MHz. So while the silicon is willing, the physics of powering a Navi 10 at such high clockspeeds are another matter.
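The boost-clock arithmetic above can be checked directly. This sketch derives the implied official Boost Clock from the review's own "139MHz" figure and compares it against the best clock actually observed in LuxMark; the variable names are illustrative only.

```python
# Boost-clock headroom for our 5700 XT sample, using only figures from the text.
XT_MAX_BOOST = 2044                       # MHz, highest boost the card is allowed to request
XT_OFFICIAL_BOOST = XT_MAX_BOOST - 139    # MHz, implied official Boost Clock (139MHz lower)
XT_LUXMARK_PEAK = 2008                    # MHz, best clock actually observed (LuxMark)

# How far the observed peak falls short of the allowed maximum.
shortfall = XT_MAX_BOOST - XT_LUXMARK_PEAK
pct_of_max = 100 * XT_LUXMARK_PEAK / XT_MAX_BOOST

# How far the observed peak still sits above AMD's guaranteed Boost Clock.
over_official = XT_LUXMARK_PEAK - XT_OFFICIAL_BOOST

print(f"Implied official Boost Clock: {XT_OFFICIAL_BOOST}MHz")
print(f"Observed peak: {shortfall}MHz short of max ({pct_of_max:.1f}% of max boost)")
print(f"Observed peak vs. official Boost Clock: +{over_official}MHz")
```

So even the best case falls 36MHz (about 1.8%) short of the allowed maximum, though it still lands 103MHz above the official Boost Clock.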

At any rate, even with TDP and cooling keeping the 5700 XT more down to earth, the card is still able to hit high clockspeeds. Five of the nine games in our benchmark suite average 1800MHz or better, and two reach 1900MHz or more. Even The Division 2, which appears to be the single most punishing game in this year's suite in terms of clockspeeds, holds the line at 1760MHz, just above AMD's official 1755MHz Game Clock.

As for the 5700, with its more conservative TDP, clockspeed specifications, and likely some binning, the card doesn't reach quite as high. Its 1750MHz max boost clock is just 25MHz over AMD's guaranteed Boost Clock. Meanwhile, its clockspeeds are overall a bit more densely packed than the 5700 XT's; all of our games see average clockspeeds between 1630MHz and 1700MHz.
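To make the per-game comparison concrete, the sketch below tabulates the average clockspeeds from the table above and summarizes each card. `summarize` is a hypothetical helper written for this illustration, and because the table values are rounded to the nearest 10MHz, the computed means and margins inherit that ±5MHz uncertainty.

```python
# Per-game average clockspeeds from the table (MHz, rounded to 10MHz): (5700 XT, 5700).
CLOCKS = {
    "Tomb Raider":        (1780, 1680),
    "F1 2019":            (1800, 1650),
    "Assassin's Creed":   (1900, 1700),
    "Metro Exodus":       (1780, 1640),
    "Strange Brigade":    (1780, 1660),
    "Total War: TK":      (1830, 1690),
    "The Division 2":     (1760, 1630),
    "Grand Theft Auto V": (1910, 1690),
    "Forza Horizon 4":    (1870, 1700),
}

# Official Game Clocks from the same table, in MHz.
GAME_CLOCK = {"5700 XT": 1755, "5700": 1625}

def summarize(card_index: int, card: str) -> dict:
    """Summarize one card's per-game clocks (hypothetical helper for illustration)."""
    clocks = [pair[card_index] for pair in CLOCKS.values()]
    return {
        "min": min(clocks),
        "max": max(clocks),
        "mean": sum(clocks) / len(clocks),
        "spread": max(clocks) - min(clocks),
        # Counting booleans: True adds 1, False adds 0.
        "at_or_above_1800": sum(c >= 1800 for c in clocks),
        "at_or_above_1900": sum(c >= 1900 for c in clocks),
        # Worst-case game relative to the official Game Clock.
        "min_margin_over_game_clock": min(clocks) - GAME_CLOCK[card],
    }

for idx, card in enumerate(("5700 XT", "5700")):
    print(card, summarize(idx, card))
```

The 5700 XT's per-game averages span 150MHz (1760MHz to 1910MHz) versus just 70MHz for the 5700, which is the "more densely packed" behavior described above. Both cards' worst game lands only 5MHz above its official Game Clock, which is within the table's rounding.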

135 Comments


LoneWolf15 - Thursday, July 11, 2019

Or we do it because technology really stagnated up until this year. Until Coffee Lake-R and Ryzen 3xxx (and the significant DDR4 price drop), I couldn't justify replacing a 4790K with 32GB of DDR3 and a good board.

just4U - Monday, July 8, 2019

Oh well hell.. here, let me help you then.. the RX480 was only slightly slower than the RX580.. although the 380 was substantially slower than the 480 and a bit of a power sucker... If you're sitting on 300 series cards (or shoot, 700 series Nvidia) then anything today is a substantial upgrade across the board... this should be fairly easy to see.

yankeeDDL - Tuesday, July 9, 2019

It was a general statement (at least, mine was). So much has changed since the 300 and 400 series were tested (driver changes) that there's simply no correct information today. Even more so on the CPU side, with all the mitigations that came into play and the new scheduler in Windows 10 1903. My personal opinion is that a "quick" review when the embargo drops, showing the changes over the previous gen, is great. But 1 or 2 weeks later, the data could be extended to cover previous generations and give a very clear, up-to-date picture. I think it would be very helpful.

just4U - Wednesday, July 10, 2019

@YankeeDDL, I try to look at the Bench when gauging overall performance of older cards: find a reference with something newer and then calculate from that based on a current review. That doesn't always tell the whole tale, though. The 390X is listed in this review, yet I struggled with a 390 at the tail end of 2017 on new games. Video lag was getting the better of me on a fast system. So it's a mixed bag; all you can do is gauge how it compares.

artk2219 - Tuesday, July 9, 2019

Also I'd argue that if you're sitting on a 390X, you compare very well against an RX 580, like within 5%. So the minimum you should be looking at for an upgrade would be a 1070 Ti/2060/Vega 56/RX 5700. But if you only game at 1080p, that card still has some time left in it.

Ryan Smith - Monday, July 8, 2019

You are absolutely not forgotten. It's why a 390X was included. That was a $400 card on launch (and got cheaper pretty quickly), making it a good comparison point to the $400 5700 XT.

Meteor2 - Monday, July 8, 2019

Thank you Ryan

IUU - Thursday, August 1, 2019

I feel you. Just an "irrelevant note". Upgrading to a midrange GPU that delivers between 5 and 8 teraflops of single-precision performance costs 350 if cheap, 500 if expensive. To get flagship performance in cellphones you need to cash out about 350 if cheap and 1000+ if expensive, for "overwhelming" GPU performance of between half a teraflop and 1 teraflop. Just to set things straight. Hardcore computer users who are price conscious, and maybe environmentally conscious, are stuck on the desktop and think twice, after full investigation, about what to spend. Holiday goers who have never heard of a GPU or GFLOPS are happily cashing out for computationally inferior products without any deep thought, thus strengthening the manufacture of products that do not contribute substantially to increasing home users' computing capacity. Food for thought.

0ldman79 - Sunday, July 7, 2019

Awesome. Any info you can give us on hardware encoding/decoding is appreciated.

Half of the use of my Nvidia cards is NVENC. I recently tried my wife's laptop (A6-6320 I believe) and was pleasantly surprised by its encoding capabilities. I might take a look at AMD again, but I need info before a purchase. Hardware encoding capabilities are mostly overlooked outside of streaming games online.