The AMD Radeon RX 5700 XT & RX 5700 Review: Navi Renews Competition in the Midrange Market

by Ryan Smith on July 7, 2019 12:00 PM EST

Compute

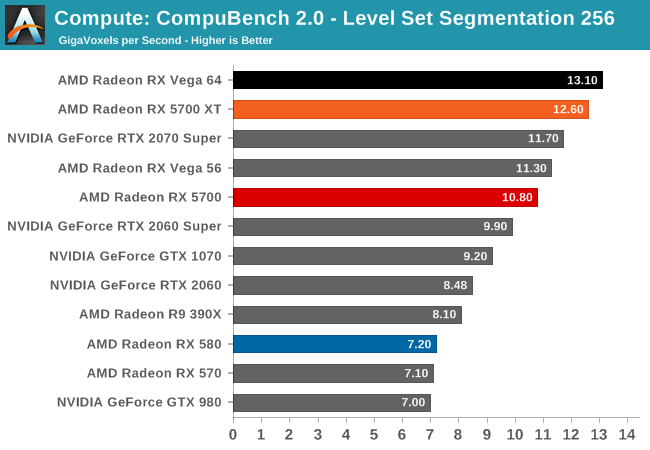

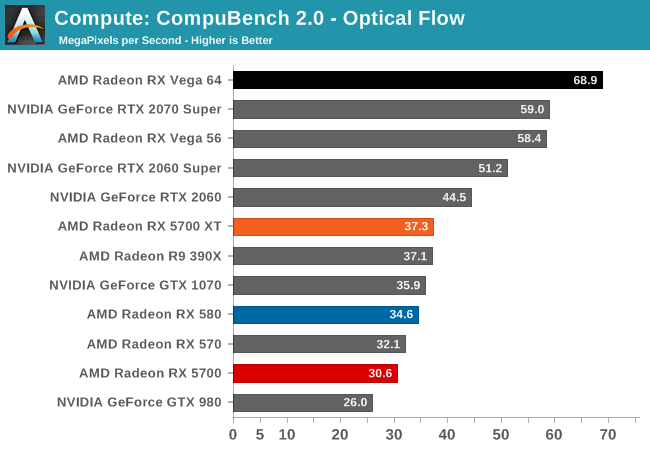

Unfortunately, as I mentioned earlier in my testing observations, the state of AMD's OpenCL driver stack at launch is quite poor. Most of our compute benchmarks either failed to have their OpenCL kernels compile, triggered a Windows Timeout Detection and Recovery (TDR), or would just crash. As a result, only three of our regular benchmarks were executable here, with Folding@Home, parts of CompuBench, and Blender all getting whammied.

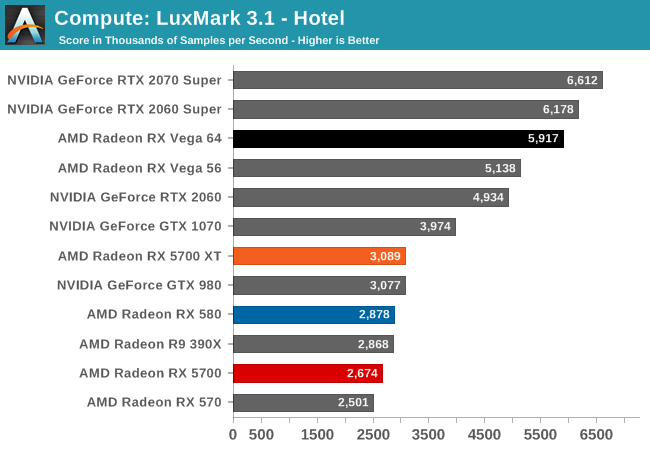

And "executable" is the choice word here, because even though benchmarks like LuxMark would run, the scores the RX 5700 cards generated were scarcely better than those of the Radeon RX 580. This is a part that they can easily beat on raw FLOPs, let alone efficiency. So even when a benchmark runs, AMD's OpenCL drivers are at a point where the results are likely not indicative of anything about Navi or the RDNA architecture, only that AMD has a lot of work left to do on its compiler.
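To put the "raw FLOPs" remark in numbers, here is a rough sketch of the usual peak-throughput estimate. The stream processor counts and boost clocks are taken from AMD's published specifications rather than from this page, and the function name is purely illustrative. The estimate assumes one fused multiply-add (two floating point operations) per stream processor per clock at the full boost clock, which real workloads rarely sustain.

```python
def peak_fp32_tflops(stream_processors: int, boost_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes one FMA (counted as 2 FLOPs) per stream processor per clock.
    """
    flops = 2 * stream_processors * boost_mhz * 1e6
    return flops / 1e12


# Assumed specs (AMD public spec sheets, not from this article):
# (stream processors, boost clock in MHz)
cards = {
    "Radeon RX 5700 XT": (2560, 1905),
    "Radeon RX 5700": (2304, 1725),
    "Radeon RX 580": (2304, 1340),
}

for name, (sps, mhz) in cards.items():
    print(f"{name}: {peak_fp32_tflops(sps, mhz):.2f} TFLOPS")
```

On paper this gives the RX 5700 XT roughly 1.6 times, and the RX 5700 roughly 1.3 times, the peak FP32 throughput of the RX 580, which is why near-identical LuxMark scores point to the software stack rather than the hardware.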

That said, it also serves to highlight the current state of OpenCL overall. In short, OpenCL doesn't have any good champions right now. Creator Apple is now well entrenched in its own proprietary Metal ecosystem, NVIDIA favors CUDA for obvious reasons, and even AMD's GPU compute efforts are more focused on the Linux-exclusive ROCm platform, since this is what drives their Radeon Instinct sales. As a result, the overall state of GPU computing on the Windows desktop is in a precarious place, and at this rate I wouldn't be entirely surprised if future development is centered around compute shaders instead.

135 Comments

LoneWolf15 - Thursday, July 11, 2019 - link

Or we do it because technology really stagnated up until this year. Until Coffee Lake-R and Ryzen 3xxx (and the significant DDR4 price drop) I couldn't justify replacing a 4790K with 32GB of DDR3 and a good board.

just4U - Monday, July 8, 2019 - link

Oh well hell.. here let me help you then.. the RX480 was only slightly slower than the RX580.. although the 380 was substantially slower than the 480 and a bit of a power sucker... If you're sitting on 300 series cards (or shoot 700 series Nvidia) then anything today is a substantial upgrade across the board... this should be fairly easy to see.

yankeeDDL - Tuesday, July 9, 2019 - link

It was a general statement (at least, mine was). So much has changed since the 300 and 400 series were tested (driver changes) that there's simply no correct information today. Even more so on the CPU side, with all the mitigations that came into play and with the new scheduler in Windows 1903. My personal opinion is that a "quick" review when the embargo drops, showing the changes over the previous gen, is great. But 1 or 2 weeks later, the data could be extended to cover previous generations and give a very clear, up to date picture. I think it would be very helpful.

just4U - Wednesday, July 10, 2019 - link

@YankeeDDL, I try to look at the Bench when gauging overall performance of older cards, find a reference with something newer and then calculate that based on a current review. That doesn't always tell the whole tale though. The 390X is listed in this review, yet I struggled with a 390 at the tail end of 2017 on new games. Video lag was getting the better of me on a fast system. So it's a mixed bag, all you can do is gauge how it is in comparison..

artk2219 - Tuesday, July 9, 2019 - link

Also I'd argue that if you're sitting on a 390x you compare very well against an RX580, like within 5%. So the minimum you should be looking at for an upgrade would be a 1070 ti\2060\Vega 56\RX 5700. But if you only game at 1080p, that card still has some time left in it.

Ryan Smith - Monday, July 8, 2019 - link

You are absolutely not forgotten. It's why a 390X was included. That was a $400 card on launch (and got cheaper pretty quickly), making it a good comparison point to the $400 5700XT.

Meteor2 - Monday, July 8, 2019 - link

Thank you Ryan

IUU - Thursday, August 1, 2019 - link

I feel you. Just an "irrelevant note". The upgrade of a midrange gpu which delivers between 5 and 8 teraflops single precision costs 350 if cheap, 500 if expensive. To get flagship performance in cellphones you need to cash out about 350 if cheap and 1000+ if expensive, for the "overwhelming" gpu performance of between half and 1 teraflops. Just to set things straight. Hardcore computer users who are price conscious, and maybe environmentally conscious, are stuck on desktop and think twice, after full investigation, about what to spend. Holiday goers who haven't ever heard of a gpu or gflops are happily cashing out for computationally inferior products without any deep thought, thus strengthening the manufacture of products that do not contribute substantially to the increase of home users' computing capacity. Food for thought.

russz456 - Monday, July 29, 2019 - link

I'm just now starting to look hard at the newer cards. Direct comparisons would be appreciated, however, direct comparisons are already found on the Bench. https://thegreatsaver.com/lennyssurvey-lennys-subs...

0ldman79 - Sunday, July 7, 2019 - link

Awesome. Any info you can give us on hardware encoding/decoding is appreciated.

Half of the use of my Nvidia cards is NVENC. I recently tried my wife's laptop (A6-6320 I believe) and was pleasantly surprised with its encoding capabilities. Might take a look at AMD again, but I need info before a purchase. The hardware encoding capabilities are mostly overlooked outside of streaming games online.