AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST

Posted in:

- CPUs

- AMD

- Ryzen

- EPYC

- Infinity Fabric

- PCIe 4.0

- Zen 2

- Rome

- Ryzen 3000

- Ryzen 3rd Gen

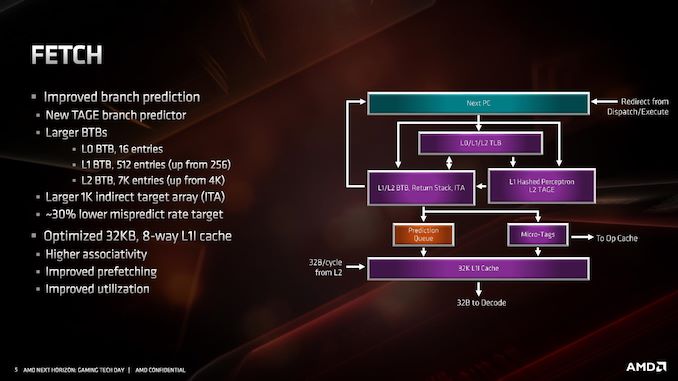

Fetch/Prefetch

We start with the front end of the processor: the prefetchers and branch predictors.

AMD’s primary advertised improvement here is the use of a TAGE predictor, although it is only used for non-L1 fetches. This might not sound too impressive: AMD is still using a hashed perceptron prefetch engine for L1 fetches, which will cover as many fetches as possible. However, the TAGE L2 branch predictor uses additional tagging to enable longer branch histories for better prediction pathways. This becomes more important for L2 prefetches and beyond, while the hashed perceptron is preferred for short prefetches in the L1 on power grounds.
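As a rough illustration of what a hashed perceptron predictor does, here is a minimal textbook-style sketch. It is not AMD's design: the table count, table size, history segment length, hash constant, and training threshold are all assumptions chosen for readability. Several small weight tables are indexed by hashes of the branch address and slices of the global history, the selected weights are summed, and the sign of the sum is the prediction.

```python
class HashedPerceptron:
    """Toy hashed perceptron branch predictor; every size here is an assumption."""

    def __init__(self, num_tables=4, table_bits=8, seg_len=4, threshold=12, wmax=31):
        self.num_tables = num_tables
        self.index_mask = (1 << table_bits) - 1
        self.seg_len = seg_len
        self.seg_mask = (1 << seg_len) - 1
        self.threshold = threshold  # keep training while |sum| is at or below this
        self.wmax = wmax            # weights saturate in [-wmax - 1, wmax]
        self.tables = [[0] * (1 << table_bits) for _ in range(num_tables)]
        self.history = 0            # global history bits, newest outcome in bit 0
        self.history_mask = (1 << (seg_len * (num_tables - 1))) - 1

    def _indices(self, pc):
        # Table 0 is indexed by the branch address alone; table t > 0 also
        # mixes in the t-th slice of global history (hypothetical hash).
        indices = []
        for t in range(self.num_tables):
            if t == 0:
                seg = 0
            else:
                seg = (self.history >> ((t - 1) * self.seg_len)) & self.seg_mask
            indices.append((pc ^ (seg * 0x9E5) ^ (t << 3)) & self.index_mask)
        return indices

    def predict(self, pc):
        indices = self._indices(pc)
        total = sum(self.tables[t][i] for t, i in enumerate(indices))
        return total >= 0, total, indices  # non-negative sum means "taken"

    def update(self, pc, taken):
        predicted, total, indices = self.predict(pc)
        # Train on a misprediction or when the sum is not yet confident.
        if predicted != taken or abs(total) <= self.threshold:
            step = 1 if taken else -1
            for t, i in enumerate(indices):
                w = self.tables[t][i] + step
                self.tables[t][i] = max(-self.wmax - 1, min(self.wmax, w))
        self.history = ((self.history << 1) | int(taken)) & self.history_mask
        return predicted


# Usage: a branch that alternates taken / not taken is learned via history.
predictor = HashedPerceptron()
pattern = [i % 2 == 0 for i in range(400)]
hits = [predictor.update(0x40, taken) == taken for taken in pattern]
print(sum(hits[-100:]), "of the last 100 predictions were correct")
```

A TAGE predictor differs in that its tables are tagged and use geometrically increasing history lengths, which is what allows the longer branch histories described above; that part is not modeled here.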

In the front end we also get larger BTBs, to help keep track of instruction branches and cache requests. The L1 BTB has doubled in size from 256 entries to 512 entries, and the L2 BTB has almost doubled, from 4K to 7K entries. The L0 BTB stays at 16 entries, but the indirect target array goes up to 1K entries. Overall, according to AMD, these changes afford a 30% lower mispredict rate, which saves power.

One other major change is the L1 instruction cache. We noted that it is smaller for Zen 2: only 32 KB rather than 64 KB, although the associativity has doubled, from 4-way to 8-way. Given the way a cache works, these two effects ultimately don’t cancel each other out; however, the 32 KB L1-I cache should be more power efficient and experience higher utilization. The L1-I cache hasn’t just decreased in isolation: one of the benefits of reducing the size of the I-cache is that it has allowed AMD to double the size of the micro-op cache. These two structures are next to each other inside the core, and so even at 7nm we have an instance of space limitations causing a trade-off between structures within a core. AMD stated that this configuration, the smaller L1 with the larger micro-op cache, ended up being better in more of the scenarios it tested.
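To make the size versus associativity trade-off concrete, the short sketch below works out the set count for both L1-I configurations. The 64-byte cache line is an assumption that the text above does not state, and the helper name `cache_sets` is purely illustrative.

```python
def cache_sets(size_bytes, ways, line_bytes=64):
    """Number of sets in a set-associative cache (line size is an assumption)."""
    sets, remainder = divmod(size_bytes, ways * line_bytes)
    if remainder:
        raise ValueError("size must be a multiple of ways * line_bytes")
    return sets


LINE_BYTES = 64  # assumed line size, not taken from the article

for name, size_kb, ways in [("Zen", 64, 4), ("Zen 2", 32, 8)]:
    sets = cache_sets(size_kb * 1024, ways, LINE_BYTES)
    lines = sets * ways
    print(f"{name}: {size_kb} KB, {ways}-way -> {sets} sets, {lines} lines total")
```

Under that assumption the older 64 KB 4-way cache has 256 sets and the new 32 KB 8-way cache has 64 sets. Total capacity in lines is halved, but each set can now hold eight competing lines instead of four, so fewer lines are evicted purely because they map to the same set.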

216 Comments


Kjella - Thursday, June 13, 2019

The Ryzen 1800x got dropped $150 in MSRP nine months after launch. I think AMD thought octo-core might be a niche market they needed strong margins on, but realized they'd make more money making it a mainstream part. I bought one at launch price and knew it probably wouldn't be great value, but it was like good enough, let's support AMD by splurging on the top model. Very happy it's now paying off, even though I'm not in the market for a replacement yet.

deltaFx2 - Tuesday, June 11, 2019

@jjj: Rriigght... Moore's law applies to transistors. You are getting more transistors per sq. mm, and that translates to more cores. ST performance is an arbitrary metric you came up with. It's like expecting car power output (HP/W) to increase linearly every new model, and it does not work that way. Physics. So, they innovate on other things like fuel economy, better drive quality, handling, safety features... it's life. We aren't in the 1980s anymore where you got 2x ST perf from a process shrink. Frequency scaling is long dead.

The other thing you miss is that the economies of scale you talk about are dying. 7nm is *more* expensive per transistor than 28nm. Finfet, quad patterning, etc etc. So "TSMC gives them more perf per dollar" compared to what? 28nm? No way. 14nm? Nope.

RedGreenBlue - Tuesday, June 11, 2019

Multi-threaded performance does have an effect on single threaded performance in a lot of use cases. If you can afford a 12 core cpu instead of an 8, you would end up with better performance in the following situation: You have one or two multithreaded workloads that will have the most throughput when maxing out 7 strong threads, you want to play a game or run one task that is single-threaded. That single-threaded task is now hindered by the OS and any random updates or processes running in the background.

Point being, if you ever do something that maxes out (t - 1) cores, even if there's only one thread running on each, then suddenly your single threaded performance can suffer at the expense of a random OS or background process. So being able to afford more cores will improve single-thread performance in a multitasking environment, and yes multitasking like that is a reality today in the target market AMD is after. So get over it, because that's what a lot of people need. Nobody cares about you, it's the target market AMD and Intel care about.

FunBunny2 - Wednesday, June 12, 2019

"You have one or two multithreaded workloads that will have the most throughput when maxing out 7 strong threads, you want to play a game or run one task that is single-threaded."

the problem, as stated for years: 'they ain't all that many embarrassingly parallel user space problems'. IOW, unless you've got an app *expressly* compiled for parallel execution, you get nothing from multi-core/thread. what you do get is the ability to run multiple, independent programs. since we only have one set of hands and one set of eyes, humans don't integrate very well with OS/MFT. that's from the late 360 machines.

yankeeDDL - Thursday, June 13, 2019

I am doing office work, and, according to Task Manager, there are ~3500 threads running on my laptop. Obviously, most threads are dormant, however, as soon as I start downloading something, while listening to music and editing an image, while the email client checks the email and the antivirus scans "everything", I will certainly have more than "10" threads active and running. Having more cores is nearly always beneficial, even for office use. I do swap often between a Core i7 5500 (2 cores, 4 threads) and a desktop with Ryzen 5 1600 (6C, 12T). It is an apples to oranges comparison, granted, but the smoothness of the desktop does not compare. The laptop chokes easily when it is downloading updates, the antivirus is scanning and I'm swapping between multiple applications (and it has 16GB of RAM - it was high end, 4 years back).

2C4T is just not enough in a productivity environment, today. 4C8T is the baseline, but anyone looking to future-proof their purchase should aim for 6C12T or more. Intel's 9th gen is quite limited in that respect.

Ratman6161 - Friday, June 14, 2019

Personally I would ignore anything to do with pricing at this point. The MSRP can be anything they want, but the street prices on AMD processors have traditionally been much lower. At our local Microcenter for example, 2700X can be had for $250 while the 2700 is only $180. On the new processors, if history is any indicator, prices will fall rapidly after release.

mode_13h - Tuesday, June 11, 2019

I think the launch prices won't hold, if that's your main gripe.

I would like to be able to buy an 8-core that turbos as well as the 16-core, however. I hope they offer such a model, as their yields improve. I don't need 16 cores, but I do care about single-thread perf.

azazel1024 - Tuesday, June 11, 2019

I have a financial drain right now, but once that gets resolved in (hopefully) a couple of months I think I am finally going to upgrade my desktop with Zen 2. Probably look at one of the 8-core variants. I am running an i5-3570 driven at 4GHz right now.

So the performance improvement should be pretty darned substantial. And I DO a lot of multithread heavy applications like Handbrake, Photoshop, Lightroom and a couple of others. The last time I upgraded was from a Core 2 Duo E6750 to my current Ivy Bridge. That was around a 4 year upgrade (I got a Conroe after they were deprecated, but before official EOL and manufacturing ceasing) IIRC. Now we are talking something like 6-7 years from when I got my Ivy Bridge to a Zen 2 if I finally jump on one.

E6750 to i5-3570 represented about a 4x increase in performance multithreaded in 4 years (or ballpark). i5-3570 to 3800x would likely represent about a 3x improvement in multithreaded in 6-7 years.

I wonder if I can swing a 3900x when the time comes. That would be probably somewhat over 4x performance improvement (and knowing how cheap I am, probably get a 3700x).

Peter2k - Tuesday, June 11, 2019

Yeah, but you have to say something negative about everything

bobhumplick - Tuesday, June 11, 2019

i agree. i mean its an incredible cpu line. but just throwing all of that extra die space and power budget at just more cores and cache. i mean look at the cache to core ratio. when cpu makers just throw more cache or cores at a node shrink it can be because that makes the most sense at the time (the market, workloads, or supporting tech like dram have to catch up to enable more per core performance) but it can also mean they just didnt know what else to do with the space.

maybe its just not possible to go much beyond the widths modern cpus are approaching. even intel is using a lot of space up for avx 512 which is similar to just adding more cores (more fpu crunchers) so its possible that neither company knows how to balance all those instructions in flight or make use of more execution resources. maybe cores cant get much more performance.

but if so that means a pretty boring future. especially if programmers cant find ways to use those cores in more common tasks