AMD Zen 2 Microarchitecture Analysis: Ryzen 3000 and EPYC Rome

by Dr. Ian Cutress on June 10, 2019 7:22 PM EST

Posted in: CPUs, AMD, Ryzen, EPYC, Infinity Fabric, PCIe 4.0, Zen 2, Rome, Ryzen 3000, Ryzen 3rd Gen

Performance Claims of Zen 2

At Computex, AMD announced that it had designed Zen 2 to offer a direct +15% raw performance gain over its Zen+ platform when comparing two processors at the same frequency. AMD also claims that at the same power, Zen 2 will offer a performance gain of more than 1.25x, or up to half the power at the same performance. Combining these, for select benchmarks, AMD is claiming a +75% performance per watt gain over its previous generation product, and a +45% performance per watt gain over its competition.
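To put the two operating points on a common scale, here is a minimal sketch that converts each claim into a performance-per-watt ratio. It assumes performance per watt is simply relative performance divided by relative power, with Zen+ normalized to 1.0. The function names are illustrative and do not come from AMD's material.

```python
def perf_per_watt_ratio(perf_ratio: float, power_ratio: float) -> float:
    """Relative performance per watt versus a baseline normalized to 1.0."""
    if perf_ratio <= 0 or power_ratio <= 0:
        raise ValueError("ratios must be positive")
    return perf_ratio / power_ratio


def as_percent_gain(ratio: float) -> float:
    """Convert a ratio such as 1.75 into a percentage gain such as 75.0."""
    return (ratio - 1.0) * 100.0


# Claim 1: more than 1.25x the performance at the same power.
iso_power = perf_per_watt_ratio(perf_ratio=1.25, power_ratio=1.0)

# Claim 2: the same performance at up to half the power.
iso_perf = perf_per_watt_ratio(perf_ratio=1.0, power_ratio=0.5)

print(f"Same power:       {as_percent_gain(iso_power):+.0f}% perf/W")
print(f"Same performance: {as_percent_gain(iso_perf):+.0f}% perf/W")
```

Under this simple model the two claims bracket a range of +25% to +100% performance per watt, and the +75% figure quoted for select benchmarks sits inside that range.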

These are numbers we can’t verify at this point, as we do not have the products in hand, and even when we do, the embargo on benchmarking results will not lift until July 7th. AMD did spend a good amount of time going through the new changes in the microarchitecture for Zen 2, as well as platform level changes, in order to show how the product has improved over the previous generation.

It should also be noted that at multiple times during AMD’s recent Tech Day, the company stated that it is not interested in going back-and-forth with its primary competition on incremental updates to try and beat one another, which might result in holding technology back. AMD is committed, according to its executives, to pushing the envelope of performance as much as it can every generation, regardless of the competition. Both CEO Dr. Lisa Su and CTO Mark Papermaster have said that they expected the launch of the Zen 2 portfolio to intersect with a very competitive Intel 10nm product line. Despite this not being the case, the AMD executives stated they are still pushing ahead with their roadmap as planned.

AMD 'Matisse' Ryzen 3000 Series CPUs

| AnandTech | Model | Cores | Threads | Base Freq (GHz) | Boost Freq (GHz) | L2 Cache | L3 Cache | PCIe 4.0 (lanes) | DDR4 | TDP | Price (SEP) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Ryzen 9 | 3950X | 16C | 32T | 3.5 | 4.7 | 8 MB | 64 MB | 16+4+4 | 3200 | 105W | $749 |
| Ryzen 9 | 3900X | 12C | 24T | 3.8 | 4.6 | 6 MB | 64 MB | 16+4+4 | 3200 | 105W | $499 |
| Ryzen 7 | 3800X | 8C | 16T | 3.9 | 4.5 | 4 MB | 32 MB | 16+4+4 | 3200 | 105W | $399 |
| Ryzen 7 | 3700X | 8C | 16T | 3.6 | 4.4 | 4 MB | 32 MB | 16+4+4 | 3200 | 65W | $329 |
| Ryzen 5 | 3600X | 6C | 12T | 3.8 | 4.4 | 3 MB | 32 MB | 16+4+4 | 3200 | 95W | $249 |
| Ryzen 5 | 3600 | 6C | 12T | 3.6 | 4.2 | 3 MB | 32 MB | 16+4+4 | 3200 | 65W | $199 |

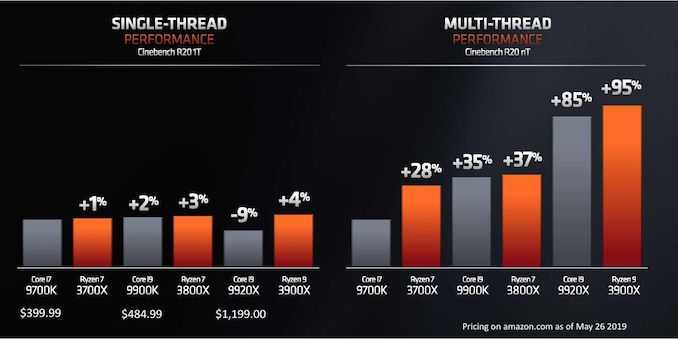

AMD’s benchmark of choice when showcasing the performance of its upcoming Matisse processors is Cinebench. Cinebench is a floating point benchmark on which the company has historically done very well, and it tends to probe CPU FP performance as well as cache performance, although it often ends up not involving much of the memory subsystem.

Back at CES 2019 in January, AMD showed an un-named 8-core Zen 2 processor against Intel’s high-end 8-core processor, the i9-9900K, on Cinebench R15, where the systems scored about the same result, but with the full AMD system consuming at least around one-third less power. For Computex in May, AMD disclosed many of the eight and twelve-core details, along with how these chips compare in single and multi-threaded Cinebench R20 results.

AMD is stating that its new processors, when compared at matching core counts, offer better single thread performance and better multi-thread performance in CPU benchmarks, at lower power and a much lower price point.

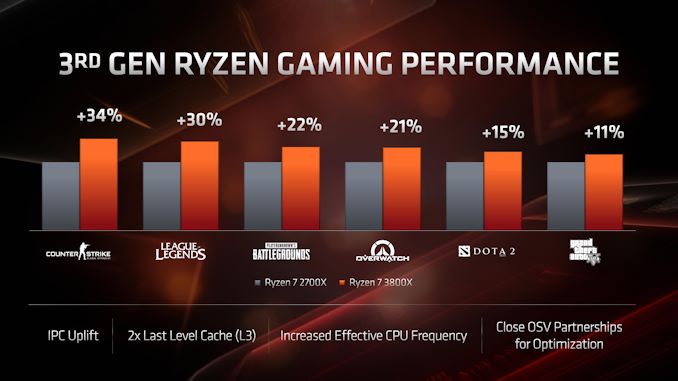

When it comes to gaming, AMD is rather bullish on this front. At 1080p, comparing the Ryzen 7 2700X to the Ryzen 7 3800X, AMD is expecting anywhere from a +11% to a +34% increase in frame rates generation to generation.

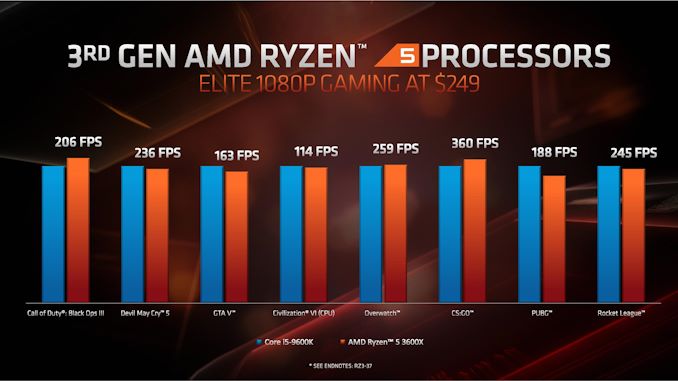

When it comes to comparing gaming between AMD and Intel processors, AMD stuck to 1080p testing of popular titles, again comparing similar processors for core counts and pricing. In pretty much every comparison, it was a back and forth between the AMD product and the Intel product: AMD would win some, lose some, and draw in others. Here’s the $250 comparison as an example:

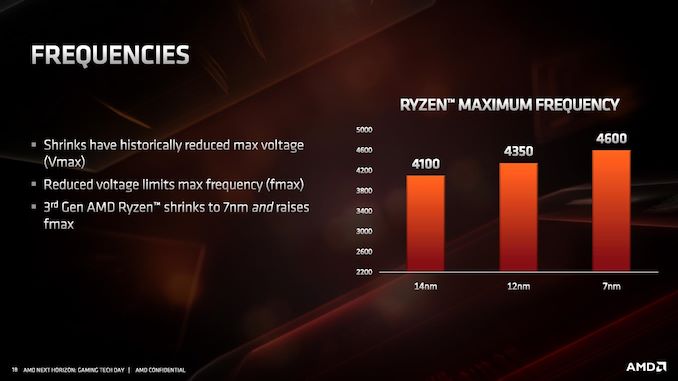

Performance in gaming in this case was designed to showcase the frequency and IPC improvements, rather than any benefits from PCIe 4.0. On the frequency side, AMD stated that despite the 7nm die shrink and higher resistivity of the pathways, it was able to extract a higher frequency out of the 7nm TSMC process compared to 14nm and 12nm from GlobalFoundries.

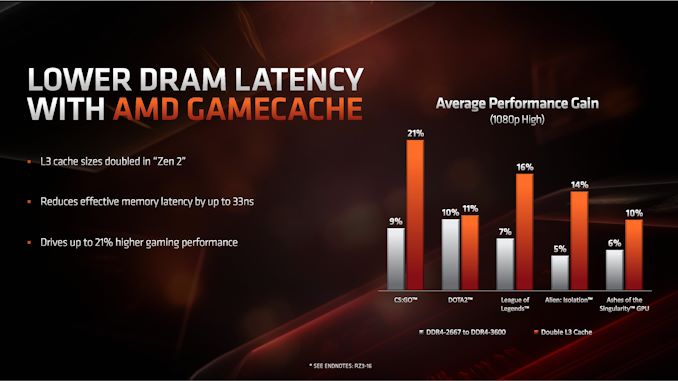

AMD also made commentary about the new L3 cache design, as it moves from 2 MB/core to 4 MB/core. Doubling the L3 cache, according to AMD, affords an additional +11% to +21% increase in performance at 1080p for gaming with a discrete GPU.
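As a rough cross-check of the 4 MB/core figure against the SKU table earlier in this piece, the sketch below divides each part's total L3 by its active core count. The core counts and cache sizes are copied from that table. The dictionary and function names are illustrative only.

```python
# (active cores, total L3 in MB), copied from the Ryzen 3000 table in this article.
MATISSE_L3 = {
    "3950X": (16, 64),
    "3900X": (12, 64),
    "3800X": (8, 32),
    "3700X": (8, 32),
    "3600X": (6, 32),
    "3600": (6, 32),
}


def l3_per_core(cores: int, l3_mb: int) -> float:
    """Total L3 capacity divided evenly across the active cores."""
    return l3_mb / cores


for model, (cores, l3_mb) in MATISSE_L3.items():
    print(f"Ryzen {model}: {l3_per_core(cores, l3_mb):.2f} MB of L3 per core")
```

The eight and sixteen-core parts land exactly on 4 MB/core. The six and twelve-core parts come out higher, which would be consistent with those parts keeping the full L3 while having fewer active cores, although that reading is an inference from the table rather than something stated here.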

There are some new instructions on Zen 2 that would be able to assist in verifying these numbers.

216 Comments

nandnandnand - Tuesday, June 11, 2019 - link

Shouldn't we be looking at highest transistors per square millimeter plotted over time? The Wikipedia article helpfully includes die area for most of the processors, but the graph near the top just plots number of transistors without regard to die size. If Intel's Xe hype is accurate, they will be putting out massive GPUs (1600 mm^2?) made of multiple connected dies, and AMD already does something similar with CPU chiplets.

I know that the original Moore's law did not take into account die size, multi chip modules, etc. but to ignore that seems cheaty now. Regardless, performance is what really matters. Hopefully we see tight integration of CPU and L4 DRAM cache boosting performance within the next 2-3 years.

Wilco1 - Wednesday, June 12, 2019 - link

Moore's law is about transistors on a single integrated chip. But yes density matters too, especially actual density achieved in real chips (rather than marketing slides). TSMC 7nm does 80-90 million transistors/mm^2 for A12X, Kirin 980, Snapdragon 8cx. Intel is still stuck at ~16 million transistors/mm^2.

FunBunny2 - Wednesday, June 12, 2019 - link

enough about Moore, unless you can get it right. Moore said nothing about transistors. He said that compute capability was doubling about every second year. This is what he actually wrote:

"The complexity for minimum component costs has increased at a rate of roughly a factor of two per year. Certainly over the short term this rate can be expected to continue, if not to increase. Over the longer term, the rate of increase is a bit more uncertain, although there is no reason to believe it will not remain nearly constant for at least 10 years."

[the wiki]

the main reason the Law has slowed is just physics: Xnm is little more (teehee) than propaganda for some years, at least since the end of agreed dimensions of what a 'transistor' was. couple that with the coalescing of the maths around 'the best' compute algorithms; complexity has run into the limiting factor of the maths. you can see it in these comments: gimme more ST, I don't care about cores. and so on. Mother Nature's Laws are fixed and immutable; we just don't know all of them at any given moment, but we're getting closer. in the old days, we had the saying 'doing the easy 80%'. we're well into the tough 20%.

extide - Monday, June 17, 2019 - link

"The complexity for minimum component costs..."

He was directly referring to transistor count with the word "complexity" in your quote -- so yes he was literally talking about transistor count.

crazy_crank - Tuesday, June 11, 2019 - link

Actually the number of cores doesn't matter AFAIK, as Moores Law originally only was about transistor density, so all you need to compare is transistors per square millimeter. Looked at it like this, it actually doesn't even look that bad

chada - Wednesday, June 12, 2019 - link

Moore's law specifically talks about density doubling. If they can fit 6 cores into the same footprint, you can absolutely consider 6 cores for a density comparison. That being said, we have been off this pace for a while.

III-V - Wednesday, June 12, 2019 - link

>Moore's law specifically talks about density doubling.

No it doesn't.

Jesus Christ, why is Moore's Law so fucking hard for people to understand?

LordSojar - Thursday, June 13, 2019 - link

Why it ever became known as a "law" is totally beyond me. More like Moore's Theory (and that's pushing it, as he made a LOT of suppositions about things he couldn't possibly predict, not being an expert in those areas. ie material sciences, quantum mechanics, etc)

sing_electric - Friday, June 14, 2019 - link

This. He wasn't describing something fundamental about the way nature works - he was looking at technological advancements in one field over a short time frame. I guess "Moore's Observation" just didn't sound as good.

And the reason why no one seems to get it right is that Moore wrote and said several different things about it over the years - he'd OBSERVED that the number of transistors you could get on an IC was increasing at a certain rate, and from there, that this lead to performance increases, so both the density AND performance arguments have some amount of accuracy behind them.

And almost no one points out that it's ultimately just a function of geometry: As process decreases linearly (say, 10 units to 7 units) , you get a geometric increase in the # of transistors because you get to multiply that by two dimensions. Other benefits - like decreased power use per transistor, etc. - ultimately flow largely from that as well (or they did, before we had to start using more and more exotic materials to get shrinks to work.)

FunBunny2 - Thursday, June 13, 2019 - link

"Jesus Christ, why is Moore's Law so fucking hard for people to understand?"

because, in this era of truthiness, simplistic is more fun than reality. Moore made his observation in 1965, at which time IC fabrication had not even reached LSI levels. IOW, the era when node size was dropping like a stone and frequency was rising like a Saturn rocket; performance increases with each new iteration of a device were obvious to even the most casual observer. just like prices in the housing market before the Great Recession, the simpleminded still think that both vectors will continue forevvvvaaahhh.