Examining Intel's Ice Lake Processors: Taking a Bite of the Sunny Cove Microarchitecture

by Dr. Ian Cutress on July 30, 2019 9:30 AM EST

Posted in: CPUs, Intel, 10nm, Microarchitecture, Ice Lake, Project Athena, Sunny Cove, Gen11

Thunderbolt 3: Now on the CPU*

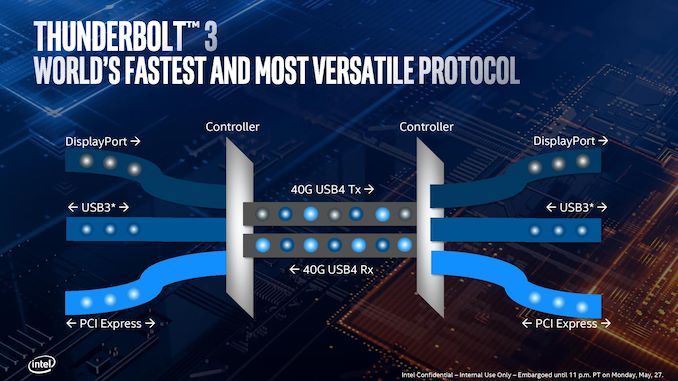

One of the big failures of Thunderbolt since its inception has been its limited adoption beyond the Apple ecosystem. In order to use it, both the host and the device needed TB controllers supplied by Intel. It wasn’t until Thunderbolt 3 adopted the USB Type-C connector, and offered enough bandwidth to support external graphics solutions, that the number of available devices started to pick up. The issue remains that the host and device both need an expensive Intel-only controller, but the ecosystem was starting to become more receptive to its uses.

With Ice Lake, that gets another step easier.

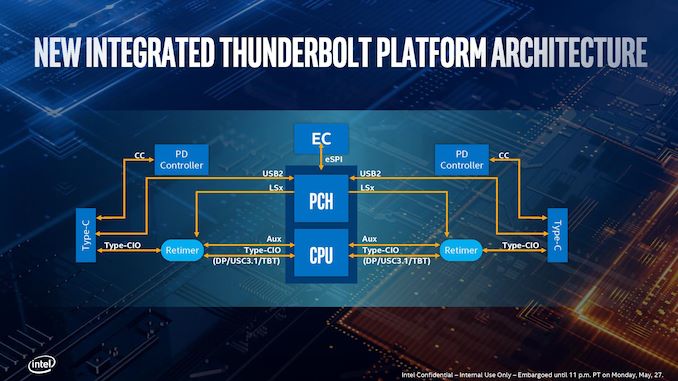

Rather than bundle TB3 support into the chipset, Intel has integrated it on the die of Ice Lake, and it takes up a sizable amount of space. Each Ice Lake CPU can support up to four TB3 ports, with each TB3 port getting a full PCIe 3.0 x4 root complex link internally for full bandwidth. (For those keeping count, it means Ice Lake technically has 32 PCIe 3.0 lanes total).
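To make the lane count concrete, here is a rough back-of-the-envelope sketch in Python. It assumes the standard PCIe 3.0 figures (8 GT/s per lane with 128b/130b line coding), which are not stated in the text above, and it ignores packet-level protocol overhead. The constant and function names are illustrative only.

```python
# Back-of-the-envelope lane and bandwidth arithmetic for the TB3 block.
# Assumptions (not from the article): PCIe 3.0 runs at 8 GT/s per lane
# with 128b/130b line coding; packet/protocol overhead is ignored.

PCIE3_GT_PER_S = 8.0           # raw transfer rate per lane
LINE_CODING = 128 / 130        # 128b/130b encoding efficiency
LANES_PER_TB3_PORT = 4         # each TB3 port gets a PCIe 3.0 x4 link
TB3_PORTS = 4                  # up to four TB3 ports per Ice Lake CPU
TOTAL_LANES_QUOTED = 32        # total lane count quoted in the article


def pcie3_payload_gbps(lanes: int) -> float:
    """Approximate usable bandwidth in Gb/s for a PCIe 3.0 link."""
    return PCIE3_GT_PER_S * LINE_CODING * lanes


tb3_lanes = TB3_PORTS * LANES_PER_TB3_PORT
other_lanes = TOTAL_LANES_QUOTED - tb3_lanes
per_port_gbps = pcie3_payload_gbps(LANES_PER_TB3_PORT)

if __name__ == "__main__":
    print(f"Lanes dedicated to TB3: {tb3_lanes}")
    print(f"Lanes left for everything else: {other_lanes}")
    print(f"Approx. PCIe bandwidth per TB3 port: {per_port_gbps:.1f} Gb/s")
```

Under these assumptions each x4 link carries roughly 31.5 Gb/s, and the 16 lanes feeding the TB3 ports account for half of the quoted 32-lane total.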

Intel has arranged it so that each side of the CPU can support two TB3 links directly from the processor. There is still some communication back and forth with the chipset (PCH), as the Type-C ports need to have USB modes implemented. It’s worth noting, however, that TB3 can’t be used directly out of the box.

How many of the four ports actually make it into a design will be highly OEM dependent. Having the CPU in the system is not enough on its own: other chips (redrivers) are needed to support the USB Type-C connector. Power delivery also requires extra circuitry, which costs money. So while Intel advertises TB3 support on Ice Lake, it still needs something extra from the OEMs. Intel states that a retimer for the integrated solution is only half the size of the ones needed with external TB3 chips, and that each retimer supports two TB3 ports, halving the number of retimers needed.

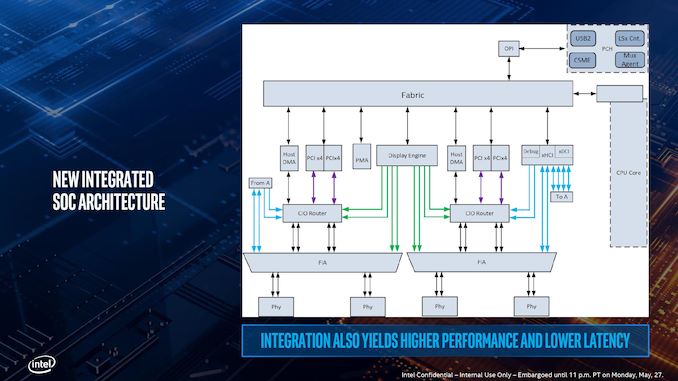

Here’s a more detailed schematic, showing the complexities of integrating TB3 into a chip, with the four PCIe x4 complexes shown moving out to each of the individual PHYs at the bottom, and connected back into the main SoC interconnect fabric. The display engine also has to control what mode the TB3 ports are in, and what signals are being sent. Wake up times for TB3 in this fashion, according to Intel, are actually slightly longer compared to a separate controller implementation, because the SoC is so tightly integrated. This sounds somewhat counterintuitive, given that the requisite hardware blocks are now closer together, but it all comes down to power domains: in a separate chip design, each segment has its own domain with individual power up/down states. In an integrated SoC, Intel has unified the power domains to reduce complexity and die area, which means that more careful management is required and latency ultimately increases a little.

The other upside to the tightly coupled integration is that, according to Intel, this method of TB3 is a lot more power efficient than current external chip implementations. However, they wouldn’t comment on the exact power draw of the TB3 block on the chip as it relates to the full TDP of the design, especially with regard to localized thermal density. (Intel was initially very confused by my question on this, ultimately saying that the power per bit was lower compared to the external chip, so overall system power was lower. They seemed more interested in discussing system power than chip power.) Intel did state that the difference between an idle and a fully used link was 300 mW, which suggests that if all four links are in play, we’re looking at 1.2 W. When asked, Intel stated that there are three different power delivery domains within the TB3 block depending on the logic, that the system uses integrated voltage regulation, and that the TB3 region has an internal power rail that is shared with some of the internal logic of the CPU. This has implications when it comes to time-to-wake and idle power, but Intel believes it has found a good balance.
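The 1.2 W figure above is a simple extrapolation, which can be sketched as follows. This assumes the 300 mW idle-to-active delta applies equally to every link and adds up linearly, which Intel did not confirm; the function and constant names are hypothetical.

```python
# Rough extrapolation of the TB3 power delta quoted by Intel.
# Assumption: the 300 mW idle-to-active difference is the same for every
# link and scales linearly with the number of fully used links.

ACTIVE_LINK_DELTA_MW = 300  # stated idle vs. fully used difference per link
MAX_TB3_PORTS = 4           # maximum TB3 ports per Ice Lake CPU


def tb3_active_power_delta_mw(active_links: int) -> int:
    """Extra power in mW over idle for a given number of fully used links."""
    if not 0 <= active_links <= MAX_TB3_PORTS:
        raise ValueError(f"active_links must be between 0 and {MAX_TB3_PORTS}")
    return active_links * ACTIVE_LINK_DELTA_MW


if __name__ == "__main__":
    for n in range(MAX_TB3_PORTS + 1):
        delta = tb3_active_power_delta_mw(n)
        print(f"{n} active link(s): +{delta} mW over idle")
```

With all four links in use, this gives 1200 mW, matching the 1.2 W estimate. Shared power rails or per-domain gating could make the real figure deviate from a linear sum.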

Regarding USB4 support, Intel stated that it is in the design, and they are USB4 compliant at this point, but there might be changes and/or bugs which stop it from being completely certified further down the line. Intel said that it ultimately comes down to the device side of the specification, although they have put as much in as they were able given the time constraints of the design. They hope to be certified, but it’s not a guarantee yet.

Depending on who you speak to, this isn’t Intel’s first crack at putting TB3 into CPU silicon: the chip that Intel never wants to talk about, Cannon Lake, supposedly also had an early TB3 design built inside that never worked. But Intel is confident in its Ice Lake implementation, especially with supporting four ports. I wouldn’t be surprised if this comes to desktop when Intel releases its first generation 10nm desktop processors.

*The asterisk in the title of this page is because you still need external hardware in order to enable TB3.

107 Comments

vFunct - Tuesday, July 30, 2019

Why did they not go with HDMI 2.1 and PCIe 4.0?

bug77 - Tuesday, July 30, 2019

AMD's newly released 5700(XT) doesn't support HDMI 2.1, it's not surprising Intel doesn't support it either.

And PCIe 4.0 would be a power hog.

ToTTenTranz - Wednesday, July 31, 2019

The 5700 cards don't support VirtuaLink either, despite AMD belonging to the consortium since the beginning like nvidia and the RTX cards having it for about a year.

First generation Navi cards are just very, very late.

tipoo - Tuesday, July 30, 2019

PCI-E 4 currently needs chipset fans on desktop parts, the power needed isn't suitable for 15-28W mobile yet.

DanNeely - Tuesday, July 30, 2019

Because Intel product releases have been a mess since the 10nm trainwreck began. Icelake was originally supposed to be out a few years ago. I suspect PCIe4 is stuck on whatever upcoming design was supposed to be the 7nm launch part.

HDMI 2.1 is probably even farther down the pipeline; NVidia and AMD don't have 2.1 support on their discrete GPUs yet. Intel has historically been a lagging supporter of new standards on their IGPs, so that's probably a few years out.

nathanddrews - Tuesday, July 30, 2019

This whole argument that "real world" benchmarks equate to "most used" is rather dumb anyway. We don't need benchmarks to tell us how much faster Chrome opens Reddit, because the answer is always the same: fast enough to not matter. We need benchmarks at the fringes for those reasons brought up in the room: measuring extremes in single/multi threaded scenarios, power usage, memory speeds; finding weaknesses in hardware and finding flaws in software; and taking a large enough sample to be meaningful across the board.

Intel wants to eat its cake and still have it - to be fair - who doesn't? But let's get real, AMD is kicking some major butt right now and Intel has to spin it any way they can. What's funny is that the BEST arguments that I've heard from reviewers to go AMD actually have nothing to do with performance, but rather the Zen platform as a whole in terms of features, upgradeability, and cost.

I say this as a total Intel shill, too. The only AMD systems running in my house right now are game consoles. All my PCs/laptops are Intel.

twotwotwo - Tuesday, July 30, 2019

Interesting to read what Intel suggested some of their arguments in the server space would be: lower TCO like the old Microsoft argument against Linux, and having to revalidate all your stuff to use an AMD platform. Some quotes (from a story in their internal newsletter; the full thing is floating around out there, but couldn't immediately find): https://www.techspot.com/news/80683-intel-internal...

I mean, they'll be fine long term, but trying to change the topic from straightforward bang-for-buck, benchmark results, etc. is an approach you only take in a...certain sort of situation.

eek2121 - Wednesday, July 31, 2019

Unfortunately, your average IT infrastructure guy no longer knows how fast a Xeon Platinum 8168 is vs an AMD EPYC 7601. They just ask OEMs like Dell or HP to sell them a solution. I've even seen cases where faster solutions were replaced with slower solutions because they were more expensive and the numbers looked bigger. It turns out that the numbers that looked bigger were not the numbers that they should have been paying attention to.

One company I worked at almost bought a $100,000 (yeah I know, small change, but it was a small company) pre-built system. We, as software developers, talked them into letting us handle it instead. We knew a lot about hardware and as a result? We spent around $15,000 in hardware costs. Yes there were labor costs involved in setting everything up, but it only took about 2 weeks for 4 guys, 2 of whom were juniors. Had we gone with the blade system, there would have been extensive training needed, which would have cost about the same in labor. Our solution was fully redundant, a hell of a lot faster (the blade system used hardware that was slower than our solution, and it was also a proprietary system that we would be locked into, so there was an additional service contract that cost $$$ and would have to be signed). During my entire time there, we had very few issues with the solution we built outside the occasional hard drive (2 drives in 4 years IIRC) dying and having to pop it out, pop in a new one, and let the RAID rebuild. Zero downtime. In addition, our wifi solution allowed roaming all over a giant building without dropping the signal. Speeds were lightning fast and QoS allowed us to keep someone from taking up too much bandwidth on the guest network. The entire setup worked like a dream.

We also wanted to use a different setup for the phone system, but they opted to work with a vendor instead. They paid a lot of money for that, and constantly had issues. The administration software was buggy, sometimes the entire system would go down, even adding a user would take down the entire system until things were updated. IIRC after I left they finally switched to the system we wanted to use and had no issues after that.

wrkingclass_hero - Tuesday, July 30, 2019

Uh, I would not be putting cobalt anywhere near my mouth

PeachNCream - Tuesday, July 30, 2019

Real men aren't scared of a few toxic chemicals entering their digestive systems! Clearly you and I are not real men, but we now have a role model to emulate over the course of our soon-to-be-shortened-by-cancer lives.