Examining Intel's Ice Lake Processors: Taking a Bite of the Sunny Cove Microarchitecture

by Dr. Ian Cutress on July 30, 2019 9:30 AM EST

Posted in: CPUs, Intel, 10nm, Microarchitecture, Ice Lake, Project Athena, Sunny Cove, Gen11

Performance Claims: +18% IPC vs. Skylake, +47% Performance vs. Broadwell

With every new product generation, the company releasing the product has to set some level of expectation on performance. Depending on the company, you’ll either get a high-level number summarizing performance, or you’ll get reams and reams of benchmark data. Intel did both, leading with a headline ‘+18%’ value, but in recent months the company has also been on a charge about what sort of benchmarking is worth doing. I want to take a quick diversion down that road, and give my thoughts on the matter.

First, I want to define some terms, just so we’re all on the same page.

- A synthetic test is a benchmark engineered to probe a feature of the processor, often to find its peak capability in one or several specific tasks. A synthetic test does not often reflect a real-world scenario, and likely doesn’t use real-world software. Synthetic benchmarks are designed to be stable and repeatable, and the analysis often describes how a processor performs in an ideal scenario.

- A real-world test uses software that the user ends up using, along with a representative workload for that software. These tests are usually most applicable to end-users looking to purchase a product, as they can see actual use-case results. Real-world tests can have obvious pitfalls: it can be hard to test across multiple machines with only a single license, and testing one piece of software offers no guarantee of performance in another.

A typical analysis of a processor asks two questions: what can it do (synthetic) and how does it perform (real-world). Users interested in the development of a platform, how it will expand and grow, or engineers peering over the fence, or even investors looking at the direction the company is going, will look at what products can do. People looking at what to use, what to work with, are more interested in the performance. Reviewers should get this concept, and companies like Intel should get this too – with Intel hiring a number of ex-reviewers of late, this is coming through.

A couple of months ago, Intel approached subsets of reviewers to discuss best benchmarking practices. On the table were real-world benchmarks, and which benchmarks represent the widest array of the market. Under fire was Cinebench, a semi-synthetic test (it uses a real-world engine on example data) that Intel believed didn’t represent the performance of a processor.

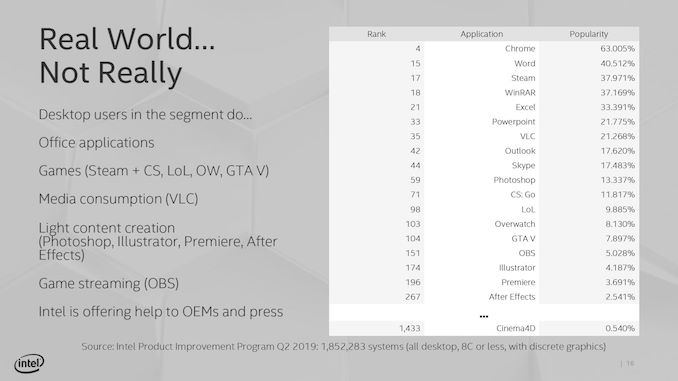

Intel provided data from one of its commissioned surveys on software that people use. Their data was based on a list of all consumers, from entry-level users up to prosumers, casual gamers, and enthusiasts, but also covering commercial use cases. At the top of the list were the obvious examples, such as OS and browsers: Explorer.exe, Edge, Chrome. In the top set were important widely distributed software packages, such as Photoshop (all versions), Steam, WinRAR, Office programs, and popular games like Overwatch. The point Intel was trying to make with this list is that a lot of reviewers run software that isn’t popular, and should aim to cover the widest market possible.

The key point they were trying to make was that Cinebench, while based on Cinema4D, a rendering tool used by a number of the community, wasn’t the be-all and end-all of performance. Now this is where Intel’s explanation became bifurcated: despite this being a discussion on what benchmarks reviewers should consider using, Intel’s perspective was that citing a single number, as Intel’s competitors have done, doesn’t represent true performance in all use cases. There was a general feeling that users were taking single numbers like this and jumping to conclusions. So despite the fact that the media in the room all test multiple software angles, Intel was clear that they didn’t want a single number to dominate the headlines, especially when it’s from software that is ranked (according to Intel’s survey) somewhere in the 1400s.

Needless to say, Intel got a bit of backlash from the press in the room at the time. Key criticisms were that those present, when they get hardware, test a variety of software, not just Cinebench, to try and give a more overall view. Another was that the survey covered all users, from consumer to commercial to workstation: a number of the press in the room have audiences that are enthusiasts, so they tailor their benchmarks accordingly. There was also a discussion that a number of software packages listed in the top 100 are actually difficult to benchmark, due to licensing arrangements designed to stop repeated installs across multiple systems. Typically most software vendors aren’t interested in working with the benchmark community to help evaluate performance, in case it exposes deficiencies in their code base. There was also the way in which readers were adapting over time: most focused readers want their specific software tested, and it is impossible to test 50 different software packages, so a few that can be streamlined in a benchmark suite are used as a representative sample, and typically Cinebench is one of those in the rendering arena, alongside POV-Ray, Corona, etc.

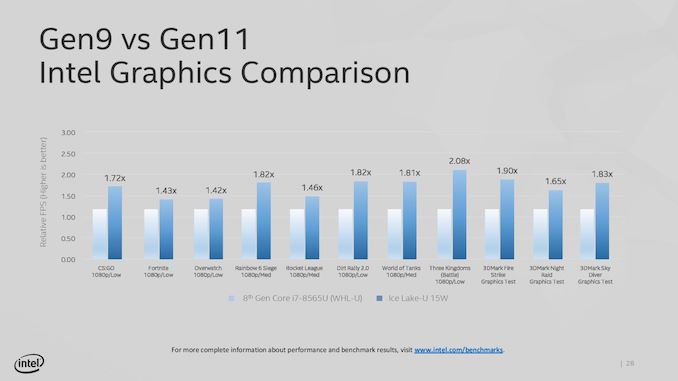

Intel, at this stage in the discussion, still went on to show how the new hardware performs on a variety of tests. We’ve covered these images before on previous pages, but Intel stated a significant uplift in graphics compared to the current 14nm offerings, from 40% up to 108%:

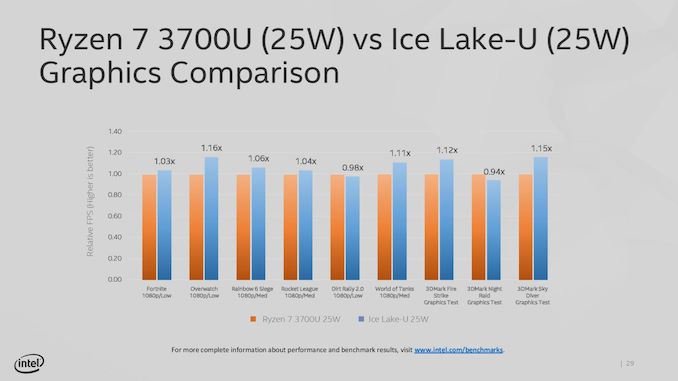

As well as comparisons to the competition:

Aside from 3DMark, these are all ‘real-world’ tests.

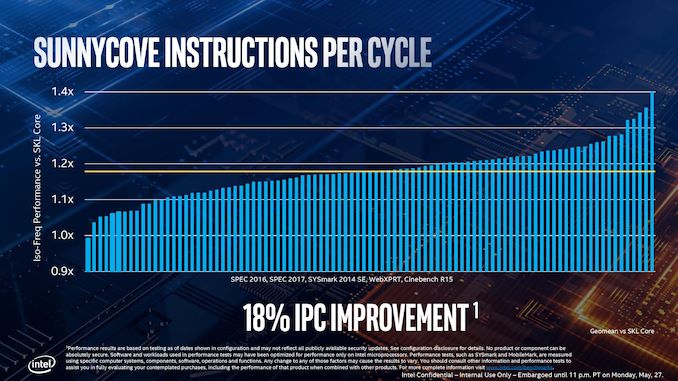

Move forward a few weeks to Intel’s Tech Day, where Ice Lake is discussed, and Intel brings up IPC.

Intel’s big statement is that Sunny Cove, a 2019 product, offers 18% more instructions per clock against Skylake, a 2015 product. In order to come to that conclusion, as expected, Intel has to turn to synthetic testing: SPEC2006, SPEC2017, SYSMark 2014 SE, WebXPRT, and Cinebench R15. Wait, what was that last one? Cinebench?

So there are two topics to discuss here.

First is the 18% increase over four years – that’s equivalent to a 4.2% compound annual growth rate (CAGR). Some users will state that we should have had more, and that without Intel’s issues with its 10nm manufacturing process this should have been a 2017 product (which would have been an 8.6% CAGR). Ultimately Intel built enough of an IPC lead over the last decade to afford something like this, and it shows that there isn’t an IPC wall just yet.
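Both growth-rate figures follow from the standard compound annual growth rate formula. As a minimal sketch in Python (the function name `cagr` is illustrative, and it assumes the +18% is spread evenly across the stated number of years):

```python
def cagr(total_gain: float, years: float) -> float:
    """Compound annual growth rate for a fractional total gain over `years`."""
    return (1.0 + total_gain) ** (1.0 / years) - 1.0

# +18% IPC over four years (Skylake in 2015 to Sunny Cove in 2019)
four_year = cagr(0.18, 4)

# The same +18%, had it arrived after two years as a 2017 product
two_year = cagr(0.18, 2)

print(f"4-year CAGR: {four_year:.1%}")
print(f"2-year CAGR: {two_year:.1%}")
```

The annual rate is a geometric mean, so it comes out slightly below the simple average of 18% divided by the number of years.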

Second is the use of Cinebench, and the previous version at that. Given what was discussed above, various conclusions could be drawn. I’ll leave those up to you. Personally, I wouldn’t have included it.

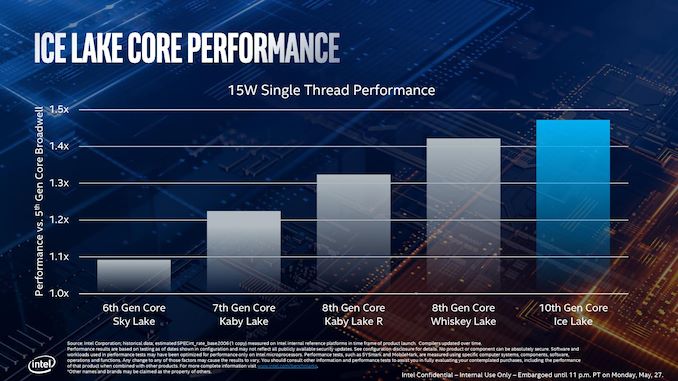

Aside from IPC, Intel also spoke about the actual single-threaded performance of Sunny Cove in its 15W mode.

At a brief glance, I would have expected this graph to be from real-world analysis. But given the blurb at the bottom it shows that these results are derived from SPEC2006, specifically 1-thread int_rate_base, which means that these are synthetic results, so we’ll analyze them with that in mind. This test also gets lots of benefit from turbo, with each test likely to fit inside the turbo window of an adequately cooled system.

The baseline here is Broadwell, Intel’s 5th Generation processor, which if you remember was the first Intel processor to have an integrated FIVR on the mobile parts for power efficiency. In this case we see that Intel puts Skylake at +9% above Broadwell, then moving through Kaby Lake and Whiskey Lake we see the effect of increasing that peak turbo frequency and power budget: when we moved from dual core to quad core 15W mobile processors, that peak turbo power budget increased from 19W to 44W, allowing longer turbo. Overall we hit +42% for 8th Gen Whiskey Lake over Broadwell.

Ice Lake, by comparison, is +47% over Broadwell. When moving from Broadwell to Ice Lake, which Intel expects most of its users to do, that’s a sizable single threaded performance jump, I won’t dispute that, although I will wait until we see real world data to come to a better conclusion.

However, if we compare Ice Lake to Whiskey Lake, we see only a +3.5% increase in single threaded performance. For a generation-on-generation increase, that’s even lower than the four-year CAGR from Skylake. Some of you might be questioning why this is happening, and it all comes down to frequency.
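The generation-on-generation figure comes from dividing the two Broadwell-relative scores, not from subtracting the percentages. A minimal sketch of that arithmetic, using only the numbers quoted above (the dictionary and the `uplift` helper are illustrative names):

```python
# Single-threaded scores relative to Broadwell (= 1.00), as quoted in the text
relative_to_broadwell = {
    "Broadwell": 1.00,
    "Skylake": 1.09,
    "Whiskey Lake": 1.42,
    "Ice Lake": 1.47,
}

def uplift(newer: str, older: str) -> float:
    """Fractional gain of `newer` over `older`, both measured against Broadwell."""
    return relative_to_broadwell[newer] / relative_to_broadwell[older] - 1.0

ice_vs_whiskey = uplift("Ice Lake", "Whiskey Lake")
print(f"Ice Lake vs. Whiskey Lake: {ice_vs_whiskey:+.1%}")
```

Subtracting the headline numbers (47 minus 42) gives five percentage points of the Broadwell baseline, which is a different quantity from the relative gain over Whiskey Lake.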

Intel’s current 8th Gen Whiskey Lake, the i7-8565U, has a peak turbo frequency of 4.8 GHz. In 15W mode, we understand that the peak frequency of Ice Lake is under 4.0 GHz, essentially handing Whiskey Lake a ~20% frequency advantage.
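The frequency gap can be checked the same way. The sketch below uses the two peak frequencies quoted above and treats 4.0 GHz as an upper bound for Ice Lake, since the text only says "under 4.0 GHz". The last step is a rough estimate that assumes single-threaded performance scales linearly with peak frequency; that assumption is not something Intel stated, and real workloads will deviate from it.

```python
whiskey_lake_peak_ghz = 4.8  # peak turbo quoted in the text
ice_lake_peak_ghz = 4.0      # text says "under 4.0 GHz"; treated as an upper bound

# Whiskey Lake's frequency advantage (a lower bound, given the upper bound above)
freq_ratio = whiskey_lake_peak_ghz / ice_lake_peak_ghz
freq_advantage = freq_ratio - 1.0

# Broadwell-relative single-threaded scores quoted in the text
perf_ratio = 1.47 / 1.42  # Ice Lake over Whiskey Lake

# ASSUMPTION: performance scales linearly with peak frequency
implied_per_clock_gain = perf_ratio * freq_ratio - 1.0

print(f"Frequency advantage: {freq_advantage:.0%}")
print(f"Implied per-clock gain (rough): {implied_per_clock_gain:.1%}")
```

Under that assumption, a small net gain at a much lower clock implies a considerably larger per-clock gain, which is the tension the frequency comparison points at.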

If this sounds odd, turn over to the next page. Intel is going to start tripping over itself with its new product lines, and we’ll do the math.

107 Comments

vFunct - Tuesday, July 30, 2019 - link

Why did they not go with HDMI 2.1 and PCIe 4.0?

bug77 - Tuesday, July 30, 2019 - link

AMD's newly released 5700(XT) doesn't support HDMI 2.1, it's not surprising Intel doesn't support it either.

And PCIe 4.0 would be a power hog.

ToTTenTranz - Wednesday, July 31, 2019 - link

The 5700 cards don't support VirtuaLink either, despite AMD belonging to the consortium since the beginning like nvidia and the RTX cards having it for about a year.

First generation Navi cards are just very, very late.

tipoo - Tuesday, July 30, 2019 - link

PCI-E 4 currently needs chipset fans on desktop parts, the power needed isn't suitable for 15-28W mobile yet.

DanNeely - Tuesday, July 30, 2019 - link

Because Intel product releases have been a mess since the 10nm trainwreck began. Icelake was originally supposed to be out a few years ago. I suspect PCIe4 is stuck on whatever upcoming design was supposed to be the 7nm launch part.

HDMI 2.1 is probably even farther down the pipeline; NVidia and AMD don't have 2.1 support on their discrete GPUs yet. Intel has historically been a lagging supporter of new standards on their IGPs, so that's probably a few years out.

nathanddrews - Tuesday, July 30, 2019 - link

This whole argument that "real world" benchmarks equate to "most used" is rather dumb anyway. We don't need benchmarks to tell us how much faster Chrome opens Reddit, because the answer is always the same: fast enough to not matter. We need benchmarks at the fringes for those reasons brought up in the room: measuring extremes in single/multi threaded scenarios, power usage, memory speeds; finding weaknesses in hardware and finding flaws in software; and taking a large enough sample to be meaningful across the board.

Intel wants to eat its cake and still have it - to be fair - who doesn't? But let's get real, AMD is kicking some major butt right now and Intel has to spin it any way they can. What's funny is that the BEST arguments that I've heard from reviewers to go AMD actually have nothing to do with performance, but rather the Zen platform as a whole in terms of features, upgradeability, and cost.

I say this as a total Intel shill, too. The only AMD systems running in my house right now are game consoles. All my PCs/laptops are Intel.

twotwotwo - Tuesday, July 30, 2019 - link

Interesting to read what Intel suggested some of their arguments in the server space would be: lower TCO like the old Microsoft argument against Linux, and having to revalidate all your stuff to use an AMD platform. Some quotes (from a story in their internal newsletter; the full thing is floating around out there, but couldn't immediately find):

https://www.techspot.com/news/80683-intel-internal...

I mean, they'll be fine long term, but trying to change the topic from straightforward bang-for-buck, benchmark results, etc. is an approach you only take in a...certain sort of situation.

eek2121 - Wednesday, July 31, 2019 - link

Unfortunately, your average IT infrastructure guy no longer knows how fast a Xeon Platinum 8168 is vs an AMD EPYC 7601. They just ask OEMs like Dell or HP to sell them a solution. I've even seen cases where faster solutions were replaced with slower solutions because they were more expensive and the numbers looked bigger. It turns out that the numbers that looked bigger were not the numbers that they should have been paying attention to.

One company I worked at almost bought a $100,000 (yeah I know, small change, but it was a small company) pre-built system. We, as software developers, talked them into letting us handle it instead. We knew a lot about hardware and as a result? We spent around $15,000 in hardware costs. Yes there were labor costs involved in setting everything up, but it only took about 2 weeks for 4 guys, 2 of which were juniors. Had we gone with the blade system, there would have been extensive training needed, which would have cost about the same in labor. Our solution was fully redundant, a hell of a lot faster (the blade system used hardware that was slower than our solution, and it was also a proprietary system that we would be locked into, so there was an additional service contract that cost $$$ and would have to be signed). During my entire time there, we had very few issues with the solution we built outside the occasional hard drive (2 drives in 4 years IIRC) dying and having to pop it out, pop in a new one, and let the RAID rebuild. Zero downtime. In addition, our wifi solution allowed roaming all over a giant building without dropping the signal. Speeds were lightning fast and QoS allowed us to keep someone from taking up too much bandwidth on the guest network. The entire setup worked like a dream.

We also wanted to use a different setup for the phone system, but they opted to work with a vendor instead. They paid a lot of money for that, and constantly had issues. The administration software was buggy, sometimes the entire system would go down, even adding a user would take down the entire system until things were updated. IIRC after I left they finally switched to the system we wanted to use and had no issues after that.

wrkingclass_hero - Tuesday, July 30, 2019 - link

Uh, I would not be putting cobalt anywhere near my mouth

PeachNCream - Tuesday, July 30, 2019 - link

Real men aren't scared of a few toxic chemicals entering their digestive systems! Clearly you and I are not real men, but we now have a role model to emulate over the course of our soon-to-be-shortened-by-cancer lives.