Examining Intel's Ice Lake Processors: Taking a Bite of the Sunny Cove Microarchitecture

by Dr. Ian Cutress on July 30, 2019 9:30 AM EST

Posted in: CPUs, Intel, 10nm, Microarchitecture, Ice Lake, Project Athena, Sunny Cove, Gen11

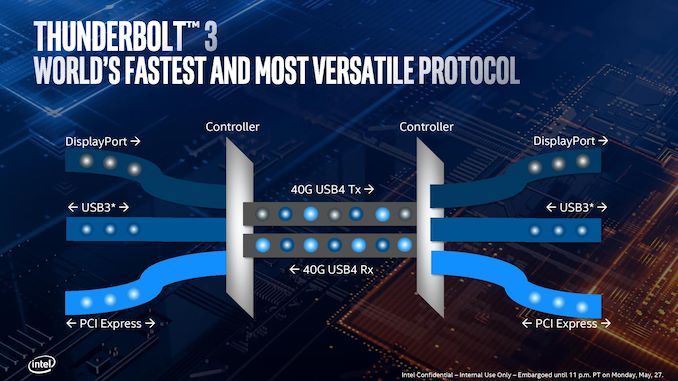

Thunderbolt 3: Now on the CPU*

One of the big failures of the Thunderbolt technology since its inception has been its lack of adoption beyond the Apple ecosystem. In order to use it, both the host and the device needed TB controllers supplied by Intel. It wasn’t until Thunderbolt 3 moved to the USB Type-C connector, and offered enough bandwidth to support external graphics solutions, that the number of available devices started to pick up. The issue remains that both the host and the device need an expensive Intel-only controller, but the ecosystem was starting to become more receptive to its uses.

With Ice Lake, that gets another step easier.

Rather than bundle TB3 support into the chipset, Intel has integrated it on the die of Ice Lake, and it takes up a sizable amount of space. Each Ice Lake CPU can support up to four TB3 ports, with each TB3 port getting a full PCIe 3.0 x4 root complex link internally for full bandwidth. (For those keeping count, it means Ice Lake technically has 32 PCIe 3.0 lanes total).
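To make the lane count concrete, here is a minimal Python sketch of the arithmetic. The port count, lanes per port, and the 32-lane total are the article's figures; the 8 GT/s rate and 128b/130b encoding are the standard PCIe 3.0 signalling parameters. The constant and function names are illustrative only, and the result is raw link bandwidth before any protocol overhead.

```python
# Figures quoted in the article.
TB3_PORTS = 4            # TB3 ports supported per Ice Lake CPU
LANES_PER_PORT = 4       # each port gets a PCIe 3.0 x4 link
TOTAL_LANES_QUOTED = 32  # total PCIe 3.0 lanes quoted for Ice Lake

# Standard PCIe 3.0 signalling parameters.
TRANSFERS_PER_SECOND = 8e9   # 8 GT/s per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_bandwidth_gbps() -> float:
    """Raw usable bandwidth of one PCIe 3.0 lane, in Gb/s."""
    return TRANSFERS_PER_SECOND * ENCODING_EFFICIENCY / 1e9


def port_bandwidth_gbps(lanes: int = LANES_PER_PORT) -> float:
    """Raw usable PCIe bandwidth behind one TB3 port, in Gb/s."""
    return lanes * lane_bandwidth_gbps()


# Lanes dedicated to TB3, and what is left of the quoted total.
tb3_lanes = TB3_PORTS * LANES_PER_PORT
other_lanes = TOTAL_LANES_QUOTED - tb3_lanes

if __name__ == "__main__":
    print(f"TB3 lanes: {tb3_lanes}, remaining lanes: {other_lanes}")
    print(f"Per-port PCIe bandwidth: {port_bandwidth_gbps():.1f} Gb/s")
```

Under these assumptions, 16 of the 32 quoted lanes sit behind the TB3 ports, and each port has roughly 31.5 Gb/s of raw PCIe bandwidth available to it.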

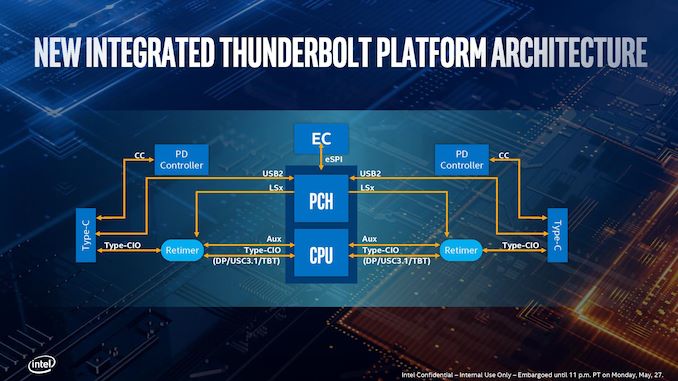

Intel has made it so each side of the CPU can support two TB3 links direct from the processor. There is still some communication back and forth with the chipset (PCH), as the Type-C ports need to have USB modes implemented. It’s worth noting that TB3 can’t be directly used out of the box, however.

How many of those four ports actually make it into shipping designs will depend heavily on the OEM. It’s not as simple as just having the CPU in the system: other chips (redrivers) are needed to support the USB Type-C connector. Power delivery also requires extra circuitry, which costs money. So while Intel advertises TB3 support on Ice Lake, it still needs something extra from the OEMs. Intel states that a retimer for the integrated solution is only half the size of the ones needed with external TB3 chips, and that each retimer supports two TB3 ports, halving the number of retimers needed.
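The retimer claim can be sketched as simple arithmetic. This is a rough illustration only: the two-ports-per-retimer figure is Intel's, while the one-retimer-per-port figure for external controllers is an assumption inferred from the "halving" statement, and `retimers_needed` is a hypothetical helper name.

```python
import math


def retimers_needed(ports: int, ports_per_retimer: int) -> int:
    """Number of retimer chips required to serve the given number of ports."""
    if ports < 0 or ports_per_retimer < 1:
        raise ValueError("ports must be >= 0 and ports_per_retimer >= 1")
    return math.ceil(ports / ports_per_retimer)


# Assumption: an external-controller design needs one retimer per port.
external = retimers_needed(ports=4, ports_per_retimer=1)

# Intel's figure: the integrated design serves two ports per retimer.
integrated = retimers_needed(ports=4, ports_per_retimer=2)

if __name__ == "__main__":
    print(f"External controller design: {external} retimers")
    print(f"Integrated Ice Lake design: {integrated} retimers")
```

Note that the saving only fully applies to even port counts: a three-port design would still need two retimers under the same assumption.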

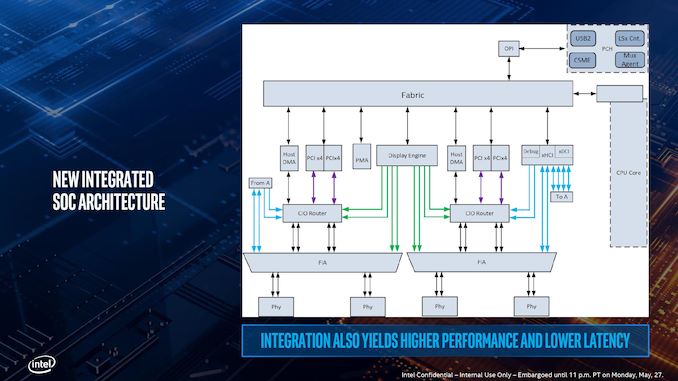

Here’s a more detailed schematic, showing the complexities of integrating TB3 into a chip, with the four PCIe x4 complexes shown moving out to each of the individual PHYs at the bottom, and connected back into the main SoC interconnect fabric. The display engine also has to control what mode the TB3 ports are in, and what signals are being sent. Wake up times for TB3 in this fashion, according to Intel, are actually slightly longer compared to a discrete controller implementation, because the SoC is so tightly integrated. This sounds somewhat counterintuitive, given that the requisite hardware blocks are now closer together, but it all comes down to power domains: in a separate chip design, each segment has a separate domain with individual power up/down states. In an integrated SoC, Intel has unified the power domains to reduce complexity and die area, which means that more careful management is required and latency ultimately increases a little.

The other upside to the tightly coupled integration is that Intel stated that this method of TB3 is a lot more power efficient than current external chip implementations. However, they wouldn’t comment on the exact power draw of the TB3 block on the chip as it corresponds to the full TDP of the design, especially in relation to localized thermal density (Intel was initially very confused by my question on this, ultimately saying that the power per bit was lower compared to the external chip, so overall system power was lower; they seemed more interested in discussing system power over chip power). Intel did state that the difference between an idle and a fully used link was 300 mW, which suggests that if all four links are in play, we’re looking at 1.2 W. When asked, Intel stated that there are three different power delivery domains within the TB3 block depending on the logic, that the system uses integrated voltage regulation, and that the TB3 region has an internal power rail that is shared with some of the internal logic of the CPU. This has implications when it comes to time-to-wake and idle power, but Intel believes it has found a good balance.
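The 1.2 W estimate follows directly from Intel's per-link figure. A minimal sketch, assuming the 300 mW idle-to-active difference scales linearly with the number of busy links (which Intel did not confirm). The TDP value in the second helper is a hypothetical input for illustration, not a number from this page.

```python
LINK_DELTA_MW = 300  # Intel's stated idle-to-fully-used difference per link
MAX_LINKS = 4        # TB3 links supported per Ice Lake CPU


def tb3_active_delta_mw(active_links: int, delta_mw: int = LINK_DELTA_MW) -> int:
    """Extra power over idle, in mW, assuming linear scaling per active link."""
    if not 0 <= active_links <= MAX_LINKS:
        raise ValueError(f"active_links must be between 0 and {MAX_LINKS}")
    return active_links * delta_mw


def share_of_tdp(delta_mw: int, tdp_w: float) -> float:
    """Fraction of a given TDP budget that the TB3 delta would represent."""
    return (delta_mw / 1000) / tdp_w


if __name__ == "__main__":
    all_links_mw = tb3_active_delta_mw(MAX_LINKS)
    print(f"All four links active: {all_links_mw / 1000} W over idle")
    # Hypothetical 15 W budget, used only to show the scale of the number.
    print(f"Share of a 15 W budget: {share_of_tdp(all_links_mw, 15.0):.0%}")
```

This only covers the difference between idle and active links; the idle power of the TB3 block itself remains unknown, since Intel declined to give a figure.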

Regarding USB4 support, Intel stated that it is in the design, and they are USB4 compliant at this point, but there might be changes and/or bugs which stop it from being completely certified further down the line. Intel said that it ultimately comes down to the device side of the specification, although they have put as much in as they were able given the time constraints of the design. They hope to be certified, but it’s not a guarantee yet.

Depending on who you speak to, this isn’t Intel’s first crack at putting TB3 into CPU silicon: the chip that Intel never wants to talk about, Cannon Lake, supposedly also had an early TB3 design built inside that never worked. But Intel is confident in its Ice Lake implementation, especially with supporting four ports. I wouldn’t be surprised if this comes to desktop when Intel releases its first generation 10nm desktop processors.

*The asterisk in the title of this page is because you still need external hardware in order to enable TB3.

107 Comments


s.yu - Thursday, August 1, 2019 - link

"Charge 4+hrs in 30 mins"...Ok, I think "4+hrs battery life under 30 min. charging" sounds better, or just Intel's version.

29a - Thursday, August 1, 2019 - link

"Should Intel go ahead with the naming scheme, it is going to offer a cluster of mixed messages."

I believe the word you are looking for there is clusterfuck.

ifThenError - Friday, August 2, 2019 - link

Too bad the article doesn't state any further details about the HEVC encoders. Would be interesting to hear if Intel only improved the speed or if they also worked on compression and quality.

I bought a Gemini Lake system last year to try the encoding in hardware and have had very mixed feelings about Intel's Quick Sync since. The encoding speed is impressive with the last generation already, and all the while CPU and GPU are practically in idle. On the downside the image quality and compression ratio are highly underwhelming and not even near usable for “content creation” or mere transcoding. It suffices for video calls at best. Even encoding h264 in software reaches far better compression efficiency while being not much slower on a low end CPU.

IIRC Intel promised some “quality mode” for their upcoming encoders, but I can't remember if that was for the gen11 graphics.

intel_gene - Friday, August 2, 2019 - link

There is some information on GNA available. It is accessed through Intel's OpenVINO.

https://docs.openvinotoolkit.org/latest/_docs_IE_D...

https://github.com/opencv/dldt/tree/2019/inference...

There is some background information here:

https://sigport.org/sites/default/files/docs/Poste...

urbanman2004 - Friday, August 2, 2019 - link

I wonder what happens to Project Athena if none of the products released by the vendor partners/OEMs meet the criteria that Intel's established.

GreenReaper - Saturday, August 3, 2019 - link

Plagues of snakes, owls, eagles, Asari, etc.

gambita - Monday, August 5, 2019 - link

nice of you to do intels bidding and promote and help their pr

howtomakedeliciousfood - Thursday, August 8, 2019 - link

www.howtomakedeliciousfood.com

HikariWS - Sunday, August 11, 2019 - link

These improvements on serial performance are great, it's awesome to have bigger buffers and more execution units. But in clock area it seems to be a big drawback.

I'm sure clock issues is the reason we won't have any Ice Lake on desktop, and Comet Lake on laptops on the same generation. But, why no 6C Ice Lake? This opened a but alert sign on me.

But what also called my attention is its IGP power. Most mid range and above laptops are using nVidia GPU. That's sad for us who want performance and won't play on it, because mid laptops are already all coming with nVidia GPU which makes them more expensive.

Now I hope to have these segments using Intel IGP and not have nVidia GPU anymore. Good to us on having less money wasted on hardware we don't need, bad for nVidia.

nils_ - Wednesday, August 14, 2019 - link

Can you please stop eating the chips? Yield must be bad enough as it is!