Spotted at Computex: An AMD EPYC-Based System with 108 Intel Ruler SSDs

by Anton Shilov on June 6, 2019 11:00 AM EST. Posted in: Servers, AMD, Trade Shows, EPYC, Computex 2019, EchoStreams, FlacheSAN

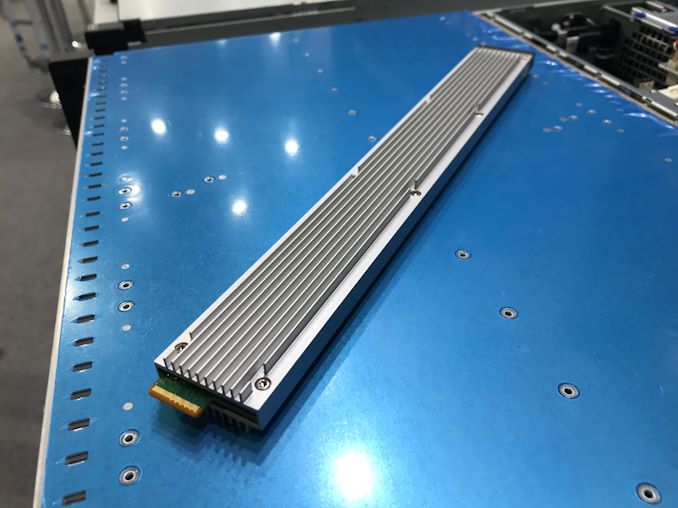

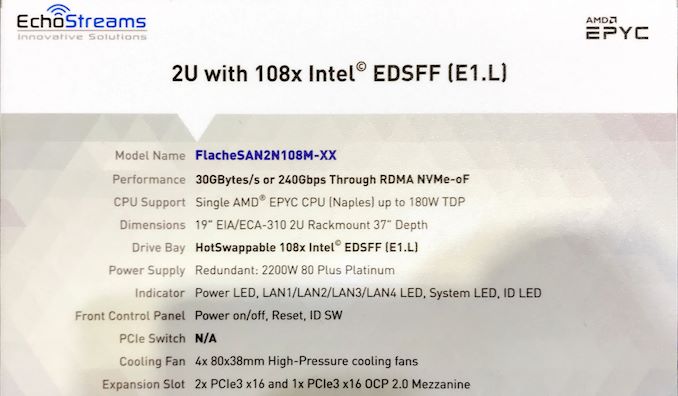

Intel’s ‘ruler’ SSD form factor is meant to maximize the density of solid-state storage and to improve Intel’s competitive position in two markets: storage and compute. As it turns out, AMD’s server platform can also benefit from Intel’s EDSFF E1.L drives, thanks to the number of PCIe lanes its processors support. In fact, at Computex we spotted one of the first AMD EPYC-based servers carrying 108 E1.L ‘ruler’ SSDs.

EchoStreams’s FlacheSAN2N108N-XX is a 2U machine based on AMD’s EPYC ‘Naples’ processor with up to 32 cores, accompanied by 16 DDR4 memory slots for up to 2 TB of RAM, several M.2 and PCIe slots for caching SSDs or accelerators, six Microsemi PCIe switches, and so on. The machine’s key feature is its support for 108 hot-swappable Intel E1.L SSDs and the storage capacity they provide. At present, Intel offers DC P4500-series ruler SSDs with capacities of up to 8 TB, so the server can hold up to 864 TB in total.
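The 864 TB figure is simply the bay count multiplied by the largest per-drive capacity. The minimal Python sketch below makes that explicit; the constant and function names are illustrative only, and the result is raw decimal capacity before any RAID, erasure-coding, or filesystem overhead.

```python
# Raw capacity of a fully populated FlacheSAN2N108N-XX, using the figures
# quoted in the article: 108 E1.L bays and DC P4500 drives of up to 8 TB.
DRIVE_BAYS = 108
MAX_DRIVE_TB = 8  # largest DC P4500 'ruler' capacity cited above


def raw_capacity_tb(bays: int, drive_tb: int) -> int:
    """Total raw capacity in decimal TB, before RAID/filesystem overhead."""
    return bays * drive_tb


total = raw_capacity_tb(DRIVE_BAYS, MAX_DRIVE_TB)
print(f"{total} TB raw")  # 864 TB raw
```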

When it comes to performance, the FlacheSAN2N108N-XX offers up to 30 GB/s (240 Gbps) of throughput via NVMe-oF over RDMA. It supports up to four NICs, though the company does not currently list exact models.
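For readers moving between storage and network units, here is a minimal Python sketch of the 30 GB/s to 240 Gbps conversion. It assumes decimal units and ignores protocol overhead. Because EchoStreams does not list NIC models, the even per-NIC split and the 100 GbE link speed used below are hypothetical illustrations, not the vendor's configuration.

```python
import math

# Unit conversion for the quoted NVMe-oF throughput. Assumes decimal (SI)
# units, as storage and networking specs normally use, and ignores protocol
# overhead, so real-world link requirements would be somewhat higher.
BITS_PER_BYTE = 8


def gbytes_to_gbits(gbytes_per_s: float) -> float:
    """Convert GB/s to Gb/s."""
    return gbytes_per_s * BITS_PER_BYTE


def per_nic_gbits(total_gbits: float, nics: int) -> float:
    """Traffic per NIC, assuming a hypothetical perfectly even split."""
    return total_gbits / nics


def links_needed(total_gbits: float, link_gbits: float) -> int:
    """Minimum number of links of a given (hypothetical) speed for the load."""
    return math.ceil(total_gbits / link_gbits)


total = gbytes_to_gbits(30.0)        # 30 GB/s -> 240 Gb/s
print(total)                         # 240.0
print(per_nic_gbits(total, 4))       # 60.0 per NIC across four NICs
print(links_needed(total, 100.0))    # 3 x 100 GbE ports at minimum
```

Under these assumptions, each of four NICs would have to sustain about 60 Gbps, more than a 50 GbE port can carry, so 100 GbE-class adapters would likely be needed to expose the full figure.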

Intel developed its EDSFF (E1.L and E1.S), aka ‘ruler’, PCIe SSDs in a bid to increase NAND flash storage density in servers and to make drives more thermally efficient. Meanwhile, since Intel’s current-generation Xeon Scalable CPUs have 48 PCIe lanes, whereas AMD’s existing EPYC processors offer 128, AMD’s platforms can actually take better advantage of such SSDs than Intel’s own platforms.

The FlacheSAN2N108N-XX is already listed on EchoStreams’s website, so expect it to become available shortly. Pricing will depend on the exact configuration.

36 Comments

ilt24 - Thursday, June 6, 2019

@nextgentech: "108??? Sure looks like 72 to me." 72 in the front and 36 in the back.

cb88 - Thursday, June 6, 2019

If you have a gigantic dataset that absolutely needs maximum random I/O performance... it absolutely makes sense. It would also probably make sense as a storage node in a supercomputer, or perhaps as a cache for frequently reloaded data that doesn't need to be memory-resident constantly but is accessed frequently enough that it can't be pushed out to cold storage.

msroadkill612 - Saturday, June 8, 2019

"as a cache for frequently reloaded data that doesn't need to be memory-resident constantly but is accessed frequently enough that it can't be pushed out to cold storage."

Yes, this is what needs bearing in mind, I suspect. It is almost as good as RAM if it is not being rewritten all the time. Some categories of use can fry them pretty fast.

I don't think you meant cold storage, though; that is the extreme bottom tier of storage, the warehouse/attic.

schujj07 - Friday, June 7, 2019

"This architecture makes no sense for a 2U box. Let's assume 2M hours MTBF per drive; now you've got 72 drives, so your effective MTBF comes all the way down to a mere 27.7K hours, not to mention there is no way to take advantage of the performance of all 72 of those drives, resulting in a lot of expensive drives with powerful NVMe ASICs going to waste."

27.7K hours still means more than 3 years for your MTBF. That is going to be far better than what you would get from that many spinning disks in a SAN. Also, you can have two of these boxes serve out vSAN storage to your other hosts. With 30 GB/s of storage throughput, you would need 400 Gb Ethernet to fully take advantage of it.

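The MTBF arithmetic quoted in the thread above can be reproduced with a short Python sketch. It assumes, as the quoted comment implicitly does, that drive failures are independent and occur at a constant rate, so an array of N drives fails N times as often as a single drive. The result is the mean time to the first drive failure, not to data loss, which redundancy would push much further out. The 108-drive case is included because that is the bay count of the FlacheSAN2N108N-XX; the function name is illustrative only.

```python
# Mean time to the first drive failure in an array of N identical drives,
# assuming independent failures at a constant rate (exponential model):
# the array's failure rate is N times one drive's, so MTBF divides by N.
HOURS_PER_YEAR = 24 * 365  # 8760, ignoring leap years


def array_mtbf_hours(drive_mtbf_hours: float, drives: int) -> float:
    """Expected hours until the first of `drives` drives fails."""
    return drive_mtbf_hours / drives


for n in (72, 108):
    hours = array_mtbf_hours(2_000_000, n)
    print(f"{n} drives: {hours:,.0f} h = {hours / HOURS_PER_YEAR:.2f} years")
```

With these assumptions, 72 drives give about 27.8K hours (a little over three years), matching the comment, while a fully populated 108-bay chassis comes to roughly 18.5K hours, or about 2.1 years.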
urbanman2004 - Thursday, June 6, 2019

I'm sure the AMD EPYC server and a few of those ruler SSDs are beyond my pay grade.

msroadkill612 - Saturday, June 8, 2019

Maybe not a bit later, though; it is essentially just a form factor. Primarily, it seems to be about cooling increasingly powerful NAND storage devices (8 TB isn't radically bigger than M.2 products, considering their length), which is already a throttling issue on consumer desktops.