The NVIDIA GeForce GTX 1650 Review, Feat. Zotac: Fighting Brute Force With Power Efficiency

by Ryan Smith & Nate Oh on May 3, 2019 10:15 AM EST

Battlefield 1 (DX11)

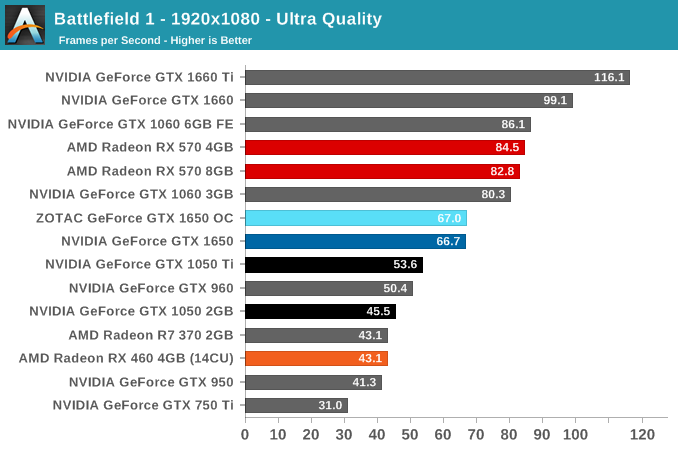

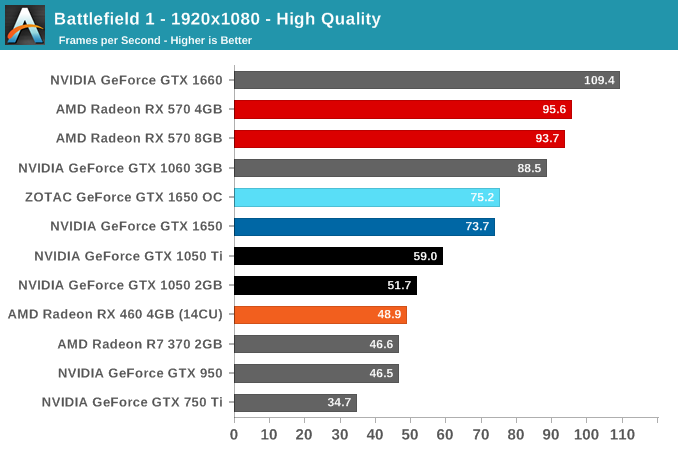

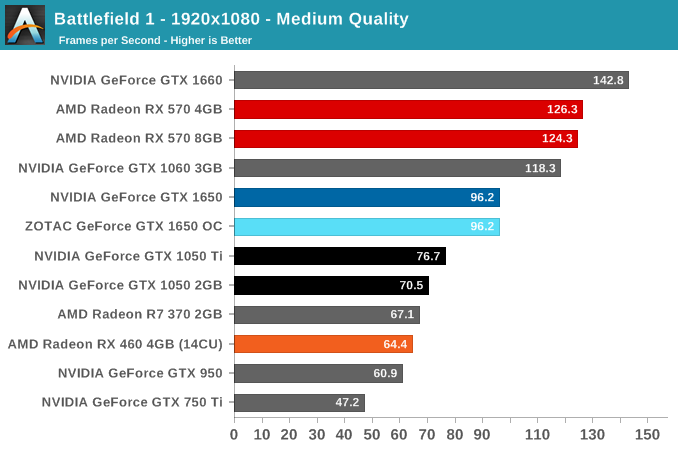

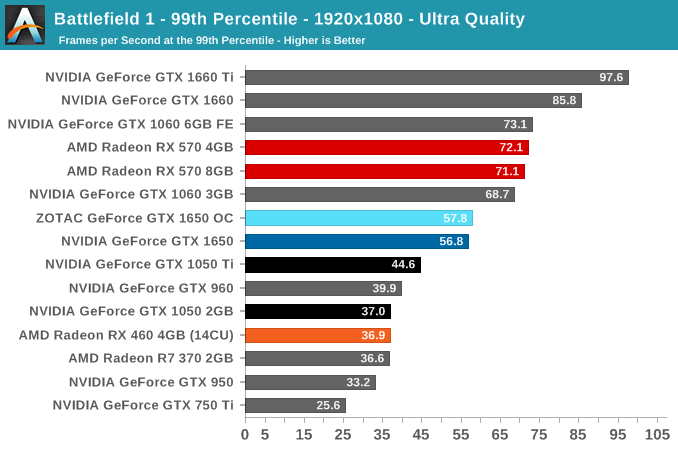

Battlefield 1 returns from the 2017 benchmark suite with a bang, as DICE brought gamers the long-awaited AAA World War 1 shooter a little over a year ago. With detailed maps, environmental effects, and pacy combat, Battlefield 1 provides a generally well-optimized yet demanding graphics workload. The next Battlefield game from DICE, Battlefield V, completes the nostalgia circuit with a return to World War 2, but more importantly for us, it is one of the flagship titles for GeForce RTX real-time ray tracing.

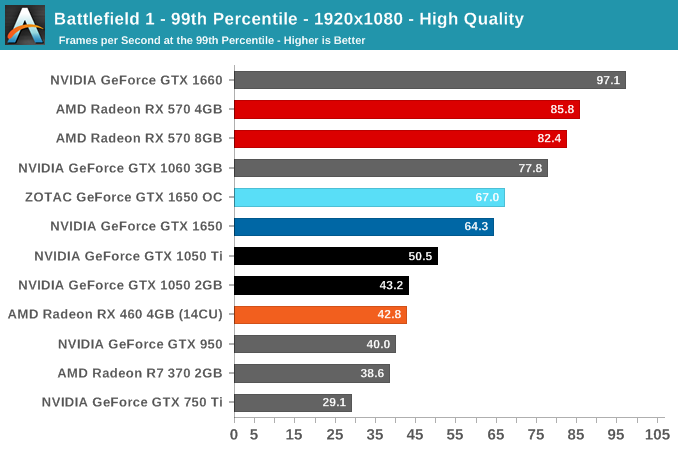

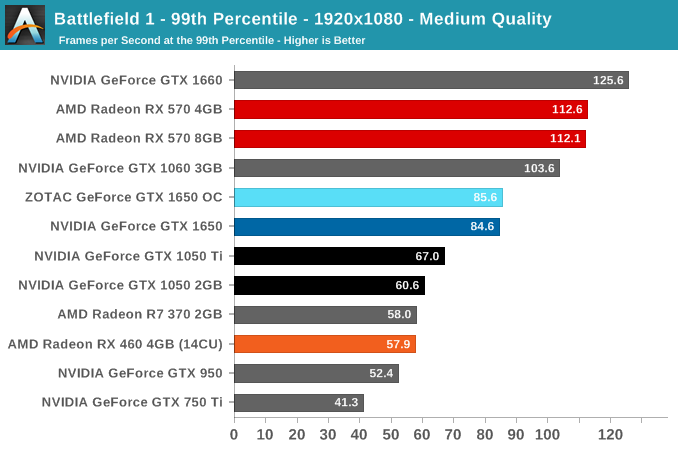

We use the Ultra, High, and Medium presets with no alterations. As these benchmarks are from single player mode, our rule of thumb with multiplayer performance still applies: multiplayer framerates generally dip to half our single player framerates. Battlefield 1 also supports HDR (HDR10, Dolby Vision).

Without a direct competitor in the current generation, the GTX 1650's intended position is somewhat vague, outside of iterating on Pascal's GTX 1050 variants. Looking back to Pascal's line-up, the GTX 1650 splits the difference between the GTX 1050 Ti and GTX 1060 3GB, and sits far from the GTX 1660.

Compared to the RX 570, though, the GTX 1650 is handily outpaced, and Battlefield 1 is where the GTX 1650 is the furthest behind. That being said, the RX 570 wasn't originally in this segment, with price being the common denominator. The RX 460, meanwhile, is well-outclassed, and the additional 2 CUs in the RX 560 would be unlikely to significantly narrow the gap.

As for the ZOTAC card, the 30 MHz makes an unnoticeable difference in real-world terms.

126 Comments


Yojimbo - Saturday, May 4, 2019 - link

That's true, and I noted that in my original post. But the important thing is that the price/performance comparison should consider the total cost of ownership of the card. Ultimately, the value of any particular increment in performance is a matter of personal preference, though it is possible for someone to make a poor choice because he doesn't understand the situation well.

dmammar - Friday, May 3, 2019 - link

This power consumption electricity savings debate has gone on too long. The math is not hard - the annual electricity cost is equal to (Watts / 1,000) x (hours used per day) x (365 days / year) x (cost per kWh).

In my area, electricity costs $0.115/kWh so a rather excessive (for me) 3 hours of gaming every day of the year means that an extra 100W power consumption equals only $12.50 higher electricity cost every year.

So for me, the electricity cost of the higher power consumption isn't even remotely important. I think most people are in the same boat, but run the numbers yourself and make your own decision. The only people who should care either live somewhere with expensive electricity and/or game way too much, in which case they should probably be using a better GPU.
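The formula in the comment above can be sketched in a few lines of Python for anyone who wants to run their own numbers. The function and parameter names are illustrative assumptions; the inputs (100 W of extra draw, 3 hours a day, $0.115/kWh) are the commenter's own figures.

```python
def annual_electricity_cost(watts, hours_per_day, cost_per_kwh, days_per_year=365):
    """Annual cost in dollars of drawing `watts` for `hours_per_day` every day.

    Mirrors the comment's formula:
    (Watts / 1,000) x (hours per day) x (365 days / year) x (cost per kWh)
    """
    kwh_per_year = (watts / 1000) * hours_per_day * days_per_year
    return kwh_per_year * cost_per_kwh


# The commenter's example: an extra 100 W, 3 hours of gaming a day, $0.115/kWh.
extra_cost = annual_electricity_cost(watts=100, hours_per_day=3, cost_per_kwh=0.115)
print(f"${extra_cost:.2f} per year")
```

With those inputs the result is about $12.59 a year, in line with the roughly $12.50 quoted in the comment.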

Yojimbo - Saturday, May 4, 2019 - link

How is $12.50 a year not remotely important? Would you say a card costing $25 less is a big deal? If one costs $150 and the other is $175 you would not consider that to be at all a consideration to your purchase?

OTG - Saturday, May 4, 2019 - link

How IS $12.50/year even worth thinking about?

That's less than an hour of work for most people, it's like 3 cents a day, you could pay for it by finding pennies on the sidewalk!

PLUS you get much better performance! It's a faster card for a completely meaningless power increase.

If your PSU doesn't have a six pin, get the 1650 I guess, otherwise the price is kinda silly.

Yojimbo - Saturday, May 4, 2019 - link

I like the way you think. Whatever you buy, just buy it from me for $12.50 more than you could otherwise get it, because it's just not worth thinking about. What you say would be entirely reasonable if it didn't apply to every single purchase you make. I mean if a company comes along and says "Come on, buy this pen for $20. You're only going to buy one pen this year." would you do it? Do you ask the people who are saying NVIDIA's new cards are too expensive because they are $20 more expensive than the previous generation equivalents "How is $10 a year even worth thinking about?"

Hey, if you are willing to throw money out the window if it is for electricity but not for anything else that's up to you, but you are making unreasonable decisions that harm yourself.

jardows2 - Monday, May 6, 2019 - link

Using your logic, why don't we all just save bunches of money by using Intel Integrated graphics. Since the money we save on power usage is all that matters, we might as well make sure we are only using Mobile CPUs as well.

What you're paying for here is the improved gaming experience provided by the extra performance of the RX570. For many people, the real-world improvement in the gaming experience is worth the relatively low cost of energy usage. Realistically, the only reason to get one of these over the 570 is if your power supply cannot handle the RX570.

Sushisamurai - Tuesday, May 7, 2019 - link

Holy crap man! The amount of electricity I spent to read this comment thread and the amount of keyboard clicks that've been consumed from my 70 million clicks from my mechanical keyboard from my total cost of ownership was totally worth reading and replying to this.

OTG - Tuesday, May 7, 2019 - link

If you're pinching pennies that hard, you're probably better off not spending 4 hours a day gaming.

Those games cost money, and you know what they say about time!

Maybe even set the card mining when you're away, there are profits to be had even now.

WarlockOfOz - Saturday, May 4, 2019 - link

Anyone calculating the total ownership cost of a video card in cents per day should also consider that the slightly higher performance of the 570 may allow it to last a few more months before justifying replacement, allowing the purchase price to be spread over a longer period.

Yojimbo - Sunday, May 5, 2019 - link

"Anyone calculating the total ownership cost of a video card in cents per day should also consider that the slightly higher performance of the 570 may allow it to last a few more months before justifying replacement, allowing the purchase price to be spread over a longer period."

Sure. Not that likely, though, because the difference isn't that great so what is more likely to affect the timing of upgrade is the card that becomes available. But at the moment, NVIDIA has a big gap between the 1650 and the 1660 so there aren't two more-efficient cards that bracket the 570 well from a price standpoint.

Of course, some people apparently don't care about $25 at all so I don't understand why they should care about $25 more than that (for a total of 50) such that it would prevent them from getting a 1660, which has a performance that blows the 570 out of the water and would be a lot more likely to play a factor in the timing of a future upgrade.
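The total-cost-of-ownership argument running through this thread can be sketched the same way. This is a minimal illustration, not a claim about any real card: the $150 and $175 prices are the hypothetical ones from the thread, the 100 W gap, 3 hours a day, and $0.115/kWh are dmammar's figures, and the two-year ownership period and all function names are assumptions made here.

```python
def total_cost_of_ownership(price, extra_watts, hours_per_day, cost_per_kwh, years):
    """Purchase price plus the electricity cost of `extra_watts` over `years`."""
    energy_kwh = (extra_watts / 1000) * hours_per_day * 365 * years
    return price + energy_kwh * cost_per_kwh


def cost_per_day(price, extra_watts, hours_per_day, cost_per_kwh, years):
    """Total cost of ownership spread evenly over every day the card is owned."""
    tco = total_cost_of_ownership(price, extra_watts, hours_per_day, cost_per_kwh, years)
    return tco / (365 * years)


# Hypothetical cards: only the difference in power draw is modeled, so the
# more efficient card is given 0 extra watts and the other 100 extra watts.
YEARS = 2  # assumed ownership period
efficient_card = total_cost_of_ownership(175, 0, 3, 0.115, YEARS)
hungrier_card = total_cost_of_ownership(150, 100, 3, 0.115, YEARS)

print(f"Efficient card over {YEARS} years: ${efficient_card:.2f}")
print(f"Hungrier card over {YEARS} years:  ${hungrier_card:.2f}")
```

Under these assumptions the $25 price gap is roughly cancelled by two years of extra electricity. Changing `years`, the hours played, or the local rate shifts the balance, which is the point several commenters make from different directions.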