The NVIDIA GeForce GTX 1650 Review, Feat. Zotac: Fighting Brute Force With Power Efficiency

by Ryan Smith & Nate Oh on May 3, 2019 10:15 AM EST

Power, Temperature, and Noise

As always, we'll take a look at power, temperature, and noise of the GTX 1650, though the 'mini' design shouldn't hold any surprises.

GeForce Video Card Average Clockspeeds

| Game | GTX 1650 | ZOTAC GTX 1650 OC Gaming |
|---|---|---|
| Boost Clock | 1665MHz | 1695MHz |
| Battlefield 1 | 1855MHz | 1880MHz |
| Far Cry 5 | 1847MHz | 1886MHz |
| Ashes: Escalation | 1826MHz | 1829MHz |
| Wolfenstein II | 1860MHz | 1905MHz |
| Final Fantasy XV | 1867MHz | 1837MHz |
| GTA V | 1886MHz | 1905MHz |
| Shadow of War | 1857MHz | 1863MHz |
| F1 2018 | 1855MHz | 1875MHz |
| Total War: Warhammer II | 1865MHz | 1902MHz |
| FurMark | 1629MHz | 1672MHz |

Power Consumption

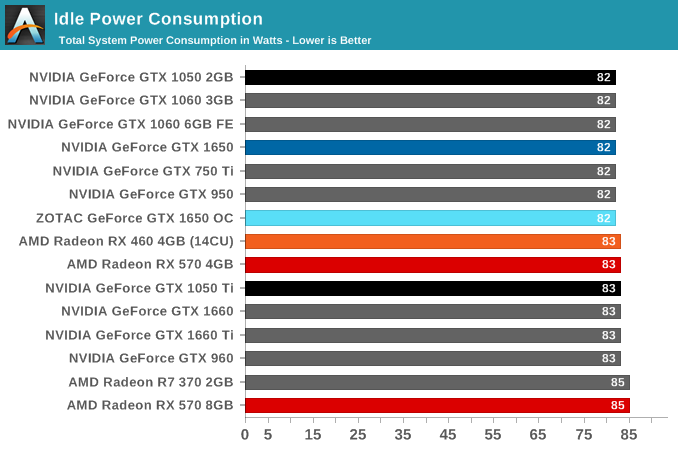

As for idle power consumption, the GTX 1650 falls in line with everything else, with total system power consumption reaching 83W. With contemporary desktop cards, idle power has reached the point where nothing short of low-level testing can expose what these cards are drawing.

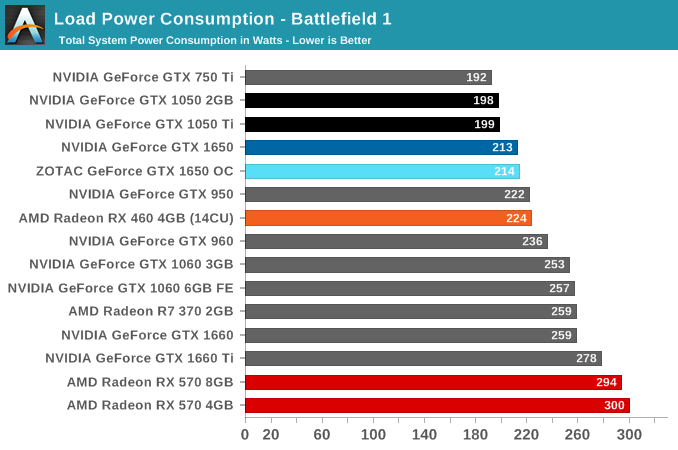

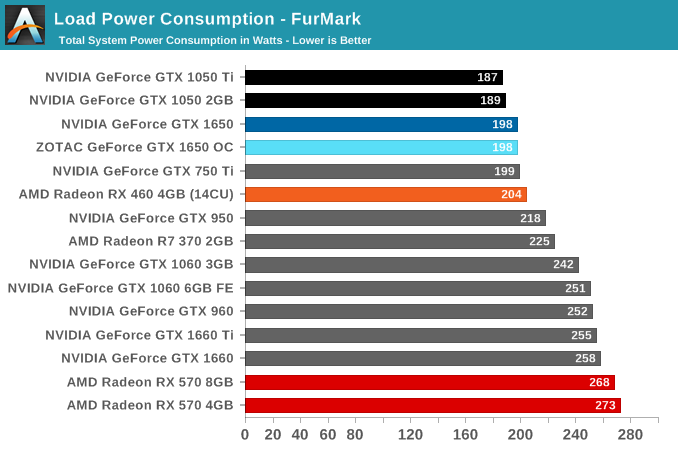

Meanwhile at full load, the power consumption disparity between the RX 570 and GTX 1650 is one of the key factors in a direct comparison. Better performance, though not in every case, can be had for an additional ~75W at the wall, which maps well to the 150W TBP of the RX 570 versus the 75W slot-power-only GTX 1650. The greater cooling requirements of a higher-power card, however, do mean forgoing the small form factor.
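To put that ~75W gap in running-cost terms, here is a rough back-of-the-envelope sketch. Only the 75W figure comes from our measurements; the electricity rate, daily gaming hours, and two-year ownership period are illustrative assumptions, and the helper name `extra_energy_cost` is ours.

```python
def extra_energy_cost(extra_watts, hours_per_day, days, price_per_kwh):
    """Cost of drawing `extra_watts` more at the wall for the given usage."""
    extra_kwh = extra_watts / 1000 * hours_per_day * days
    return extra_kwh * price_per_kwh


EXTRA_WATTS = 75       # approximate wall-power gap between RX 570 and GTX 1650
PRICE_PER_KWH = 0.13   # ASSUMPTION: electricity rate in USD; varies widely by region
DAYS = 2 * 365         # ASSUMPTION: two-year ownership period

for hours in (2, 6):   # ASSUMPTION: light vs. heavy daily gaming load
    cost = extra_energy_cost(EXTRA_WATTS, hours, DAYS, PRICE_PER_KWH)
    print(f"{hours}h/day: about ${cost:.0f} extra over two years")
```

Under these assumptions the difference works out to roughly $14 at 2 hours of gaming per day and roughly $43 at 6 hours per day. A higher local electricity rate scales the result linearly.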

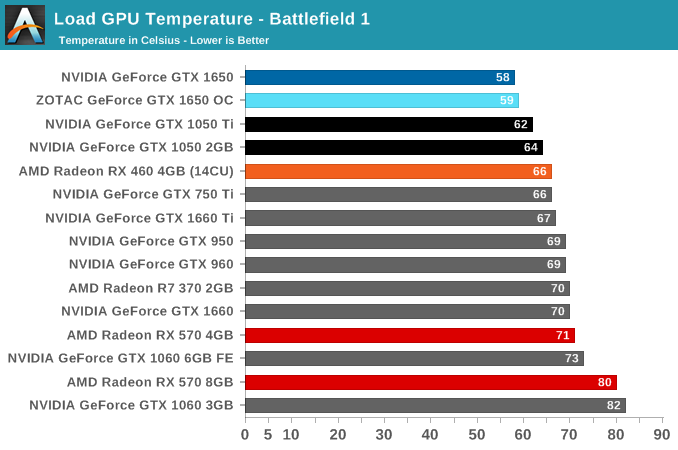

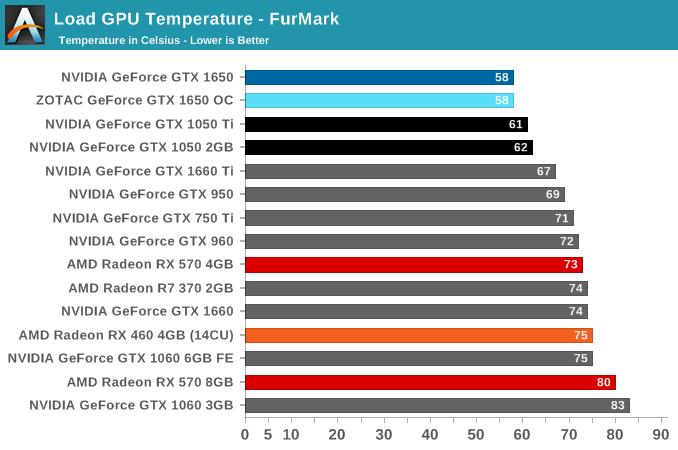

Temperature

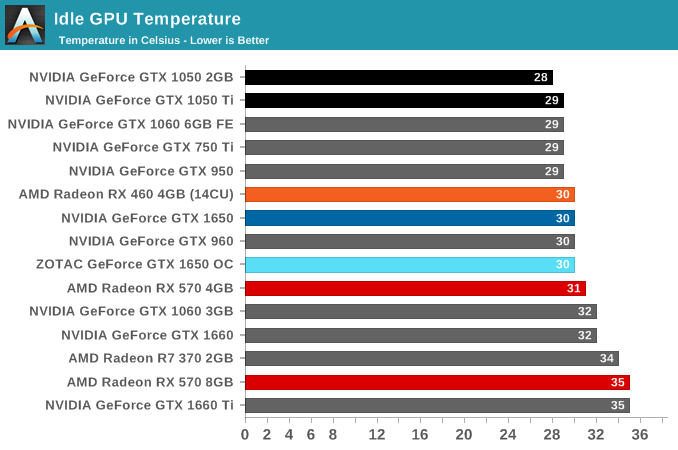

Temperatures all appear fairly normal, as the GTX 1650 stays very cool under load.

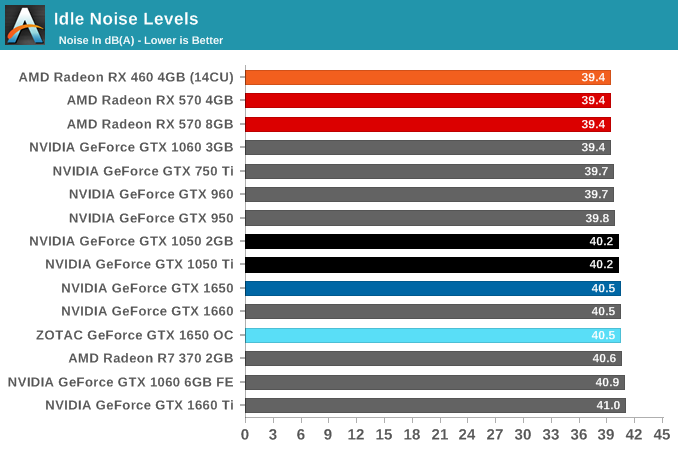

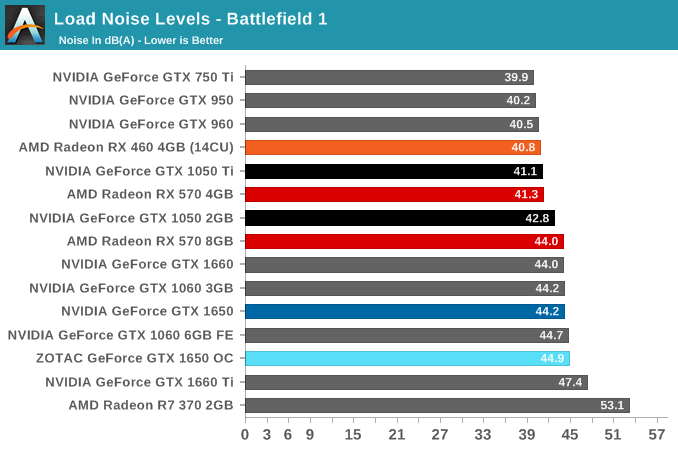

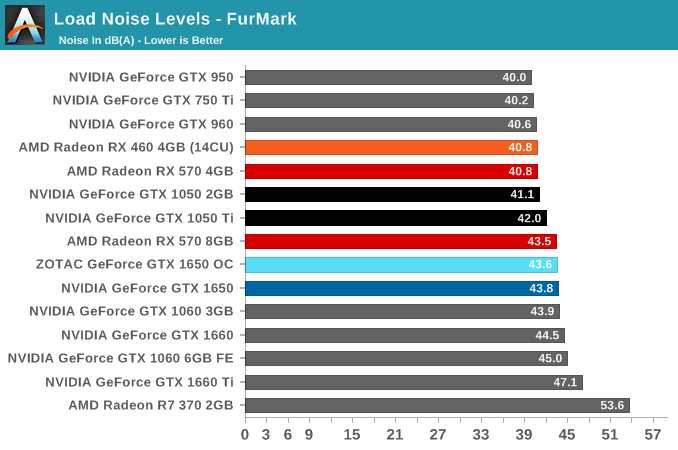

Noise

While the GTX 1650 may have good power and temperature characteristics, its noise results are not as clean, if only because entry-level cards don't come with 0dB fan idling technology, and SFF cards often have to make do with small, shrill fans running at relatively high RPM. The GTX 1650's fan isn't the worst, but it isn't a standout either. If anything, it looks to be the result of prioritizing cooling over acoustics, given the very low load temperatures.

126 Comments

PeachNCream - Tuesday, May 7, 2019 - link

Agreed with nevc on this one. When you start discussing higher end and higher cost components, consideration for power consumption comes off the proverbial table to a great extent because priority is naturally assigned more so to performance than purchase price or electrical consumption and TCO.

eek2121 - Friday, May 3, 2019 - link

Disclaimer, not done reading the article yet, but I saw your comment. Some people look for low wattage cards that don't require a power connector. These types of cards are particularly suited for MiniITX systems that may sit under the TV. The 750 Ti was super popular because of this. Having Turing's HEVC video encode/decode is really handy. You can put together a nice small MiniITX with something like the Node 202 and it will handle media duties much better than other solutions.

CptnPenguin - Friday, May 3, 2019 - link

That would be great if it actually had the Turing HEVC encoder - it does not; it retains the Volta encoder for cost saving or some other Nvidia-Alone-Knows reason. (source: Hardware Unboxed and Gamer's Nexus). Anyone buying a 1650 and expecting to get the Turing video encoding hardware is in for a nasty surprise.

Oxford Guy - Saturday, May 4, 2019 - link

"That would be great if it actually had the Turing HEVC encoder - it does not; it retains the Volta encoder"

Yeah, lack of B-frame support stinks.

JoeyJoJo123 - Friday, May 3, 2019 - link

Or if you're going with a miniITX low wattage system, you can cut out the 75W GPU and just go with a 65W AMD Ryzen 2400G since the integrated Vega GPU is perfectly suitable for an HTPC type system. It'll save you way more money with that logic.

0ldman79 - Sunday, May 19, 2019 - link

What they are going to do though is look at the fast GPU + PSU vs the slower GPU alone. People with OEM boxes are going to buy one part at a time. Trust me on this, it's frustrating, but it's consistent.

Gich - Friday, May 3, 2019 - link

$25 a year? So 7 cents a day? 7 cents is more than 1kWh where I live.

Yojimbo - Friday, May 3, 2019 - link

The US average is a bit over 13 cents per kilowatt hour. But I made an error in the calculation and was way off. It's more like $15 over 2 years and not $50. Sorry.

DanNeely - Friday, May 3, 2019 - link

That's for an average of 2h/day gaming. Bump it up to a hardcore 6h/day and you get around $50/2 years. Or 2h/day but somewhere with obnoxiously expensive electricity like Hawaii or Germany.

rhysiam - Saturday, May 4, 2019 - link

I'd just like to point out that if you've gamed for an average of 6h per day over 2 years with a 570 instead of a 1650, then you've also been enjoying 10% or so extra performance. That's more than 4000 hours of higher detail settings and/or frame rates. If people are trying to calculate the true "value" of a card, then I would argue that this extra performance over time counts too. Let's not forget the performance benefits!