The Intel Optane Memory H10 Review: QLC and Optane In One SSD

by Billy Tallis on April 22, 2019 11:50 AM EST

AnandTech Storage Bench - Light

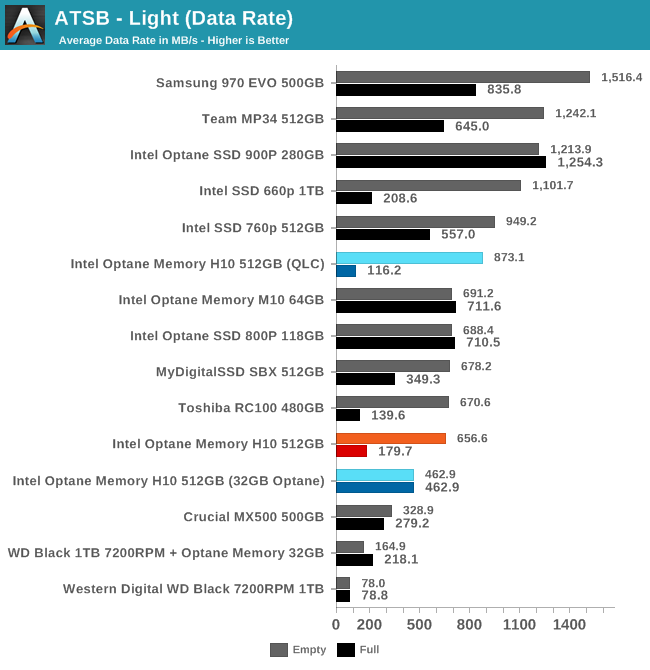

Our Light storage test has relatively more sequential accesses and lower queue depths than The Destroyer or the Heavy test, and it's by far the shortest test overall. It's based largely on applications that aren't highly dependent on storage performance, so this is a test more of application launch times and file load times. This test can be seen as the sum of all the little delays in daily usage, but with the idle times trimmed to 25ms it takes less than half an hour to run. Details of the Light test can be found here. As with the ATSB Heavy test, this test is run with the drive both freshly erased and empty, and after filling the drive with sequential writes.
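To illustrate what trimming idle times does to a trace's running time, here is a minimal sketch. It assumes the trace can be reduced to a list of idle gaps between consecutive I/Os; the gap values and the `trim_idle` helper are hypothetical and are not taken from the actual test tooling. Only the 25ms cap comes from the description above.

```python
IDLE_CAP_MS = 25.0  # cap stated in the test description


def trim_idle(gaps_ms, cap_ms=IDLE_CAP_MS):
    """Cap each idle gap between consecutive I/Os at cap_ms.

    Hypothetical helper: the real trace replay tool is not described here.
    """
    return [min(gap, cap_ms) for gap in gaps_ms]


# Hypothetical idle gaps in milliseconds, including two long pauses
# of the kind a user produces while reading or typing.
gaps = [2.0, 4000.0, 15.0, 60000.0, 25.0]
trimmed = trim_idle(gaps)

print(f"idle time before trimming: {sum(gaps):.0f} ms")
print(f"idle time after trimming:  {sum(trimmed):.0f} ms")
```

Short gaps pass through unchanged, so bursts of activity keep their original spacing while long pauses collapse to the cap.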

The Intel Optane Memory H10 is generally competitive with other low-end NVMe drives when the Light test is run on an empty drive, though the higher performance of the QLC portion on its own indicates that the H10's score is probably artificially lowered by starting with a cold Optane cache. The full-drive performance is worse than almost all of the TLC-based SSDs, but is still significantly better than a hard drive without any Optane cache.

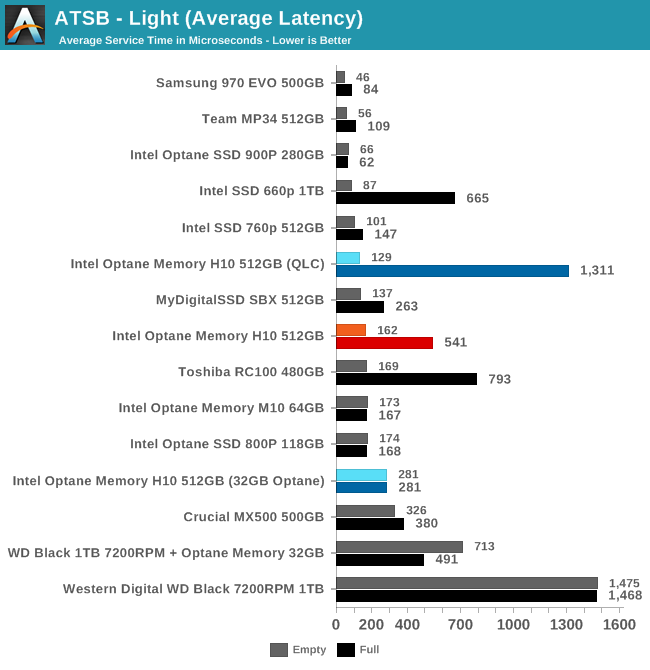

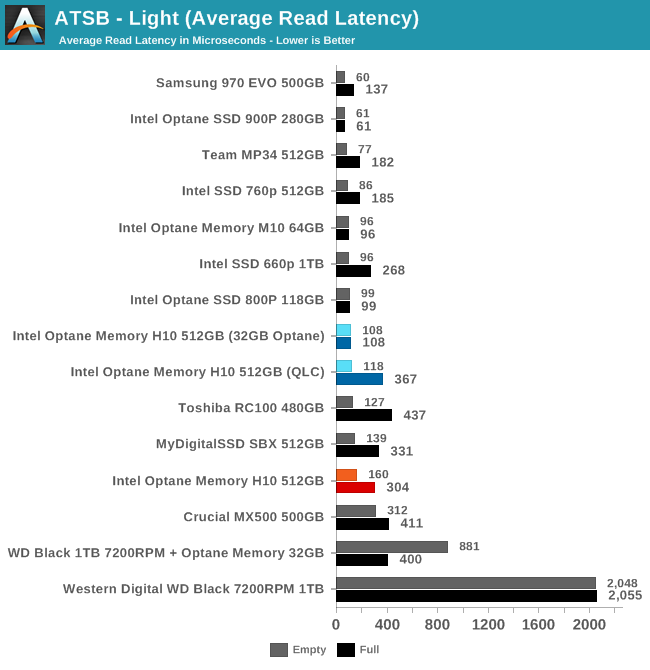

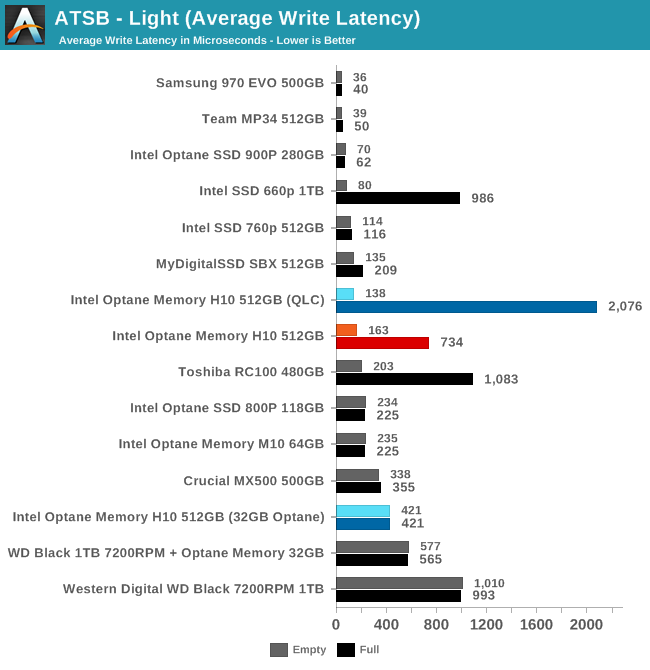

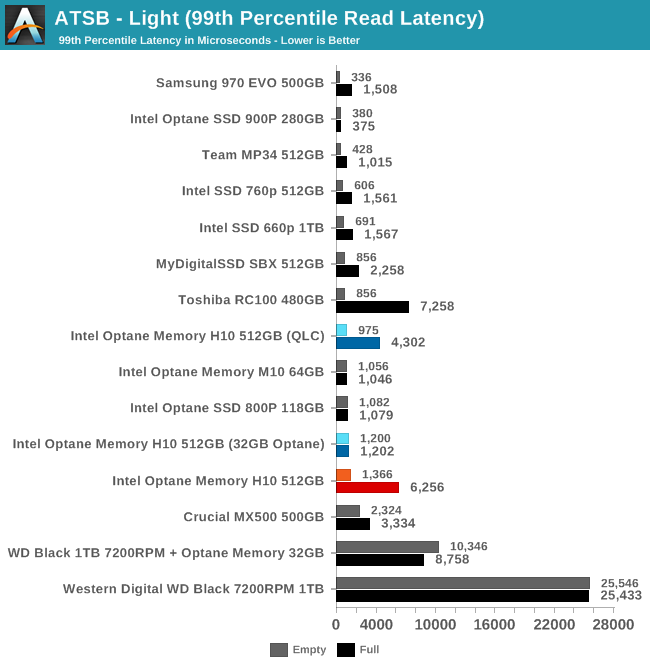

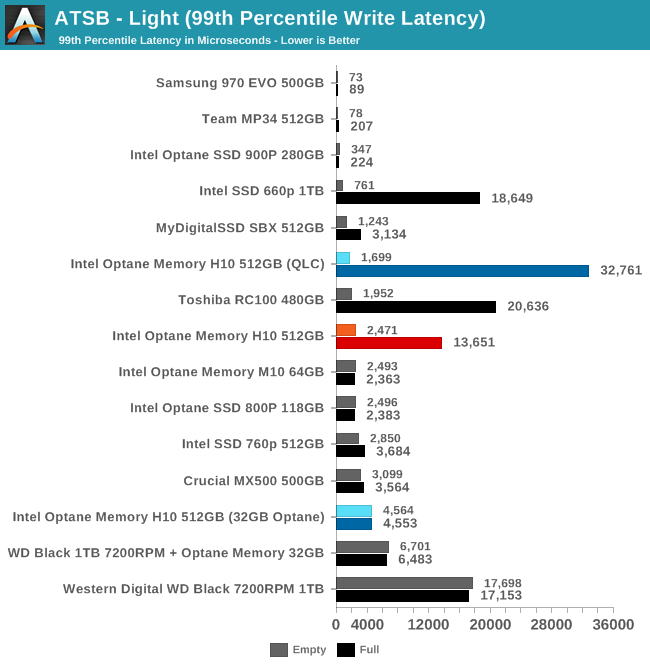

The average and 99th percentile latencies from the Optane Memory H10 are competitive with TLC NAND when the test is run on an empty drive, and even with a full drive the latency scores remain better than a mechanical hard drive.

The average write latency in the full-drive run is the only result that stands out and identifies the H10 as clearly different from other entry-level NVMe drives, but the TLC-based DRAMless Toshiba RC100 is even worse in that scenario.

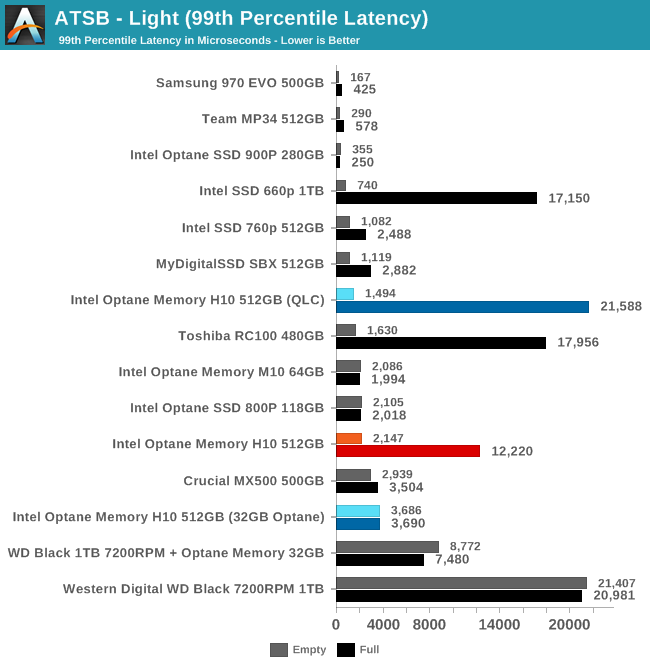

Unlike the average latencies, both the read and write 99th percentile latency scores for the Optane H10 show that it struggles greatly when full. The Optane cache is not nearly enough to make up for running out of SLC cache.
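A small sketch shows why the average and the 99th percentile can tell different stories. The latency values are invented for illustration and are not measurements from this review; the percentile uses the nearest-rank definition, which is an assumption about method rather than a description of how these charts were produced.

```python
import math


def mean(samples):
    """Arithmetic mean of the samples."""
    return sum(samples) / len(samples)


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical workload: 980 operations served quickly (100 us each)
# and 20 operations that stall (20,000 us each).
latencies_us = [100] * 980 + [20_000] * 20

avg = mean(latencies_us)
median = percentile_nearest_rank(latencies_us, 50)
p99 = percentile_nearest_rank(latencies_us, 99)

print(f"average: {avg:.0f} us, median: {median} us, 99th percentile: {p99} us")
```

In this made-up case only 2% of operations stall, so the average stays within a small multiple of the typical latency while the 99th percentile lands squarely on the stalls.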

60 Comments


Alexvrb - Monday, April 22, 2019 - link

"The caching is managed entirely in software, and the host system accesses the Optane and QLC sides of the H10 independently."

So, it's already got serious baggage. But wait, there's more!

"In practice, the 660p almost never needed more bandwidth than an x2 link can provide, so this isn't a significant bottleneck."

Yeah OK, what about the Optane side of things?

Samus - Tuesday, April 23, 2019 - link

They totally nerf'd this thing with 2x PCIe.

PeachNCream - Tuesday, April 23, 2019 - link

Linux handles Optane pretty easily without any Intel software through bcache. I'm not sure why Anandtech can't test that, but maybe just a lack of awareness.

https://www.phoronix.com/scan.php?page=article&...

Billy Tallis - Tuesday, April 23, 2019 - link

Testing bcache performance won't tell us anything about how Intel's caching software behaves, only how bcache behaves. I'm not particularly interested in doing a review that would have such a narrow audience. And bcache is pretty thoroughly documented so it's easier to predict how it will handle different workloads without actually testing.

easy_rider - Wednesday, April 24, 2019 - link

Is there a reliable review of 118gb intel optane ssd in M2 form factor? Does it make sense to hunt it down and put as a system drive in the dual-m2 laptop?

name99 - Thursday, April 25, 2019 - link

"QLC NAND needs a performance boost to be competitive against mainstream TLC-based SSDs"

The real question is what dimension, if any, does this thing win on?

OK, it may not be the fastest out there? But does it, say, provide approximately leading edge TLC speed at QLC prices, so it wins by being cheap?

Because just having a cache is meaningless. Any QLC drive that isn't complete garbage will have a controller-managed cache created by using the QLC flash as SLC; and the better controllers will slowly degrade across the entire drive, maintaining always an SLC cache, but also using the entire drive (till it's filled up) as SLC, then switching blocks to MLC, then to TLC, and only when the drive is approaching capacity, using blocks as QLC.

So the question is not "does it give cached performance to a QLC drive", the question is does it give better performance or better price than other QLC solutions?

albert89 - Saturday, April 27, 2019 - link

Didn't I tell ya ? Optane's capacity was too small for many yrs and compatible with a very tiny number devices/hardware/OS. She played the game of hard to get and now no guy wants her.

peevee - Monday, April 29, 2019 - link

"The caching is managed entirely in software, and the host system accesses the Optane and QLC sides of the H10 independently. Each half of the drive has two PCIe lanes dedicated to it."

Fail.

ironargonaut - Monday, April 29, 2019 - link

"While the Optane Memory H10 got us into our Word document in about 5 seconds, the TLC-based 760P took 29 seconds to open the file. In fact, we waited so long that near the end of the run, we went ahead and also launched Google Chrome with it preset to open four websites."

https://www.pcworld.com/article/3389742/intel-opta...

Win

realgundam - Saturday, November 16, 2019 - link

What if you have a normal 660p and an Optane stick? would it do the same thing?