Intel Architecture Manual Updates: bfloat16 for Cooper Lake Xeon Scalable Only?

by Anton Shilov on April 5, 2019 2:15 PM EST

Posted in: CPUs, Xeon, Servers, Cooper Lake

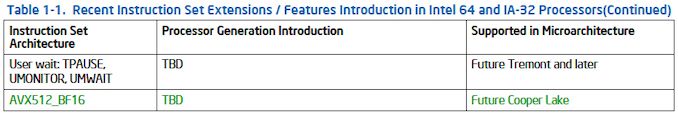

Intel recently released a new version of its programming reference for software developers, revealing some additional details about its upcoming Xeon Scalable ‘Cooper Lake-SP’ processors. According to the document, the new CPUs will support the AVX512_BF16 instructions and therefore the bfloat16 format. The main intrigue is that, at this point, AVX512_BF16 appears to be supported only by the Cooper Lake-SP microarchitecture, but not by its direct successor, the Ice Lake-SP microarchitecture.

The bfloat16 format is a truncated 16-bit version of the 32-bit IEEE 754 single-precision floating-point format. It preserves all 8 exponent bits but reduces the precision of the significand from 24 bits to 8 bits (counting the implicit leading bit in both cases), which saves memory, bandwidth, and processing resources while retaining the same dynamic range. The format was designed primarily for machine learning and near-sensor computing applications, where precision is needed near 0 but not so much at the extremes of the range. The number representation is supported by Intel’s upcoming FPGAs and Nervana neural network processors, as well as by Google’s TPUs. Given that Intel already supports bfloat16 across two of its product lines, it makes sense to support it elsewhere as well, which is what the company is going to do by adding AVX512_BF16 instructions to its upcoming Xeon Scalable ‘Cooper Lake-SP’ platform.
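To make the relationship between the two formats concrete, here is a minimal sketch in portable C of converting a 32-bit float to bfloat16 and back. The names `bf16_t`, `f32_to_bf16`, and `bf16_to_f32` are hypothetical helpers chosen for illustration and are not part of any Intel API. The sketch assumes round-to-nearest-even and does not try to reproduce how the hardware instructions treat corner cases such as denormals.

```c
#include <stdint.h>
#include <string.h>

/* Hypothetical storage type: the raw 16 bits of a bfloat16 value. */
typedef uint16_t bf16_t;

/* Convert a float to bfloat16 by keeping the upper 16 bits
 * (sign, 8 exponent bits, 7 stored significand bits) and
 * rounding the discarded lower 16 bits to nearest, ties to even. */
static bf16_t f32_to_bf16(float f)
{
    uint32_t bits;
    memcpy(&bits, &f, sizeof bits);           /* reinterpret without UB */

    if ((bits & 0x7FFFFFFFu) > 0x7F800000u) {
        /* NaN: truncate and force a significand bit so it stays a NaN. */
        return (bf16_t)((bits >> 16) | 0x0040u);
    }

    uint32_t lsb = (bits >> 16) & 1u;         /* lowest bit that survives */
    bits += 0x7FFFu + lsb;                    /* round to nearest even */
    return (bf16_t)(bits >> 16);
}

/* Widening is exact: the 16 bits become the upper half of a float. */
static float bf16_to_f32(bf16_t h)
{
    uint32_t bits = (uint32_t)h << 16;
    float f;
    memcpy(&f, &bits, sizeof f);
    return f;
}
```

With these helpers, `f32_to_bf16(1.0f)` yields `0x3F80`, while `1.00390625f` (1 + 1/256) also rounds to `0x3F80`, which shows the lost precision. A value such as `3.0e38f` still survives the round trip as a finite number, because the exponent field is unchanged.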

AVX-512 Support Propagation by Various Intel CPUs (newer uArch supports older uArch)

| Xeon uArch | Xeon | General | Xeon Phi | Xeon Phi uArch |
|---|---|---|---|---|
| Skylake-SP | AVX512BW AVX512DQ AVX512VL | AVX512F AVX512CD | AVX512ER AVX512PF | Knights Landing |
| Cannon Lake | AVX512VBMI AVX512IFMA | | AVX512_4FMAPS AVX512_4VNNIW | Knights Mill |
| Cascade Lake-SP | AVX512_VNNI | | | |
| Cooper Lake | AVX512_BF16 | | | |
| Ice Lake | AVX512_VNNI AVX512_VBMI2 AVX512_BITALG AVX512+VAES AVX512+GFNI AVX512+VPCLMULQDQ (not BF16) | AVX512_VPOPCNTDQ | | |

Source: Intel Architecture Instruction Set Extensions and Future Features Programming Reference (page 16)

The list of Intel’s AVX512_BF16 Vector Neural Network Instructions includes VCVTNE2PS2BF16, VCVTNEPS2BF16, and VDPBF16PS. Each of them can be executed in 128-bit, 256-bit, or 512-bit mode, so software developers can pick one of nine versions in total based on their requirements.

Intel AVX512_BF16 Instructions and Intel C/C++ Compiler Intrinsic Equivalents

| Instruction | Description | Intel C/C++ Compiler Intrinsic Equivalent |
|---|---|---|
| VCVTNE2PS2BF16 | Convert Two Packed Single Data to One Packed BF16 Data | __m128bh _mm_cvtne2ps_pbh (__m128, __m128); |
| | | __m128bh _mm_mask_cvtne2ps_pbh (__m128bh, __mmask8, __m128, __m128); |
| | | __m128bh _mm_maskz_cvtne2ps_pbh (__mmask8, __m128, __m128); |
| | | __m256bh _mm256_cvtne2ps_pbh (__m256, __m256); |
| | | __m256bh _mm256_mask_cvtne2ps_pbh (__m256bh, __mmask16, __m256, __m256); |
| | | __m256bh _mm256_maskz_cvtne2ps_pbh (__mmask16, __m256, __m256); |
| | | __m512bh _mm512_cvtne2ps_pbh (__m512, __m512); |
| | | __m512bh _mm512_mask_cvtne2ps_pbh (__m512bh, __mmask32, __m512, __m512); |
| | | __m512bh _mm512_maskz_cvtne2ps_pbh (__mmask32, __m512, __m512); |
| VCVTNEPS2BF16 | Convert Packed Single Data to Packed BF16 Data | __m128bh _mm_cvtneps_pbh (__m128); |
| | | __m128bh _mm_mask_cvtneps_pbh (__m128bh, __mmask8, __m128); |
| | | __m128bh _mm_maskz_cvtneps_pbh (__mmask8, __m128); |
| | | __m128bh _mm256_cvtneps_pbh (__m256); |
| | | __m128bh _mm256_mask_cvtneps_pbh (__m128bh, __mmask8, __m256); |
| | | __m128bh _mm256_maskz_cvtneps_pbh (__mmask8, __m256); |
| | | __m256bh _mm512_cvtneps_pbh (__m512); |
| | | __m256bh _mm512_mask_cvtneps_pbh (__m256bh, __mmask16, __m512); |
| | | __m256bh _mm512_maskz_cvtneps_pbh (__mmask16, __m512); |
| VDPBF16PS | Dot Product of BF16 Pairs Accumulated into Packed Single Precision | __m128 _mm_dpbf16_ps (__m128, __m128bh, __m128bh); |
| | | __m128 _mm_mask_dpbf16_ps (__m128, __mmask8, __m128bh, __m128bh); |
| | | __m128 _mm_maskz_dpbf16_ps (__mmask8, __m128, __m128bh, __m128bh); |
| | | __m256 _mm256_dpbf16_ps (__m256, __m256bh, __m256bh); |
| | | __m256 _mm256_mask_dpbf16_ps (__m256, __mmask8, __m256bh, __m256bh); |
| | | __m256 _mm256_maskz_dpbf16_ps (__mmask8, __m256, __m256bh, __m256bh); |
| | | __m512 _mm512_dpbf16_ps (__m512, __m512bh, __m512bh); |
| | | __m512 _mm512_mask_dpbf16_ps (__m512, __mmask16, __m512bh, __m512bh); |
| | | __m512 _mm512_maskz_dpbf16_ps (__mmask16, __m512, __m512bh, __m512bh); |
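The description "Dot Product of BF16 Pairs Accumulated into Packed Single Precision" can be illustrated with a scalar reference loop. The following is a simplified sketch in portable C rather than the real intrinsic: the function names `bf16_bits_to_f32` and `dpbf16_ps_ref` are hypothetical, bfloat16 values are assumed to be stored as raw `uint16_t` bit patterns, and the exact rounding, summation order, and denormal behavior of the hardware instruction are not modeled.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Widen a raw bfloat16 bit pattern to float (exact, no rounding). */
static float bf16_bits_to_f32(uint16_t h)
{
    uint32_t bits = (uint32_t)h << 16;
    float f;
    memcpy(&f, &bits, sizeof f);
    return f;
}

/* Scalar model of a BF16 pair dot product with FP32 accumulation.
 * For each output element i, two adjacent bfloat16 elements from a
 * and b are multiplied pairwise and both products are added to acc[i].
 * a and b must each hold 2 * n_out elements. */
static void dpbf16_ps_ref(float *acc, const uint16_t *a,
                          const uint16_t *b, size_t n_out)
{
    for (size_t i = 0; i < n_out; ++i) {
        float a0 = bf16_bits_to_f32(a[2 * i]);
        float a1 = bf16_bits_to_f32(a[2 * i + 1]);
        float b0 = bf16_bits_to_f32(b[2 * i]);
        float b1 = bf16_bits_to_f32(b[2 * i + 1]);
        acc[i] += a0 * b0;   /* products are formed in single precision */
        acc[i] += a1 * b1;
    }
}
```

In this model a 512-bit operation corresponds to `n_out = 16`: thirty-two bfloat16 values per source operand feed sixteen single-precision accumulators, which is why the inputs take half the memory of FP32 while the running sums keep FP32 precision.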

Only for Cooper Lake?

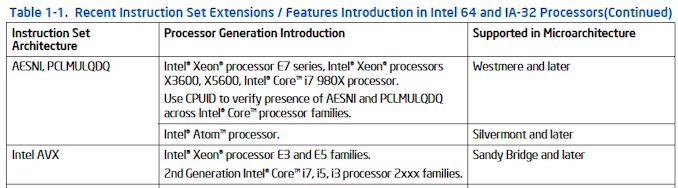

When Intel lists an instruction in its Intel Architecture Instruction Set Extensions and Future Features Programming Reference, the company usually names the first microarchitecture to support it and indicates that its successors also support it (or are set to support it) by adding ‘and later’, without repeating the word microarchitecture. For example, Intel’s original AVX is supported by ‘Sandy Bridge and later’.

This is not the case with AVX512_BF16, which is said to be supported by ‘Future Cooper Lake’ only. After the Cooper Lake-SP platform comes the long-awaited 10nm Ice Lake-SP server platform, and it would be a bit odd for it not to support something its predecessor does. However, this is not an entirely impossible scenario. Intel is keen on offering differentiated solutions these days, so it may be tailoring Cooper Lake-SP for certain workloads while focusing Ice Lake-SP on others.
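Because support may differ from one microarchitecture to the next, software would normally check for the extension at run time rather than infer it from the CPU generation. The sketch below assumes GCC or Clang on x86, where `<cpuid.h>` provides `__get_cpuid_count`, and relies on the feature bit being reported in CPUID leaf 7, sub-leaf 1, EAX bit 5. The function name `cpu_has_avx512_bf16` is hypothetical, and a complete check would also confirm that the operating system has enabled AVX-512 register state, which is omitted here.

```c
#include <stdbool.h>

#if (defined(__x86_64__) || defined(__i386__)) && defined(__GNUC__)
#include <cpuid.h>
#define HAVE_X86_CPUID 1
#else
#define HAVE_X86_CPUID 0
#endif

/* Returns true if the CPU reports AVX512_BF16 in CPUID.
 * Assumption: CPUID.(EAX=7, ECX=1):EAX[bit 5] is the feature flag.
 * OS support for AVX-512 state (XGETBV) is NOT checked here. */
static bool cpu_has_avx512_bf16(void)
{
#if HAVE_X86_CPUID
    unsigned int eax = 0, ebx = 0, ecx = 0, edx = 0;

    /* __get_cpuid_count returns 0 when leaf 7 is not available. */
    if (!__get_cpuid_count(7, 1, &eax, &ebx, &ecx, &edx))
        return false;

    return ((eax >> 5) & 1u) != 0;
#else
    /* Not an x86 build, or no <cpuid.h>: report no support. */
    return false;
#endif
}
```

A dispatcher could call this once at startup and fall back to an FP32 code path when it returns false.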

We have reached out to Intel for additional information and will update the story if we get some extra details on the matter.

Update: Intel has sent us the following:

At this time, Cooper Lake will add support for Bfloat16 to DL Boost. We’re not giving any more guidance beyond that in our roadmap.

Related Reading

- Intel Server Roadmap: 14nm Cooper Lake in 2019, 10nm Ice Lake in 2020

- An Interview with Lisa Spelman, VP of Intel’s DCG: Discussing Cooper Lake and Smeltdown

- Intel Documents Point to AVX-512 Support for Cannon Lake Consumer CPUs

- Cisco Documents Shed Light on Cascade Lake, Cooper Lake, and Ice Lake for Servers

- Intel’s Xeon Scalable Roadmap Leaks: Cooper Lake-SP, Ice Lake-SP Due in 2020

Source: Intel Architecture Instruction Set Extensions and Future Features Programming Reference (via InstLatX64/Twitter)

19 Comments

Yojimbo - Saturday, April 6, 2019

I just read about flexpoint and it seems the idea is to do 16-bit integer operations on the accelerator and allow those integers to express values in ranges defined by a 5 bit exponent held on the host device and (implicitly) shared by the various accelerator processing elements. Thus they get very energy-efficient and die-space efficient processing elements but have the flexibility to deal with larger dynamic range than an integer unit can provide.

That's a very different method of dealing with the problem than bfloat16, which actually reduces the number of significands from 10 in half-precision to 7 in bfloat16 in order to increase the exponent from 5 bits to 8 bits to provide more dynamic range. I wonder how easily code written to create an accurate network on an architecture using the one data type could be ported to an architecture on the other system. It sounds problematic to me.

mode_13h - Wednesday, April 10, 2019

> I wonder how easily code written to create an accurate network on an architecture using the one data type could be ported to an architecture on the other system. It sounds problematic to me.

You realize that people are inferencing with int-8 (and less) models trained with fp16, right? Nvidia started this trend with their Pascal GP102+ chips and doubled down on it with Turing's Tensor cores (which, unlike Volta's, also support int8 and I think int4). AMD jumped on the int8/int4 bandwagon, with Vega 20 (i.e. 7nm).

Yojimbo - Wednesday, April 10, 2019

I'm not sure what you mean. I am talking about code portability, not about compiling trained networks. bfloat16 and flexpoint are both floating point implementations and therefore, as you seem to agree with, targeted primarily towards training and not inference.

Santoval - Saturday, April 6, 2019

It is remarkable that IBM is doing training at FP8, even if they had to modify their training algorithms. I thought such precision was possible only for inference. Apparently since inference is already at INT4 training had to follow suit. In the blog you linked they say they intend to go even further in the future, with inference at .. 2-bit(?!) and training at an unspecified <8-bit (6-bit?).

Bulat Ziganshin - Sunday, April 7, 2019

Humans perform inference at single-bit precision (like/hate) and became the most successful species. Maybe IBM just revealed this secret? :D

mode_13h - Wednesday, April 10, 2019

It's funny you mention that. Spiking neural networks are more closely designed to model natural ones.

One-bit neural networks are an ongoing area of research. I really haven't followed them, but they do seem to come up, now and then.

Elstar - Monday, April 8, 2019

I'd wager that Intel is eager to add BF16 support, but they weren't *planning* on it originally. Therefore tweaking an existing proven design to have BF16 support in the short term is easier than adding BF16 support to Icelake, which ships later but needs significantly more qualification testing due to being a huge redesign.

mode_13h - Wednesday, April 10, 2019

Probably right.

JayNor - Saturday, June 29, 2019

I believe Intel has recently dropped their original flexpoint idea from the NNP-L1000 training chip, replacing it with bfloat16. It is stated in the fuse.wikichip article from April 14, 2019.