Intel Columbiaville: 800 Series Ethernet at 100G, with ADQ and DDP

by Ian Cutress on April 2, 2019 1:00 PM EST

Posted in: Networking, Intel, Ethernet, 100G, 100G Ethernet, Columbiaville

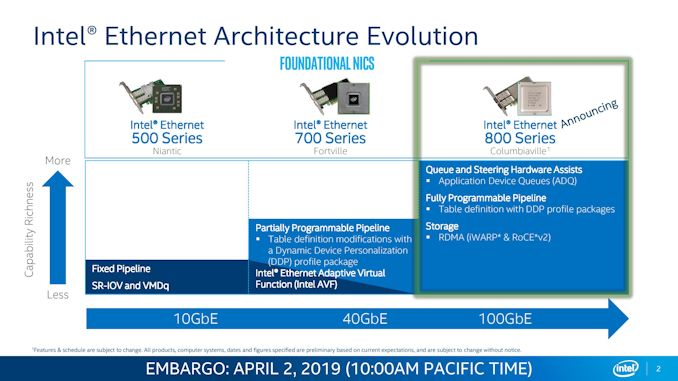

Among the many data center announcements today from Intel, one that might fly under the radar is that the company is launching a new family of controllers for 100 gigabit Ethernet connectivity. Aside from the speed, Intel is also implementing new features to improve connectivity, routing, uptime, and storage protocols, along with an element of programmability to address customer needs.

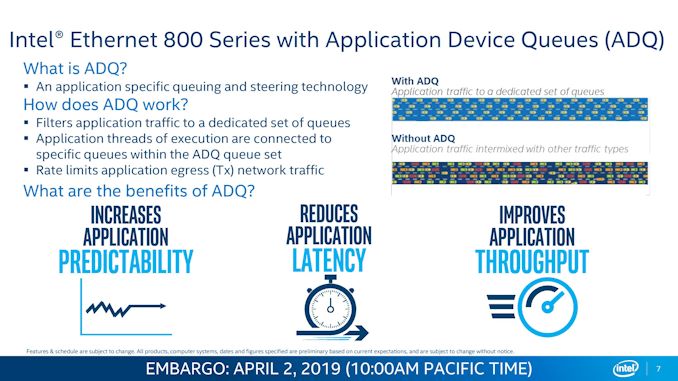

The new Intel 800-Series Ethernet controllers and PCIe cards, using the Columbiaville code-name, are focused mainly on one thing aside from providing a 100G connection – meeting customer requirements and targets for connectivity and latency. This involves reducing the variability in application response time, improving predictability, and improving throughput. Intel is doing this through two technologies: Application Device Queues (ADQ) and Dynamic Device Personalization (DDP).

Application Device Queues (ADQ)

Adding queues to networking traffic isn’t new – we’ve seen it in the consumer space for years, with hardware-based solutions from Rivet Networks or software solutions from a range of hardware and software companies. Queuing network traffic allows high-priority requests to be sent over the network in preference to others (in the consumer use case, streaming a video is a higher priority than a background download). Different implementations either leave the arrangement to the user, offer a whitelist of applications, or analyse traffic to queue the appropriate networking patterns.

Intel’s implementation of ADQ instead relies on the application deployment knowing the networking infrastructure and directing traffic accordingly. The example given by Intel is a distributed Redis database – the database should be in control of its own networking flow, so it can tell the Ethernet controller which packets to manage and how to route them. The application knows which packets are higher priority, so it can send them by the fastest route through the network and ahead of other packets, while it can send non-priority packets on different routes to ease congestion.
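As a rough illustration of application-directed queuing, here is a minimal sketch in Python. It is a toy model and not Intel's ADQ interface: the `AppQueues` class, the port-based steering rule, and the drain order are all assumptions made for this example. It shows traffic for one application being placed on a dedicated queue that is served ahead of a shared default queue.

```python
from collections import deque


class AppQueues:
    """Toy model: one dedicated queue per registered application, plus a shared default."""

    def __init__(self):
        self.dedicated = {}      # destination port -> deque (hypothetical steering rule)
        self.default = deque()   # everything that no application has claimed

    def register_app(self, port):
        # The application claims its own queue, keyed here by destination port.
        self.dedicated[port] = deque()

    def enqueue(self, port, payload):
        # Packets for a registered application go to its queue; the rest share the default.
        self.dedicated.get(port, self.default).append((port, payload))

    def drain(self):
        # Dedicated queues are served first, in registration order, then the default queue.
        order = []
        for queue in list(self.dedicated.values()) + [self.default]:
            while queue:
                order.append(queue.popleft())
        return order


queues = AppQueues()
queues.register_app(6379)  # 6379 is the default Redis port
queues.enqueue(8080, "background download")
queues.enqueue(6379, "GET key1")
queues.enqueue(8080, "more download")
queues.enqueue(6379, "SET key2")
sent = queues.drain()
print(sent)
```

In this sketch the Redis requests leave first even though a download packet arrived before them, which is the predictability benefit the paragraph above describes. Real hardware queues would be drained concurrently rather than strictly in turn.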

Unfortunately, it was hard to see how much of a difference ADQ made in the examples that Intel provided – they compared a modern Cascade Lake system (equipped with the new E810-CQDA2 dual port 100G Ethernet card and 1TB of Optane DC Persistent Memory) to an old Ivy Bridge system with a dual-port 10G Ethernet card and 128 GB of DRAM (no Optane). While this might be indicative of a generational upgrade to the system, it’s a sizeable upgrade that hides the benefit of the new technology by not providing an apples-to-apples comparison.

Dynamic Device Personalization (DDP)

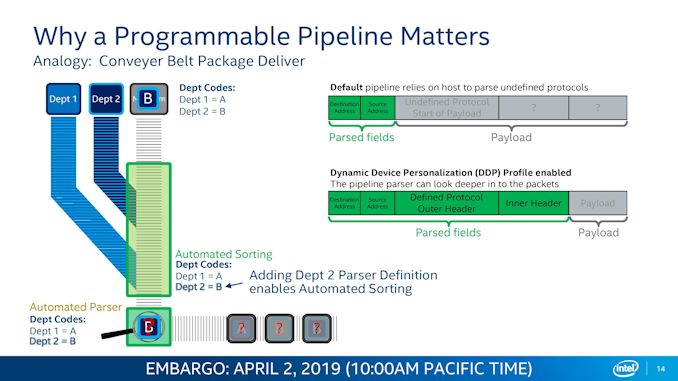

DDP was introduced with Intel’s 40 GbE controllers; however, it gets an updated model for the new 800 series controllers. In simple terms, DDP allows for a programmable protocol within the networking infrastructure, which can be used for faster routing and additional security. With DDP, the controller can work with the software to craft a user-defined protocol and header within a network packet to provide additional functionality.

As mentioned, the two key areas here are security (cryptography) and speed (bandwidth/latency). With the pipeline parser embedded in the controller, it can both craft an outgoing data packet and analyse one coming in. When the packet comes in, if it knows the defined protocol, it can act on it – either sending the payload to an accelerator for additional processing, or pushing it to the next hop on the network without needing to refer to its routing tables. With the packet being custom defined (within an overall specification), the limits to the technology depend on how far the imagination goes. Intel already offers DDP profiles for its 700-series products for a variety of markets, and that is built upon for the 800-series. For the 800 series, these custom DDP profiles can be loaded pre-boot, in the OS, or during run-time.
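The craft-and-parse flow described above can be sketched in a few lines of Python. This is only an illustration and not a DDP profile or any Intel API: the header layout (2-byte magic, 1-byte action, 2-byte payload length), the `MAGIC` value, and the action codes are hypothetical choices made for this example.

```python
import struct

# Hypothetical user-defined header: 2-byte magic, 1-byte action, 2-byte payload length.
HEADER = struct.Struct("!HBH")
MAGIC = 0xD0D0
ACTION_ACCELERATE, ACTION_FORWARD = 1, 2


def craft(action, payload):
    """Build an outgoing packet carrying the custom header."""
    return HEADER.pack(MAGIC, action, len(payload)) + payload


def handle(packet):
    """Parse an incoming packet and decide where it should go."""
    if len(packet) < HEADER.size:
        return ("default_path", packet)
    magic, action, length = HEADER.unpack_from(packet)
    if magic != MAGIC:
        # Unknown protocol: fall back to normal processing.
        return ("default_path", packet)
    payload = packet[HEADER.size:HEADER.size + length]
    if action == ACTION_ACCELERATE:
        return ("accelerator", payload)
    if action == ACTION_FORWARD:
        return ("next_hop", payload)
    return ("default_path", packet)


print(handle(craft(ACTION_ACCELERATE, b"encrypt me")))
print(handle(craft(ACTION_FORWARD, b"pass along")))
print(handle(b"\x00\x01plain traffic"))
```

The point of the sketch is the dispatch: once the parser recognises the custom header, the decision is made from the header alone, and anything it does not recognise takes the ordinary path.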

But OmniPath is 100G, right?

For users involved in the networking space, I know what you are going to say: doesn’t Intel already offer OmniPath at 100G? Why would they release an Ethernet based product to cannibalize their own OmniPath portfolio? Your questions are valid, and depending on who you talk to, OmniPath has either had a great response, a mild response, or no response to their networking deployments. Intel’s latest version of OmniPath is actually set to be capable of 200G, and so at this point it is a generation ahead of Intel’s Ethernet offerings. It would appear that based on the technologies, Intel is likely to continue this generational separation, giving a choice for customers to either take the latest it has to offer on OmniPath, or take its slower Ethernet version.

What We Still Need to Know, Launch Timeframe

When asked, Intel stated that it is not disclosing the process node the new controllers are built on, nor the power consumption. Intel stated that its 800 series controllers and corresponding PCIe cards should launch in Q3.


20 Comments


binarp - Thursday, April 4, 2019 - link

It's hard to say from the slides here which are largely useless drivel that say something like "application centric packet queuing", but this NIC looks like it is based on the FM line of chips that came into Intel with the Fulcrum acquisition. The FM6000 that put Fulcrum on the map was one of the earliest Ethernet switch ASICs with a meaningfully reconfigurable pipeline.

Dug - Friday, April 5, 2019 - link

I don't see RDMA listed for 500 or 700 series. Seems odd.

thehevy - Friday, April 5, 2019 - link

I wanted to help clarify a point of confusion regarding the ADQ test results for Redis. The performance comparison is of open source Redis using 2nd Gen Intel® Xeon® Scalable processors and Intel® Ethernet 800 Series with ADQ vs. without ADQ on the same server (SUT). The test shows the same application running with and without ADQ enabled. It is not comparing Redis running on two different servers.

This statement does not reflect the actual test configuration used:

“Unfortunately, it was hard to see how much of a different ADQ did in the examples that Intel provided – they compared a modern Cascade Lake system (equipped with the new E810-CQDA2 dual port 100G Ethernet card and 1TB of Optane DC Persistent Memory) to an old Ivy Bridge system with a dual-port 10G Ethernet card and 128 GB of DRAM (no Optane). While this might be indicative of a generational upgrade to the system, it’s a sizeable upgrade that hides the benefit of the new technology by not providing an apples-to-apples comparison.”

Tests performed using Redis* Open Source with two 2nd Generation Intel® Xeon® Platinum 8268 processor @ 2.8GHz and Intel® Ethernet 800 series 100GbE on Linux 4.19.18 kernel.

The client systems used were 11 Dell PowerEdge R720 servers with two Intel Xeon processor E5-2697 v2 @ 2.7GHz and one Intel Ethernet Converged Network Adapter X520-DA2.

For complete configuration information see the Performance Testing Application Device Queues (ADQ) with Redis Solution Brief at this link: - http://www.intel.com/content/www/us/en/architectur...

The Intel Ethernet 800 Series is the next-generation architecture providing port speeds of up to 100Gb/s. Here is a link to the Product Preview:

https://www.intel.com/content/www/us/en/architectu...

Here is a link to the video of a presentation Anil and I gave at the Tech Field Day on April 2nd.

https://www.youtube.com/watch?v=f0c6SKo2Fi4

https://techfieldday.com/event/inteldcevent/

I hope this helps clarify the Redis performance results.

Brian Johnson – Solutions Architect, Intel Corporation

#iamintel

Performance results are based on Intel internal testing as of February 2019, and may not reflect all publicly available security updates. See configuration disclosure for details. No product or component can be absolutely secure.

brunis.dk - Saturday, April 6, 2019 - link

I just want 10Gb to be affordable

abufrejoval - Thursday, April 11, 2019 - link

Buffalo sells 8 and 12 port NBASE-T switches at €$50/port and Aquantia NICs are €$99. It's not affordable for everyone, but remembering what they asked 10 years ago, I fell for it and have no regrets.

boe - Sunday, April 7, 2019 - link

I have no idea what to get at this point. I currently have 10g at home but that isn't enough - my raid controllers are pushing my 10g to the limit (I don't have a switch - I have a single quad port connected to 3 other systems). When I look at throughput on the ports they are maxed out. I was considering 40g but there are no quad port nics and 40g switches are too expensive for me. I was also looking for 25 or 50g or 100g nics but can't find the right option which was simple with 10g. Anyone know of any cheap 25/50 or 100g switches that are cheap or 25 or 50 or 100g quad port nics?

abufrejoval - Thursday, April 11, 2019 - link

Since this seems to be a 'home' setup: You may be better off getting used 25 or even 40Gbit Infiniband NICs and switches: I have seen some really tempting offers on sites specializing in recycling data center equipment. Perhaps it's a little difficult to find, but bigger DCs are moving so quickly to 100 and even 400Gbit, that 25/40 get moved out.

And you can typically run simulated Ethernet on IB as well as TCP/IP at least with Mellanox (sorry Nvidia) gear.

If you don't have a lot of systems you can also work with Mellanox 100Gbit NICs that are running in host chaining mode, essentially something that looks like FC-AL or Token ring. Only works with the Ethernet personality of those hybrid cards, but you avoid the switch at the cost of some latency when there are too many hops (I wouldn't use more than 4 chained hosts). I am running that with 3 fat Xeon nodes and because these NICs are dual ports I can even avoid the latency and bandwidth drop of the extra hop, going right or left in the 'ring'.

The feature was given a lot of public visibility in 2016, but since then Mellanox management must have realized that they might sell fewer switches if everyone started using 100Gbit host chaining to avoid 25 or 40 Gbit upgrades, so it's tucked away in a corner without 'official' support, but it works.

And if your setup is dense you can use direct connect cables and these 100Gbit NICs are much cheaper than if you have to add a new 40Gbit switch.

boe - Wednesday, May 8, 2019 - link

I'd like to know how many media access controllers they support. I have 4 computers I want to connect at greater than 10g (what I currently have and max). I can connect each direct without a switch because I have a quad port 10g on one. I doubt we'll see any quad port 100g nics (although that would be great). An option would be using a fanout cable but that requires more than two media access controllers per nic. The dual port Mellanox cards only have 2 per dual card which means you can't use fanouts to connect to other nics directly.

boe - Tuesday, November 26, 2019 - link

Haven't they been talking about this for about a year? When do we see the product available for sale?

Guy111 - Thursday, August 27, 2020 - link

Hi, I have e810 cam-2

I created a bridge on the card and get no RX packets from Ixia.

Other card (different brand) works well.

I installed centos 7.6 with ice driver.

Why?