CXL Specification 1.0 Released: New Industry High-Speed Interconnect From Intel

by Ian Cutress on March 11, 2019 9:00 AM EST - Posted in CPUs, Intel, GPUs, DRAM, Interconnect, FPGAs, Compute Express Link, CXL

With the battleground moving from single-core performance to multi-core acceleration, a new war is being fought over how data is moved between different compute resources. The Interconnect Wars are truly here, and the battleground just got a lot more complicated. In recent years we’ve seen NVLink, CCIX, and Gen-Z emerge as next-generation host-to-device and device-to-device high-speed interconnects, each with a different set of features. Now CXL, or Compute Express Link, is taking to the field.

This new interconnect, for which the version 1.0 specification is being launched today, started in the depths of Intel’s R&D labs over four years ago, but it is being launched as an open standard headed up by a consortium of nine companies: Alibaba, Cisco, Dell EMC, Facebook, Google, HPE, Huawei, Intel, and Microsoft. One of the companies described the collective as ‘the biggest group of influencers driving a modern interconnect standard’.

In our call, we were told that the specification has been in development at Intel for a few years, and was only lifted out recently to drive a new consortium around an open cache-coherent interconnect standard. The upcoming consortium of the nine founding companies will be incorporated later this year, working under US rules. The consortium states that members will be free to use the IP on any device, and aside from the nine founders, other companies can become contributors and/or adopters, depending on whether they want to help shape the next standard or simply use the technology.

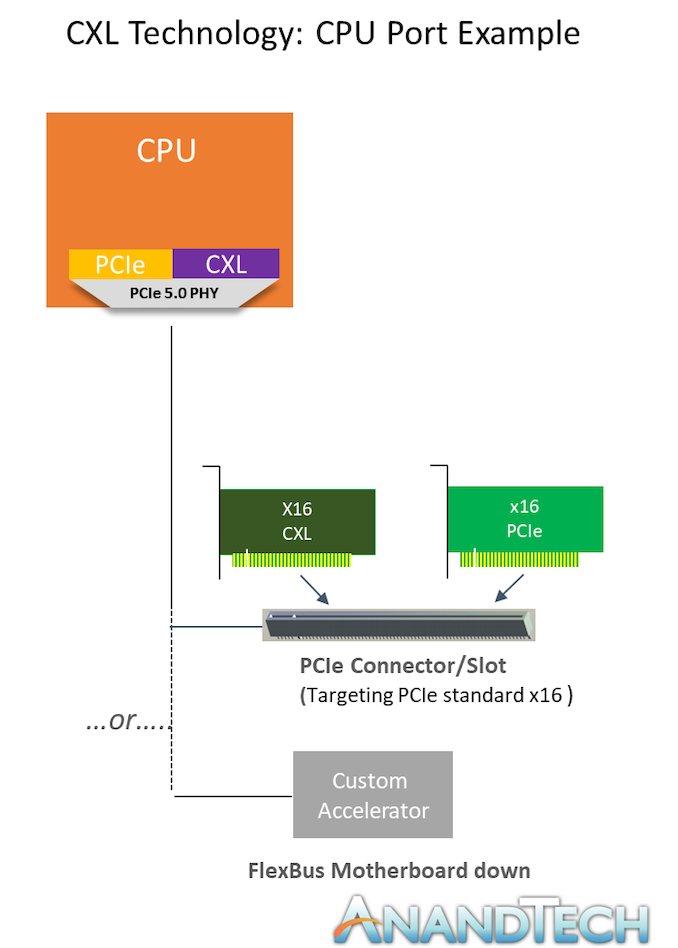

At its heart, Compute Express Link (CXL) will begin as a cache-coherent host-to-device interconnect, focusing on GPUs and FPGAs. It will use the current PCIe 5.0 standard for physical and electrical connectivity, providing protocols for I/O and memory with coherency interfaces. The focus of CXL is to help accelerate AI, machine learning, media services, HPC, and cloud applications. With Intel being at the heart of this technology, we might expect to see future Intel GPUs and FPGAs connecting in a PCIe slot in ‘CXL’ mode. It will be interesting to see if this will be an additional element of the product segmentation strategy.

While some of the competing standards have 20-50+ members, Compute Express Link actually has more founding members than PCIe (5) or USB (7). That said, a few key industry names are missing: Amazon, Arm, AMD, Xilinx, and others. Other standards playing in this space, such as CCIX and Gen-Z, have members in common with CXL. When questioned on this, the CXL representatives pointed out that Gen-Z had commented positively on the CXL press release, stating that there is a lot of synergy between CXL and Gen-Z and that the standards are expected to dovetail rather than overlap. It should be pointed out that Xilinx, Arm, and AMD have already stated core CCIX support, whether as plausible future support or in products at some level, making this perhaps another VHS / Betamax battle. The other missing company is NVIDIA, which is more than happy with NVLink and its association with IBM.

The Compute Express Link announcement is part standard and part recruitment: the as-yet-unincorporated consortium is looking for contributors and adopters. Other CPU architectures beyond x86 are more than welcome, with the Intel representative stating that he is happy to jump on a call to explain the company’s motivation behind the new standard. Efforts are currently underway to develop the CXL 2.0 Specification.

CXL Promoter Statements of Support

Dell EMC

“Dell EMC is delighted to be part of the CXL Consortium and its all-star cast of promoter companies. We are encouraged to see the true openness of CXL, and look forward to more industry players joining this effort. The synergy between CXL and Gen-Z is clear, and both will be important components in supporting Dell EMC’s kinetic infrastructure and this data era.”

Robert Hormuth, Vice President & Fellow, Chief Technology Officer, Server & Infrastructure Systems, Dell EMC

Facebook

“Facebook is excited to join CXL as a founding member to enable and foster a standards-based open accelerator ecosystem for efficient and advanced next generation systems.”

Vijay Rao, Director of Technology and Strategy, Facebook

Google

“Google supports the open Compute Express Link collaboration. Our customers will benefit from the rich ecosystem that CXL will enable for accelerators, memory, and storage technologies.”

Rob Sprinkle, Technical Lead, Platforms Infrastructure, Google LLC

HPE

“At HPE we believe that being able to compose compute resources over open interfaces is critical if our industry is to keep pace with the demands of a data and AI-driven future. We applaud Intel for opening up the interface to the processor. CXL will help customers utilize accelerators more efficiently and dovetails well with the open Gen-Z memory-semantic interconnect standard to aid in building fully-composable, workload-optimized systems.”

Mark Potter, HPE CTO and Director of Hewlett Packard Labs

Huawei

“Being a leading provider in the industry, Huawei will play an important role in the contribution of technology specification. Huawei’s intelligent computing products which incorporates Huawei’s chip, acceleration components and intelligent management together with innovative optimized system design, can deliver end-to-end solutions which significantly improves the rollout and system efficiency of data centers.”

Zhang Xiaohua, GM of Huawei’s Intelligent Computing BU

Intel

“CXL is an important milestone for data-centric computing, and will be a foundational standard for an open, dynamic accelerator ecosystem. Like USB and PCI Express, which Intel also co-founded, we can look forward to a new wave of industry innovation and customer value delivered through the CXL standard.”

Jim Pappas, Director of Technology Initiatives, Intel Corporation

Microsoft

“Microsoft is joining the CXL consortium to drive the development of new industry bus standards to enable future generations of cloud servers. Microsoft strongly believes in industry collaboration to drive breakthrough innovation. We look forward to combining efforts of the consortium with our own accelerated hardware achievements to advance emerging workloads from deep learning to high performance computing for the benefit of our customers.”

Dr. Leendert van Doorn, Distinguished Engineer, Azure, Microsoft

Gen-Z Consortium

“As a Consortium founded to encourage an open ecosystem for the next-generation memory and compute architectures, Gen-Z welcomes Compute Express Link (CXL) to the industry and we look forward to opportunities for future collaboration between our organizations.”

Kurtis Bowman, President, Gen-Z Consortium

48 Comments

HStewart - Monday, March 11, 2019

I'm not sure what you are getting at here with the above statement, but you should note that GenZ is included as one of the members of this new standard. I'm not sure why Facebook and Google are included as members.

GreenReaper - Monday, March 11, 2019

Presumably, because they have huge datacenters and wish to use CXL, and maybe influence it.

tk.icepick - Monday, March 11, 2019

Is there a timetable for some kind of subscription to Anandtech? I know a decent chunk of people would be willing to pay a small fee each month to avoid ads and still support the site (and use less battery and data).

Thanks!

mayankleoboy1 - Tuesday, March 12, 2019

So where does AMD's Infinity Fabric fit in the whole picture? Is CXL competing for the same space, or is it aiming for a different use case/market?

JayNor - Wednesday, March 31, 2021

Looks like AMD might use CCIX over Infinity Fabric, which would provide symmetric coherency among GPUs and CPUs.

Xajel - Tuesday, March 12, 2019

The only thing I'm wondering is what this adds over PCIe 5.0, now or later. I can understand NVLink and GenZ, as these provide higher bandwidth. But seeing that CXL is based on PCIe 5.0 makes me wonder whether it will provide the same bandwidth or more. The latter case actually makes sense, as relying on the PCIe 5.0 PHY will lower costs and being an open standard will make it more popular.

peevee - Thursday, March 14, 2019

Seems to me it adds cache coherency, meaning that if the device wants to read some host memory and that memory has been changed in a CPU cache but not yet written to RAM, the CPU will know about it and will write its cache to RAM before enabling the transfer; and maybe the other way around for accelerators with write-back caches (some TPUs?). The article does not say much about it, probably because the press release was, as usual, written by non-engineers, people who don't understand that either.

JayNor - Wednesday, March 31, 2021

CXL adds asymmetric/biased cache coherency and disaggregated memory pools, so that the master CPU can give an accelerator (XPU) private access to its own memory pool for long stretches without the coherency monitoring.