The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Power, Temperature, and Noise

As always, we'll take a look at power, temperature, and noise of the GTX 1660 Ti, though as a pure custom launch we aren't expecting anything out of the ordinary. As mentioned earlier, the XC Black board has already revealed itself in its RTX 2060 guise.

As this is a new GPU, we will quickly review the GeForce GTX 1660 Ti's stock voltages and clockspeeds as well.

NVIDIA GeForce Video Card Voltages

| Model | Boost | Idle |
|---|---|---|
| GeForce GTX 1660 Ti | 1.037V | 0.656V |
| GeForce RTX 2060 | 1.025V | 0.725V |
| GeForce GTX 1060 6GB | 1.043V | 0.625V |

The voltages are naturally similar to those of the 16nm GTX 1060, and compared to pre-FinFET generations they are exceptionally low because of the FinFET process, something we went over in detail in our GTX 1080 and 1070 Founders Edition review. As we said then, 16nm FinFET requires much lower voltages than previous planar nodes, which can be limiting in scenarios where a lot of power and voltage are needed, such as high clockspeeds and overclocking. For Turing (along with Volta, Xavier, and NVSwitch), NVIDIA moved to 12nm "FFN" rather than 16nm, and capped the voltage at 1.063V.
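To illustrate how little room that cap leaves, the short sketch below subtracts each Turing card's measured boost voltage (from the voltage table) from the 1.063V cap. It assumes the cap applies equally to both Turing boards; the GTX 1060 is left out because the cap quoted here is Turing's, not Pascal's.

```python
# Boost voltages measured for the two Turing cards (from the voltage table)
TURING_BOOST_VOLTAGE = {
    "GeForce GTX 1660 Ti": 1.037,
    "GeForce RTX 2060": 1.025,
}

# Turing voltage cap quoted in the text; assumed to apply to both cards
TURING_VOLTAGE_CAP = 1.063


def voltage_headroom(boost_v: float, cap_v: float = TURING_VOLTAGE_CAP) -> float:
    """Return the remaining headroom in volts, rounded to millivolt precision."""
    return round(cap_v - boost_v, 3)


if __name__ == "__main__":
    for card, volts in TURING_BOOST_VOLTAGE.items():
        headroom_mv = voltage_headroom(volts) * 1000
        print(f"{card}: {headroom_mv:.0f} mV below the {TURING_VOLTAGE_CAP}V cap")
```

Both cards sit only a few tens of millivolts below the cap at stock boost, which is the practical ceiling the paragraph describes for overclocking.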

GeForce Video Card Average Clockspeeds

| Game | GTX 1660 Ti | EVGA GTX 1660 Ti XC | RTX 2060 | GTX 1060 6GB |
|---|---|---|---|---|
| Max Boost Clock | 2160MHz | 2160MHz | 2160MHz | 1898MHz |
| Boost Clock | 1770MHz | 1770MHz | 1680MHz | 1708MHz |
| Battlefield 1 | 1888MHz | 1901MHz | 1877MHz | 1855MHz |
| Far Cry 5 | 1903MHz | 1912MHz | 1878MHz | 1855MHz |
| Ashes: Escalation | 1871MHz | 1880MHz | 1848MHz | 1837MHz |
| Wolfenstein II | 1825MHz | 1861MHz | 1796MHz | 1835MHz |
| Final Fantasy XV | 1855MHz | 1882MHz | 1843MHz | 1850MHz |
| GTA V | 1901MHz | 1903MHz | 1898MHz | 1872MHz |
| Shadow of War | 1860MHz | 1880MHz | 1832MHz | 1861MHz |
| F1 2018 | 1877MHz | 1884MHz | 1866MHz | 1865MHz |
| Total War: Warhammer II | 1908MHz | 1911MHz | 1879MHz | 1875MHz |
| FurMark | 1594MHz | 1655MHz | 1565MHz | 1626MHz |

Looking at clockspeeds, a few things are clear. The obvious point is that the very similar results of the reference-clocked GTX 1660 Ti and EVGA GTX 1660 Ti XC are reflected in their virtually identical clockspeeds. The GeForce cards also boost higher than their advertised boost clocks, as is typically the case in our testing. All told, NVIDIA's official estimates still run a bit low, especially in our properly ventilated testing chassis, so we won't complain about the extra performance.

But on that note, it's interesting to see that while the GTX 1660 Ti should have a roughly 60MHz average boost advantage over the GTX 1060 6GB going by the official specs, in practice the two cards end up within half that span. This hints that NVIDIA's official average boost clock is a little more accurately grounded here than it was with the GTX 1060.
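To put numbers on both observations, the sketch below averages the nine game results in the clockspeed table for each card (FurMark is left out since it is power-limited) and compares them with the official boost clocks. It is only arithmetic on the published figures; using a simple unweighted mean across games is our assumption about how to summarize the table.

```python
from statistics import mean

# Average in-game clockspeeds (MHz) from the clockspeed table, FurMark excluded
GAME_CLOCKS = {
    "GTX 1660 Ti": [1888, 1903, 1871, 1825, 1855, 1901, 1860, 1877, 1908],
    "EVGA GTX 1660 Ti XC": [1901, 1912, 1880, 1861, 1882, 1903, 1880, 1884, 1911],
    "RTX 2060": [1877, 1878, 1848, 1796, 1843, 1898, 1832, 1866, 1879],
    "GTX 1060 6GB": [1855, 1855, 1837, 1835, 1850, 1872, 1861, 1865, 1875],
}

# Official boost clocks (MHz) from the same table
RATED_BOOST = {
    "GTX 1660 Ti": 1770,
    "EVGA GTX 1660 Ti XC": 1770,
    "RTX 2060": 1680,
    "GTX 1060 6GB": 1708,
}


def average_clock(card: str) -> float:
    """Mean clockspeed across the nine games for one card."""
    return mean(GAME_CLOCKS[card])


def margin_over_rated(card: str) -> float:
    """How far (MHz) the observed average sits above the official boost clock."""
    return average_clock(card) - RATED_BOOST[card]


if __name__ == "__main__":
    for card in GAME_CLOCKS:
        print(f"{card}: {average_clock(card):.1f} MHz avg, "
              f"+{margin_over_rated(card):.1f} MHz over rated boost")

    spec_gap = RATED_BOOST["GTX 1660 Ti"] - RATED_BOOST["GTX 1060 6GB"]
    observed_gap = average_clock("GTX 1660 Ti") - average_clock("GTX 1060 6GB")
    # On paper the gap is 62 MHz; in these games it averages about 20 MHz
    print(f"Spec gap: {spec_gap} MHz, observed gap: {observed_gap:.1f} MHz")
```

Every card averages well above its rated boost clock, and the GTX 1660 Ti's lead over the GTX 1060 6GB shrinks from 62MHz on paper to roughly 20MHz in these games, comfortably within half the spec gap.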

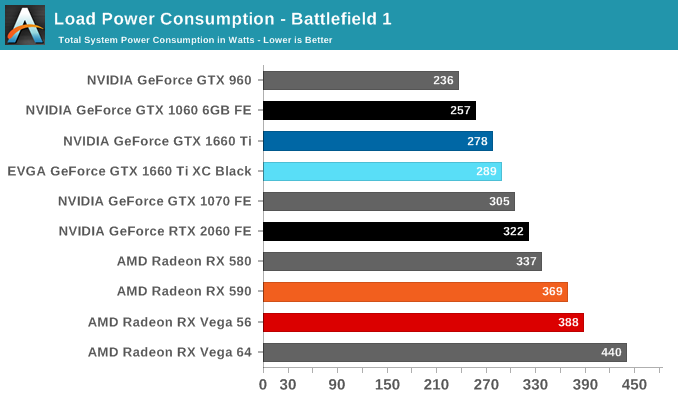

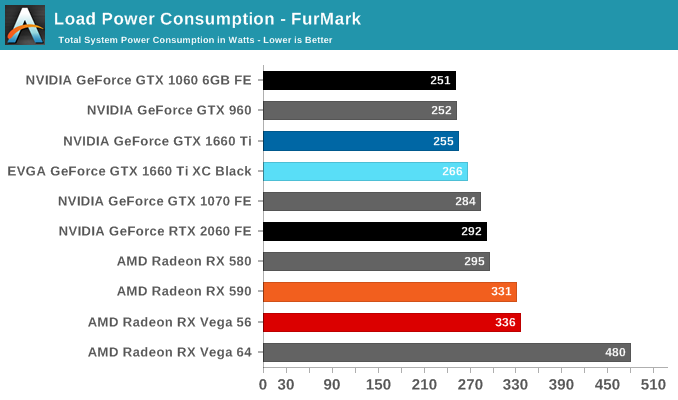

Power Consumption

Even though the prices of NVIDIA's xx60 cards have drifted up over the years, the same cannot be said for their power consumption. NVIDIA has set the reference spec for the card at 120W, and relative to their other cards this is exactly what we see. Looking at FurMark, our favorite pathological workload that's guaranteed to bring a video card to its maximum TDP, the GTX 960, GTX 1060, and GTX 1660 Ti are all within 4 watts of each other, exactly what we'd expect to see from a trio of 120W cards. It's only in Battlefield 1 that these cards pull apart in terms of total system load, and this is due to the greater CPU workload from the higher framerates afforded by the GTX 1660 Ti, rather than a difference at the card level itself.

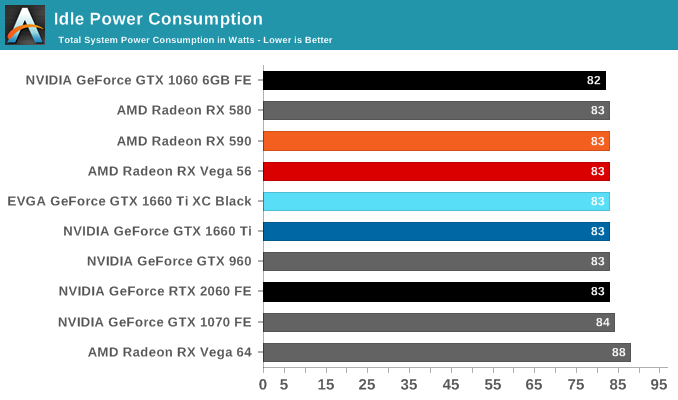

Meanwhile when it comes to idle power consumption, the GTX 1660 Ti falls in line with everything else at 83W of total system power. With contemporary desktop cards, idle power has dropped to the point where nothing short of low-level testing can expose what the cards themselves are drawing.

As for the EVGA card in its natural state, we see it draw almost exactly 10W more. I'm actually a bit surprised to see this under Battlefield 1 as well, since the framerate difference between it and the reference-clocked card is barely 1%. But as higher clockspeeds get increasingly expensive in terms of power consumption, it's not far-fetched for a small power difference to translate into an even smaller performance difference.
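Why a small clock bump can cost disproportionate power follows from the textbook approximation for CMOS dynamic power, P ∝ C × V² × f: higher frequencies usually need more voltage, and voltage enters squared. The sketch below applies that approximation to the Battlefield 1 clocks from the clockspeed table (1888MHz reference vs 1901MHz EVGA). The 1% voltage increase is a hypothetical value for illustration, not a measurement, and the model covers only the GPU's dynamic power, not the static power or the rest of the system that our wall readings include.

```python
# Battlefield 1 average clocks (MHz) from the clockspeed table
REFERENCE_CLOCK_MHZ = 1888  # reference-clocked GTX 1660 Ti
EVGA_CLOCK_MHZ = 1901       # EVGA GTX 1660 Ti XC

# Hypothetical: assume the EVGA card needs ~1% more voltage for its extra clock.
# This is NOT a measured value; it only illustrates the shape of the curve.
HYPOTHETICAL_VOLTAGE_RATIO = 1.01


def relative_dynamic_power(freq_ratio: float, voltage_ratio: float) -> float:
    """Relative dynamic power under the approximation P ~ C * V^2 * f.

    Capacitance C is treated as constant, so it cancels out of the ratio.
    """
    return freq_ratio * voltage_ratio ** 2


if __name__ == "__main__":
    freq_ratio = EVGA_CLOCK_MHZ / REFERENCE_CLOCK_MHZ
    power_ratio = relative_dynamic_power(freq_ratio, HYPOTHETICAL_VOLTAGE_RATIO)
    print(f"Clock gain:         {100 * (freq_ratio - 1):.2f}%")
    print(f"Dynamic power gain: {100 * (power_ratio - 1):.2f}%")
```

Under these assumptions the power increase comes out several times larger than the clock increase, which is the same direction as the measured result, though the exact ratio depends entirely on the assumed voltage step.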

All told, NVIDIA has very good and very consistent power control here, and it remains one of their key advantages over AMD, as well as a key strength in keeping their OEM customers happy.

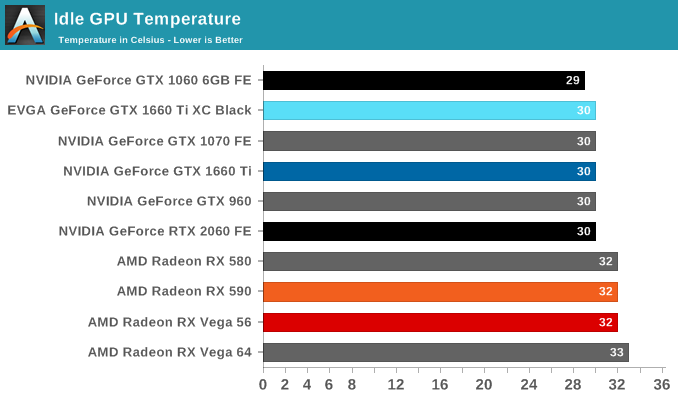

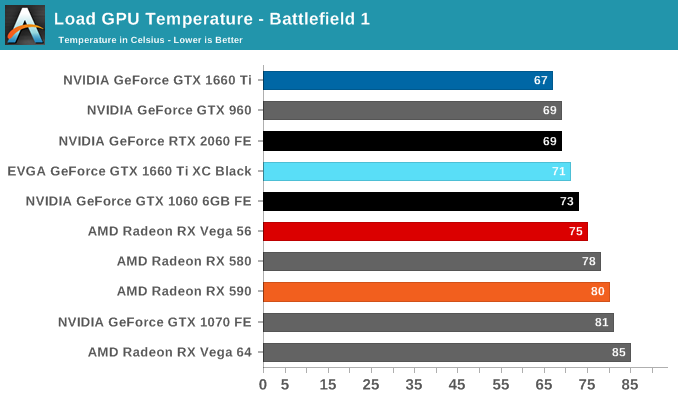

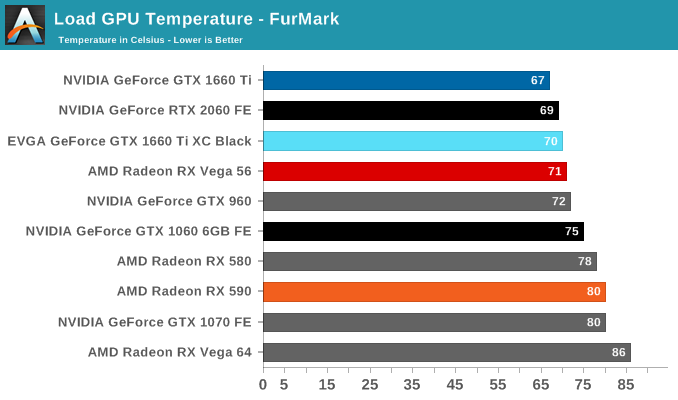

Temperature

Looking at temperatures, there are no big surprises here. EVGA seems to have tuned their card for high-performance cooling, and as a result the large, 2.75-slot card reports some of the lowest numbers in our charts, including 67C under FurMark when the card is capped at the reference GTX 1660 Ti's 120W limit.

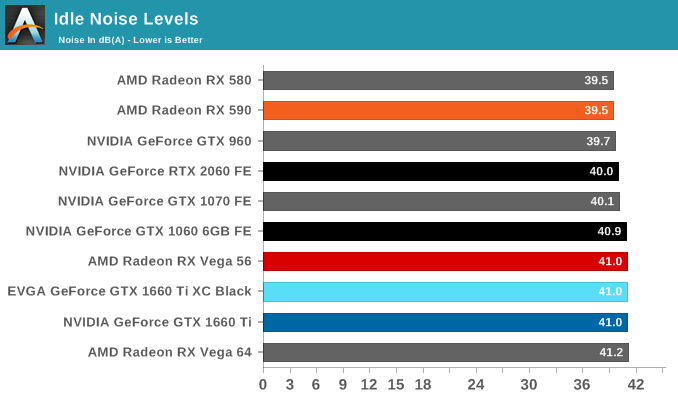

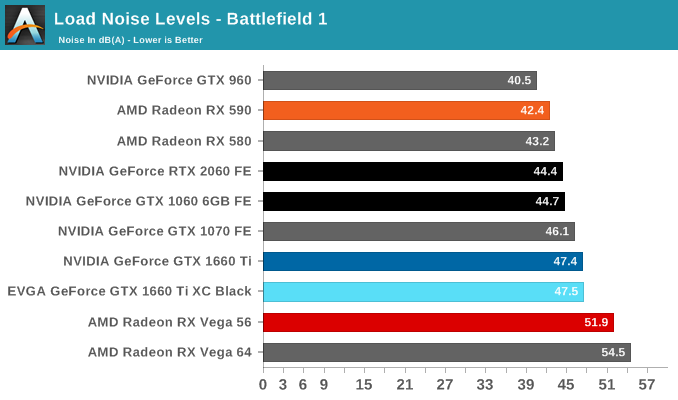

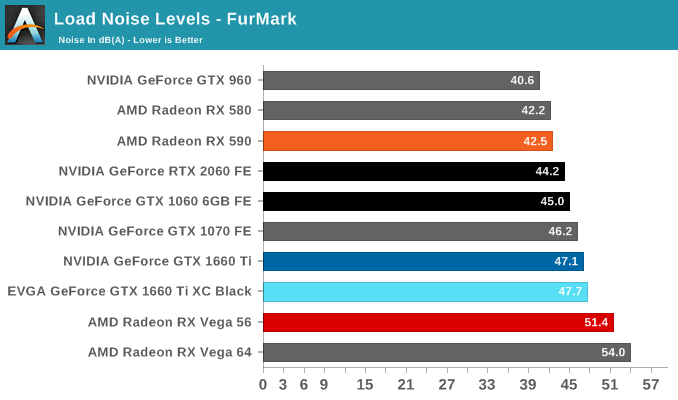

Noise

Turning again to EVGA's card, despite being a custom open-air design, the GTX 1660 Ti XC Black doesn't come with 0dB idle capabilities, and it features a single smaller but higher-RPM fan. The default fan curve puts the minimum at 33%, which indicates that EVGA has tuned the card for cooling over acoustics. That's not an unreasonable tradeoff to make, but it's something I'd consider more appropriate for a factory-overclocked card. For their reference-clocked XC card, EVGA could very well have gone with a less aggressive fan curve and still easily maintained sub-80C temperatures while reducing noise levels as well.
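A fan curve is simply a mapping from GPU temperature to fan duty cycle. The sketch below shows a piecewise-linear curve with the 33% floor described above; every other control point is hypothetical, chosen only to show how a floor keeps the fan spinning (and audible) even at idle, and it is not EVGA's actual curve.

```python
from bisect import bisect_right

# (temperature in C, fan duty in %) control points.
# Only the 33% floor comes from the review; the other points are hypothetical.
FAN_CURVE = [(30, 33), (60, 45), (70, 60), (80, 80), (90, 100)]


def fan_duty(temp_c: float, curve=FAN_CURVE) -> float:
    """Linearly interpolate fan duty (%) for a GPU temperature.

    Below the first point the curve's floor applies; above the last point
    the fan is pinned at the final duty.
    """
    temps = [t for t, _ in curve]
    if temp_c <= temps[0]:
        return float(curve[0][1])
    if temp_c >= temps[-1]:
        return float(curve[-1][1])
    i = bisect_right(temps, temp_c)
    (t0, d0), (t1, d1) = curve[i - 1], curve[i]
    return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)


if __name__ == "__main__":
    for temp in (25, 45, 67, 85):
        print(f"{temp}C -> {fan_duty(temp):.1f}% fan")
```

A card with 0dB idle would instead return 0% below some temperature threshold; lowering the floor or flattening the early slope is one way to get the less aggressive behavior the paragraph suggests.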

157 Comments


Yojimbo - Saturday, February 23, 2019

My guess is that in the next (7 nm) generation, NVIDIA will create the RTX 3050 to have a very similar number of "RTX-ops" (and, more importantly, actual RTX performance) as the RTX 2060, thereby setting the capabilities of the RTX 2060 as the minimum targetable hardware for developers to apply RTX enhancements for years to come.

Yojimbo - Saturday, February 23, 2019

I wish there were an edit button. I just want to say that this makes sense, even if it eats into their margins somewhat in the short term. Right now people are upset over the price of the new cards, but that will pass, assuming RTX actually proves to be successful in the future. However, if RTX does become successful but the people who paid money to be early adopters of lower-end RTX hardware end up getting squeezed out of the ray-tracing picture, that is something people will be upset about, and NVIDIA wouldn't overcome it so easily. To protect their brand image, NVIDIA needs a plan to make present RTX purchases useful in the future, being that they aren't all that useful in the present. They can't betray the faith of their customers. So with that in mind, disabling perfectly capable RTX hardware on lower-end hardware makes sense.

u.of.ipod - Friday, February 22, 2019

As an SFFPC (mITX) user, I'm enjoying the thicker but shorter card, as it makes for easier packaging. Additionally, I'm enjoying the performance of a 1070 at reduced power consumption (20-30W) and therefore less noise and heat!

eastcoast_pete - Friday, February 22, 2019

Thanks! Also a bit disappointed by NVIDIA's continued refusal to "allow" a full 8 GB VRAM in these middle-class cards. As to the card makers omitting the VR-required USB3 C port, I hope that some others will offer it. Yes, it will add $20-30 to the price, but I don't believe I am the only one who's like the option to try some VR gaming out on a more affordable card before deciding to start saving money for a full premium card. However, how is VR on Nvidia with 6 GB VRAM? Is it doable/bearable/okay/great?

eastcoast_pete - Friday, February 22, 2019

"who'd like the option". Google keyboard, your autocorrect needs work and maybe some grammar lessons.

Yojimbo - Friday, February 22, 2019

Wow, is a USB3C port really that expensive?

GreenReaper - Friday, February 22, 2019

It might start to get closer once you throw in the circuitry needed for delivering 27W of power at different levels, and any bridge chips required.

OolonCaluphid - Friday, February 22, 2019

>However, how is VR on Nvidia with 6 GB VRAM? Is it doable/bearable/okay/great?

It's 'fine' - the GTX 1050 Ti is VR capable with only 4GB of VRAM, although it's not really advisable (see Craft Computing's 1050 Ti VR assessment on YouTube - it's perfectly usable and a fun experience). The RTX 2060 is a very capable VR GPU with 6GB of VRAM. VRAM isn't really what's critical for VR GPU performance anyway; it's more the raw compute performance needed to render the same scene from two viewpoints simultaneously. So I'd assess that the 1660 Ti is a perfectly viable entry-level VR GPU. Just don't expect miracles.

eastcoast_pete - Saturday, February 23, 2019

Thanks for the info! About the miracles: Learned a long time ago not to expect those from either Nvidia or AMD - fewer disappointments this way.

cfenton - Friday, February 22, 2019

You don't need a USB C port for VR, at least not with the two major headsets on the market today.