The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Meet The EVGA GeForce GTX 1660 Ti XC Black GAMING

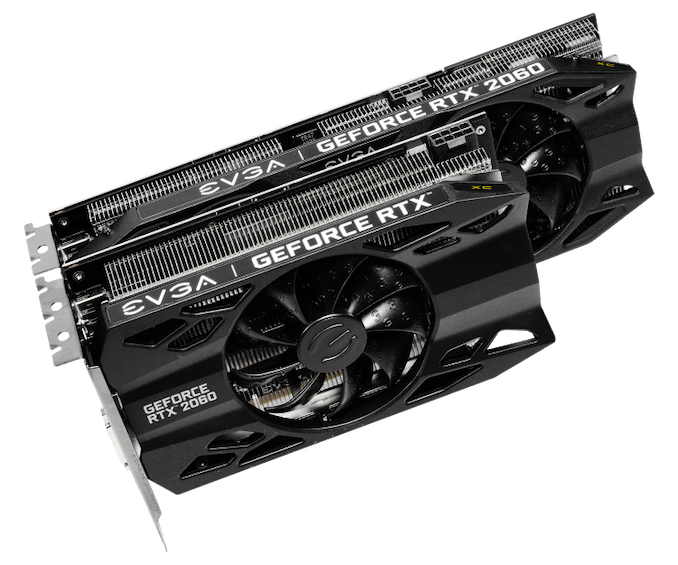

As a pure virtual launch, the GeForce GTX 1660 Ti release does not include a Founders Edition model, so everything is in the hands of NVIDIA’s add-in board partners. For today, we look at EVGA’s GeForce GTX 1660 Ti XC Black, a 2.75-slot single-fan card with reference clocks and a slightly increased TDP of 130W.

| GeForce GTX 1660 Ti Card Comparison | GTX 1660 Ti Ref Spec | EVGA GTX 1660 Ti XC Black GAMING |
|---|---|---|
| Base Clock | 1500MHz | 1500MHz |
| Boost Clock | 1770MHz | 1770MHz |
| Memory Clock | 12Gbps GDDR6 | 12Gbps GDDR6 |
| VRAM | 6GB | 6GB |
| TDP | 120W | 130W |
| Length | N/A | 7.48" |
| Width | N/A | 2.75-Slot |
| Cooler Type | N/A | Open Air |
| Price | $279 | $279 |

Seeing as the GTX 1660 Ti is intended to replace the GTX 1060 6GB, EVGA’s cooler and card design is new and improved compared to their Pascal cards. The design was first introduced with the RTX 20-series, when EVGA rolled out the iCX2 cooling design and the new “XC” card branding, complementing their existing SC and Gaming series. As we’ve seen before, the iCX platform comprises a medley of features, and some of the core technology is used even when the full iCX suite isn’t. For one, EVGA reworked their cooler design with hydraulic dynamic bearing (HDB) fans, which offer lower noise and a longer lifespan than sleeve and ball bearing types, and these are present on the EVGA GTX 1660 Ti XC Black.

In general, the card shares the design of the RTX 2060 XC, complete with the new raised EVGA ‘E’s on the fans, which are intended to improve slipstream. The single-fan RTX 2060 XC was paired with a thinner dual-fan XC Ultra variant, and in the same vein the GTX 1660 Ti XC Black is a single-fan design that effectively occupies three slots due to its thick heatsink and correspondingly taller fan hub. At only 7.48 inches long, though, the card is a natural fit for mini-ITX builds.

As one of the cards lower down the RTX 20 and now GTX 16 series stack, the GTX 1660 Ti XC Black also lacks LEDs and zero-dB fan capability, in which the fans turn off completely at low idle temperatures. The former is an eternal matter of taste, while the latter is more a matter of practicality, but both tend to be perks of premium models and/or higher-end GPUs. Putting price aside for the moment, the reference-clocked GTX 1660 Ti and RTX 2060 XC Black editions are the more mainstream variants anyhow.

Otherwise, the GTX 1660 Ti XC Black unsurprisingly lacks a USB-C/VirtualLink output, offering up the mainstream-friendly 1x DisplayPort/1x HDMI/1x DVI setup. Although the TU116 GPU still supports VirtualLink, the decision to implement it is up to partners; the feature is less applicable further down the stack, where cards are more cost-sensitive and less likely to be used for VR. Additionally, the 30W power budget for the USB-C controller could be a significant amount relative to the overall TDP.
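To put that 30W in perspective, the quick calculation below expresses it as a share of the 120W reference TDP and the 130W EVGA TDP from the table. It assumes, purely for illustration, that the full USB-C allowance would have to fit inside the card's rated board power; the helper name `share_of_tdp` is hypothetical.

```python
# Share of a board power budget that a 30W USB-C/VirtualLink allowance would
# represent. Assumption (illustrative only): the allowance counts against TDP.
USB_C_BUDGET_W = 30


def share_of_tdp(budget_w: float, tdp_w: float) -> float:
    """Return budget_w as a percentage of tdp_w."""
    return 100.0 * budget_w / tdp_w


# TDP figures taken from the card comparison table.
for label, tdp in [("GTX 1660 Ti reference", 120), ("EVGA XC Black", 130)]:
    print(f"{label}: {share_of_tdp(USB_C_BUDGET_W, tdp):.1f}% of {tdp}W")
```

This prints 25.0% for the reference card and 23.1% for the EVGA card, roughly a quarter of the power budget either way.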

And on the topic of power, the GTX 1660 Ti XC Black’s power limit is actually capped at the default 130W, though theoretically the card’s single 8-pin PCIe power connector could supply 150W on its own.
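For a rough sense of the headroom left unused, the sketch below adds the PCIe x16 slot's nominal 75W allowance to the 8-pin connector's 150W rating and compares the total with the fixed 130W limit. This is a spec-sheet ceiling only and says nothing about what the card's VRM or cooler could actually sustain; `spec_power_ceiling` is a hypothetical helper.

```python
# Nominal power delivery limits from the PCI Express specifications.
PCIE_X16_SLOT_W = 75   # PCIe x16 slot allowance
PCIE_8PIN_W = 150      # one 8-pin PCIe auxiliary connector


def spec_power_ceiling(slot_w: int, connectors_w: list[int]) -> int:
    """Sum the slot allowance and every auxiliary connector's rating."""
    return slot_w + sum(connectors_w)


ceiling = spec_power_ceiling(PCIE_X16_SLOT_W, [PCIE_8PIN_W])
power_limit = 130  # EVGA GTX 1660 Ti XC Black's fixed power limit
print(f"spec ceiling {ceiling}W, power limit {power_limit}W, "
      f"unused {ceiling - power_limit}W")
```

Even ignoring the slot entirely, the 8-pin connector alone is rated 20W above the card's cap.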

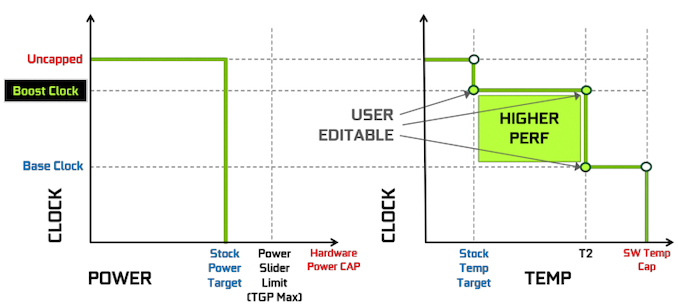

The other GPU-tweaking knobs are there for your overclocking needs, and for EVGA this goes hand-in-hand with Precision, their overclocking utility. For NVIDIA’s Turing cards, EVGA released Precision X1, which allows modifying the voltage-frequency curve and scanning for an auto-overclock as part of Turing’s GPU Boost 4. Of course, NVIDIA’s restriction on actual overvolting is still in place, and for Turing there is a cap of 1.068V.
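As a conceptual sketch of what a voltage-frequency curve edit amounts to, the following models the curve as a list of (voltage, frequency) points, applies a uniform frequency offset, and discards any point above the 1.068V cap. The curve points are made up for illustration and do not come from a real TU116 card, and this is not how Precision X1 or GPU Boost 4 are implemented internally; `VFPoint` and `apply_offset` are hypothetical names.

```python
from typing import NamedTuple

VOLTAGE_CAP_V = 1.068  # Turing's overvolting cap, as stated in the article


class VFPoint(NamedTuple):
    volts: float
    mhz: int


def apply_offset(curve, offset_mhz, cap_v=VOLTAGE_CAP_V):
    """Shift every point's frequency by offset_mhz and drop points over cap_v."""
    return [VFPoint(p.volts, p.mhz + offset_mhz) for p in curve if p.volts <= cap_v]


# Hypothetical curve points, for illustration only.
stock_curve = [VFPoint(0.800, 1500), VFPoint(0.900, 1650),
               VFPoint(1.000, 1770), VFPoint(1.068, 1860), VFPoint(1.100, 1900)]

tuned = apply_offset(stock_curve, 100)
print(max(p.mhz for p in tuned))  # highest clock reachable under the cap
```

With these illustrative numbers the 1.100V point is discarded, so the +100MHz offset tops out at 1960MHz on the 1.068V point.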

157 Comments


Midwayman - Friday, February 22, 2019

I feel like they don't realize that until they improve the performance per $$$ there is very little reason to upgrade. I'm happy sitting on an older card until that changes. Though if I were on a lower-end card I might be kicking myself for not just buying a better card years ago.

eva02langley - Friday, February 22, 2019

Since prices moved up so much relative to performance at the higher price points compared with the last generation, there is absolutely no reason to upgrade. It's different if you need a GPU.

zmatt - Friday, February 22, 2019

Agreed. It's kind of wild that I have to pay $350 to get on average 10fps better than my 980ti. If I want a real, solid performance improvement I have to pay essentially the same today as when the 980ti was brand new. The 2070 is anywhere between $500-$600 right now depending on model and features. IIRC the 980ti was around $650. And according to AnandTech's own benchmarks it gives on average 20fps better performance. That's 2 generations and 5 years, and I get 20fps for $50 less? No. I should have a 100% performance advantage for the same price by this point. Nvidia is milking us. I'm eyeballing it a bit here, but the 2080Ti is a little over double the performance of a 980Ti. It should cost less than $700 to be a good deal.

Samus - Friday, February 22, 2019

I agree in that this card is a tough sell over an RTX 2060. Most consumers are going to spend the extra $60-$70 for what is a faster, more well-rounded and future-proof card. If this were $100 cheaper it'd make some sense, but it isn't.

PeachNCream - Friday, February 22, 2019

I'm not so sure about the value prospects of the 2070. The banner feature, real-time ray tracing, is quite slow even on the most powerful Turing cards and doesn't offer much of a graphical improvement for the variety of costs involved (power and price mainly). That positions the 1660 as a potentially good-selling graphics card AND endangers the adoption of said ray tracing, such that it becomes a less appealing feature for game developers to implement. Why spend the cash on supporting a feature that reduces performance and isn't supported on the widest possible variety of potential game buyers' computers, and why support it now when NVIDIA seems to have flinched and released the 1660 in a show of a lack of commitment? Game studios have already ditched SLI now that DX12 pushed support off GPU companies and onto price-sensitive game publisher studios. We aren't even seeing the hyped-up feature of SLI between a dGPU and iGPU, which would have been an easy win on the average gaming laptop, due in large part to cost sensitivity and risk aversion at the game studios (along with a healthy dose of "console first, PC second" prioritization FFS).

GreenReaper - Friday, February 22, 2019

What I think you're missing is that the DirectX rendering API set by Microsoft will be implemented by all parties sooner or later. It really *does* meet a need which has been approximated in any number of ways previously. Next-generation consoles are likely to have it as a feature, and if so, all the AAA games for which it is relevant are likely to use it.

Having said that, the benefit for this generation is . . . dubious. The first generation always sells at a premium, and having an exclusive even more so; so unless you need the expanded RAM or other features that the higher-spec cards also provide, it's hard to justify paying it.

alfatekpt - Monday, February 25, 2019

I'm not sure about that. It is also an increase in thermals and power consumption that also costs money over time. The RTX advantage is basically null at this point unless you want to play at low FPS, so the 2060's advantage is 'merely' raw performance.

For most people and current games the 1660 already offers great performance, so I'm not sure people are gonna shell out even more money for the 2060, since the 1660 is already a tad expensive.

The 1660 seems to be an awesome combination of performance and efficiency. Would it be better at $50 lower? Of course, but why would they do that, since they don't have real competition from AMD...

Strunf - Friday, February 22, 2019

Why would Nvidia give up on a market that costs them almost nothing? If 5 years from now they do cloud gaming, then they are pretty much still doing GPUs.

Anyway, even in 5 years cloud gaming will still be a minor part of the GPU market.

MadManMark - Friday, February 22, 2019

"They are pushing prices up and up but that's not a long term strategy."

That comment completely ignores the massive increase in value over both the RX 590 and Vega 56. Nvidia produces a card that both makes the RX 590 at the same price point completely unjustifiable and prompts AMD to cut the price of the Vega 56 in HALF overnight, and you are saying that it is *Nvidia*, not *AMD*, that is charging high prices?!?! I've always thought the AMD GPU fanatics who think AMD delivers more value were somewhat delusional, but this comment really takes the cake.

eddman - Saturday, February 23, 2019

It's not about AMD. The launch prices have clearly been increased compared to previous-gen Nvidia cards.

Even this card is $30 more than the general $200-250 range.