The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

When NVIDIA put their plans for their consumer Turing video cards into motion, the company bet big, and in more ways than one. In the first sense, NVIDIA dedicated whole logical blocks to brand-new graphics and compute features – ray tracing and tensor core compute – and they would need to sell developers and consumers alike on the value of these features, something that is no easy task. In the second sense however, NVIDIA also bet big on GPU die size: these new features would take up a lot of space on the 12nm FinFET process they’d be using.

The end result is that all of the Turing chips we’ve seen thus far, from TU102 to TU106, are monsters in size; even TU106 is 445mm², never mind the flagship TU102. And while the full economic consequences that go with that decision are NVIDIA’s to bear, for the first year or so of Turing’s life, all of that die space that is driving up NVIDIA’s costs isn’t going to contribute to improving NVIDIA’s performance in traditional games; it’s a value-added feature. Which is all workable for NVIDIA in the high-end market where they are unchallenged and can essentially dictate video card prices, but it’s another matter entirely once you start approaching the mid-range, where the AMD competition is alive and well.

Consequently, in preparing for their cheaper, sub-$300 Turing cards, NVIDIA had to make a decision: do they keep the RT and tensor cores in order to offer these features across the line – at a literal cost to both consumers and NVIDIA – or do they drop these features in order to make a leaner, more competitive chip? As it turns out, NVIDIA has opted for the latter, producing a new Turing GPU that is leaner and meaner than anything that’s come before it, but also very different from its predecessors for this reason.

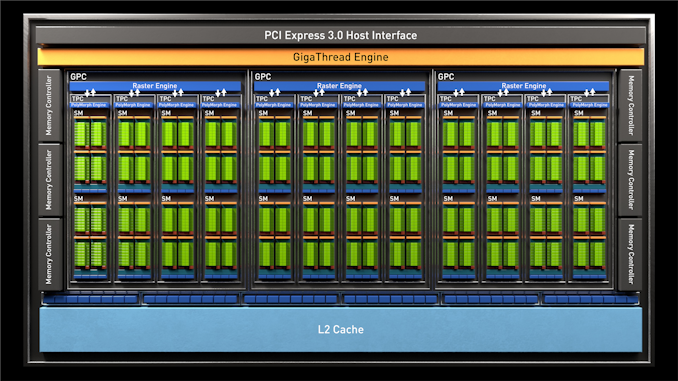

That GPU is TU116, and it’s part of what will undoubtedly become a new sub-family of Turing GPUs for NVIDIA as the company starts rolling out Turing into the lower half of the video card market. Kicking things off in turn for this new GPU is NVIDIA’s latest video card, the GeForce GTX 1660 Ti. Launching today at $279, it’s destined to replace NVIDIA’s GTX 1060 6GB in the market and is NVIDIA’s new challenger for the mainstream video card market.

NVIDIA GeForce Specification Comparison

| | GTX 1660 Ti | RTX 2060 Founders Edition | GTX 1060 6GB (GDDR5) | RTX 2070 |
|---|---|---|---|---|
| CUDA Cores | 1536 | 1920 | 1280 | 2304 |
| ROPs | 48 | 48 | 48 | 64 |
| Core Clock | 1500MHz | 1365MHz | 1506MHz | 1410MHz |
| Boost Clock | 1770MHz | 1680MHz | 1708MHz | 1620MHz (FE: 1710MHz) |
| Memory Clock | 12Gbps GDDR6 | 14Gbps GDDR6 | 8Gbps GDDR5 | 14Gbps GDDR6 |
| Memory Bus Width | 192-bit | 192-bit | 192-bit | 256-bit |
| VRAM | 6GB | 6GB | 6GB | 8GB |
| Single Precision Perf. | 5.5 TFLOPS | 6.5 TFLOPS | 4.4 TFLOPS | 7.5 TFLOPS (FE: 7.9 TFLOPS) |
| "RTX-OPS" | N/A | 37T | N/A | 45T |
| SLI Support | No | No | No | No |
| TDP | 120W | 160W | 120W | 175W (FE: 185W) |
| GPU | TU116 (284 mm²) | TU106 (445 mm²) | GP106 (200 mm²) | TU106 |
| Transistor Count | 6.6B | 10.8B | 4.4B | 10.8B |
| Architecture | Turing | Turing | Pascal | Turing |
| Manufacturing Process | TSMC 12nm "FFN" | TSMC 12nm "FFN" | TSMC 16nm | TSMC 12nm "FFN" |
| Launch Date | 2/22/2019 | 1/15/2019 | 7/19/2016 | 10/17/2018 |
| Launch Price | $279 | $349 | MSRP: $249, FE: $299 | MSRP: $499, FE: $599 |

We’ll go into the full ramifications of what NVIDIA has (and hasn’t) taken out of TU116 on the next page, but at a high level it’s still every bit a Turing GPU, save the RTX functionality (RT and tensor cores). This means that it has the same core architecture in its SMs, and is directly comparable to the likes of the RTX 2060. Or to flip things around the other direction, versus the older Pascal and Maxwell-based video cards, it comes with all of Turing’s performance and efficiency benefits for traditional graphics workloads.

Compared to RTX 2060 then, the GTX 1660 Ti is actually rather similar. For this fully-enabled TU116 card, NVIDIA has dialed back on the number of SMs a bit, going from 30 to 24, and memory clockspeeds have dropped as well, from 14Gbps to 12Gbps. But past that, the two cards are closer in specifications than we might expect to see for a $70 price tag difference, especially as NVIDIA has kept the 6GB of GDDR6 on a 192-bit memory bus. In an added quirk, the GTX 1660 Ti actually has a slightly higher average boost clockspeed than the RTX 2060, with its 1770MHz clockspeed giving it a 5% edge here.

The end result is that, on paper, the GTX 1660 Ti actually has a bit more ROP pixel pushing power than its bigger sibling thanks to that 5% boost clock advantage. However the drop in the SM count definitely hits compute and texture performance, where GTX 1660 Ti is going to deliver around 85% of RTX 2060’s compute and shading throughput. Or to frame things in reference to the GTX 1060 6GB it replaces, the new card offers around 24% more compute/shader throughput (before taking architecture into account), a much smaller 4% increase in ROP throughput, and a very sizable 50% increase in memory bandwidth.
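For readers who want to check the paper math, here is a minimal Python sketch of how these ratios fall out of the spec table. It assumes the usual back-of-the-envelope model (two FLOPs per CUDA core per clock, one pixel per ROP per clock, everything running at the rated boost clock); the function and dictionary names are ours, purely for illustration.

```python
# Paper throughput derived from the spec table (rated boost clocks, not measured clocks).
CARDS = {
    "GTX 1660 Ti": {"cuda_cores": 1536, "rops": 48, "boost_mhz": 1770},
    "RTX 2060": {"cuda_cores": 1920, "rops": 48, "boost_mhz": 1680},
    "GTX 1060 6GB": {"cuda_cores": 1280, "rops": 48, "boost_mhz": 1708},
}


def fp32_tflops(card):
    # Assumption: one fused multiply-add (2 FLOPs) per CUDA core per clock.
    return 2 * card["cuda_cores"] * card["boost_mhz"] / 1e6


def pixel_rate_gpix(card):
    # Assumption: one pixel per ROP per clock.
    return card["rops"] * card["boost_mhz"] / 1e3


def ratio(metric, a, b):
    # How card `a` compares to card `b` on the given paper metric.
    return metric(CARDS[a]) / metric(CARDS[b])


if __name__ == "__main__":
    for other in ("RTX 2060", "GTX 1060 6GB"):
        print(other,
              f"compute x{ratio(fp32_tflops, 'GTX 1660 Ti', other):.3f}",
              f"ROP x{ratio(pixel_rate_gpix, 'GTX 1660 Ti', other):.3f}")
```

Under those assumptions the GTX 1660 Ti lands at roughly 84% of the RTX 2060's shader throughput with about 5% more pixel fill rate, and at about 124% and 104% of the GTX 1060 6GB respectively. The same formula gives about 5.4 TFLOPS for the GTX 1660 Ti, a touch under the 5.5 TFLOPS listed in the table.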

Speaking of memory bandwidth, NVIDIA’s continued use of a 192-bit memory bus in this segment continues to be a somewhat vexing choice since it leads to such odd memory amounts. I’ll fully admit I would have liked to have seen 8GB here, but then that was the case for RTX 2060 as well. The flip side being that at least they aren’t trying to ship a card with just a 128-bit memory bus, as was the case for GTX 960. This puts GTX 1660 Ti in an interesting spot in terms of memory bandwidth, since it’s benefitting from the jump to GDDR6; if you thought the GTX 1060 could use a little more memory bandwidth, GTX 1660 Ti gets it in spades. This has also allowed NVIDIA to opt for cheaper 12Gbps GDDR6 VRAM, marking the first time we’ve seen this in any video card.
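The link between bus width, memory speed, and those "odd" capacities can be sketched in a few lines of Python. The bandwidth formula is standard; the capacity helper assumes one 32-bit-wide, 1GB memory chip per slice of the bus, which is our illustrative assumption rather than something the spec table states.

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth: each bus pin moves `data_rate_gbps` gigabits per second."""
    return bus_width_bits * data_rate_gbps / 8  # divide by 8 to go from bits to bytes


def capacity_gb(bus_width_bits: int, chip_width_bits: int = 32, chip_gb: int = 1) -> int:
    """Total VRAM assuming one chip per 32-bit slice of the bus (illustrative layout)."""
    return (bus_width_bits // chip_width_bits) * chip_gb


if __name__ == "__main__":
    print("GTX 1660 Ti :", bandwidth_gb_s(192, 12), "GB/s")
    print("GTX 1060 6GB:", bandwidth_gb_s(192, 8), "GB/s")
    print("RTX 2060    :", bandwidth_gb_s(192, 14), "GB/s")
    print("192-bit bus ->", capacity_gb(192), "GB; 256-bit bus ->", capacity_gb(256), "GB")
```

With the table's figures this gives 288GB/sec for the GTX 1660 Ti against 192GB/sec for the GTX 1060 6GB, which is where the 50% increase comes from. Under the one-chip-per-32-bits assumption, it also shows why a 192-bit bus lands on 6GB while 8GB would call for a 256-bit bus.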

Finally, taking a look at power consumption, we see that NVIDIA is going to be holding the line at 120W, which is the same TDP as the GTX 1060 6GB. This is notable because all of the other Turing cards to date have had higher TDPs than the cards they replace, leading to a broad case of generational TDP inflation. Of course we’ll see what actual power consumption is like in our testing, but right off the bat NVIDIA is setting up GTX 1660 Ti to be noticeably more power efficient than the RTX 20 series cards.
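As a rough illustration of that on-paper efficiency gap, the sketch below divides peak FP32 throughput by rated TDP using the reference figures from the spec table. TDP is a design target rather than measured power draw, and the two-FLOPs-per-core-per-clock model ignores the RT and tensor hardware entirely, so treat the output as a paper comparison only; the names are ours.

```python
# Paper efficiency: peak FP32 GFLOPS per watt of rated TDP (reference specs).
SPECS = {
    # name: (CUDA cores, boost clock in MHz, TDP in watts), all from the spec table
    "GTX 1660 Ti": (1536, 1770, 120),
    "RTX 2060": (1920, 1680, 160),
    "RTX 2070": (2304, 1620, 175),
    "GTX 1060 6GB": (1280, 1708, 120),
}


def gflops_per_watt(name: str) -> float:
    cores, boost_mhz, tdp_w = SPECS[name]
    # Assumption: 2 FLOPs (one fused multiply-add) per CUDA core per clock.
    peak_gflops = 2 * cores * boost_mhz / 1000
    return peak_gflops / tdp_w


if __name__ == "__main__":
    for name in SPECS:
        print(f"{name:13s} {gflops_per_watt(name):5.1f} GFLOPS/W")
```

By this measure the GTX 1660 Ti comes out at roughly 45 GFLOPS per watt, against about 40 for the RTX 2060 and about 36 for the GTX 1060 6GB.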

Wait, It's a GTX Card?

Along with the new TU11x family of GPUs, for this launch NVIDIA is also creating a new family of video cards: the GeForce GTX 16 series. With GTX 1660 Ti and its obligatory siblings lacking support for NVIDIA’s RTX family of features, the company has decided to clarify their product naming in a way that only NVIDIA can. The end result is that along with keeping the GTX prefix rather than RTX – since these parts obviously lack RTX functionality – the company is also giving them a lower series number. Overall it’s probably for the best that NVIDIA didn’t include these cards with the 20 series, lest we get another GeForce 4 situation.

But on the flip side, the number “16” also doesn’t have any great meaning to it; other than not being “20” the number is somewhat arbitrary. According to NVIDIA, they essentially picked it because they wanted a number close to 20 to indicate that the new GPU is very close in functionality and performance to TU10x, and thus “16” instead of “11” or the like. Of course I’m not sure calling it the GTX 1660 Ti is doing anyone any favors when the next card up is the RTX 2060 (sans Ti), but there’s nonetheless a somewhat clear numerical progression here – and at least for the moment, one not based on memory capacity.

Price, Product Positioning, & The Competition

Moving on, unlike NVIDIA’s other Turing card launches up until now – and unlike the GTX 1060 6GB – the GTX 1660 Ti is not getting a reference card release. Instead this is a pure virtual launch, as NVIDIA calls it, meaning all the cards hitting the shelves are customized vendor cards. Traditionally these launches tend to be closer to semi-custom cards – partners tend to use NVIDIA’s internal reference board design for their first cards – so we’ll have to see what pops up over the coming weeks and months. For now then, this means we’re going to see a lot of single and dual-fan cards, similar to the kinds of designs used for a lot of the GTX 1060 cards and some of the RTX 2070 cards.

Another constant across the Turing family has been price inflation, and the GTX 1660 Ti is no exception. With a launch price of $279, the new card is launching at $30 above the GTX 1060 6GB it replaces. This is a lot better than the $349 that NVIDIA wants for the RTX 2060, but for anyone who thought that the $250 price tag of the GTX 1060 was a fluke, it’s now clear that sub-$300 is the new norm for xx60 cards, and not sub-$200 as the GTX 960 flirted with. It’s also worth noting that NVIDIA won’t be launching with any bundles here; neither the RTX Game On bundle nor the GTX 1060 Fortnite bundles will be in play, so what you see is what you get.

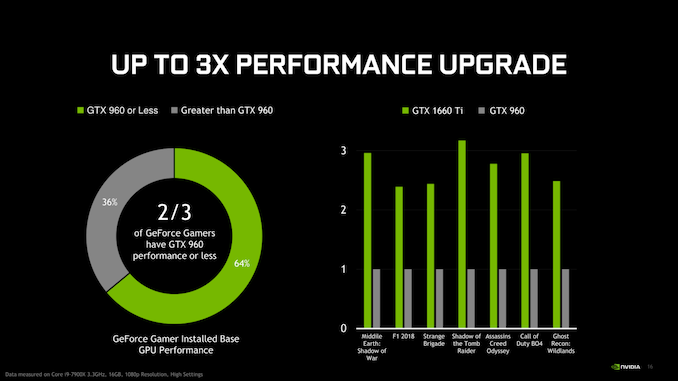

In terms of positioning against their own cards, NVIDIA is rolling out the GTX 1660 Ti as the successor to the GTX 1060 6GB, the latter of which are becoming increasingly rare in the market as NVIDIA’s unplanned Pascal stockpile is finally drawn down. So the GTX 1660 Ti and GTX 1060 won’t be sharing space on store shelves for long. However like the other Turing cards, the GTX 1660 Ti is not a true generational successor to the GTX 1060; at roughly 36% faster, NVIDIA is not expecting anyone to upgrade from their mid-range Pascal card to this. Instead, NVIDIA’s marketing efforts are going to be heavily focused on enticing GTX 960 users, who are a further generation back, to finally upgrade. In that respect the GTX 1660 Ti has a very large performance advantage, but this may be a tough sell since the GTX 960 launched at a much cheaper $199 price point.

As for AMD, the launch of the GTX 1660 Ti finally puts a Turing card in competition with their Polaris cards, particularly the $279 Radeon RX 590, a fight that the Radeon cannot win. While AMD hasn’t announced any price changes for the RX 590 at this time, AMD will have little choice but to bring it down in price.

Instead, AMD’s competitor for the GTX 1660 Ti looks like it will be the Radeon RX Vega 56. The company sent word last night that they are continuing to work with partners to offer lower promotional prices on the card, including a single model that was available for $279, but as of press time has since sold out. Notably, AMD is asserting that this is not a price drop, so there’s an unusual bit of fence sitting here; the company may be waiting to see what actual, retail GTX 1660 Ti card prices end up like. So I’m not wholly convinced we’re going to see too many $279 Vega 56 cards, but we’ll see. If nothing else, AMD’s Raise the Game Bundle is being offered, giving them an edge over NVIDIA in terms of pack-in games.

Q1 2019 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon RX Vega 64 | $499 | GeForce RTX 2070 |
| | $349 | GeForce RTX 2060 |
| | $329 | GeForce GTX 1070 |
| Radeon RX Vega 56*, Radeon RX 590 | $279 | GeForce GTX 1660 Ti |
| | $249 | GeForce GTX 1060 6GB (1280 cores) |
| Radeon RX 580 (8GB) | $179/$189 | GeForce GTX 1060 3GB (1152 cores) |

157 Comments


Fallen Kell - Friday, February 22, 2019 - link

I don't have any such hopes for Navi. The reason is that AMD is still competing for the console and part of that is maintaining backwards compatibility for the next generation of consoles with the current gen. This means keeping all the GCN architecture so that graphics optimizations coded into the existing games will still work correctly on the new consoles without the need for any work.

GreenReaper - Friday, February 22, 2019 - link

Uh... I don't think that follows. Yes, it will be a bonus if older games work well on newer consoles without too much effort; but with the length of a console refresh cycle, one would expect a raw performance improvement sufficient to overcome most issues. But it's not as if when GCN took over from VLIW, older games stopped working; architecture change is hidden behind the APIs.

Korguz - Friday, February 22, 2019 - link

Fallen Kell" The reason is that AMD is still competing for the console and part of that is maintaining backwards compatibility for the next generation of consoles with the current gen. "

prove it... show us some links that state this

Korguz - Friday, February 22, 2019 - link

CiccioB" GCN was dead at its launch time"

prove it.. links showing this, maybe .....

CiccioB - Saturday, February 23, 2019 - link

Aahahah.. prove it.

9 years of discounted sell are not enough to show you that GCN simply started as half a generation old architecture to end as a generation obsolete one?

Yes, you may recall the only peak glory in GCN life, that is Hawaii, which was so discounted that made nvidia drop the price of their 780Ti. After that move AMD just brought up a fail after the other, starting with Fiji and it's monster BOM cost just to reach the much cheaper GM200 based cards and following with Polaris (yes, the once labeled "next AMD generation is going to dominate") and then again with Vega and Vega VII is not different.

What have you not understood that AMD has to use whatever technology available to get a performance near that of a 2080? What do you think will be the improvements nvidia will achieve once they will move to 7nm (or 7nm+)?

Today AMD is incapable to get to nvidia performances and they also lack their modern features. GCN at 7nm can be as fast as a 1080Ti that is 3 years older. Despite the AMD card still uses more power, which still shows how inefficient GCN architecture is.

That's why I hope Navi is a real improvement, or we will be left with nvidia monopoly as at 7nm it will really have more than generation of advantage, seen it will be much more efficient and still having new features that AMD will then add only in 3 or 4 years from now.

Korguz - Saturday, February 23, 2019 - link

links to what you stated ??? sounds a little like just your opinion, with no links....

considering that AMD doesnt have the deep pockets that Nvidia has, or the part that amd has to find the funds to R&D BOTH cpus, AND gpus, while nvida can put all the funds that can into R&D, it seems to me that AMD has done ok for that that have had to to work with over the years, with Zen doing well in the CPU space, they might start to have a little more funds to put back into their products, and while i havent read much about Navi, i am also hopeful that it may give nvidia some competition, as we sure do need it...

CiccioB - Monday, February 25, 2019 - link

It seems you lack the basic intelligence to understand the facts that can be seen by anyone else.

You just have hopes that are based on "your opinion", not facts.

"they might start to have a little more funds to put back into their products". Well, last quarter with Zen selling like never before they managed to have a 28 millions net income.

Yes, you read right. 28. Nvidia with all its problems got more than 500. Yes, you read right. About 20 times more.

The facts are 2 (this are numbers based on facts, not opinion, and you can create interpretations on facts, not your hopes of the future):

- AMD is selling Ryzen CPU at a discount like GPUs and boths have a 0.2% net margin

- The margins they have on CPU can't compensate the looses they have in the GPU market, and seen that they manage to make some money with console only when there are some spike of requests, I am asking you when and from what AMD will get new funds to pay something.

You see, it's not that really "a hope" believing that AMD is loosing money for every Vega they sell seen the cost of the BOM with respect to the costs of the competition. Negating this is a "hope it is not real", not a sensible way to ask for "facts".

You have to know at least basic facts before coming here to ask for links on basic things that everyone that knows this market already knows.

If you want to start looking less idiot than you do by asking constantly for "proves and links", just start reading quarter results and see what are the effects of the long period strategies both companies have achieved.

Then, if you have a minimum of technical competence (which I doubt) look at what AMD does with its mm^2 and Watts and what nvidia does.

Then come again to ask for "links" where I can tell you that AMD architecture is one generation behind (and will be probably left once nvidia will pass to 7nm.. unless Navi is not GCN).

Do you have other intelligent questions to pose?

Korguz - Wednesday, February 27, 2019 - link

right now.. your facts.. are also just your opinion, i would like to see where you get your facts, so i can see the same, thats why i asked for links...again.. AMD is fighting 2 fronts, CPUs AND gpus.. Nvida.. GPU ONLY, im not refuting your " facts " about how much each is earning...its obvious... but.. common sense says.. if one company has " 20 times " more income then the other.. then they are able to put more back into the business, then the other... that is why, for the NHL at least, they have have salary caps, to level the playing field so some teams, cant " buy " their way to winning... thats just common sense...

" AMD is selling Ryzen CPU at a discount like GPUs and boths have a 0.2% net margin "

and where did you read this.. again.. post a link so we can see the same info... where did you read how much it costs AMD to make losing money on each Vega GPU ?? again.. post links so we can see the SAME info you are...

" You have to know at least basic facts before coming here to ask for links on basic things that everyone that knows this market already knows. " and some would like to see where you get these " facts " that you keep talking about... thats basic knowledge.

" if you have a minimum of technical competence " actually.. i have quite a bit of technical competence, i build my own comps, even work on my own car when i am able to. " look at what AMD does with its mm^2 and Watts and what nvidia does. " that just shows nvidia's architecture is just more efficient, and needs less power for that it does...

lastly.. i have been civil and polite to you in my posts.. resorting to name calling and insults, does not prove your points, or make your supposed " facts " and more real. quite frankly.. resorting to name calling and insults, just shows how immature and childish you are.. that is a fact

CiccioB - Thursday, February 28, 2019 - link

Did you read last (and understood) AMD latest quarter results?

Have you seen the total cost of production and the relative net income?

Have you an idea of how the margin is calculated (yes, it takes into account the production costs that are reported in the quarter results)?

Have you understood half of what I have written when based on facts that AMD just made $28 of NET income last quarter there are 2 possible ways of seeing the cause of those pitiful numbers? One is that AMD is discounting every product (GPU and CPU) to a ridiculous margin, the other that Zen is sold at a profit while GPUs are not? Or you may try to hypnotize the third view, that they are selling Zen at a loss and GPU with a big margin. Anything is good, but at least one of these is true. Take the one you prefer and then try to think which one requires less artificial hypothesis to be true (someone once said that the best solution is most often the most simple one).

That demonstrates that nvidia architecture is simply one generation ahead, as what changes from a generation to the other is usually the performance you can get from a certain die area which sucks a certain amount of energy and just is the reason because a x80 level card today costs >$1000. If you can get the same 1080Ti performance (and so I'm not considering the new features nvidia has added to Turing) by using a complete new PP node, more current and HBM just 3 years later, then you may arrive at the conclusion that something is not working correctly on what you have created.

So my statement that GCN was dead at launch (when a 7970 was on par with a GTX680 which was smaller and uses much less energy) just finds it perfect demonstration in Vega 20 where GCN is simply 3 years back with a BOM costing at least twice that of the 1080Ti (and still using more energy).

Now, if you can't understand the minimum basic facts and your hope is that their interpretation using a completely genuine and coherent thesis is wrong and requires "fact and links", than it is no my problem.

Continue to hope that what I wrote is totally rubbish and that you are the one with the right answer to this particular situation. However what I said is completely coherent with the facts that we have been witnessing from GCN launch up to now.

Korguz - Friday, March 1, 2019 - link

" Have you seen the total cost of production and the relative net income? "

no.. thats is why i asked you to post where you got this from, so i can see the same info, what part do YOU not understand ?

" based on facts that AMD just made $28 of NET income last quarter" because you continue to refuse to even mention where you get these supposed facts from, i think your facts, are false.

" One is that AMD is discounting every product (GPU and CPU) to a ridiculous margin"

and WHERE have you read this??? has any one else read this, and can verify it?? i sure dont remember reading anything about this, any where

what does being hypnotized have to do with anything ?? do you even know what hypnotize means ?

just in case, this is what it means :

to put in the hypnotic state.

to influence, control, or direct completely, as by personal charm, words, or domination: The speaker hypnotized the audience with his powerful personality.

again.. resorting to being insulting means you are just immature and childish....

look.. you either post links, or mention where you are getting your facts and info from, so i can also see the SAME facts and info with out having to spend hours looking.. so i can make the the same conclusions, or, admit, you CANT post where you get your facts and info from, because, they are just your opinion, and nothing else.. but i guess asking a child to mention where they get their facts and info from, so one can then see the same facts and info, is just to much to ask...