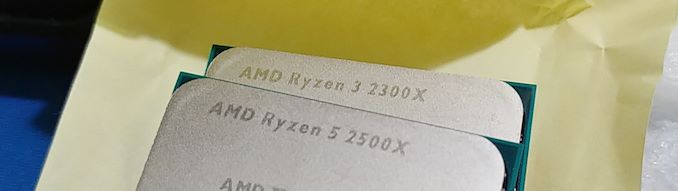

The AMD Ryzen 5 2500X and Ryzen 3 2300X CPU Review

by Ian Cutress on February 11, 2019 11:45 AM EST

AMD Ryzen 5 2500X and Ryzen 3 2300X Conclusion

With AMD only offering the Ryzen 5 2500X and Ryzen 3 2300X to large OEMs, it allows AMD to move some of the risk of supporting multiple CPUs and stock levels in the channel to its partners. It also pleases the partners to have special parts and build products around them, especially when those parts are competitive. Unfortunately for the end user, it means that if these parts are competitive, they have to buy a prebuilt system to get one, rather than build their own. To add to the quandary, these processors fall right into the price segment of its own very competitive APUs, and the company had to decide whether to have a CPU/APU crossover at retail, or a specific separation between the two. AMD went for the latter.

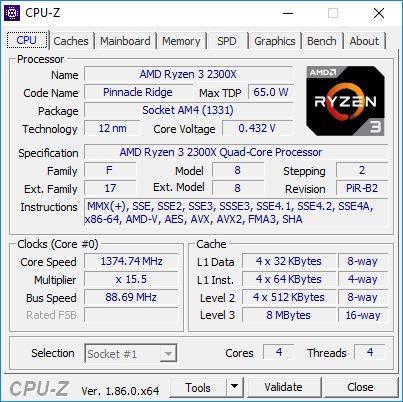

The reasons behind the way AMD organises its retail product stack aside, the 2500X and 2300X actually fall into two small gaps in the lineup.

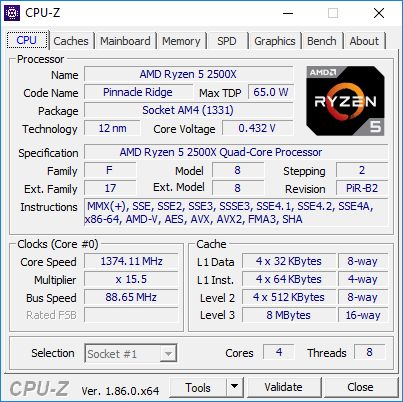

The quad core 2500X with simultaneous multithreading sits between the 2400G at $145 with similar specs, slightly lower frequency, and much lower power consumption, and the 2600 at $160 with two more cores. There's arguably no room in there for the 2500X - and we see in our benchmarks it fits between both pretty easily.

On the other hand, the quad core 2300X without simultaneous multithreading is more akin to a faster 2200G, albeit without the integrated graphics, and butts up against the 2400G above it. The gap for the 2300X is bigger, between the 2200G at $95 and the 2400G at $145, so there could easily be an argument for a faster 2200G for discrete graphics users. On performance, the 2300X handsomely beats the 2200G due to consuming more power per core, and even takes the 2400G on lightly threaded tests, but it is less power efficient. For small form factor systems the APUs still win, but in raw CPU and gaming performance, the 2300X easily fits between the two APUs, especially if it were priced at $110 or so, rather than $130.

So where does this leave AMD? In my professional opinion, the 2300X could be a really nice low-end processor for users that don't want to spend money on integrated graphics they won't use. Add in a 95W stock cooler and at $100-$110, it would be a really nice chip. The 2500X is a harder sell. For the small price gap it fits into, I would tell users to bite the bullet and go for the 2600, or if they need integrated graphics, to get the 2400G. The only way the 2500X makes sense is if the 2400G or 2600 isn't available in a particular local market.

It will be interesting to see if AMD ever sells these at retail. There are four other chips they are not selling at retail either (at least, not retail worldwide): the 2700E, 2600E, 2400GE, and 2200GE. If anyone finds any of these for sale, let me know over email or Twitter @IanCutress


65 Comments


Le Québécois - Monday, February 11, 2019 - link

Ian, any reason why more often than not, you seem to "skip" 1440 in your benchmarks? It's only present for a few games. Considering the GTX 1080, your best card, is always the bottleneck at 4K, as your numbers show, wouldn't it make more sense to focus more on 1440 instead?

Especially considering it's the "best" resolution on the market if you are looking for a high pixel density yet still want to run your games at playable levels of fps.

Ian Cutress - Monday, February 11, 2019 - link

Some benchmarks are run at 1440p. Some go up to 8K. It's a mix. There's what, 10 games there? Not all of them have to conform to the same testing settings.

Le Québécois - Tuesday, February 12, 2019 - link

Sorry for the confusion. I can clearly see we've got very different settings in that mix. I guess a more direct question would be: why do it this way and not with a more standardized series of tests? A followup question would also be, why 8K? You are already GPU limited at 4K so your 8K results are not going to give any relevant information about those CPUs.

Sorry, I don't mean to criticize, I simply wish to understand your thought process.

MrSpadge - Monday, February 11, 2019 - link

What exactly do you want to see there that you can't see at 1080p? Differences between CPUs are going to be muddied due to approaching the GPU limit, and that's it.

Le Québécois - Tuesday, February 12, 2019 - link

Well, at 1080, you can definitely see the difference between them, and exactly like you said, at 4K, it's all the same because of the GPU limitations. 1440 seems more relevant than 4K considering this. This is after all, a CPU review and most of the 4K results could be summed up by "they all perform within a few %".

neblogai - Monday, February 11, 2019 - link

End of page 19: R5 2600 is really 65W TDP, not 95W.

Ian Cutress - Monday, February 11, 2019 - link

Doh, a typo in all my graphs too. Should be updated.

imaheadcase - Monday, February 11, 2019 - link

I'm on phone on AT and truly see how terrible ads are now. AT straight up letting scam ads now being served because desperate for revenue. 😂

PeachNCream - Monday, February 11, 2019 - link

Is there a point in even mentioning that given how little control they now have over advertising? Just fire up the ad blocker or visit another site and let the new owners figure it out the hard way.

StevoLincolnite - Tuesday, February 12, 2019 - link

Anandtech had malware/viruses infect its userbase years ago via crappy adverts. That was the moment I got Ad-Block. And that is the moment where I will never turn it off again.