Intel to Discontinue Itanium 9700 ‘Kittson’ Processor, the Last of the Itaniums

by Anton Shilov on January 31, 2019 6:30 PM EST

Intel on Thursday notified its partners and customers that it would be discontinuing its Itanium 9700-series (codenamed Kittson) processors, the last Itanium chips on the market. Under its product discontinuance plan, Intel will cease shipments of Itanium CPUs in mid-2021, or a bit over two years from now. The impact to hardware vendors should be minimal – at this point HP Enterprise is the only company still buying the chips – but it nonetheless marks the end of an era for Intel and its interesting experiment with a non-x86, VLIW-style architecture.

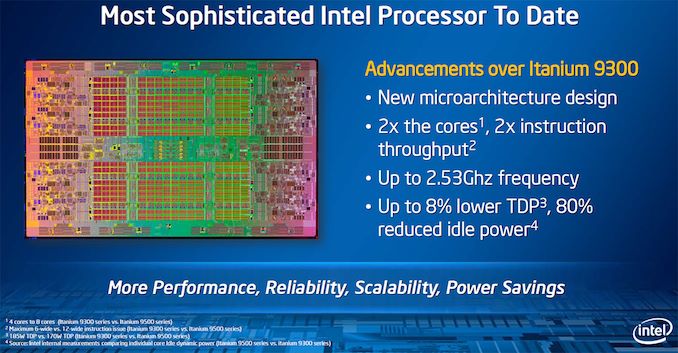

The current-generation eight- and four-core Itanium 9700-series processors were introduced by Intel in 2017, in the process becoming the final processors based on the IA-64 ISA. Kittson for its part was a clockspeed-enhanced version of the Itanium 9500-series ‘Poulson’ microarchitecture launched in 2012, and featured a 12-instruction-per-cycle issue width, 4-way Hyper-Threading, and multiple RAS capabilities not found on Xeon processors at the time. It goes without saying that the writing has been on the wall for Itanium for a while now, and Intel has been preparing for an orderly wind-down for quite some time.

At this point, the only systems that actually use Itanium 9700-series CPUs are the HPE Integrity Superdome machines, which run the HP-UX 11i v3 operating system and launched in mid-2017. Intel's sole Itanium customer will therefore have to submit its final Itanium orders – as well as orders for Intel’s C112/C114 scalable memory buffers – by January 30, 2020. Intel will then ship its last Itanium CPUs by July 29, 2021. HPE for its part will support its systems through at least December 31, 2025, but depending on how much stock HPE wants to keep on hand, it will presumably stop selling them a few years sooner than that.

With the EOL plan for the Itanium 9700-series CPUs in place, this is the end of the road for the whole Itanium project, both for HPE and Intel. The former has been offering Xeon-based NonStop and Integrity servers for years now, whereas the latter effectively ceased development of new CPUs featuring the IA-64 ISA earlier this decade. The machines running these CPUs will of course continue operating through at least late 2025 (or until HPE drops HP-UX 11i v3), simply because mission-critical systems are bought for the long haul, but Intel itself will stop shipping Itaniums in about 2.5 years.

Related Reading:

- Intel to Discontinue Itanium 9500 ‘Poulson’ CPUs

- Intel’s Itanium Takes One Last Breath: Itanium 9700 Series CPUs Released

- Intel to Release New Itanium CPUs in 2012: New Architecture and Up to Eight Cores

Source: Intel

63 Comments

SarahKerrigan - Friday, February 1, 2019

Eh, not really. I spent a lot of years working at a very intimate level with IPF, and while there were a lot of things I liked, they were almost all uarch, not ISA.

The good:

+Ridiculously fast caches. Single-cycle L1, large and fast L2 and L3.

+Four load-store units. Meant that you could keep a LOT of memory throughput going. Doesn't apply to Poulson or Merced though.

+Two large, fast FMA units. Paired with the above, meant some linear algebra code performed very well.

+Speculative loads - software-controlled speculation that didn't entirely suck.

The bad:

-Code density was absolutely atrocious. Best case, assuming no NOP padding (i.e., favorable templates for your code stream) was 128 bits for three ops. That's also assuming you don't use the extended form (82 bits IIRC) that took up two slots in your instruction word.

-Advanced loads never worked well and had strange side effects. This is *not* software speculation done right.

-L1 miss rate was always high IME, both on I and D side. I've assumed there was a trade-off made here that resulted in the undersized 2-way L1 that was accessible in one cycle.

-Intel never seems to have felt SIMD beyond MMX equivalence was necessary. There were technical-compute apps that would have benefited from it.

-Intel never seems to have taken multithreading seriously. The switch-on-event multithreading in Montecito and up offered tiny gains IME, and at least one OEM (SGI) didn't bother supporting it at all. Even FGMT would have been a welcome improvement.

-I feel like there was a tendency in the IPF design to jump to whizz-bang features that didn't offer much in real code - RSE comes to mind.

In summary, I had a lot of fun working with Itanium. It had a million dials and switches that appealed to me as a programmer. But in-order cores have progressively looked more and more like the way forward, and IPF was never consistently good enough to disprove that.
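The code-density complaint comes down to IA-64's fixed bundle format: every 128-bit bundle holds a 5-bit template plus three 41-bit instruction slots, and any slot the compiler cannot fill with useful work still costs a NOP. The Python sketch below splits a bundle value into those fields and computes the average encoded size per useful op; the helper names (`split_bundle`, `bits_per_useful_op`) are illustrative only, and the sketch does not decode what the templates mean.

```python
# Illustrative sketch of the IA-64 bundle layout:
# bits 0-4 = template, then three 41-bit slots (5 + 3 * 41 = 128 bits).
BUNDLE_BITS = 128
TEMPLATE_BITS = 5
SLOT_BITS = 41
SLOT_MASK = (1 << SLOT_BITS) - 1


def split_bundle(bundle: int) -> tuple[int, list[int]]:
    """Return (template, [slot0, slot1, slot2]) for a 128-bit bundle value."""
    if not 0 <= bundle < (1 << BUNDLE_BITS):
        raise ValueError("bundle must fit in 128 bits")
    template = bundle & ((1 << TEMPLATE_BITS) - 1)
    # Slot i starts right after the template and the i preceding slots.
    slots = [(bundle >> (TEMPLATE_BITS + i * SLOT_BITS)) & SLOT_MASK for i in range(3)]
    return template, slots


def bits_per_useful_op(bundles: int, useful_ops: int) -> float:
    """Average encoded bits per non-NOP operation across a code stream."""
    if useful_ops <= 0:
        raise ValueError("need at least one useful op")
    return bundles * BUNDLE_BITS / useful_ops
```

For comparison, `bits_per_useful_op(1, 3)` gives the best case of about 42.7 bits per op, while a stream averaging one NOP per bundle works out to 64 bits per op, double the 32 bits of a fixed-width RISC instruction.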

mode_13h - Sunday, February 3, 2019

Only 2-way? Ouch!

Lack of FP SIMD sounds like a really bad decision. Perhaps, by the time it became feasible, the writing was already on the wall.

I assume switch-on-event is referring to events like cache-miss?

What's RSE?

Why do you say in-order cores looked like the way forward? Only in GPUs and ultra-low power.

SarahKerrigan - Sunday, February 3, 2019

I meant out-of-order was the future. Embarrassing typo. I held onto the "maybe this EPIC thing can work out!" gospel up until Power7 came out, but after that, it was pretty clear where the future was going.

There was very basic FP SIMD, but IIRC only paired single-precision ops on existing registers. I suspect that 128b SIMD would have been seen as heretical by the original IPF design group - remember that Multiflow had NO VECTORS coffee mugs! That being said, it wasn't really an outlier - the other RISC/UNIX players were all pretty late to the SIMD party. SPARC never got a world-class vector extension until Fujitsu's HPC-ACE, and while IBM had VMX in its pocket for years, Power6 was the first mainline Power core to ship it (and VMX performance was decidedly underwhelming on P6; P7 improved it greatly.)

RSE was the Register Stack Engine. The idea was that registers would automatically fill/spill to backing store across function calls in a way that was mostly transparent to the application.

Switch-on-event was indeed triggered by long-running events. IIRC the main things were either a software-invoked switch hint or an L3 miss. It took something like 15 cycles to do a complete thread switch (pipeline flush of thread 1 + time for ops from thread 2 to fill the pipeline) on Montecito/Tukwila. Per my recollection, Poulson knocked a couple cycles off SoEMT thread switch times (as well as doing some funky stuff with how it was implemented internally), but it was still several.

Yeah, 2-way L1 was pretty painful. It was offset a little bit by the fact that - especially after Montecito shipped - the L2 was fast (7 cyc L2I, 5-6 cyc L2D IIRC) and *very* large, but the hit rate for L1I and L1D was fairly embarrassing, especially on code with less-than-perfectly-regular memory access patterns.
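As a rough illustration of the RSE idea described above, here is a toy Python model of a register stack: each call allocates a frame in a fixed-size physical register file, the oldest registers spill to a memory backing store when a new frame does not fit, and a return fills the caller's frame back in. This is a simplified sketch under stated assumptions, not the actual IA-64 RSE: it ignores the overlap between a caller's output registers and the callee's input registers, NaT-bit collection, and any eager background spilling the hardware may do. `RegisterStackModel` and its methods are hypothetical names.

```python
class RegisterStackModel:
    """Toy register stack: frames live in a fixed-size physical register file.

    Hypothetical model for illustration only; not the real IA-64 RSE.
    """

    def __init__(self, physical_regs: int) -> None:
        self.capacity = physical_regs
        self.frames: list[int] = []  # sizes of active frames, oldest first
        self.resident = 0            # registers currently in the register file
        self.spilled = 0             # registers currently in the backing store
        self.spill_count = 0         # total registers written to memory
        self.fill_count = 0          # total registers read back from memory

    def call(self, frame_size: int) -> None:
        """Allocate a new frame, spilling the oldest registers if it doesn't fit."""
        if not 0 <= frame_size <= self.capacity:
            raise ValueError("frame must fit in the physical register file")
        self.frames.append(frame_size)
        self.resident += frame_size
        overflow = self.resident - self.capacity
        if overflow > 0:
            # The oldest registers go to the backing store to make room.
            self.resident -= overflow
            self.spilled += overflow
            self.spill_count += overflow

    def ret(self) -> None:
        """Free the current frame and fill the caller's frame back in if needed."""
        if not self.frames:
            raise RuntimeError("return with no active frame")
        self.resident -= self.frames.pop()
        caller = self.frames[-1] if self.frames else 0
        missing = max(0, caller - self.resident)
        # Caller registers that were spilled come back from memory.
        self.resident += missing
        self.spilled -= missing
        self.fill_count += missing
```

With `RegisterStackModel(96)` (96 being the number of architectural stacked registers, r32-r127) and five nested calls that each allocate 40 registers, the model spills 104 registers on the way down and fills the same 104 on the way back up. Call chains that stay within the register file never touch memory at all, which is the point of keeping the stack in registers.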

SarahKerrigan - Sunday, February 3, 2019

A quick look at the reference manual indicates I misremembered and the L1 was actually 4-way. Still 16+16 KB, though, and my point about hit rates being decidedly mediocre stands.

mode_13h - Sunday, February 3, 2019

How do you get a bad hit rate? Did it have a poor eviction policy? Or maybe it tried to do some fancy prefetching that burned through cache too quickly?

How big were the cachelines? Perhaps too small + no hardware prefetching could've caused a lot of misses.

SarahKerrigan - Sunday, February 3, 2019

L1I miss rate was bad due to a combination of being only 16KB and Itanium ops being enormous (as mentioned above, best-case 3 ops in 128 bits; frequently worse in real code.)

Fancy prefetching is almost entirely absent on Itanium, but there are several software-controlled solutions (cache-control ops, speculative loads [which load a NaT value instead of generating an exception if they fail], and advanced loads [loads whose addresses are tracked in a table called the ALAT, which then gets checked at the point of the original load, resulting in either keeping the value or initiating a new non-advanced load.])
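The advanced-load mechanism can be sketched as a small Python model: an advanced load records its target register and address in a table, any later store to that address invalidates the entry, and the check load either keeps the early value or re-executes the load. This is a simplified illustration, not Intel's specification: the hypothetical `ALATModel` matches exact addresses only and does not model the real ALAT's limited capacity, under which entries can also be dropped and force reloads even without an aliasing store.

```python
class ALATModel:
    """Toy Advanced Load Address Table keyed by target register (illustrative only)."""

    def __init__(self) -> None:
        self.entries: dict[str, int] = {}  # target register -> address loaded from
        self.reloads = 0                   # check loads that had to redo the load

    def load_advanced(self, memory: dict[int, int], reg: str, addr: int) -> int:
        """Advanced load: read early (e.g. hoisted above a store) and record it."""
        self.entries[reg] = addr
        return memory[addr]

    def store(self, memory: dict[int, int], addr: int, value: int) -> None:
        """A store to a tracked address invalidates the matching entries."""
        memory[addr] = value
        for reg in [r for r, a in self.entries.items() if a == addr]:
            del self.entries[reg]

    def check_load(self, memory: dict[int, int], reg: str, addr: int, early_value: int) -> int:
        """Check load: keep the early value if its entry survived, else reload."""
        if self.entries.get(reg) == addr:
            return early_value
        self.reloads += 1
        return memory[addr]
```

In the case a compiler hopes for, the load is hoisted above a store that turns out not to alias it, so the check costs almost nothing; when the store does alias, `check_load` pays for the original load again, which the `reloads` counter records.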

mode_13h - Sunday, February 3, 2019

So, did RSE keep a dirty bit for each register? I thought a cool way to solve the caller-save vs. callee-save debate would be to save the intersection (logical AND) of the registers modified by the caller vs. the registers the callee would modify. The dirty mask could then be updated by zeroing those registers that had been saved, for the sake of functions called by the callee.

mode_13h - Sunday, February 3, 2019

It was the future of computing, until the future passed it by. Now, it is the past.

The kinds of things it was really good at are easily ported to GPUs. Those are already much faster than any EPIC CPU that could've existed.

WasHopingForAnHonestReview - Saturday, February 2, 2019

But can it run Crysis at 60fps in 4K?

mode_13h - Sunday, February 3, 2019

No.