The NVIDIA GeForce RTX 2060 6GB Founders Edition Review: Not Quite Mainstream

by Nate Oh on January 7, 2019 9:00 AM EST

Ashes of the Singularity: Escalation (DX12)

A veteran from both our 2016 and 2017 game lists, Ashes of the Singularity: Escalation remains the DirectX 12 trailblazer, with developer Oxide Games tailoring and designing the Nitrous Engine around such low-level APIs. The game makes the most of DX12's key features, from asynchronous compute to multi-threaded work submission and high batch counts. And with full Vulkan support, Ashes provides a good common ground between the forward-looking APIs of today. Its built-in benchmark tool is still one of the most versatile ways of measuring in-game workloads in terms of output data, automation, and analysis; by offering such a tool publicly and as part-and-parcel of the game, it's an example that other developers should take note of.

Settings and methodology remain identical to those used in the 2016 GPU suite. To note, we are using the original Ashes Extreme graphical preset, which corresponds to the current one with MSAA dialed down from x4 to x2 and Texture Rank (MipsToRemove in settings.ini) adjusted.

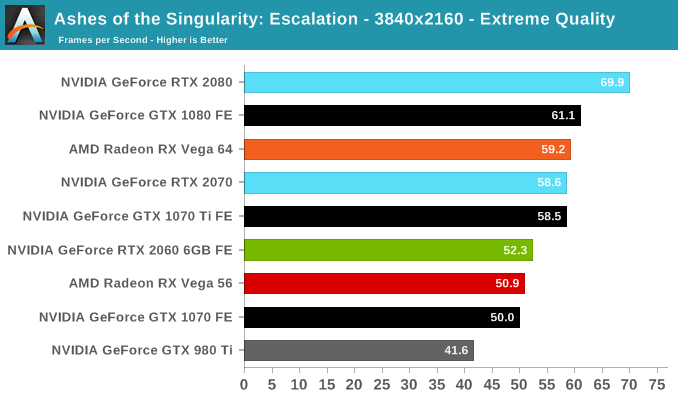

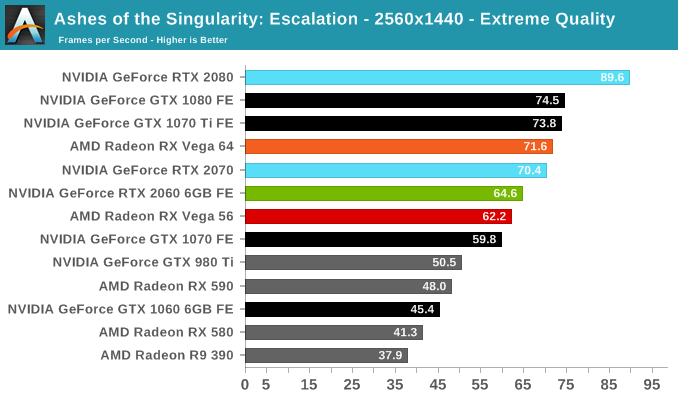

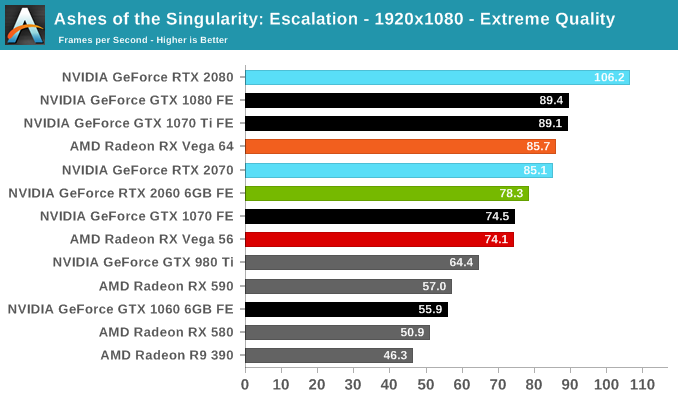

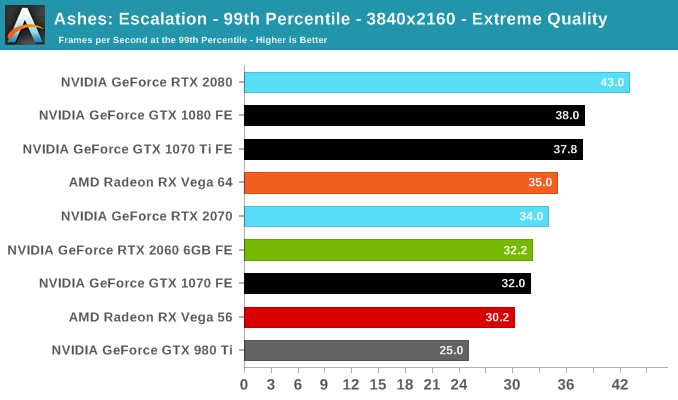

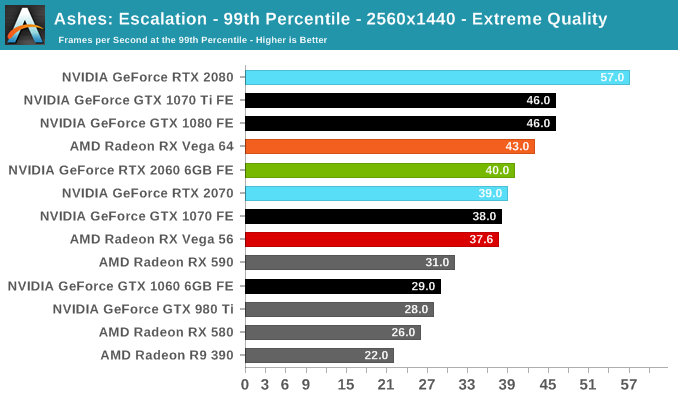

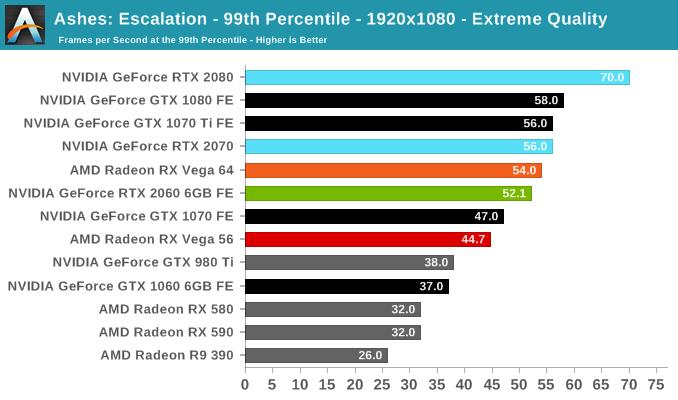

Somewhat surprisingly, the RTX 2060 (6GB) performs poorly in Ashes, landing closer to the GTX 1070 than the GTX 1070 Ti. Although it is still ahead of the RX Vega 56, it's not an ideal situation, as the lead over the GTX 1060 6GB is cut to around 40%.

134 Comments

CiccioB - Thursday, January 10, 2019 - link

I would like to remind you that when interest in 4K began, there were cards like the 980 Ti and the Fury, both unable to cope with such a resolution.

Did you ever write a single sentence arguing that 4K was a gimmick, useless to most people because it was too expensive to support?

You may know that if you want to get to a point you have to start walking towards it. If you never start, you'll never reach it.

Nvidia started before anyone else in the market. You find it a gimmick move. I find it real innovation. Does it cost too much for you? Yes, plasma panels also had four zeros in their price tags at the beginning, but at a certain point I could get one myself without going bankrupt.

AMD and Intel will come to the ray tracing table sooner than you think (for AMD, that is the generation after Navi, since Navi is already finalized without the new computing units).

saiga6360 - Thursday, January 10, 2019 - link

Here's the problem with that comparison: 4K is not simply about gaming, while ray tracing is. 4K started in the movie industry, then home video, then finally games. That is a trend the gaming industry couldn't avoid if it tried, so yes, nvidia started it, but it's not like anybody was that surprised, and many thought AMD would soon follow. Ray tracing in real time is a technical feat that not everyone will get on board with right away. I do applaud nvidia for starting it, but it's too expensive, and that's a harder barrier to entry than 4K ever was.

maroon1 - Monday, January 7, 2019 - link

Wolfenstein 2's Uber texture setting is a waste of memory. It does not look any different compared to Ultra.

http://m.hardocp.com/article/2017/11/13/wolfenstei...

Quote from this review:

"We also noticed no visual difference using "Uber" versus "Ultra" Image Streaming unfortunately. In the end, it’s probably not worth it and best just to use the "Ultra" setting for the best experience."

sing_electric - Monday, January 7, 2019 - link

I wish the GPU pricing comparison charts included a relative performance index (even if it was something like the simple arithmetic mean of all the scores in the review).

The 2060 looks like it's in a "sweet spot" for performance if you want to spend less than $500 but are willing to spend more than $200, but you can't really tell that from the chart (though if you read the whole review it's clear). Spending the extra $80 to go from a 1060/RX 580 to a RX 590 doesn't net you much performance; OTOH, going from the $280 RX 580 to the $350 2060 gets you a very significant boost in performance.

Semel - Monday, January 7, 2019 - link

"11% faster than the RX Vega 56 at 1440p/1080p, "

A two-fan card is faster than a terrible reference Vega card that underperforms due to a bad single-fan design. Shocker.

Now get a proper Vega 56 card, undervolt it and OC it. And compare it to an OCed 2060.

You are in for a surprise.

CiccioB - Monday, January 7, 2019 - link

A GPU born for computational tasks, with 480mm^2 of silicon designed for that, 8GB of expensive HBM, and consuming 120W more, is being pwned by a chip in the x60 class sold for the same price. (And despite not all of its silicon being used or benchmarked in today's games, the latter still performs better. Let's see what happens when the RTX compute units and tensor cores are used for other tasks like ray tracing, but also DLSS, AI and any other kind of effects. And do not forget about mesh shading.)

I wonder how low the price of that crap should go before someone considers it a good deal.

The Vega chip failed miserably at its aim of providing any competition to Pascal in the gaming, prosumer and professional markets. Now, with this new cut-down Turing chip, Vega completely loses any reason to even be produced. Each piece sold is a drain on AMD's cash coffers, and it will be EOL sooner rather than later.

The problem for AMD is that until Navi they will have nothing to go against Turing (the 590 launch is a joke; you can't really think a company that is serious in this market would do that, can you?) and will constantly lose money in the graphics division. And if Navi is not launched soon enough, they will lose a lot of money the more GPUs they (under)sell. If it is launched too early, they will lose money for using a not-yet-mature production process with lower yields (and boosting the voltage isn't really going to produce a #poorvolta(ge) device even at 7nm). These are the problems of being an underdog that needs the latest expensive technology to create something that can vaguely be considered decent with respect to the competition.

Let's hope Navi is not a flop like Polaris, or the generation after Turing will cost even more, after prices have already gone up with Kepler, Maxwell and Pascal.

Great job this GCN architecture! Great job Koduri!

nevcairiel - Monday, January 7, 2019 - link

Comparing two bog-standard reference cards is perfectly valid. If AMD wanted to shine there, they should've done a better job.

Retycint - Tuesday, January 8, 2019 - link

Exactly. AMD shouldn't have pushed the Vega series so far past the performance/voltage sweet spot in the first place.

sing_electric - Tuesday, January 8, 2019 - link

I mean, at that point, then, why bother releasing it? If you look at perf/watt, it's not really much of an improvement over Polaris.

D. Lister - Monday, January 14, 2019 - link

@Semel: "...get a proper Vega 56 card, undervolt it..."

Why is AMD so bad at setting the voltage in their GPUs? How good can their products be if they can't even properly do something that the average Joe weekend overclocker can figure out?

The answer to the first question is: "They aren't." AMD sets those voltages because they know it is necessary to keep the GPU stable under load. So, when you think yourself more clever than a multibillion-dollar tech giant and undervolt a Radeon, you make it less reliable outside of scripted benchmark runs.