The NVIDIA GeForce RTX 2060 6GB Founders Edition Review: Not Quite Mainstream

by Nate Oh on January 7, 2019 9:00 AM EST

Wolfenstein II: The New Colossus (Vulkan)

id Software is popularly known for a few games involving shooting stuff until it dies, just with different 'stuff' for each one: Nazis, demons, or other players while scorning the laws of physics. Wolfenstein II is the latest of the first, the sequel of a modern reboot series developed by MachineGames and built on id Tech 6. While the tone is significantly less pulpy nowadays, the game is still a frenetic FPS at heart, succeeding DOOM as a modern Vulkan flagship title and arriving as a pure Vulkan implementation, unlike DOOM, which originally shipped on OpenGL.

Featuring a Nazi-occupied America of 1961, Wolfenstein II is lushly designed yet not oppressively intensive on the hardware, something that goes well with its pace of action, which emerges suddenly from a level design flush with alternate historical details.

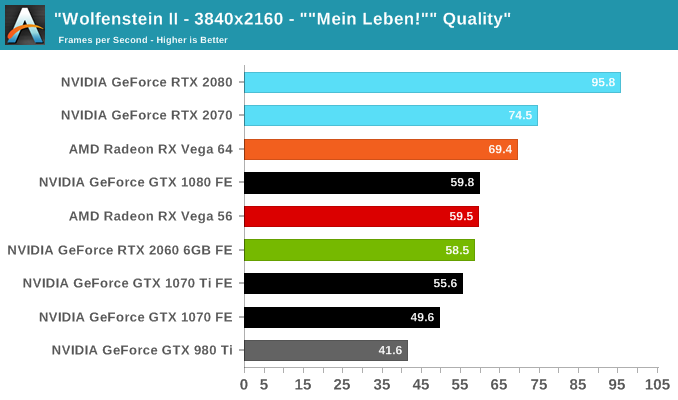

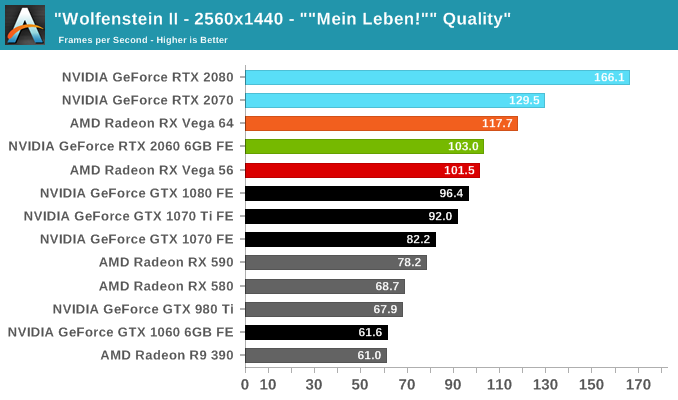

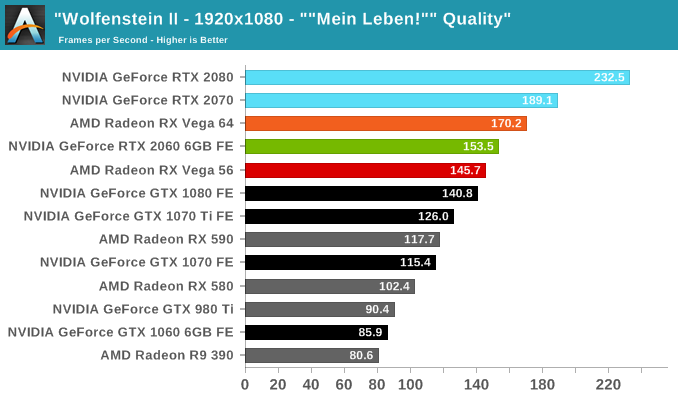

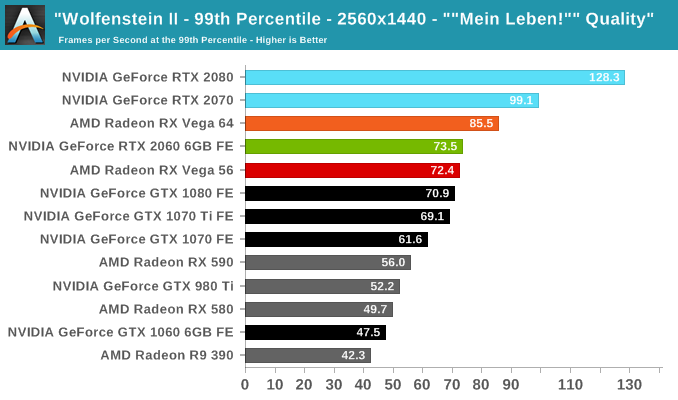

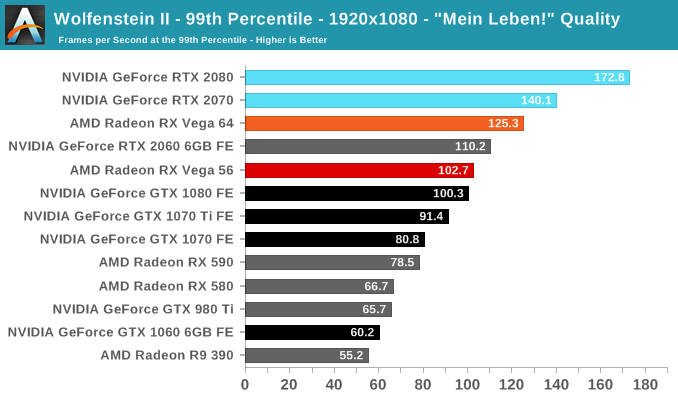

The highest quality preset, "Mein leben!", was used. Wolfenstein II also features Vega-centric GPU Culling and Rapid Packed Math, as well as Radeon-centric Deferred Rendering; in accordance with the preset, neither GPU Culling nor Deferred Rendering was enabled. NVIDIA Adaptive Shading was not enabled.

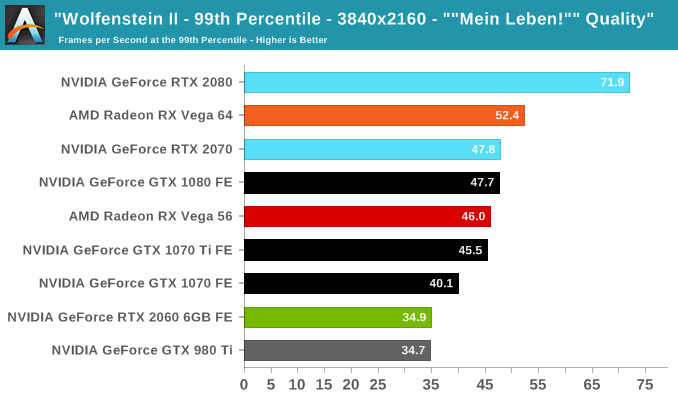

In summary, Wolfenstein II tends to scale well, enables high framerates with minimal CPU bottleneck, enjoys running on modern GPU architectures, and consumes VRAM like nothing else. For the Turing-based RTX 2060 (6GB), this results in outpacing the GTX 1080 as well as the RX Vega 56 at 1080p/1440p. The 4K results can be deceiving; looking closer at 99th percentile framerates shows a much steeper dropoff, most likely related to the limitations of the 6GB framebuffer. We've already seen the GTX 980 and 970 struggle at even 1080p, constrained by 4GB of video memory.
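To illustrate why an average can hide the kind of dropoff described above, here is a minimal sketch of how an average framerate and a 99th percentile framerate might be derived from a list of per-frame render times. The function names, the nearest-rank percentile method, and the sample data are all assumptions made for illustration; they are not taken from this review's actual test methodology or results.

```python
import math


def average_fps(frame_times_ms):
    """Mean framerate: number of frames divided by total elapsed seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_99_fps(frame_times_ms):
    """Framerate equivalent of the 99th percentile frame time.

    Uses the nearest-rank method (an assumption): sort frame times
    ascending and pick the value at 1-based rank ceil(0.99 * n).
    """
    ordered = sorted(frame_times_ms)
    rank = math.ceil(99 * len(ordered) / 100)  # 1-based nearest rank
    return 1000.0 / ordered[rank - 1]


# Hypothetical capture, not measured data: 98 smooth frames at 10 ms
# plus two 40 ms stutters, such as might occur when memory runs short.
sample = [10.0] * 98 + [40.0] * 2

print(f"average: {average_fps(sample):.2f} fps")
print(f"99th percentile: {percentile_99_fps(sample):.2f} fps")
```

In this made-up capture the two slow frames barely move the average, yet they set the 99th percentile figure, which is why the two numbers can tell different stories at 4K.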

134 Comments


B3an - Monday, January 7, 2019 - link

More overpriced useless shit. These reviews are very rarely harsh enough on this kind of crap either, and I mean tech media in general. This shit isn't close to being acceptable.

PeachNCream - Monday, January 7, 2019 - link

Professionalism doesn't demand harshness. The charts and the pricing are reliable facts that speak for themselves and let a reader reach conclusions about the value proposition or the acceptability of the product as worthy of purchase. Since opinions between readers can differ significantly, it's better to exercise restraint. These GPUs are given out as media samples for free and, if I'm not mistaken, other journalists have been denied pre-NDA-lift samples for blasting the company or the product. With GPU shortages all around and the need to have a day one release in order to get search engine placement that drives traffic, there is incentive to tiptoe around criticism when possible.

CiccioB - Monday, January 7, 2019 - link

It all depends on what your definition of "shit" is. Shit may be something that for you costs too much (so shit is Porsche, Lamborghini and Ferrari, but for someone else also Audi, BMW and Mercedes, and for someone else again also all C-segment cars), or it may be something that does not work as expected or underperforms with respect to the resources it has.

So for someone else, shit may be a chip that with 230mm^2, 256GB/s of bandwidth and 240W performs like a chip that is 200mm^2, has 192GB/s of bandwidth and uses half the power.

Or it may be a chip that with 480mm^2, 8GB of the latest HBM technology and more than 250W performs just a bit better than a 314mm^2 chip with GDDR5X that uses 120W less.

To each their own definition of "shit" and of what should be bought to incentivize real technological progress.

saiga6360 - Tuesday, January 8, 2019 - link

It's shit when your Porsche slows down when you turn on its fancy new features.

Retycint - Tuesday, January 8, 2019 - link

The new feature doesn't subtract from its normal functions though - there is still an appreciable performance increase despite the focus on RTX and whatnot. Plus, you can simply turn RTX off and use it like a normal GPU? I don't see the issue here.

saiga6360 - Tuesday, January 8, 2019 - link

If you feel compelled to turn off the feature, then perhaps it is better to buy the alternative without it at a lower price. It comes down to how much the eye candy is worth to you at performance levels that you can get from a sub $200 card.

CiccioB - Tuesday, January 8, 2019 - link

It's shit when these fancy new features are held back by the console market, which has difficulty handling less than half the polygons that Pascal can, let alone the new Turing GPUs. The problem is not the technology that is made available, but the market that is held back by obsolete "standards".

saiga6360 - Tuesday, January 8, 2019 - link

You mean held back by economics? If Nvidia feels compelled to sell ray tracing in its infancy for thousands of dollars, what do you expect of console makers who are selling the hardware at a loss? Consoles sell games, and if the games are compelling without the massive polygons and ray tracing then the hardware limitations can be justified. Besides, this can hardly be said of modern consoles that can push some form of 4K gaming at 30fps in AAA games not even being sold on PC. Ray tracing is nice to look at but it hardly justifies the performance penalties at the price point.

CiccioB - Wednesday, January 9, 2019 - link

The same may be said for 4K: fancy to see, but 4x the performance vs FullHD is too much. But as you can see, there are more and more people looking for 4K benchmarks to decide which card to buy.

I would trade better graphics vs resolution any day.

Raytraced films on Blu-ray (so in FullHD) are much better than any rasterized graphics at 4K.

The path for graphics quality has been traced. Bear with it.

saiga6360 - Wednesday, January 9, 2019 - link

4K vs ray tracing seems like an obvious choice to you but people vote with their money and right now, 4K is far less cost prohibitive for the eye-candy choice you can get. One company doing it alone will not solve this, especially at such cost vs performance. We got to 4K and adaptive sync because it is an affordable solution, it wasn't always but we are here now and ray tracing is still just a fancy gimmick too expensive for most. Like it or not, it will take AMD and Intel to get on board for ray tracing on hardware across platforms, but before that, a game that truly shows the benefits of ray tracing. Preferably one that doesn't suck.