The NVIDIA GeForce RTX 2060 6GB Founders Edition Review: Not Quite Mainstream

by Nate Oh on January 7, 2019 9:00 AM EST

Ashes of the Singularity: Escalation (DX12)

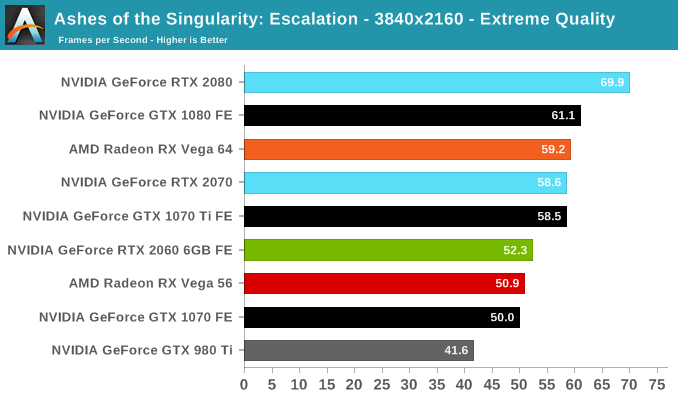

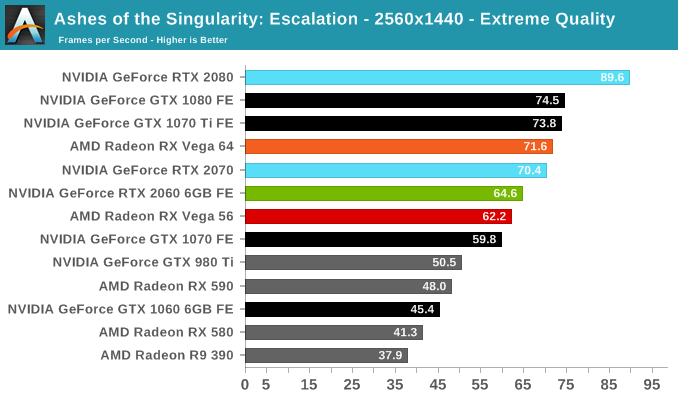

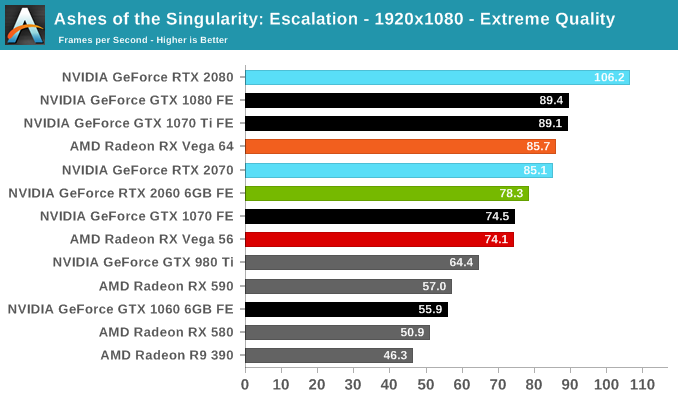

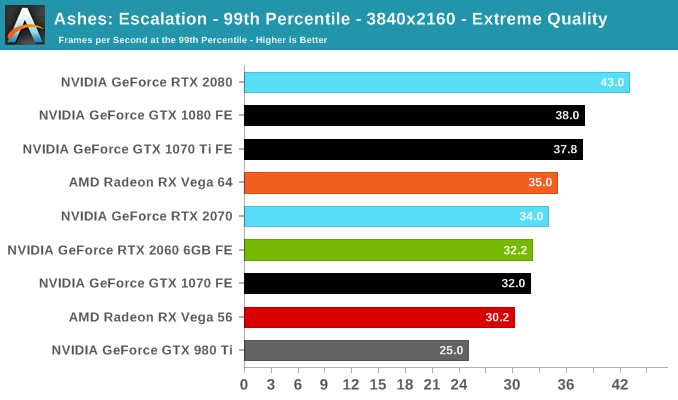

A veteran from both our 2016 and 2017 game lists, Ashes of the Singularity: Escalation remains the DirectX 12 trailblazer, with developer Oxide Games tailoring and designing the Nitrous Engine around such low-level APIs. The game makes the most of DX12's key features, from asynchronous compute to multi-threaded work submission and high batch counts. And with full Vulkan support, Ashes provides a good common ground between the forward-looking APIs of today. Its built-in benchmark tool is still one of the most versatile ways of measuring in-game workloads in terms of output data, automation, and analysis; by offering such a tool publicly and as part-and-parcel of the game, it's an example that other developers should take note of.

Settings and methodology remain identical to those used in the 2016 GPU suite. To note, we are utilizing the original Ashes Extreme graphical preset, which differs from the current one in that MSAA is dialed down from x4 to x2 and Texture Rank (MipsToRemove in settings.ini) is adjusted.

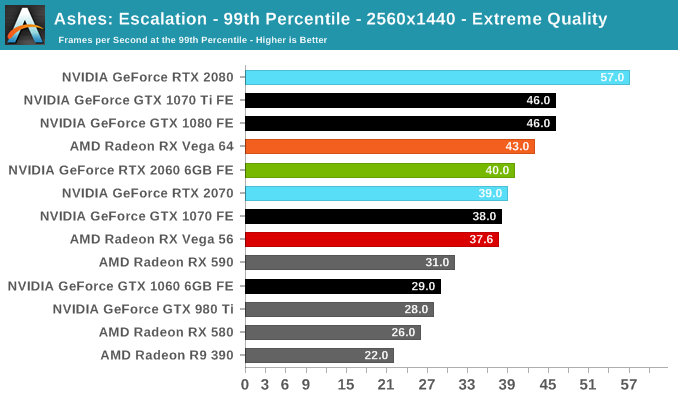

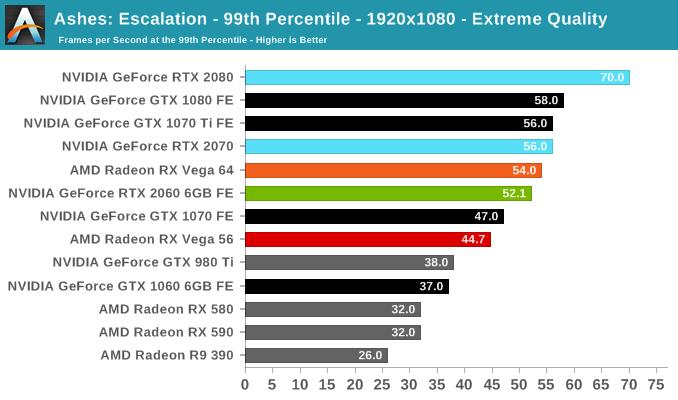

Somewhat surprisingly, the RTX 2060 (6GB) performs poorly in Ashes, landing closer to the GTX 1070 than the GTX 1070 Ti. Although it is still ahead of the RX Vega 56, this is not an ideal showing, as its lead over the GTX 1060 6GB is cut to around 40%.

134 Comments


just4U - Wednesday, January 23, 2019 - link

wait late to this and likely no one will read it but shoot you never know. I have Vega cards. I undervolt and overclock. They work great.

sing_electric - Monday, January 7, 2019 - link

Here's the thing, though, right now, there ISN'T a card on the market that offers anything like that level of performance for that price, if you can actually buy one for close to MSRP. The RX 590 is almost embarrassing in this test; a recently-launched card (though based on older tech) for $60 less than the 2060 but offering nowhere near the performance. The way I read the chart on performance/prices, there's good value at ~$200 (for a 580 card), then no good values up till you get to the $350 2060 (assuming it's available for close to MSRP). If AMD can offer the Vega 56 for say, $300 or less, it becomes a good value, but today, the best price I can find on one is $370, and that's just not worth it.

jrs77 - Monday, January 7, 2019 - link

I don't say, that the 2060 isn't good value, but it simply is priced way too high to be a midrange card, which the xx60-series is supposed to be.

Midrange = $1000 gaming-rig and that only leaves some $200-250 for the GPU. And as I wrote, even the 1060 was out of that pricerange for most of the last two years.

sing_electric - Monday, January 7, 2019 - link

I totally get your point - but to some extent, it's semantics. I'd never drop the ~$700 that it costs to get a 2080 today, but given that that card exists and is sold to consumers as a gaming card, it is now the benchmark for "high end." The RTX 2060 is half that price, so I guess is "mid range," even if $350 is more than I'd spend on a GPU.

We've seen the same thing with phones - $700 used to be 'premium' but now the premium is more like $1k.

The one upside of all this is that the prices mean that there's likely to be a lot of cards like the 1060/1070/RX 580 in gaming rigs for the next few years, and so game developers will likely bear that in mind when developing titles. (On the other hand, I'm hoping maybe AMD or Intel will release something that hits a much better $/perf ratio in the next 2 years, finally putting pricing pressure on Nvidia at the mid/high end which just doesn't exist at the moment.)

Bluescreendeath - Monday, January 7, 2019 - link

It could be possible that the GTX2060 is not midranged but lower high range card. Most XX60 cards in the past were midranged, but they were not all midranged. Though most past XX60 cards have been midranged and cost around $200-$300, if you go to the GTX200 series, the GTX260's MSRP was $400 and was more of an upper ranged card. The Founder's Edition of the 1060 also launched at $300.

dave_the_nerd - Monday, January 7, 2019 - link

Weeeeeeeeeelllll.... before all the mining happened, the 970 was a pretty popular card at $300-$325. (At one point iirc it was the single most popular discrete GPU on Steam's hardware survey.)

Vayra - Wednesday, January 9, 2019 - link

Yeah, I think 350 is just about the maximum Nvidia can charge for midrange. The 970 had the bonus of offering 780ti levels of performance very shortly after that card launched. Today, we're looking at almost 3 years for such a jump (1080 > 2060).

StrangerGuy - Wednesday, January 9, 2019 - link

I paid an inflated $450 for my launch 1070 2.5 years ago, and this 2060 is barely faster at $100 less. Godawful value proposition especially when release dates are taken into consideration.

ScottSoapbox - Monday, January 7, 2019 - link

I wonder if custom 2060 cards will add 2GB more VRAM and how much that addition will cost.

A5 - Monday, January 7, 2019 - link

It's been a *long* time since I've seen a board vendor offer a board with more VRAM than spec'd by the GPU maker. I would be surprised if anyone did it...easier to point people at the 2070.