The Intel Xeon W-3175X Review: 28 Unlocked Cores, $2999

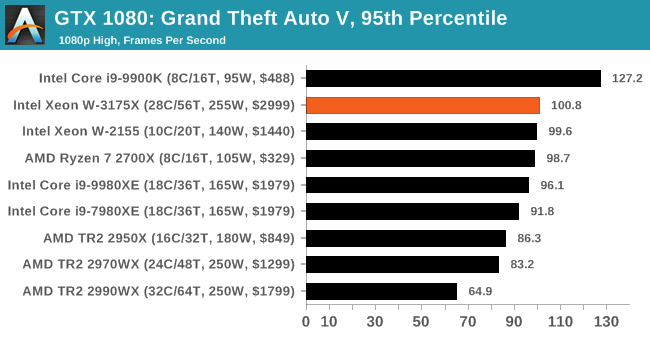

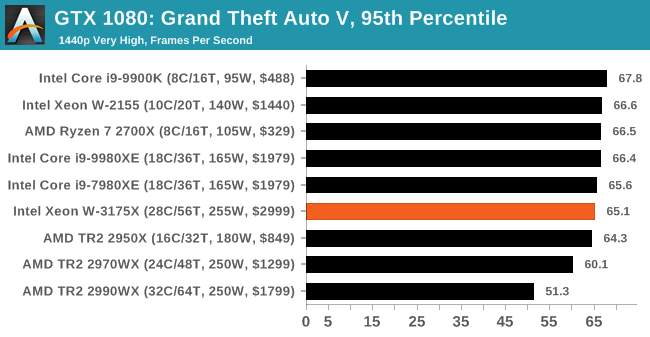

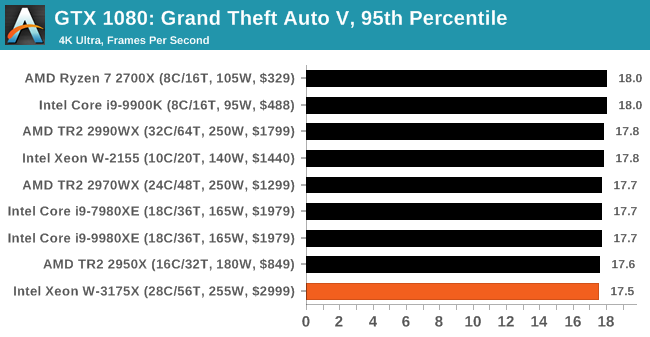

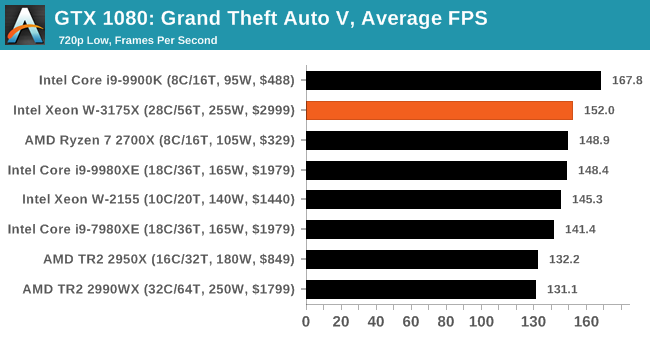

by Ian Cutress on January 30, 2019 9:00 AM EST

Gaming: Grand Theft Auto V

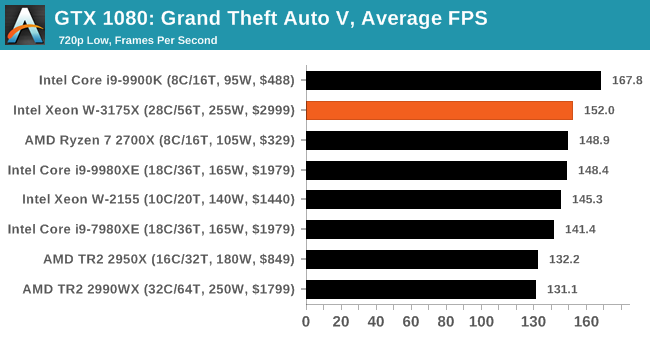

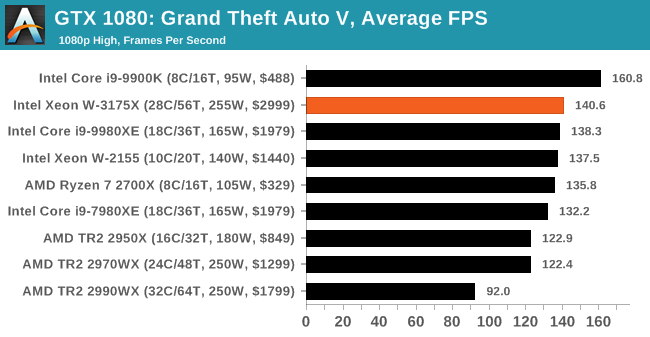

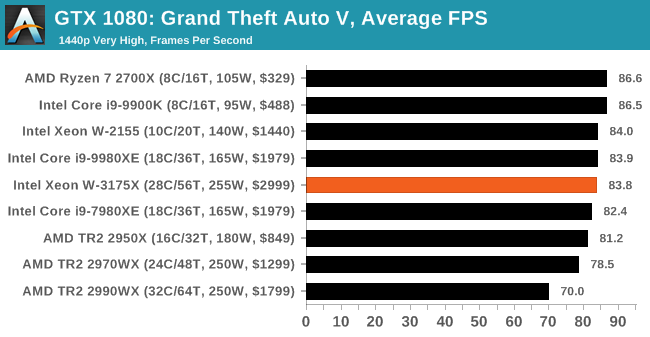

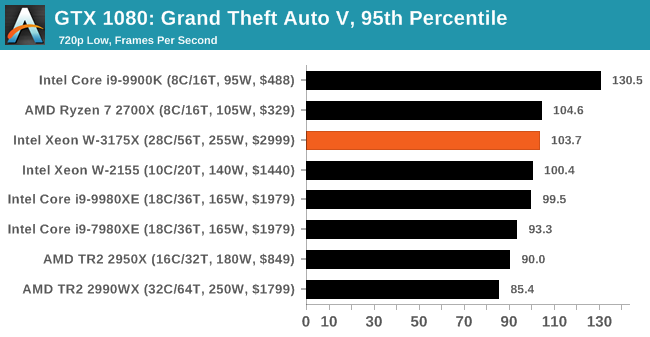

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets. Instead, it opens up the options to users and extends the boundaries by pushing even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, the game at maximum settings creates stunning visuals but also heavy work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner-city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully outputs frame time data.
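The exact procedure for turning that frame time log into the Average FPS and 95th Percentile figures is not detailed here. As a minimal sketch, assuming the log is a plain list of per-frame render times in milliseconds, the two metrics could be computed as follows: average FPS as total frames divided by total elapsed time, and the 95th percentile as the frame rate equivalent of the 95th-percentile frame time, using the nearest-rank method. The function names and sample values are hypothetical illustrations, not AnandTech's actual tooling or measured data.

```python
import math
from typing import Sequence


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Average frame rate: frames rendered divided by total elapsed time.

    This is not the mean of per-frame FPS values, which would weight every
    frame equally and so overstate the rate when fast and slow frames mix.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms: Sequence[float], pct: float = 95.0) -> float:
    """Frame rate equivalent of the pct-th percentile frame time (nearest rank).

    If the 95th percentile frame time is 25 ms, 95% of frames took 25 ms or
    less, so the reported figure is 1000 / 25 = 40 FPS.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ordered = sorted(frame_times_ms)
    # Nearest-rank method: the smallest sample with at least pct% of all
    # samples at or below it (rank is 1-based).
    rank = max(1, math.ceil(pct * len(ordered) / 100.0))
    return 1000.0 / ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical frame times in milliseconds (not measured GTA V data):
    # mostly 16 ms frames with two slower spikes.
    times = [16.0] * 18 + [25.0, 40.0]
    print(f"Average FPS:     {average_fps(times):.1f}")
    print(f"95th percentile: {percentile_fps(times):.1f}")
```

With the sample data this prints an average of 56.7 FPS and a 95th percentile of 40.0 FPS. Expressing the percentile as a frame rate keeps it on the same scale as the average, so a 95th percentile figure well below the average points to stutter from occasional slow frames.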

AnandTech CPU Gaming 2019 Game List

| Game | Genre | Release Date | API | IGP | Low | Med | High |
|---|---|---|---|---|---|---|---|
| Grand Theft Auto V | Open World | Apr 2015 | DX11 | 720p Low | 1080p High | 1440p Very High | 4K Ultra |

There are no presets for the graphics options in GTA. Some options, such as population density and distance scaling, are adjusted on sliders, while others, such as texture/shadow/shader/water quality, range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance. There is a handy meter at the top which shows how much video memory the chosen options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication of trouble if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

All of our benchmark results can also be found in our benchmark engine, Bench.

| GTA V | IGP | Low | Medium | High |
|---|---|---|---|---|
| Average FPS | | | | |
| 95th Percentile | | | | |

136 Comments


johngardner58 - Monday, February 24, 2020

Again, it depends on the need. If you need speed, there is no alternative. You can't get it by just running blades, because not everything can be broken apart into independent parallel processes. Our company once ran an analysis that took a very long time. When time is money, this is the only thing that will fill the bill for certain workloads. Having shared high-speed resources (memory and cache) makes the difference. That is why 255 Raspberry Pis clustered together will not outperform most home desktops unless they are running highly independent parallel processes. Actually, the MIPS per watt on such a processor is probably lower than having individual processors because of the combined inefficiencies of duplicate support circuitry.

SanX - Friday, February 1, 2019

Every second home has a few 1500W space heaters running in winter time.

johngardner58 - Monday, February 24, 2020

Server side: it depends on the workload. Usually, yes, a bladed or multiprocessor setup is better for massively parallel (independent) tasks, but cores can talk to each other much, much, much faster than blades can, as they share caches and memory. So for less parallel workloads (single process, multiple threads: e.g. rendering, numerics and analytics) this can provide far more performance and reduced costs. Probably the best example of the need for core count is GPU-based processing. Intel also had specialized high-core-count Xeon-based accelerator cards with 96 cores at one point. There is a need, even if limited.

Samus - Thursday, January 31, 2019

The problem is that in the vast majority of applications, an $1800 CPU from AMD running on a $300 motherboard (that's an overall platform savings of $2400!) either matches or beats the Intel Xeon. You have to cherry-pick the benchmarks Intel leads in, and yes, it leads by a healthy margin, but they basically come down to 7-zip, random rendering tasks, and Corona. Disaster strikes when you consider there is ZERO headroom for overclocking the Intel Xeon, whereas the AMD Threadripper has some headroom to probably narrow the gap on these few and far between defeats.

I love Intel but wow what the hell has been going on over there lately...

Jimbo2K7 - Wednesday, January 30, 2019

Baby's on fire? Better throw her in the water! Love the Eno reference!

repoman27 - Wednesday, January 30, 2019

Nah, I figure Ian for more of a Die Antwoord fan. Intel’s gone zef style to compete with AMD’s Zen style.

Ian Cutress - Wednesday, January 30, 2019

^ repoman gets it. I actually listen mostly to melodic/death metal and industrial. Something fast-paced to help overclock my brain.

WasHopingForAnHonestReview - Wednesday, January 30, 2019

My man

IGTrading - Wednesday, January 30, 2019

Was testing done with mitigation for the specific Windows bug that affects AMD's CPUs with more than 16 cores? Or was it done with no attempt to ensure normal processing conditions for Threadripper, despite the known bug?

eva02langley - Thursday, January 31, 2019

Insomnium, Kalmah, Hypocrisy, Dark Tranquillity, Ne Obliviscaris... By the way, Saor and Rotting Christ are releasing their albums in two weeks.

You might want to check out Carpenter Brut - Leather Teeth and Rivers of Nihil - Where Owls Know My Name.